OpenAI has reached an agreement with the Department of War to deploy advanced artificial intelligence systems in classified environments, a move the company says will set new standards for responsible AI use in national security. Unlike some other frontier labs, OpenAI says it is prioritizing safeguards through a multi-layered approach, retaining full discretion over its safety stack and maintaining human oversight of deployments. The agreement centers on three key “red lines”: no use of OpenAI technology for mass domestic surveillance, for directing autonomous weapons systems, or for high-stakes automated decisions such as “social credit” systems. “We think our agreement has more guardrails than any previous agreement for classified AI deployments, including Anthropic’s,” the company stated, framing the deal as a way to protect democratic values while equipping the U.S. military with essential tools.

Cloud Deployment with Safety and Verification Mechanisms

A new contract with the Pentagon places stringent safety measures on the use of artificial intelligence, measures that OpenAI says exceed the safeguards of previous classified deployments. Company officials said yesterday that an agreement had been reached to deploy advanced AI systems within secure government environments, and that OpenAI has asked for the same terms to be made available to other AI companies. The company presents this as a departure from earlier arrangements, notably Anthropic’s, because it incorporates a more robust framework of protective measures. According to the company, its approach rests on three fundamental “red lines” that govern its work with the Department of War, principles it says are generally shared by several other frontier labs. The deployment is cloud-only, intentionally avoiding both direct model access and edge device deployment to limit potential misuse.

Company representatives emphasize that they are not providing the Department of War with models whose guardrails have been removed or that lack safety training, and that they are not deploying models on edge devices, a decision made to rule out the use of these systems in autonomous lethal weapons. “We are not providing the DoW with ‘guardrails off’ or non-safety trained models, nor are we deploying our models on edge devices (where there could be a possibility of usage for autonomous lethal weapons),” the company stated. The company intends to use its deployment architecture to independently verify that its red lines are respected throughout the systems’ operational lifecycle.

This verification process will include running and updating classifiers that assess system behavior and compliance on an ongoing basis. “Our deployment architecture will enable us to independently verify that these red lines are not crossed, including running and updating classifiers,” explained a company spokesperson. Cleared, forward-deployed OpenAI engineers will assist the government, with cleared safety and alignment researchers in the loop.

AI System Use Guidelines & Limitations for the Department of War

The new agreement between OpenAI and the Department of War sets stringent guardrails on how artificial intelligence systems are integrated into classified defense operations. The arrangement, reached yesterday, surpasses previous agreements, including Anthropic’s, in its safety protocols and its limits on autonomous functionality, according to the company. Central to the framework is a commitment to maintaining human oversight in critical decision-making, a response to concerns about the ethical and practical implications of increasingly autonomous systems. The contract explicitly outlines permissible uses of the AI System, stating that the Department of War “may use the AI System for all lawful purposes, consistent with applicable law, operational requirements, and well-established safety and oversight protocols.” However, the agreement prohibits using the AI System to independently direct autonomous weapons systems where law, regulation, or departmental policy requires human control.

Furthermore, the AI will not be authorized to make high-stakes decisions without prior approval from a human decisionmaker operating under the same authorities. The system is deployed via cloud, with cleared OpenAI personnel in the loop, enabling independent verification that these red lines are not crossed. Any implementation of AI within autonomous or semi-autonomous systems will be subject to “rigorous verification, validation, and testing” as stipulated by DoD Directive 3000.09, dated January 25, 2023, to guarantee performance in real-world scenarios before operational deployment. Looking ahead, the handling of sensitive data by these AI systems is also receiving heightened scrutiny, particularly regarding privacy protections for U.S. persons.

The contract stipulates that all intelligence activities using the AI System must comply with a comprehensive legal framework, including the Fourth Amendment, the National Security Act of 1947, the Foreign Intelligence Surveillance Act of 1978, Executive Order 12333, and relevant DoD directives, all of which require a clearly defined foreign intelligence purpose. “The AI System shall not be used for unconstrained monitoring of U.S. persons’ private information as consistent with these authorities,” the contract states, reinforcing a commitment to civil liberties. Additionally, use of the system for domestic law enforcement is restricted to instances permitted under the Posse Comitatus Act and other applicable law, drawing a deliberate boundary between national security applications and domestic policing.

We have three main red lines that guide our work with the DoW, which are generally shared by several other frontier labs: No use of OpenAI technology for mass domestic surveillance. No use of OpenAI technology to direct autonomous weapons systems. No use of OpenAI technology for high-stakes automated decisions (e.g. systems such as “social credit”).

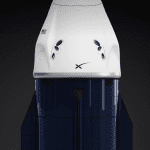

OpenAI

AI Expert Involvement

Following an agreement reached with the Pentagon, OpenAI is positioning itself as a proponent of responsible AI integration within the Department of War, a move that diverges from previous arrangements, including those undertaken by Anthropic. The company is actively deploying cleared OpenAI engineers, alongside safety and alignment researchers, to work directly with government systems, a level of oversight they believe is crucial for maintaining ethical boundaries. OpenAI’s decision to enter into a classified deployment contract stemmed from a belief that the U.S. military requires “strong AI models to support their mission especially in the face of growing threats from potential adversaries who are increasingly integrating AI technologies into their systems,” according to company statements.

Initially hesitant due to concerns about existing safeguards, OpenAI proceeded only after “working hard to ensure that a classified deployment can happen with safeguards to ensure that red lines are not crossed.” A core tenet of this approach is a refusal to compromise on technical safety measures: the company says it is “unwilling to remove key technical safeguards to enhance performance on national security work.” This stance, it suggests, is not about hindering national security but about supporting the military through what it regards as “the correct approach.” OpenAI is also working to foster broader collaboration between the government and AI labs, having specifically requested that the terms of its agreement be extended to other companies, including Anthropic. The company hopes the government will “try to resolve things with Anthropic,” viewing the current situation as detrimental to future partnerships.

OpenAI believes their contract offers superior guarantees and safeguards compared to earlier agreements, citing a cloud-only deployment architecture, the preservation of their safety stack, and the continuous involvement of cleared personnel as key differentiators. “We think our red lines are more enforceable here because deployment is limited to cloud-only (not at the edge), keeps our safety stack working in the way we think is best, and keeps cleared OpenAI personnel in the loop,” the company explained.

Furthermore, OpenAI has publicly stated its opposition to designating Anthropic as a “supply chain risk.” Addressing concerns about potential misuse, OpenAI points to its safeguards against both autonomous weapons and mass surveillance, asserting that “based on our safety stack, our cloud-only deployment, the contract language, and existing laws, regulation and policy, we are confident that this cannot happen.” The company also emphasizes that it keeps full control over its safety stack and will not deploy models without established guardrails, in contrast to other labs that have reportedly “reduced model guardrails and relied on usage policies as the primary safeguard.” Should the Department of War violate the contract’s terms, OpenAI retains the right to terminate the agreement, though the company says it considers such a scenario unlikely. The contract also explicitly references existing surveillance and weapons laws, ensuring continued alignment even if those laws and policies change in the future.

We were—and remain—unwilling to remove key technical safeguards to enhance performance on national security work. That is not the correct approach to supporting the US military.