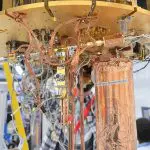

NVIDIA has announced its next-generation AI supercomputer, the NVIDIA DGX SuperPOD, powered by NVIDIA GB200 Grace Blackwell Superchips. The supercomputer is designed to process trillion-parameter models for generative AI training and inference workloads. It features a new, highly efficient, liquid-cooled rack-scale architecture and provides 11.5 exaflops of AI supercomputing. The DGX SuperPOD can scale to tens of thousands of GB200 Superchips connected via NVIDIA Quantum InfiniBand. Jensen Huang, founder and CEO of NVIDIA, stated that the new DGX SuperPOD combines the latest advancements in NVIDIA accelerated computing, networking, and software.

NVIDIA’s New AI Supercomputer: DGX SuperPOD

NVIDIA has announced the launch of its next-generation AI supercomputer, the DGX SuperPOD. This new system is powered by NVIDIA GB200 Grace Blackwell Superchips and is designed to process trillion-parameter models for generative AI training and inference workloads. The DGX SuperPOD features a new, highly efficient, liquid-cooled rack-scale architecture and is built with NVIDIA DGX GB200 systems. It provides 11.5 exaflops of AI supercomputing at FP4 precision and 240 terabytes of fast memory, and it can scale further with additional racks.

Each DGX GB200 system within the SuperPOD is equipped with 36 NVIDIA GB200 Superchips. Together these comprise 36 NVIDIA Grace CPUs and 72 NVIDIA Blackwell GPUs, all connected as one supercomputer via fifth-generation NVIDIA NVLink. The GB200 Superchips deliver up to a 30x performance increase compared to the NVIDIA H100 Tensor Core GPU for large language model inference workloads.

Grace Blackwell-Powered DGX SuperPOD: Scalability and Connectivity

The Grace Blackwell-powered DGX SuperPOD can feature eight or more DGX GB200 systems and can scale to tens of thousands of GB200 Superchips connected via NVIDIA Quantum InfiniBand. For a massive shared memory space to power next-generation AI models, customers can deploy a configuration that connects the 576 Blackwell GPUs in eight DGX GB200 systems via NVLink.
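The 576-GPU figure follows directly from the per-system counts quoted earlier. A minimal Python sketch of that arithmetic, using only numbers from this article, is shown here. The function and variable names are illustrative, and the per-GPU FP4 estimate assumes that the 11.5-exaflop headline figure refers to the eight-system configuration, which the article does not state explicitly.

```python
# Figures quoted in the article for one DGX GB200 system.
SUPERCHIPS_PER_SYSTEM = 36
GRACE_CPUS_PER_SYSTEM = 36
BLACKWELL_GPUS_PER_SYSTEM = 72


def superpod_totals(num_systems: int) -> dict:
    """Return component totals for a SuperPOD of `num_systems` DGX GB200 systems."""
    return {
        "superchips": num_systems * SUPERCHIPS_PER_SYSTEM,
        "cpus": num_systems * GRACE_CPUS_PER_SYSTEM,
        "gpus": num_systems * BLACKWELL_GPUS_PER_SYSTEM,
    }


totals = superpod_totals(8)
print(totals)  # {'superchips': 288, 'cpus': 288, 'gpus': 576}

# The per-system counts imply two Blackwell GPUs per GB200 Superchip.
gpus_per_superchip = BLACKWELL_GPUS_PER_SYSTEM // SUPERCHIPS_PER_SYSTEM

# ASSUMPTION: the 11.5 exaflops (FP4) headline figure applies to eight systems.
fp4_petaflops_per_gpu = 11.5 * 1000 / totals["gpus"]
print(round(fp4_petaflops_per_gpu, 1))  # roughly 20 petaflops per GPU
```

Calling `superpod_totals` with a larger system count gives the totals for a scaled-out deployment, under the same per-system assumptions.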

The new DGX SuperPOD with DGX GB200 systems features a unified compute fabric. In addition to fifth-generation NVIDIA NVLink, the fabric includes NVIDIA BlueField-3 DPUs and will support NVIDIA Quantum-X800 InfiniBand networking. This architecture provides up to 1,800 gigabytes per second of bandwidth to each GPU in the platform.
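To put the per-GPU bandwidth in context, the sketch below multiplies it across the 72 GPUs in one DGX GB200 system. This is a simple upper bound obtained by multiplication, not a figure reported in the article, and the function name is hypothetical. It also assumes decimal units (1 TB = 1,000 GB).

```python
# Per-GPU bandwidth quoted in the article, in gigabytes per second.
BANDWIDTH_GB_PER_S_PER_GPU = 1_800
GPUS_PER_SYSTEM = 72


def aggregate_bandwidth_tb_per_s(num_gpus: int) -> float:
    """Sum of per-GPU bandwidth in TB/s, assuming every GPU reaches the quoted peak."""
    return num_gpus * BANDWIDTH_GB_PER_S_PER_GPU / 1_000


per_system = aggregate_bandwidth_tb_per_s(GPUS_PER_SYSTEM)
print(per_system)  # 129.6
```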

New DGX SuperPOD Architecture: Efficiency and Performance

The new DGX SuperPOD architecture is designed for the era of generative AI. It features fourth-generation NVIDIA Scalable Hierarchical Aggregation and Reduction Protocol (SHARP) technology, which provides 14.4 teraflops of in-network computing, a 4x increase over the prior-generation DGX SuperPOD architecture.

The new DGX SuperPOD is a complete, data-center-scale AI supercomputer that integrates with high-performance storage from NVIDIA-certified partners to meet the demands of generative AI workloads. Each SuperPOD is built, cabled, and tested in the factory to dramatically speed deployment at customer data centers.

Intelligent Predictive-Management Capabilities of DGX SuperPOD

The Grace Blackwell-powered DGX SuperPOD features intelligent predictive-management capabilities. These capabilities continuously monitor thousands of data points across hardware and software to predict and intercept sources of downtime and inefficiency, saving time, energy, and computing costs. The software can identify areas of concern and plan for maintenance, flexibly adjust compute resources, and automatically save and resume jobs to prevent downtime, even without system administrators present.

If the software detects that a replacement component is needed, the cluster will activate standby capacity to ensure work finishes in time. Any required hardware replacements can be scheduled to avoid unplanned downtime.

NVIDIA DGX B200 Systems: Advancing AI Supercomputing

In addition to the DGX SuperPOD, NVIDIA also unveiled the DGX B200 system, a unified AI supercomputing platform for AI model training, fine-tuning, and inference. The DGX B200 is the sixth generation of air-cooled, traditional rack-mounted DGX designs used by industries worldwide. The new Blackwell architecture DGX B200 system includes eight NVIDIA Blackwell GPUs and two 5th Gen Intel Xeon processors. Customers can also build a DGX SuperPOD using DGX B200 systems to create AI Centers of Excellence that can power the work of large teams of developers running many different jobs.

DGX B200 systems support the FP4 precision introduced with the new Blackwell architecture, providing up to 144 petaflops of AI performance, 1.4TB of GPU memory, and 64TB/s of memory bandwidth. This delivers 15x faster real-time inference for trillion-parameter models over the previous generation.
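Dividing the system-level DGX B200 figures by its eight GPUs gives a rough per-GPU view. The sketch below assumes the totals are split evenly across the GPUs and uses decimal units (1.4TB taken as 1,400GB). The names are illustrative, and the results are derived estimates rather than published per-GPU specifications.

```python
# System-level DGX B200 figures quoted in the article.
GPUS_PER_DGX_B200 = 8
FP4_PETAFLOPS = 144
GPU_MEMORY_GB = 1_400          # 1.4TB, assuming decimal units
MEMORY_BANDWIDTH_TB_PER_S = 64

# ASSUMPTION: totals are divided evenly across the eight GPUs.
per_gpu = {
    "fp4_petaflops": FP4_PETAFLOPS / GPUS_PER_DGX_B200,
    "memory_gb": GPU_MEMORY_GB / GPUS_PER_DGX_B200,
    "bandwidth_tb_per_s": MEMORY_BANDWIDTH_TB_PER_S / GPUS_PER_DGX_B200,
}

for name, value in per_gpu.items():
    print(f"{name}: {value}")
```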
