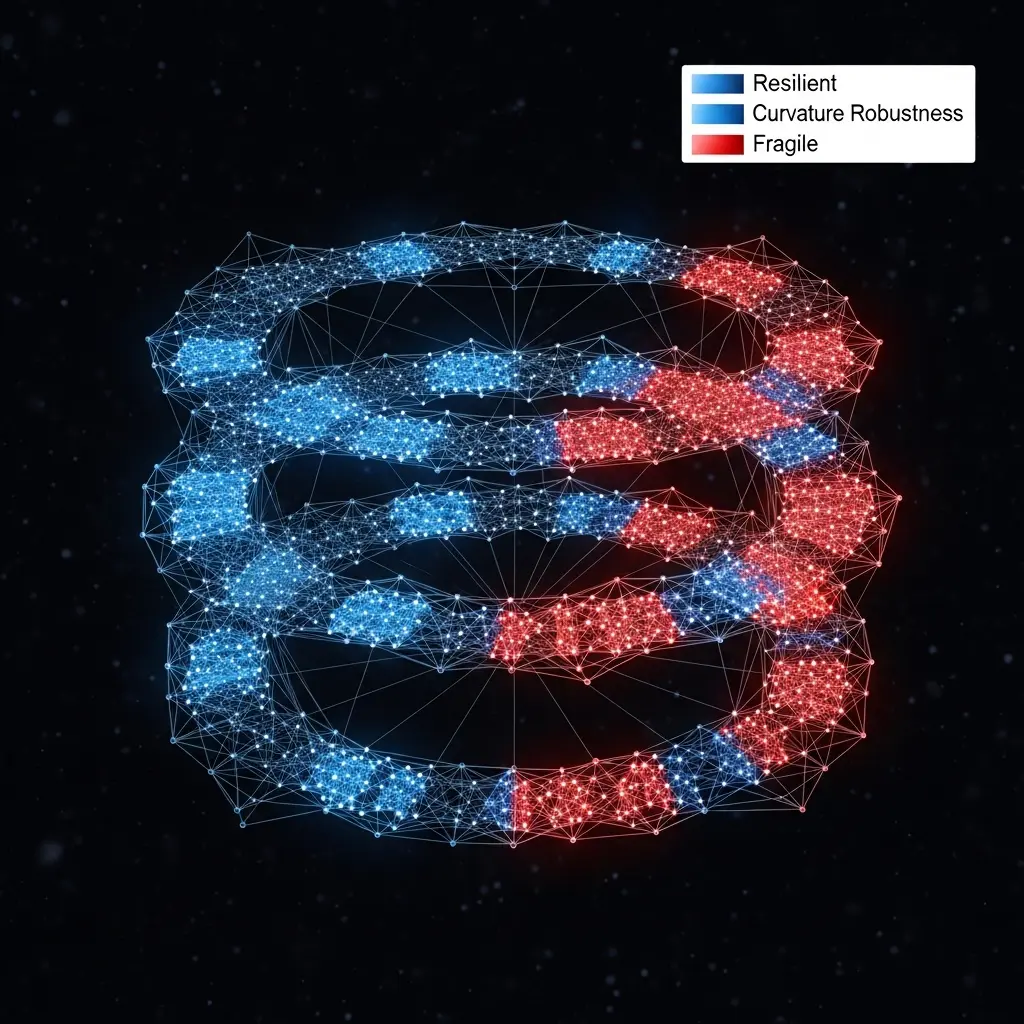

Researchers are tackling the challenge of understanding information flow within neural networks, seeking to pinpoint the connections most vital to a model’s performance. Shuhang Tan from Rensselaer Polytechnic Institute, alongside Jayson Sia and Paul Bogdan from the University of Southern California, with Radoslav Ivanov also of Rensselaer Polytechnic Institute, present an approach that uses differential geometry to analyse this process. Their work moves beyond traditional information-theoretic methods and instead employs Ollivier-Ricci curvature, a technique previously applied in fields such as traffic and biological network analysis, to identify ‘bottleneck’ edges crucial for network functionality. By calculating curvature from activation patterns, the team demonstrates that edges with negative curvature are key to performance and that removing them rapidly degrades accuracy. The authors present this as a potentially more effective basis for network pruning and symbolic analysis than current state-of-the-art techniques.

Unlike existing techniques that often rely on complex optimisation or strong distributional assumptions, this work offers a geometrically informed perspective on neural network (NN) data flow. The study represents a neural network as a weighted directed graph, with neurons as nodes and connections as edges, so that graph-theoretic tools can be applied. The team then computes a curvature for each edge, termed neural curvature (NC), from the activation patterns produced by a set of input examples, aiming to show that NC reflects the significance of each edge for the network’s overall performance.
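For reference, the standard Ollivier-Ricci curvature of an edge between nodes $x$ and $y$ is usually written as follows. This is the textbook definition; how the paper adapts it to a directed graph weighted by activations is not described in this summary, so the symbols below are the generic ones rather than the authors’ notation.

$$
\kappa(x, y) = 1 - \frac{W_1(\mu_x, \mu_y)}{d(x, y)}
$$

Here $d(x, y)$ is the graph distance between the two nodes, $\mu_x$ and $\mu_y$ are probability measures spread over the neighbourhoods of $x$ and $y$, and $W_1$ is the Wasserstein-1 (earth mover’s) distance between them. When the two neighbourhoods are farther apart than the nodes themselves, $W_1 > d$ and the curvature is negative, which is the signature of a bridge or bottleneck edge.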

Better identification of dispensable connections opens avenues for more efficient model compression and a deeper understanding of the data pathways within complex neural networks. The research establishes a continuous measure of edge importance, which avoids the layer collapse issues often seen in other pruning methods. Drawing on differential geometry, the authors show that graph curvature can rank connections across the entire model, making it a useful tool for analysing NN data flows. The work also points towards future advances in symbolic NN analysis, including robustness analysis and model repair, with the longer-term goal of more reliable and interpretable artificial intelligence systems.

Neural curvature maps data flow in networks

The study constructs a weighted directed graph representing the NN, where neurons become nodes and connections define edges, so that graph-theoretic tools can be used to study how information propagates. Whereas traditional pruning techniques focus on reducing model size, this method uses concepts from differential geometry to estimate data flows directly. Specifically, the researchers generated activation patterns by feeding input examples through the trained NNs and then used these patterns to calculate the curvature of each edge in the induced graph. This yields a ranking of edges by NC value, which the team used to identify potentially unimportant connections for subsequent pruning experiments. Because it pinpoints crucial connections within the network, the same ranking could support symbolic NN analysis, including robustness assessment and model repair.
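The pipeline described above (activations, then a weighted graph, then a curvature per edge) can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the authors’ implementation: the function names are hypothetical, the edge strength is assumed to be the mean absolute contribution |a_i · w_ij| over the inputs, the graph is treated as undirected, distances are hop counts, and each node keeps a fraction `alpha` of its mass in the random-walk measure.

```python
import numpy as np
from scipy.optimize import linprog
from scipy.sparse.csgraph import shortest_path


def activation_strengths(weights, inputs):
    """Symmetric edge-strength matrix for a bias-free ReLU MLP (assumed construction).

    The strength of edge (i, j) is the mean of |a_i * w_ij| over the input examples.
    """
    sizes = [weights[0].shape[0]] + [w.shape[1] for w in weights]
    offsets = np.concatenate(([0], np.cumsum(sizes)))
    s = np.zeros((offsets[-1], offsets[-1]))
    a = inputs
    for k, w in enumerate(weights):
        block = np.abs(a).mean(axis=0)[:, None] * np.abs(w)
        s[offsets[k]:offsets[k + 1], offsets[k + 1]:offsets[k + 2]] = block
        a = np.maximum(a @ w, 0.0)  # ReLU forward pass to the next layer
    return s + s.T


def lazy_measure(strength, x, alpha):
    """Keep mass alpha at x and spread the rest over neighbours by edge strength."""
    mu = (1.0 - alpha) * strength[x] / strength[x].sum()
    mu[x] += alpha
    return mu


def wasserstein1(cost, mu, nu):
    """Solve the optimal-transport linear program between mu and nu."""
    m, n = cost.shape
    a_eq = np.zeros((m + n, m * n))
    for i in range(m):
        a_eq[i, i * n:(i + 1) * n] = 1.0  # mass leaving source i equals mu[i]
    for j in range(n):
        a_eq[m + j, j::n] = 1.0           # mass arriving at target j equals nu[j]
    res = linprog(cost.ravel(), A_eq=a_eq, b_eq=np.concatenate((mu, nu)),
                  bounds=(0, None))
    return res.fun


def ollivier_ricci(strength, alpha=0.5):
    """Return {(x, y): curvature} for every undirected edge with x < y."""
    hops = shortest_path((strength > 0).astype(float), unweighted=True)
    curvature = {}
    for x, y in zip(*np.nonzero(np.triu(strength))):
        mu = lazy_measure(strength, x, alpha)
        nu = lazy_measure(strength, y, alpha)
        sx, sy = np.flatnonzero(mu), np.flatnonzero(nu)
        w1 = wasserstein1(hops[np.ix_(sx, sy)], mu[sx], nu[sy])
        curvature[(int(x), int(y))] = 1.0 - w1 / hops[x, y]
    return curvature
```

Calling `ollivier_ricci(activation_strengths(weights, inputs))` gives one score per connection. On toy graphs the sketch behaves as the definition predicts: an edge inside a triangle scores 0.75, while an edge that bridges two small stars scores about -0.33, matching the intuition that negative curvature marks a bottleneck.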

Neural curvature identifies critical network connections for learning

The research introduces a new approach to understanding NN data flow, moving beyond traditional information-theoretic methods and focusing on graph curvature, specifically Ollivier-Ricci curvature (ORC). The technique identifies critical connections within NNs, offering a tool for symbolic NN analysis such as robustness assessment and model repair. The researchers calculated curvatures from the activation patterns of input examples and found that NC ranks edges according to their importance to NN functionality: removing negative-ORC edges rapidly degraded accuracy. Conversely, removing positive-ORC edges had minimal impact, supporting the method’s ability to distinguish between critical and unimportant connections.

The team’s analysis indicates that NC provides a continuous measure of edge importance, ranking connections across the entire model and avoiding the layer collapse issues often seen with other methods. According to the authors, the technique identifies key data pathways and provides a basis for future curvature-based methods for NN analysis, with possible applications in model repair and robustness. It offers a way to study the internal workings of these systems without relying on complex optimisation or strong distributional assumptions. The reported experiments suggest that the proposed NC metric captures edge importance, making it a useful resource for researchers seeking to improve the reliability and interpretability of neural networks. The work also opens avenues for compositional analysis of NN graph structure and the corresponding data patterns, potentially leading to more robust and trustworthy artificial intelligence systems.
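Because the score is a single continuous value per edge, a global ranking across layers is straightforward to apply. The sketch below is a hypothetical pruning helper, not the paper’s procedure: it assumes a curvature array with the same shape as each weight matrix, and it removes the most positive edges first, following the reported finding that positive-curvature edges matter least.

```python
import numpy as np


def prune_by_curvature(weights, curvatures, fraction):
    """Zero the given fraction of edges with the largest curvature, ranked globally.

    `weights` and `curvatures` are lists of same-shaped arrays, one pair per layer.
    Ties at the threshold are all pruned, so slightly more edges may be removed.
    """
    scores = np.concatenate([c.ravel() for c in curvatures])
    k = int(fraction * scores.size)
    if k == 0:
        return [w.copy() for w in weights]
    threshold = np.partition(scores, -k)[-k]  # k-th largest curvature overall
    return [np.where(c >= threshold, 0.0, w) for w, c in zip(weights, curvatures)]
```

Ranking over the concatenated scores, rather than layer by layer, is what lets a single threshold apply to the whole model; whether this alone prevents layer collapse in practice depends on the curvature values, which is the property the paper reports for NC.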

👉 More information

🗞 Analyzing Neural Network Information Flow Using Differential Geometry

🧠 ArXiv: https://arxiv.org/abs/2601.16366