Medical vision-language models hold immense potential for transforming healthcare, offering tools for automated report generation and assisting physicians with complex diagnoses, but realising this promise requires addressing significant security and accessibility challenges. Xiao Li, Yanfan Zhu, Ruining Deng, and colleagues present MedFoundationHub, a new toolkit designed to deploy these powerful models safely and efficiently. This system allows clinicians to utilise advanced models without programming expertise, while also enabling engineers to integrate open-source options with ease, all within a secure, privacy-preserving framework. Importantly, MedFoundationHub operates on standard hardware, requiring only a single high-end GPU, and the team demonstrates its capabilities through evaluations with board-certified pathologists assessing five state-of-the-art models on colon and renal cases, revealing both the potential and current limitations of these emerging technologies.

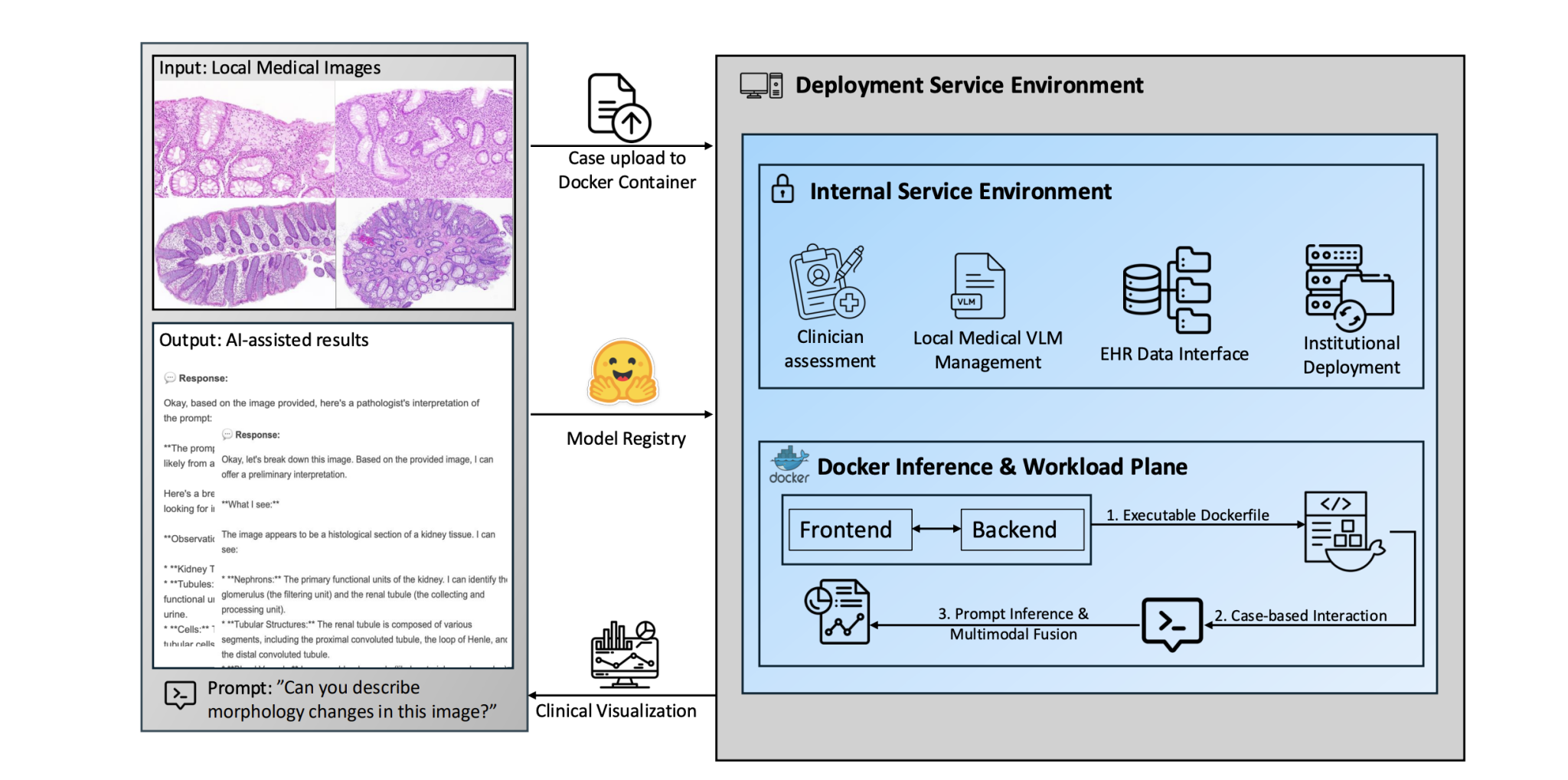

Medical vision-language models (VLMs) open up remarkable opportunities for clinical applications such as automated report generation, physician copilots, and uncertainty quantification. Despite their promise, they raise serious security concerns, including the risk of Protected Health Information (PHI) exposure, data leakage, and vulnerability to cyberthreats. These concerns are especially critical in hospital environments. Even when the models are adopted only for research or non-clinical purposes, healthcare organizations must exercise caution and implement safeguards. To address these challenges, the researchers present MedFoundationHub, a graphical user interface (GUI) toolkit that enables physicians to manually select and use different models.

Pathology AI Deployment Toolkit and Evaluation

This research details the development and evaluation of MedFoundationHub, a toolkit designed to facilitate the deployment of large multimodal models (LMMs), specifically vision-language models (VLMs), within a clinical pathology workflow. The core goal is to bridge the gap between promising AI models and practical, reliable diagnostic use. The key contributions are the deployment toolkit itself, a clinical evaluation by pathologists, and an analysis of where current models fall short. MedFoundationHub provides a secure, privacy-preserving infrastructure for deploying and testing VLMs on real-world pathology data, such as whole slide images and reports.

The toolkit addresses challenges related to data privacy, model access, and integration with existing clinical systems. The team evaluated several state-of-the-art VLMs, including Google-MedGemma3-4B, Qwen2-7B, and Qwen2.5-7B, on a curated set of colon and renal pathology cases drawn from PathologyOutlines.com and Arkana Labs. The evaluation revealed that even advanced VLMs struggle with complex diagnostic reasoning: they often give vague answers, lack domain-specific knowledge, or misuse nuanced terminology. This highlights the need for specialized training and adaptation of these models for medical applications.

The authors emphasize the crucial role of pathologists in evaluating and refining AI models: integrating clinical expertise into the development loop is essential for building trustworthy, reliable AI systems. Future plans include extending MedFoundationHub with support for more models, advanced visualization tools, and longitudinal user studies to assess the impact of AI on clinical workflows. The key takeaways are that LMMs show promise but are not yet ready for widespread clinical use, that domain-specific adaptation is critical, that human-AI collaboration is essential, and that deployment infrastructure matters. In essence, the paper advocates a responsible, clinically informed approach to AI adoption in pathology, built on rigorous evaluation and ongoing collaboration between AI developers and medical professionals.

Secure Medical VLM Deployment and Evaluation Toolkit

Researchers developed MedFoundationHub, a toolkit that addresses critical security and accessibility challenges associated with the growing use of medical vision-language models (VLMs). The system lets both clinicians and engineers deploy and evaluate these models locally without extensive programming expertise, and it protects privacy by running inside a secure, isolated environment. Open-source models from Hugging Face can be integrated efficiently, and because inference runs locally, sensitive patient data remain under institutional control. MedFoundationHub runs on a single NVIDIA A6000 GPU, making it practical for both academic research labs and clinical environments.
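Whether a model fits on one GPU can be sanity-checked with simple arithmetic: weight memory is roughly the parameter count times the bytes stored per parameter. The sketch below is an illustration, not the toolkit's code. It assumes 16-bit (bf16) weights, takes parameter counts from the model names (4B for MedGemma3-4B, 7B for the Qwen models), uses the A6000's 48 GB as the budget, and reserves a hypothetical 20% of that budget for activations and the KV cache. The helper names are made up, and real usage also depends on image resolution, batch size, and the serving framework.

```python
# Back-of-the-envelope check: do a model's weights fit on one 48 GB GPU?
# Assumptions: weights dominate memory; activations and the KV cache get the
# remaining headroom. Sizes use decimal gigabytes (1 GB = 1e9 bytes).

A6000_MEMORY_GB = 48.0  # NVIDIA RTX A6000 memory capacity

# Bytes needed to store one parameter at common precisions.
BYTES_PER_PARAM = {"fp32": 4.0, "bf16": 2.0, "fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(params_billion: float, dtype: str = "bf16") -> float:
    """Approximate memory for the weights alone: params x bytes per param."""
    # (params_billion * 1e9 params) * bytes / (1e9 bytes per GB) simplifies to this.
    return params_billion * BYTES_PER_PARAM[dtype]


def fits_on_gpu(params_billion: float, dtype: str = "bf16",
                gpu_gb: float = A6000_MEMORY_GB, weight_share: float = 0.8) -> bool:
    """True if weights use at most `weight_share` of GPU memory (hypothetical 80% budget)."""
    return weight_memory_gb(params_billion, dtype) <= weight_share * gpu_gb


if __name__ == "__main__":
    for size in (4.0, 7.0, 34.0):
        print(f"{size:.0f}B params: {weight_memory_gb(size):.1f} GB (bf16), "
              f"fits on one A6000: {fits_on_gpu(size)}")
```

Under these assumptions a 7B model needs about 14 GB for its weights, well within 48 GB, whereas a 34B model stored at 16 bits would not fit without quantization.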

To rigorously evaluate the capabilities of current VLMs, the team engaged board-certified pathologists to assess five state-of-the-art models, including Google-MedGemma3-4B and Qwen2, across 1015 clinician-model scoring events. These evaluations, focused on colon and renal cases, revealed recurring limitations in the models’ performance, including instances of off-target answers, vague reasoning, and inconsistencies in pathology terminology. The findings demonstrate both the potential and current shortcomings of medical VLMs in real diagnostic scenarios. The system’s architecture isolates sensitive data and inference processes within a secure, containerized environment, minimizing the risk of data breaches and cyberattacks.
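At the process level, one way to keep inference from reaching the network is to make the Hugging Face libraries load only locally cached weights. The sketch below shows how such isolation could be configured, under stated assumptions; it is not MedFoundationHub's implementation. The environment variables (`HF_HOME`, `HF_HUB_OFFLINE`, `TRANSFORMERS_OFFLINE`, `HF_HUB_DISABLE_TELEMETRY`) are documented Hugging Face settings, while the function name and cache path are hypothetical. It complements container-level isolation (for example, running the container with Docker's `--network none`) rather than replacing it.

```python
import os
from pathlib import Path
from typing import Dict


def configure_offline_inference(model_cache: str) -> Dict[str, str]:
    """Restrict Hugging Face libraries to locally cached weights (hypothetical helper).

    Call before importing `transformers` or `huggingface_hub`, because
    some of these variables are read when the libraries are imported.
    """
    settings = {
        "HF_HOME": str(Path(model_cache).resolve()),  # root of the local cache
        "HF_HUB_OFFLINE": "1",            # huggingface_hub: no HTTP calls to the Hub
        "TRANSFORMERS_OFFLINE": "1",      # transformers: load from local files only
        "HF_HUB_DISABLE_TELEMETRY": "1",  # no usage telemetry
    }
    os.environ.update(settings)
    return settings


if __name__ == "__main__":
    # Hypothetical path to weights staged inside the container image.
    applied = configure_offline_inference("/opt/medhub/models")
    print(applied["HF_HUB_OFFLINE"])
```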

A dual registry design accommodates both open-source and institution-specific models, ensuring reproducible deployments and version control. Clinicians interact with the system through a case-centric dashboard, allowing them to compare predictions from different models and provide structured feedback, which is then used to generate high-value ground truth data for benchmarking. This physician-in-the-loop evaluation establishes an end-to-end workflow for secure institutional deployment, systematic performance assessment, and integration into diagnostic practice.
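A dual registry with pinned versions can be sketched as two namespaces keyed by model name. The summary does not describe the registry's schema, so `ModelEntry`, `DualRegistry`, the two origin labels, and the rule that institutional models shadow public models with the same name are all hypothetical choices. What matters is that every entry carries a pinned revision (a Hub commit hash or an internal version tag), so a given name always resolves to the same weights.

```python
from dataclasses import dataclass
from typing import Dict

ORIGINS = ("institutional", "open_source")  # lookup order: institutional first (hypothetical policy)


@dataclass(frozen=True)
class ModelEntry:
    name: str      # name shown to clinicians in the dashboard
    source: str    # Hub repo id or internal artifact location
    revision: str  # pinned commit hash or internal version tag
    origin: str    # "open_source" or "institutional"


class DualRegistry:
    """Two namespaces of pinned models: public Hub models and in-house models."""

    def __init__(self) -> None:
        self._entries: Dict[str, Dict[str, ModelEntry]] = {o: {} for o in ORIGINS}

    def register(self, entry: ModelEntry) -> None:
        if entry.origin not in self._entries:
            raise ValueError(f"unknown origin: {entry.origin!r}")
        if not entry.revision:
            raise ValueError("a pinned revision is required for reproducible deployment")
        bucket = self._entries[entry.origin]
        existing = bucket.get(entry.name)
        if existing is not None and existing != entry:
            # Swapping weights under an existing name would silently break reproducibility.
            raise ValueError(f"{entry.name!r} is already registered with a different version")
        bucket[entry.name] = entry

    def resolve(self, name: str) -> ModelEntry:
        for origin in ORIGINS:
            if name in self._entries[origin]:
                return self._entries[origin][name]
        raise KeyError(name)


if __name__ == "__main__":
    registry = DualRegistry()
    # All identifiers below are placeholders, not real repositories.
    registry.register(ModelEntry("vlm-7b", "example-org/vlm-7b", "3f2a9c1", "open_source"))
    registry.register(ModelEntry("renal-vlm", "internal://renal-vlm", "v1.2.0", "institutional"))
    print(registry.resolve("renal-vlm").revision)
```

Rejecting a re-registration with a different revision forces a new name or an explicit version bump, which keeps earlier benchmark results tied to the exact weights that produced them.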

Local Evaluation Reveals VLM Performance Variation

Evaluations of the five state-of-the-art VLMs reveal varying levels of performance, with Qwen2-7B and Qwen2.5-7B generally achieving the highest scores in expert pathologist assessment. These models correctly diagnosed approximately half of the cases, demonstrating potential for clinical utility, while Google-MedGemma3-4B performed slightly worse. However, performance deteriorated considerably on a separate dataset, where Qwen2.5-7B largely failed to provide even partially correct diagnoses.
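Per-model results like these come from aggregating individual clinician-model scoring events (the study reports 1015 of them). A minimal sketch, assuming a three-level label scale (correct, partially correct, incorrect) inferred from the wording above rather than taken from the paper's rubric, computes the fraction of each label per model and dataset. `ScoringEvent`, `summarize`, and the toy events are hypothetical and made up for illustration; they are not the study's data.

```python
from collections import Counter, defaultdict
from typing import Dict, Iterable, NamedTuple, Tuple

LABELS = ("correct", "partial", "incorrect")  # hypothetical three-level scale


class ScoringEvent(NamedTuple):
    pathologist: str
    model: str
    dataset: str  # e.g. "colon" or "renal"
    case_id: str
    label: str    # one of LABELS


def summarize(events: Iterable[ScoringEvent]) -> Dict[Tuple[str, str], Dict[str, float]]:
    """Fraction of each label for every (model, dataset) pair."""
    counts: Dict[Tuple[str, str], Counter] = defaultdict(Counter)
    for event in events:
        if event.label not in LABELS:
            raise ValueError(f"unexpected label: {event.label!r}")
        counts[(event.model, event.dataset)][event.label] += 1
    return {
        key: {label: c[label] / sum(c.values()) for label in LABELS}
        for key, c in counts.items()
    }


if __name__ == "__main__":
    # Toy events for illustration only; not the study's data.
    toy = [
        ScoringEvent("path-1", "model-A", "colon", "c1", "correct"),
        ScoringEvent("path-2", "model-A", "colon", "c1", "incorrect"),
        ScoringEvent("path-1", "model-A", "renal", "r1", "partial"),
    ]
    for (model, dataset), fractions in summarize(toy).items():
        print(model, dataset, fractions)
```

Keeping the dataset in the grouping key is what makes a drop like the one described above visible: the same model can score well on one case set and poorly on another.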

Recurring issues included inaccurate or incomplete reasoning, inconsistent use of pathology terminology, and a tendency to provide confident but morphologically incorrect answers. The authors acknowledge that consistent scoring proved difficult due to the models’ varying output styles and that the evaluation focused on a limited set of cases, potentially influencing the observed results. Future work should focus on improving model reasoning capabilities, standardising terminology, and expanding datasets to encompass a wider range of pathologies, ultimately striving for trustworthy and safe performance in real-world clinical settings.
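Inconsistent terminology also complicates scoring, because the same entity can appear under different names. One simple mitigation, sketched here as an assumption rather than the paper's method, is to normalize model outputs against a synonym table before comparison. The table is illustrative only: FSGS is the standard abbreviation for focal segmental glomerulosclerosis, Berger's disease is another name for IgA nephropathy, and current WHO terminology uses "sessile serrated lesion" for what was previously called sessile serrated adenoma/polyp. A real deployment would use a vocabulary curated with pathologists.

```python
import re
from typing import Dict

# Illustrative synonym table, not taken from the paper.
CANONICAL_TERMS: Dict[str, str] = {
    "fsgs": "focal segmental glomerulosclerosis",
    "berger disease": "iga nephropathy",
    "berger's disease": "iga nephropathy",
    "sessile serrated adenoma": "sessile serrated lesion",
    "sessile serrated polyp": "sessile serrated lesion",
}


def normalize_diagnosis(text: str) -> str:
    """Lower-case, collapse whitespace, drop a trailing period, and map synonyms."""
    cleaned = re.sub(r"\s+", " ", text.strip().lower()).rstrip(".")
    # Longest synonyms first, so a longer phrase wins over any shorter one it contains.
    for synonym in sorted(CANONICAL_TERMS, key=len, reverse=True):
        pattern = r"\b" + re.escape(synonym) + r"\b"
        cleaned = re.sub(pattern, CANONICAL_TERMS[synonym], cleaned)
    return cleaned


if __name__ == "__main__":
    print(normalize_diagnosis("  FSGS. "))
    print(normalize_diagnosis("Sessile serrated adenoma, no dysplasia"))
```

Word-boundary matching keeps an abbreviation from matching inside a longer token; paraphrases and negations ("no evidence of FSGS") are beyond what a lookup table can handle and would still need a pathologist's judgment.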

🗞 MedFoundationHub: A Lightweight and Secure Toolkit for Deploying Medical Vision Language Foundation Models

🧠 ArXiv: https://arxiv.org/abs/2508.20345