The increasing demand for long-context language models, essential for tasks ranging from document analysis to complex reasoning, currently faces significant limitations due to the computational cost of processing extensive text sequences. Jiale Cheng, Yusen Liu, and Xinyu Zhang, along with colleagues at Tsinghua University, present a novel approach called Glyph that tackles this challenge by shifting from token-based processing to visual context scaling. Glyph compresses lengthy texts into images, allowing vision-language models to efficiently process the information while retaining crucial semantic details. This innovative method achieves three to four times compression in token length, maintains accuracy comparable to state-of-the-art language models, and dramatically accelerates both processing and training speeds, potentially enabling a 128K-context model to handle tasks requiring over a million tokens.

Glyph, Efficient Long Context Compression for LLMs

Applications increasingly rely on long-context modeling for tasks such as document understanding, code analysis, and multi-step reasoning. However, scaling context windows to handle millions of tokens presents significant computational and memory challenges, limiting the practicality of long-context large language models. Researchers have introduced Glyph, a framework that tackles this challenge by visually scaling context. Instead of processing increasingly long sequences of tokens, Glyph renders long texts into images and processes these images with vision-language models, substantially compressing textual input while preserving semantic information.

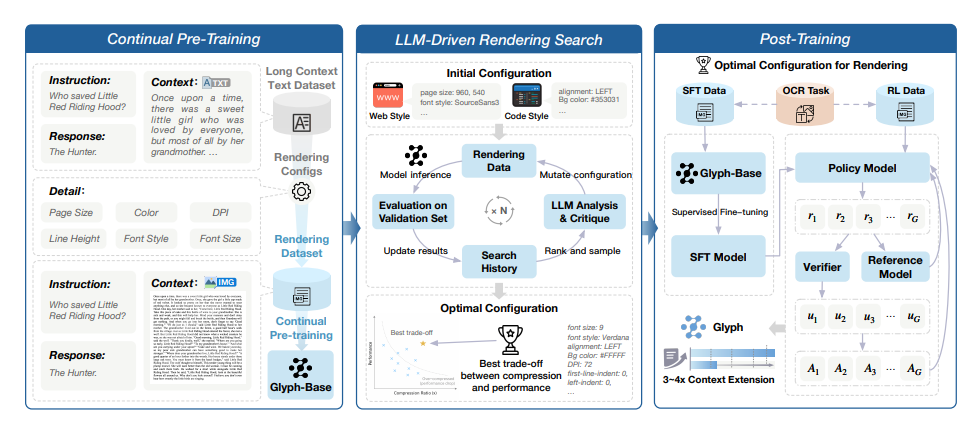

The team also designed an LLM-driven genetic search to identify optimal rendering configurations. Glyph compresses long documents before they are fed to the vision-language model, reducing the sequence length and improving both speed and memory usage while keeping performance competitive. This allows training and inference at longer effective context lengths than hardware limits would otherwise permit. The method relies on a controllable rendering pipeline that adjusts page layout, font choices, spacing, margins, and indentation to maximize compression while keeping the document readable and its information intact.
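To make the trade-off in the rendering pipeline concrete, here is a minimal back-of-the-envelope sketch in Python. It is not the authors' pipeline: the `RenderConfig` fields, the default values, the 28-pixel patch size, and the four-characters-per-token figure are all illustrative assumptions. It estimates how many text tokens fit on one rendered page and divides by the number of visual tokens that page would cost.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class RenderConfig:
    """Hypothetical rendering knobs; names and defaults are illustrative only."""
    page_width_px: int = 896
    page_height_px: int = 1280
    margin_px: int = 32
    font_size_px: int = 12
    line_spacing: float = 1.2      # line height as a multiple of font size
    char_width_ratio: float = 0.5  # assumed average glyph width / font size


def chars_per_page(cfg: RenderConfig) -> int:
    """Rough count of characters that fit on one rendered page."""
    usable_w = cfg.page_width_px - 2 * cfg.margin_px
    usable_h = cfg.page_height_px - 2 * cfg.margin_px
    chars_per_line = int(usable_w / (cfg.font_size_px * cfg.char_width_ratio))
    lines = int(usable_h / (cfg.font_size_px * cfg.line_spacing))
    return chars_per_line * lines


def visual_tokens_per_page(cfg: RenderConfig, patch_px: int = 28) -> int:
    """Visual tokens, assuming one token per patch_px x patch_px tile."""
    return (cfg.page_width_px // patch_px) * (cfg.page_height_px // patch_px)


def compression_ratio(cfg: RenderConfig,
                      chars_per_text_token: float = 4.0,
                      patch_px: int = 28) -> float:
    """Estimated text tokens per visual token for one full page."""
    text_tokens = chars_per_page(cfg) / chars_per_text_token
    return text_tokens / visual_tokens_per_page(cfg, patch_px)


if __name__ == "__main__":
    base = RenderConfig()
    dense = replace(base, font_size_px=10)  # smaller font, denser page
    print(f"12 px font: {compression_ratio(base):.2f}x")
    print(f"10 px font: {compression_ratio(dense):.2f}x")
```

Under these assumptions, shrinking the font raises the estimated ratio, which is the lever the search has to balance against legibility for the model. A real measurement would use the actual tokenizer and vision encoder rather than these fixed constants.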

The configuration search is combined with continual pre-training of a long-context backbone model and with supervised fine-tuning and reinforcement learning. Experiments show that Glyph achieves competitive performance on long-context tasks while significantly improving efficiency. The method was evaluated on benchmarks including LongBench, MRCR, RULER, and MMLongBench-Doc, and compared against models such as GPT-4, LLaMA-3, Qwen3, and GLM-4. Results show substantial gains in both training and inference efficiency, making Glyph a promising approach to the challenges of long-context modeling.
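The general shape of a genetic search over rendering settings can be sketched as follows. This is a plain genetic algorithm with random mutation and crossover, not the LLM-driven variant the article describes, and the `SEARCH_SPACE` values and `toy_fitness` function are hypothetical placeholders. In a real setup the fitness would come from rendering validation data and measuring both model accuracy and compression.

```python
import random

# Hypothetical search space; the real pipeline exposes more factors.
SEARCH_SPACE = {
    "font_size_px": [8, 10, 12, 14],
    "line_spacing": [1.0, 1.2, 1.5],
    "margin_px": [8, 16, 32],
}


def random_config(rng: random.Random) -> dict:
    """Sample one rendering configuration uniformly at random."""
    return {key: rng.choice(values) for key, values in SEARCH_SPACE.items()}


def mutate(cfg: dict, rng: random.Random) -> dict:
    """Resample a single randomly chosen setting."""
    child = dict(cfg)
    key = rng.choice(list(SEARCH_SPACE))
    child[key] = rng.choice(SEARCH_SPACE[key])
    return child


def crossover(a: dict, b: dict, rng: random.Random) -> dict:
    """Take each setting from one of the two parents at random."""
    return {key: rng.choice([a[key], b[key]]) for key in SEARCH_SPACE}


def toy_fitness(cfg: dict) -> float:
    """Placeholder score: rewards dense pages, penalizes very small fonts."""
    density = 1.0 / (cfg["font_size_px"] * cfg["line_spacing"])
    legibility_penalty = 0.05 if cfg["font_size_px"] < 10 else 0.0
    return density - legibility_penalty - 0.0001 * cfg["margin_px"]


def genetic_search(fitness, generations: int = 20,
                   population_size: int = 12, seed: int = 0) -> dict:
    """Keep the top half each generation and refill with mutated offspring."""
    rng = random.Random(seed)
    population = [random_config(rng) for _ in range(population_size)]
    for _ in range(generations):
        population.sort(key=fitness, reverse=True)
        parents = population[: population_size // 2]
        children = []
        while len(parents) + len(children) < population_size:
            a, b = rng.sample(parents, 2)
            children.append(mutate(crossover(a, b, rng), rng))
        population = parents + children
    return max(population, key=fitness)


if __name__ == "__main__":
    print(genetic_search(toy_fitness))
```

Because the top half of each generation is carried over unchanged, the best score never gets worse from one generation to the next. The toy penalty on small fonts stands in for the accuracy loss a model would show when text becomes too dense to read.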

Text Compression via Vision-Language Models

Scientists have developed Glyph, a new framework that addresses the computational challenges of processing extremely long sequences of text. Instead of extending the capacity of traditional language models, the team compressed textual input into images and processed these images with vision-language models, bypassing the prohibitive memory and computational costs associated with very long sequences while preserving semantic information. The method achieves 3 to 4 times compression of long text sequences while maintaining accuracy comparable to leading language models. This compression not only extends the effective context length but also significantly improves processing speed, delivering up to 4.8 times faster pre-filling and 4.4 times faster decoding, with supervised fine-tuning training approximately 2 times faster.

The team achieved this performance through a three-stage process, beginning with continual pre-training to teach the vision-language model to understand rendered text with diverse visual styles. Furthermore, an LLM-driven genetic search automatically identifies optimal rendering configurations, ensuring the best balance between compression and performance. Results show that incorporating rendered text data enhances performance on real-world multimodal long-context tasks, such as document understanding, demonstrating the practical benefits of this visual compression technique. The work paves the way for scaling context length without incurring substantial computational costs, potentially enabling a 128K-context vision-language model to handle tasks requiring 1 million tokens.
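The arithmetic behind these context figures is simple enough to check directly. The short Python sketch below uses hypothetical helper names and treats "128K" as 128,000 tokens for simplicity; it multiplies a visual context window by a compression ratio and, in the other direction, computes the ratio needed to reach a target length.

```python
def effective_text_context(visual_context_tokens: int,
                           compression_ratio: float) -> int:
    """Text tokens representable when each visual token carries
    `compression_ratio` text tokens on average."""
    return int(visual_context_tokens * compression_ratio)


def required_ratio(target_text_tokens: int,
                   visual_context_tokens: int) -> float:
    """Compression ratio needed to fit the target text into the window."""
    return target_text_tokens / visual_context_tokens


if __name__ == "__main__":
    window = 128_000  # "128K" taken as 128,000 tokens (assumption)
    print(effective_text_context(window, 3.0))  # 384000
    print(effective_text_context(window, 4.0))  # 512000
    print(required_ratio(1_000_000, window))    # 7.8125
```

At 3 to 4 times compression, a 128K window covers roughly 384K to 512K text tokens. Reaching 1 million tokens would take a ratio of about 8, above the reported 3 to 4 times range, which fits the article's wording that this is a potential capability rather than a measured one.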

Visual Glyphs Compress Long Contexts Efficiently

Glyph presents a novel framework for efficient long-context modeling, achieving substantial compression of textual input by rendering it into images for processing with vision-language models. Through continual pre-training and an LLM-driven search for optimal visual configurations, the team demonstrates 3 to 4 times context compression while maintaining performance comparable to leading large language models. Experiments reveal significant gains in both inference speed and memory efficiency, alongside cross-modal benefits for tasks such as document understanding. These findings demonstrate the potential of enhancing token information density as a promising new approach to scaling long-context models, offering an alternative to existing attention-based methods.

The team also reports expanding the effective context by a factor of eight, with performance on par with established models designed for million-token contexts, which suggests considerable headroom for extending usable context further. While performance can be sensitive to rendering parameters such as resolution and font, the method represents a significant step forward in long-context modeling. Future research directions include developing adaptive rendering models that tailor visualizations to specific tasks and exploring a wider range of applications, particularly those requiring agentic capabilities or complex reasoning.

🗞 Glyph: Scaling Context Windows via Visual-Text Compression

🧠 ArXiv: https://arxiv.org/abs/2510.17800