MIT researchers have developed a system that reconstructs indoor scenes, including hidden objects and people, using only reflected wireless signals, a capability that could improve robotic perception and spatial understanding. Building on more than a decade of work with surface-penetrating wireless technology, the team now uses generative artificial intelligence to reconstruct shapes and interpret scenes more precisely. Unlike many existing methods, the approach uses a single stationary radar, which eliminates the need for sensors on mobile robots and preserves privacy within the monitored space. Potential applications range from optimizing warehouse logistics to improving human-robot interaction in smart homes. “What we’ve done now is develop generative AI models that help us understand wireless reflections,” says Fadel Adib, associate professor in the Department of Electrical Engineering and Computer Science. “This opens up a lot of interesting new applications, but technically it is also a qualitative leap in capabilities.”

Millimeter Wave Specularity Limits 3D Object Reconstruction

Millimeter waves (mmWaves) typically bounce off surfaces the way light bounces off a mirror, which creates a significant hurdle for accurate 3D object reconstruction. Researchers at MIT have long explored using wireless signals to “see” through obstacles and locate hidden objects, but this behavior, known as specular reflection, has limited their precision. Because the waves reflect in a single direction, large portions of an object’s surface never return a signal to the sensor, which prevents complete shape reconstruction. Previously, the Adib Group interpreted reflected signals using principles of physics alone, a method that inherently limited reconstruction accuracy. The team, led by Fadel Adib, has now addressed this limitation by integrating generative artificial intelligence models, which brings a new level of detail to wireless object detection.

The new approach builds a partial reconstruction of a hidden object from the reflected wireless signals, then uses a specially trained generative AI model to fill in the missing parts of its shape. “We were simulating the property of specularity and the noise we get from these reflections so we can apply existing datasets to our domain,” says Maisy Lam, a research assistant on the project. By embedding the physics of mmWave reflections into existing datasets in this way, the researchers created a synthetic dataset that teaches the AI to produce plausible shape reconstructions.
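
The idea of imposing specularity and noise on an existing 3D dataset can be illustrated with a short Python sketch. It is not the researchers' simulator: it assumes a simplified model in which a single radar only receives a return from surface points whose normal points almost straight back at it, and every name and parameter here (`specular_visible_mask`, `max_angle_deg`, `noise_std`) is hypothetical.

```python
import numpy as np

def specular_visible_mask(points, normals, radar_pos, max_angle_deg=15.0):
    """Boolean mask of surface points that would reflect back to the radar.

    Assumption (simplified model): a return is received only when the angle
    between the surface normal and the direction to the radar is small.
    """
    to_radar = radar_pos - points
    to_radar = to_radar / np.linalg.norm(to_radar, axis=1, keepdims=True)
    unit_normals = normals / np.linalg.norm(normals, axis=1, keepdims=True)
    cos_angle = np.sum(unit_normals * to_radar, axis=1)
    return cos_angle >= np.cos(np.radians(max_angle_deg))

def simulate_partial_scan(points, normals, radar_pos, noise_std=0.005, seed=0):
    """Keep only the specularly visible points and add Gaussian position noise."""
    rng = np.random.default_rng(seed)
    visible = points[specular_visible_mask(points, normals, radar_pos)]
    return visible + rng.normal(0.0, noise_std, size=visible.shape)

def sample_unit_sphere(n, seed=0):
    """Random points on a unit sphere; on a sphere each point is its own normal."""
    rng = np.random.default_rng(seed)
    v = rng.normal(size=(n, 3))
    return v / np.linalg.norm(v, axis=1, keepdims=True)

# Stand-in for one object from an existing 3D dataset: a unit sphere.
sphere = sample_unit_sphere(2000)
radar = np.array([0.0, 0.0, 5.0])  # radar placed above the object
partial = simulate_partial_scan(sphere, sphere, radar)
print(f"visible fraction: {len(partial) / len(sphere):.2%}")
```

Under these assumptions only a small cap of the sphere facing the radar survives, which mirrors the "top surface only" problem described in the article. Pairs of complete shapes and their simulated partial scans are the kind of data a shape-completion model could be trained on.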

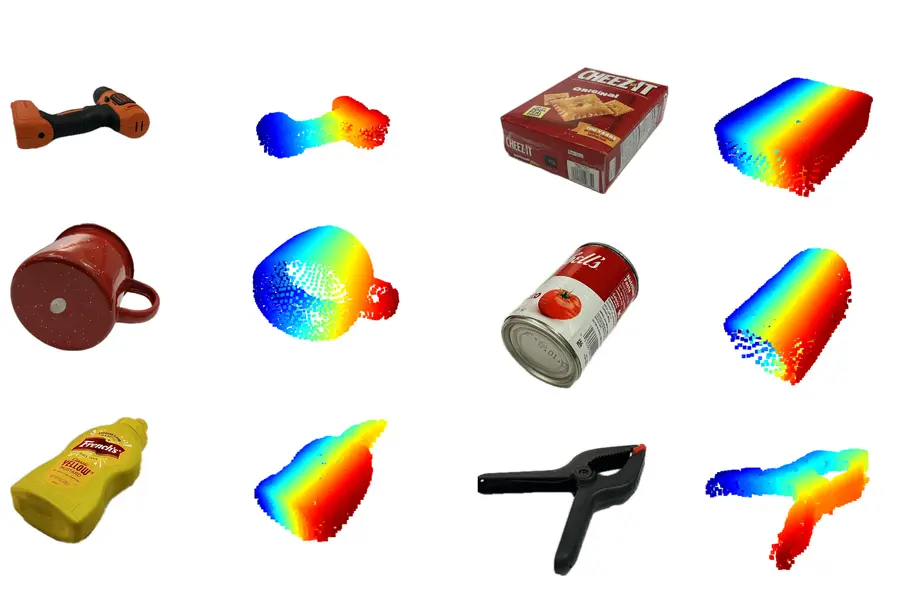

The resulting system, dubbed Wave-Former, generated faithful reconstructions of about 70 everyday objects, a nearly 20 percent improvement in accuracy over existing methods. “When we want to reconstruct an object, we are only able to see the top surface and we can’t see any of the bottom or sides,” explains Laura Dodds, lead author and research assistant, describing the limitation the system was built to overcome.

Wave-Former: Generative AI Completes Hidden Object Shapes

Perceiving and interacting with obscured objects remains a significant challenge in robotics. For over a decade, the Adib Group at MIT has pioneered methods that use surface-penetrating wireless signals, specifically millimeter waves (mmWaves), which pass through obstacles and reflect off concealed items, but a longstanding limitation has prevented complete shape recognition. The team’s latest system uses generative artificial intelligence to complete the shape of hidden 3D objects, which promises more reliable grasping and manipulation by robots operating in complex environments. Earlier versions relied heavily on physics-based interpretation of the signals and inherited the inaccuracies caused by the specular nature of mmWave reflection, in which waves bounce off surfaces in a single direction and leave large areas unobserved.

The critical advance lies in how the researchers trained the AI. Lacking sufficiently large mmWave datasets, they adapted existing computer vision datasets to simulate the characteristics of mmWave reflections. The resulting system, dubbed Wave-Former, proposes potential object surfaces based on mmWave data and then refines them using the generative AI model. In tests, it generated faithful reconstructions of about 70 everyday objects. Beyond individual objects, the team extended the approach to reconstruct entire indoor scenes, using mmWave reflections off moving humans to map room layouts. That capability depends on analyzing “ghost signals” created by multiple reflections. “By analyzing how these reflections change over time, we can start to get a coarse understanding of the environment around us,” Dodds notes.

What we’ve done now is develop generative AI models that help us understand wireless reflections. This opens up a lot of interesting new applications, but technically it is also a qualitative leap in capabilities, from being able to fill in gaps we were not able to see before to being able to interpret reflections and reconstruct entire scenes.

Fadel Adib, associate professor in the Department of Electrical Engineering and Computer Science, director of the Signal Kinetics group in the MIT Media Lab, and senior author of two papers on these techniques

RISE System Reconstructs Indoor Scenes via Multipath Reflections

Researchers at MIT are also moving beyond reconstructing individual objects to mapping entire indoor environments using wireless signals and generative artificial intelligence. The system, called RISE, hinges on interpreting “ghost signals,” secondary mmWave reflections that bounce off people and then off surfaces and are typically dismissed as noise. Tracking how these reflections change as a person moves yields a coarse reconstruction of the scene. The researchers trained a generative model to interpret these coarse reconstructions and understand the behavior of multipath mmWave reflections, filling in the gaps to create a complete scene representation. Limited training data posed a challenge. “Usually, researchers use extremely large datasets to train a generative AI model,” Adib explains. “But no mmWave datasets are large enough for training.” Instead, the researchers adapted existing computer vision datasets, simulating the properties of mmWave reflections to create a synthetic dataset for training the AI. When tested with over 100 human trajectories, the system generated reconstructions about twice as precise as existing techniques.
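
To see why ghost signals carry information about a room, consider a textbook simplification (not the RISE method itself): a double-bounce reflection off a flat wall makes a person appear at their mirror image across that wall. Under that assumption, the wall must lie halfway between each true position and its ghost. The Python sketch below works in 2D with made-up coordinates, assumes the person stays on one side of the wall, and uses hypothetical function names throughout.

```python
import numpy as np

def mirror_across_wall(points, wall_point, wall_normal):
    """Reflect 2D points across a wall given by a point on it and its normal."""
    n = wall_normal / np.linalg.norm(wall_normal)
    signed_dist = (points - wall_point) @ n
    return points - 2.0 * signed_dist[:, None] * n

def estimate_wall(person_xy, ghost_xy):
    """Estimate a wall as the line {x : normal . x = offset}.

    Under the mirror assumption the wall is the perpendicular bisector of
    every person-to-ghost segment: its normal is parallel to (ghost - person)
    and it passes through the segment midpoints.
    """
    diffs = ghost_xy - person_xy
    normal = diffs.mean(axis=0)
    normal = normal / np.linalg.norm(normal)
    midpoints = 0.5 * (person_xy + ghost_xy)
    offset = float(np.mean(midpoints @ normal))
    return normal, offset

# Made-up trajectory of a person walking through a room.
t = np.linspace(0.0, 1.0, 20)
person = np.column_stack([1.0 + 2.0 * t, -1.0 + 3.0 * t])

# Simulated ghost track: mirror image across a wall at x = 4, plus noise.
rng = np.random.default_rng(0)
ghost = mirror_across_wall(person, np.array([4.0, 0.0]), np.array([1.0, 0.0]))
ghost = ghost + rng.normal(0.0, 0.02, size=ghost.shape)

normal, offset = estimate_wall(person, ghost)
print("estimated wall normal:", np.round(normal, 2), "offset:", round(offset, 2))
```

A geometric estimate like this only recovers a coarse layout from clean, well-separated ghost tracks, which is consistent with the article's point that directly interpreting the signals is limited and that a trained generative model is used to complete the scene.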

We are using AI to finally unlock wireless vision.

Fadel Adib, associate professor in the Department of Electrical Engineering and Computer Science, director of the Signal Kinetics group in the MIT Media Lab, and senior author of two papers on these techniques

Accuracy Gains: 20% Improvement & Precise Human Tracking

Wireless sensing through obstacles is becoming more precise, with implications for warehouse automation, search and rescue, and in-home robotics. The work moves beyond detecting the presence of hidden items to reliably determining their shape and location, a critical step for robots that need to interact with the physical world. The improvement comes from a new way of handling the limitations of millimeter wave (mmWave) signals. While mmWaves can penetrate common materials, they typically reflect in a specular manner, which creates blind spots that prevent complete reconstruction. Rather than relying solely on physics-based interpretation of these signals, the team integrated generative AI models to fill in the missing information. For individual objects, this yielded a nearly 20 percent improvement over existing methods. For entire scenes, the team developed a system called RISE that generated reconstructions about twice as precise as existing techniques by using secondary reflections off people and surrounding surfaces, previously dismissed as noise, as data about the environment.

By analyzing how these reflections change over time, we can start to get a coarse understanding of the environment around us. But trying to directly interpret these signals is going to be limited in accuracy and resolution.

Laura Dodds, lead author and research assistant