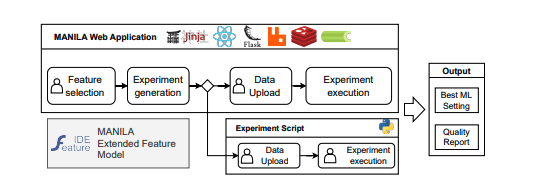

On April 29, 2025, Giordano d’Aloisio introduced MANILA, a web-based low-code application designed to benchmark machine learning models and fairness-enhancing methods. It offers a structured approach to evaluating both effectiveness and equity in AI systems.

According to the paper, MANILA lets users select the solutions that best balance fairness and effectiveness. The tool is grounded in an Extended Feature Model, which defines constraints within a Software Product Line framework and uses them to guide experiment creation so that execution errors are avoided. The authors evaluate the architecture for expressiveness and correctness, and present it as a structured approach to model selection and evaluation.
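To make the idea of constraint-guided experiment creation concrete, here is a minimal sketch of how "requires" and "excludes" constraints over a feature selection might be checked before anything runs. The feature names and the constraints themselves are hypothetical and chosen only for illustration; they are not taken from MANILA's actual feature model or code.

```python
# Hypothetical cross-tree constraints, NOT MANILA's real feature model.
# "requires": selecting the key feature needs every feature in the value set.
REQUIRES = {
    "fairness_method": {"protected_attribute"},
    "fairness_metric": {"protected_attribute"},
}
# "excludes": the two features in a pair cannot be selected together.
EXCLUDES = {
    ("regression_task", "classification_metric"),
}


def find_violations(selected):
    """Return human-readable constraint violations for a set of selected features."""
    selected = set(selected)
    violations = []
    for feature, needed in sorted(REQUIRES.items()):
        if feature in selected:
            for dependency in sorted(needed - selected):
                violations.append(f"{feature} requires {dependency}")
    for first, second in sorted(EXCLUDES):
        if first in selected and second in selected:
            violations.append(f"{first} excludes {second}")
    return violations


if __name__ == "__main__":
    # An experiment that picks a fairness method without a protected attribute.
    print(find_violations({"fairness_method", "classification_metric"}))
    # A selection that satisfies every constraint yields an empty list.
    print(find_violations({"fairness_method", "protected_attribute"}))
```

Rejecting an invalid selection at configuration time, rather than letting it fail during execution, is the general benefit the paper attributes to the feature-model approach.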

Machine learning (ML) systems are now used across industries, from diagnosing diseases to approving loans. As these systems proliferate, concerns about bias and fairness have intensified. Recent research underscores the need to detect and mitigate biases embedded within ML models to ensure equitable outcomes. This article looks at current approaches to addressing bias in machine learning, examining detection tools, mitigation strategies, and their implications for industry adoption.

Detecting bias in ML systems means auditing both the data and the model's outputs. Researchers have developed tools such as Aequitas and the What-If Tool to audit models for fairness. These tools scrutinise datasets and model outputs to identify disparities across protected attributes like race, gender, or age. For example, the What-If Tool enables users to interactively probe model decisions, uncovering potential biases that may not be immediately obvious.
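One common way to quantify such disparities is to compare the rate of positive predictions across groups. The sketch below computes per-group selection rates, the gap between the highest and lowest rate (often called the statistical parity difference), and their ratio (often called disparate impact). It is a plain-Python illustration on made-up toy data and does not use the API of Aequitas or the What-If Tool; the function names are assumptions introduced here.

```python
from collections import defaultdict


def selection_rates(predictions, groups):
    """Share of positive predictions (value 1) within each group."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for pred, group in zip(predictions, groups):
        totals[group] += 1
        positives[group] += int(pred == 1)
    return {group: positives[group] / totals[group] for group in totals}


def parity_gap_and_ratio(predictions, groups):
    """Gap and ratio between the lowest and highest group selection rates.

    Expects at least one prediction; a gap of 0 and a ratio of 1 mean
    every group receives positive predictions at the same rate.
    """
    rates = list(selection_rates(predictions, groups).values())
    highest, lowest = max(rates), min(rates)
    gap = highest - lowest
    ratio = lowest / highest if highest > 0 else 1.0
    return gap, ratio


if __name__ == "__main__":
    # Toy data: binary predictions for two groups of four people each.
    toy_predictions = [1, 1, 1, 0, 1, 0, 0, 0]
    toy_groups = ["a", "a", "a", "a", "b", "b", "b", "b"]
    print(selection_rates(toy_predictions, toy_groups))
    print(parity_gap_and_ratio(toy_predictions, toy_groups))
```

Selection-rate parity is only one of several fairness definitions; which metric is appropriate depends on the application.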

Beyond detection, mitigation strategies have evolved to address identified biases. Techniques range from preprocessing, which adjusts the training data to correct imbalances, to postprocessing, which adjusts model outputs to improve fairness. Recent studies also explore model-based approaches (often called in-processing), where bias mitigation is integrated into the training process itself, so that fairness considerations are embedded in the system from the outset.

Research has shown that automated preprocessing techniques can reduce biases in datasets. For instance, reweighing and resampling can balance skewed representations of different groups: reweighing assigns each training instance a weight so that group membership and outcome are statistically independent in the weighted data. Similarly, postprocessing approaches, such as group-specific decision thresholds, can move outcomes closer to parity without altering the underlying model.
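Both ideas can be sketched in a few lines. The first function below computes reweighing weights in the standard form, expected frequency of a (group, label) pair under independence divided by its observed frequency. The second applies a separate decision threshold per group to model scores. This is a minimal sketch on made-up toy data; the function names and the threshold values are assumptions for illustration, not a specific library's API.

```python
from collections import Counter


def reweighing_weights(groups, labels):
    """Instance weights w(g, y) = P(g) * P(y) / P(g, y), estimated from counts.

    In the weighted data, group membership and label are independent.
    """
    n = len(labels)
    group_counts = Counter(groups)
    label_counts = Counter(labels)
    joint_counts = Counter(zip(groups, labels))
    return [
        (group_counts[g] * label_counts[y]) / (n * joint_counts[(g, y)])
        for g, y in zip(groups, labels)
    ]


def apply_group_thresholds(scores, groups, thresholds):
    """Turn scores into 0/1 decisions using a per-group threshold."""
    return [int(score >= thresholds[group]) for score, group in zip(scores, groups)]


if __name__ == "__main__":
    toy_groups = ["a", "a", "a", "a", "b", "b", "b", "b"]
    toy_labels = [1, 1, 1, 0, 1, 0, 0, 0]
    # Over-represented pairs such as ("a", 1) get weights below 1,
    # under-represented pairs such as ("b", 1) get weights above 1.
    print(reweighing_weights(toy_groups, toy_labels))

    toy_scores = [0.9, 0.7, 0.6, 0.4, 0.65, 0.5, 0.3, 0.2]
    # Hypothetical thresholds chosen by hand for this toy example.
    print(apply_group_thresholds(toy_scores, toy_groups, {"a": 0.65, "b": 0.45}))
```

The weights would typically be passed to a learner that accepts per-sample weights, while the thresholds would be tuned on held-out data rather than set by hand.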

However, challenges remain. The effectiveness of these strategies often depends on the specific context and dataset. A technique that works well for credit scoring models may not transfer directly to healthcare diagnostics. This underscores the need for tailored approaches when addressing bias in different domains.

The growing emphasis on fairness in machine learning reflects a broader recognition of its societal impact. Innovations in detection and mitigation tools provide practical solutions for industries seeking to adopt ML systems responsibly. As these technologies continue to evolve, collaboration between researchers, policymakers, and industry leaders will be crucial to ensuring that ML systems both perform well and promote equitable outcomes.

In conclusion, addressing bias in machine learning is not just a technical challenge but a societal imperative. By leveraging advanced tools and methodologies, organisations can build more trustworthy and inclusive AI systems, fostering public confidence and driving sustainable innovation.

👉 More information

🗞 MANILA: A Low-Code Application to Benchmark Machine Learning Models and Fairness-Enhancing Methods

🧠 DOI: https://doi.org/10.48550/arXiv.2504.20907