Measurements are central to quantum computation, and they can also create complex connections between quantum bits, known as entanglement, that are difficult to harness. Wanda Hou from the University of California, San Diego, Samuel J. Garratt from the University of California, Berkeley and Princeton University, and Norhan M. Eassa from Purdue University, alongside colleagues, now present a new method for detecting entanglement induced by many quantum measurements. The team created entangled states of superconducting qubits, performed many measurements on them, and then used unsupervised machine learning to model the resulting post-measurement states, revealing long-range entanglement that would otherwise stay hidden. The approach not only characterises this entanglement but also identifies a critical point beyond which a classical computer struggles to predict the quantum behaviour accurately, hinting at a fundamental change in the system's properties and pointing towards improved quantum control strategies.

Repeated Quantum Measurements and System Dynamics

Approximating Complex Quantum Evolution Using Machine Learning

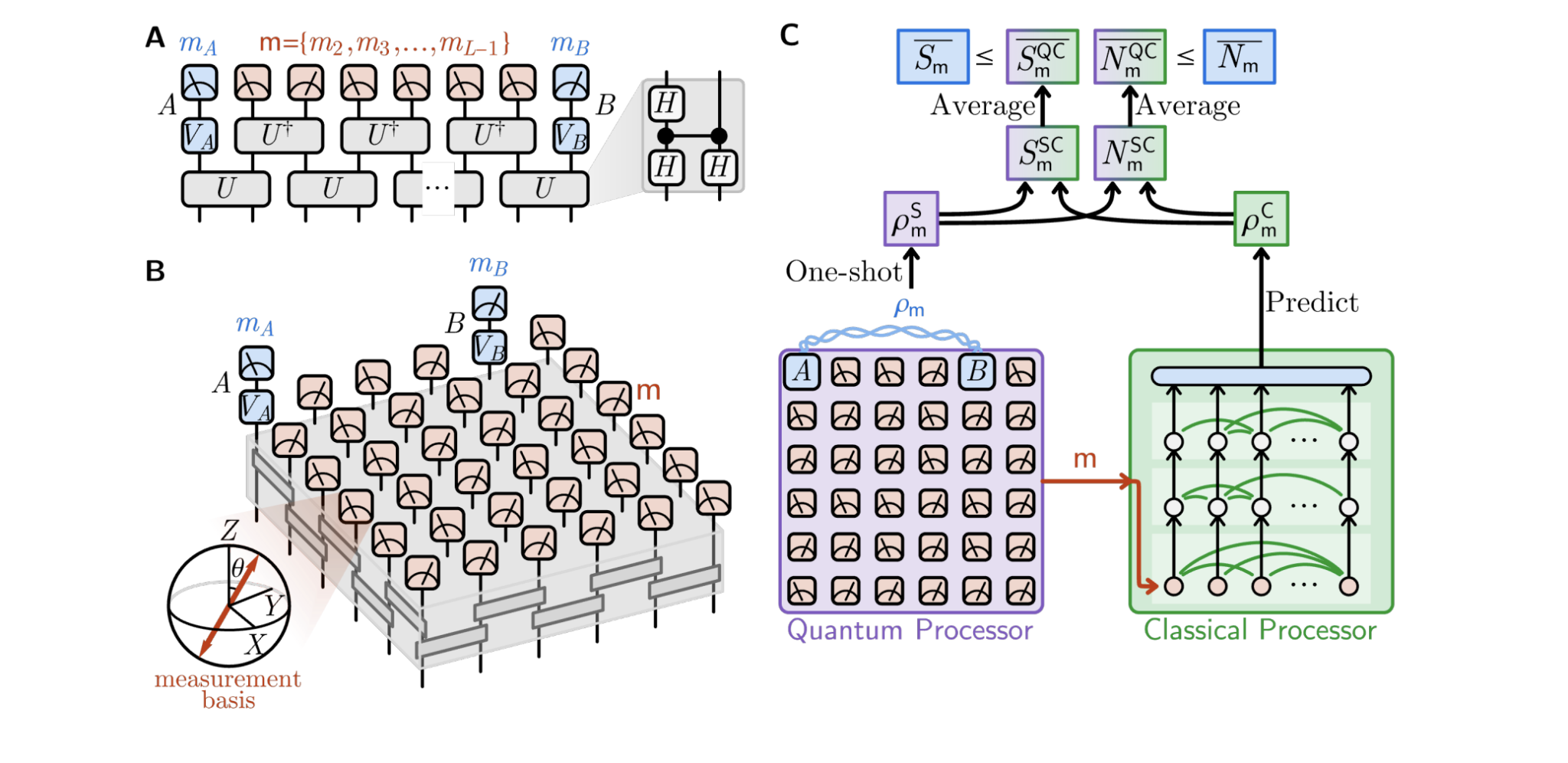

Scientists are investigating what happens when many measurements are performed on a quantum system, a process that is crucial for quantum error correction and for preparing complex quantum states. Because each measurement outcome is random, the state left behind depends on a large number of outcomes, and the resulting dynamics are hard to analyse. To tackle this, the researchers use machine learning, specifically neural networks, to predict the state of the system after the measurements. This avoids computationally expensive simulations and offers a scalable way to analyse complex measurement processes. The method involves training a neural network to map the recorded measurement outcomes to the corresponding post-measurement state of the remaining qubits.

The network learns to approximate how the post-measurement state depends on the measurement outcomes, capturing the underlying dynamics and predicting the final state even when many measurements are involved. A key contribution of this work is a machine learning framework that can handle the high-dimensional state spaces of many-body quantum systems. This lets scientists explore measurement dynamics in regimes inaccessible to traditional numerical methods and identify the effects of measurement-induced entanglement and decoherence, providing new insights into quantum information processing and many-body physics.
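To make concrete what "the post-measurement state as a function of the measurement outcomes" means, the following Python sketch computes such a function exactly for a toy system. It is not the authors' setup: it assumes a five-qubit one-dimensional cluster state (CZ gates between neighbours applied to |+⟩ on every qubit), measures the three middle qubits in the X basis, and enumerates all eight possible records. The helpers `cluster_chain`, `apply_hadamard` and `end_state` are hypothetical names introduced here for illustration.

```python
import itertools
import math


def cluster_chain(n):
    """Amplitudes of an n-qubit linear cluster state: CZ on each neighbouring pair applied to |+>^n.

    Qubit k is stored in bit (n - 1 - k) of the basis index, so qubit 0 is the most significant bit.
    """
    amp = 1 / math.sqrt(2 ** n)
    state = []
    for idx in range(2 ** n):
        bits = [(idx >> (n - 1 - k)) & 1 for k in range(n)]
        # Each CZ contributes a factor -1 when both neighbouring qubits are |1>.
        phase = sum(bits[k] * bits[k + 1] for k in range(n - 1))
        state.append((-1) ** phase * amp)
    return state


def apply_hadamard(state, n, k):
    """Apply a Hadamard to qubit k, which turns an X-basis measurement into a Z-basis one."""
    out = list(state)
    mask = 1 << (n - 1 - k)
    for idx in range(2 ** n):
        if not idx & mask:
            a0, a1 = state[idx], state[idx | mask]
            out[idx] = (a0 + a1) / math.sqrt(2)
            out[idx | mask] = (a0 - a1) / math.sqrt(2)
    return out


def end_state(record, n=5):
    """State of qubits 0 and n-1 after X-measuring qubits 1..n-2 with outcome bits `record`.

    A bit of 0 means the X measurement returned +1. Returns the normalised amplitudes
    [a00, a01, a10, a11] of the two end qubits and the probability of the record.
    """
    state = cluster_chain(n)
    for k in range(1, n - 1):
        state = apply_hadamard(state, n, k)
    ends = [0.0] * 4
    for idx, a in enumerate(state):
        bits = [(idx >> (n - 1 - k)) & 1 for k in range(n)]
        if tuple(bits[1:-1]) == tuple(record):
            ends[2 * bits[0] + bits[-1]] = a
    prob = sum(a * a for a in ends)
    return [a / math.sqrt(prob) for a in ends], prob


for record in itertools.product((0, 1), repeat=3):
    (a00, a01, a10, a11), prob = end_state(record)
    concurrence = 2 * abs(a00 * a11 - a01 * a10)  # formula for a pure two-qubit state
    zz = a00 ** 2 - a01 ** 2 - a10 ** 2 + a11 ** 2  # <Z_0 Z_4>; amplitudes are real here
    print(record, round(prob, 3), round(concurrence, 3), round(zz, 3))
```

Every record should occur with probability 1/8 and leave the two end qubits maximally entangled (concurrence 1), but the sign of ⟨Z_0 Z_4⟩ flips with the parity of the outcomes on qubits 1 and 3, several sites away. Averaged over records the correlation vanishes, which is why the dependence on the record has to be learned rather than read off directly.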

Distant Measurements Reveal Entanglement Sensitivity

Detecting Nonlocal Entanglement in Qubit Arrays

This research shows how machine learning can detect entanglement in two-dimensional arrays of qubits, even when many measurements with random outcomes are involved. The scientists demonstrate that the post-measurement state of the unmeasured qubits depends strongly on the outcomes of measurements on distant qubits. This confirms nonlocal entanglement: the state is not determined by immediate neighbours alone but is correlated across the entire array, which indicates that the neural network is capturing genuine entanglement rather than merely local correlations. The researchers also analysed the statistics of the measurement outcomes to check whether the neural network was simply memorising frequently observed states.

They found that the distribution of measurement outcomes is broad and that no single outcome is observed many times, so the network cannot rely on memorisation and must generalise from a diverse set of outcomes to predict the post-measurement state accurately. These findings validate machine learning as a way to detect and characterise entanglement in complex quantum systems. Because distant measurements can strongly influence the state of the remaining qubits, the approach offers a potential path towards studying entanglement in larger and more complex systems.
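Why a broad outcome distribution rules out memorisation can be checked with a few lines of Python. This is a sketch under a stated assumption: every record of `n_measured` bits is taken to be equally likely (as it is, for example, for X-basis measurements on the interior qubits of a one-dimensional cluster state), and the shot and qubit counts are arbitrary illustrative choices, not the experiment's. The function name `outcome_statistics` is hypothetical.

```python
import random
from collections import Counter


def outcome_statistics(n_measured, n_shots, seed=0):
    """Return (number of distinct records, largest count of any single record).

    Hypothetical setting: every record of n_measured bits is equally likely.
    """
    rng = random.Random(seed)
    counts = Counter(rng.getrandbits(n_measured) for _ in range(n_shots))
    return len(counts), max(counts.values())


# 20 measured qubits give 2**20 (about a million) possible records; take 10,000 shots.
distinct, most_repeated = outcome_statistics(n_measured=20, n_shots=10_000)
print(distinct, most_repeated)

# Birthday-problem estimate of how many pairs of shots share the same record.
print(10_000 ** 2 / 2 ** (20 + 1))
```

Almost every record appears only once, so a model that stored record-by-record answers would have essentially nothing to reuse on a new record. The estimate n_shots² / 2^(n_measured + 1), about 48 coinciding pairs here, shows how quickly repeats disappear as the number of measured qubits grows.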

Machine Learning Reveals Hidden Quantum Entanglement

Unsupervised Methods for Long-Range Entanglement Detection

This research demonstrates a new method for detecting long-range entanglement created by performing measurements on qubits, even when the measurement outcomes are complex and unpredictable. The team uses unsupervised machine learning to build computational models of the post-measurement states, revealing entanglement that would otherwise be difficult to observe, and establishes a link between this entanglement and a transition in a classical agent's ability to model the experimental data. The findings suggest that machine learning can provide a scalable way to detect measurement-induced entanglement, with a potential advantage over traditional post-selection techniques. Accurately reconstructing post-measurement states becomes increasingly challenging as the system size grows; future work may therefore explore this machine learning approach in more complex systems and apply it to broader problems in quantum control, building on the demonstrated link between entanglement and the capacity of classical models.
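How a classical model can stand in for post-selection can be illustrated with a deliberately tiny example. The sketch below assumes a three-qubit cluster chain whose middle qubit is measured in the X basis and whose end qubits are measured in the Z basis. A hypothetical `model_prediction` plays the role of a trained network and predicts the end-qubit correlation ⟨Z0 Z2⟩ for each record. Averaging z0·z2 over all shots hides the correlation, while averaging z0·z2 weighted by the model's prediction recovers it without sorting shots by record. This cross-correlation estimator is a generic construction chosen for illustration; the article does not spell out the paper's exact estimator.

```python
import itertools
import math
import random


def sample_chain_shots(n_shots, seed=0):
    """Sample shots from a 3-qubit cluster chain (CZ_01 CZ_12 |+++>).

    The middle qubit is measured in the X basis and both end qubits in the Z basis.
    Each shot is (m, z0, z2): m = 0 means X = +1, and z0, z2 are Z eigenvalues (+1 or -1).
    """
    rng = random.Random(seed)
    amp = {b: (-1) ** (b[0] * b[1] + b[1] * b[2]) / math.sqrt(8)
           for b in itertools.product((0, 1), repeat=3)}
    outcomes, weights = [], []
    for b0, m, b2 in itertools.product((0, 1), repeat=3):
        # A Hadamard on the middle qubit maps its X basis onto the Z basis.
        a = (amp[(b0, 0, b2)] + (-1) ** m * amp[(b0, 1, b2)]) / math.sqrt(2)
        outcomes.append((m, 1 - 2 * b0, 1 - 2 * b2))
        weights.append(a * a)
    return rng.choices(outcomes, weights=weights, k=n_shots)


def model_prediction(m):
    """Hypothetical classical model: predicted <Z0 Z2> given the middle outcome m."""
    return 1 - 2 * m


shots = sample_chain_shots(4000)
# Ignoring the record: the end-qubit correlation averages to roughly zero.
naive = sum(z0 * z2 for _, z0, z2 in shots) / len(shots)
# Weighting each shot by the model's prediction recovers it; no record needs to repeat.
cross = sum(z0 * z2 * model_prediction(m) for m, z0, z2 in shots) / len(shots)
print(round(naive, 3), round(cross, 3))
```

If the model were uninformative (always predicting 0), the weighted average would vanish, so the strength of the signal tracks how well the classical model captures the post-measurement states. Post-selection would instead require each of the 2^N records of N measured qubits to recur many times.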

🗞 Machine learning the effects of many quantum measurements

🧠 ArXiv: https://arxiv.org/abs/2509.08890

The development of machine learning for quantum characterization goes beyond mere data fitting: it requires high-dimensional state representations, such as tensor network decompositions or reduced density matrices, as input features. Training the network involves optimizing weights across diverse measurement protocols so that it maps the time evolution operator $U$ to the output state $\rho'$, circumventing the exponential scaling inherent in full quantum state tomography. This approach provides a crucial pathway toward modeling mixed states and irreversible dynamics that are experimentally challenging to resolve fully.
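For unitary evolution, the relation between $U$ and $\rho'$ is $\rho' = U \rho U^\dagger$, and a reduced density matrix follows from a partial trace. The pure-Python sketch below assumes a two-qubit example (a Hadamard followed by a CNOT acting on |00⟩), chosen only for illustration. It builds $\rho'$, traces out the second qubit, and reports the purity of what remains. It also counts the 4^n − 1 real parameters that full tomography of a general n-qubit state must fix, which is the exponential cost a learned representation aims to sidestep. Measurements themselves are not unitary, so this covers only the coherent part of the evolution; all helper names are hypothetical.

```python
import math


def matmul(a, b):
    """Product of two matrices stored as nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b))) for j in range(len(b[0]))]
            for i in range(len(a))]


def dagger(a):
    """Conjugate transpose."""
    return [[a[j][i].conjugate() for j in range(len(a))] for i in range(len(a[0]))]


def kron(a, b):
    """Kronecker (tensor) product; the first factor acts on the first qubit."""
    return [[a[i][j] * b[k][l] for j in range(len(a[0])) for l in range(len(b[0]))]
            for i in range(len(a)) for k in range(len(b))]


def partial_trace_second(rho):
    """Reduced density matrix of qubit A from a two-qubit density matrix (traces out B)."""
    return [[rho[2 * i][2 * j] + rho[2 * i + 1][2 * j + 1] for j in range(2)] for i in range(2)]


def tomography_parameters(n):
    """Real parameters that fix a general n-qubit density matrix: 4**n - 1."""
    return 4 ** n - 1


s = 1 / math.sqrt(2)
H = [[s, s], [s, -s]]
I2 = [[1, 0], [0, 1]]
CNOT = [[1, 0, 0, 0], [0, 1, 0, 0], [0, 0, 0, 1], [0, 0, 1, 0]]

U = matmul(CNOT, kron(H, I2))  # Hadamard on qubit A, then CNOT from A to B
rho = [[1 if i == j == 0 else 0 for j in range(4)] for i in range(4)]  # |00><00|
rho_out = matmul(matmul(U, rho), dagger(U))  # rho' = U rho U^dagger
rho_a = partial_trace_second(rho_out)
purity = sum(rho_a[i][k] * rho_a[k][i] for i in range(2) for k in range(2))

print([[round(x, 3) for x in row] for row in rho_a])
print(round(purity, 3), tomography_parameters(2), tomography_parameters(20))
```

A purity of 0.5 for one qubit of a pure two-qubit state signals maximal entanglement, while the tomography count for 20 qubits already exceeds 10^12.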

A significant technical challenge remains the integration of environmental noise, or decoherence, into the predictive model. Real-world superconducting qubits are coupled to their environment and lose energy through dissipation, which rapidly degrades fragile entangled states. The current ML framework must therefore evolve to incorporate quantum noise channels, potentially via methods such as variational quantum machine learning, so that it can distinguish genuine entanglement signatures from environment-induced decay and measurement noise during iterative characterization.
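Why decay has to be separated from genuine entanglement can be seen with a deliberately simple noise model, assumed here for illustration only: independent depolarizing noise of strength q on each qubit of a Bell pair. The pure-Python sketch applies the channel through its Pauli Kraus operators and evaluates the negativity with the Bell-diagonal shortcut max(0, λ_max − 1/2), which is valid here because local Pauli noise maps Bell states onto Bell states. The noise model and all function names are hypothetical and are not taken from the paper or from device characterisation.

```python
import math

I2 = [[1, 0], [0, 1]]
X = [[0, 1], [1, 0]]
Y = [[0, -1j], [1j, 0]]
Z = [[1, 0], [0, -1]]
S = 1 / math.sqrt(2)
# Bell basis |Phi+>, |Phi->, |Psi+>, |Psi-> as real vectors over |00>, |01>, |10>, |11>.
BELL = [[S, 0, 0, S], [S, 0, 0, -S], [0, S, S, 0], [0, S, -S, 0]]


def matmul(a, b):
    return [[sum(a[i][k] * b[k][j] for k in range(len(b))) for j in range(len(b[0]))]
            for i in range(len(a))]


def dagger(a):
    return [[a[j][i].conjugate() for j in range(len(a))] for i in range(len(a[0]))]


def kron(a, b):
    return [[a[i][j] * b[k][l] for j in range(len(a[0])) for l in range(len(b[0]))]
            for i in range(len(a)) for k in range(len(b))]


def depolarize(rho, q, qubit):
    """Single-qubit depolarizing channel rho -> (1 - q) rho + q I/2 on `qubit` (0 or 1)."""
    out = [[(1 - 3 * q / 4) * x for x in row] for row in rho]
    for p in (X, Y, Z):
        op = kron(p, I2) if qubit == 0 else kron(I2, p)
        term = matmul(matmul(op, rho), dagger(op))
        out = [[o + q / 4 * t for o, t in zip(orow, trow)] for orow, trow in zip(out, term)]
    return out


def noisy_bell_state(q):
    """|Phi+><Phi+| with depolarizing noise of strength q applied to both qubits."""
    phi = BELL[0]
    rho = [[x * y for y in phi] for x in phi]
    return depolarize(depolarize(rho, q, 0), q, 1)


def bell_negativity(rho):
    """Negativity of a Bell-diagonal state: max(0, largest Bell-state weight - 1/2)."""
    weights = [sum(v[i] * rho[i][j] * v[j] for i in range(4) for j in range(4)).real
               for v in BELL]
    return max(0.0, max(weights) - 0.5)


def zz_correlation(rho):
    """<Z0 Z1>: +1 weight on |00>, |11> and -1 weight on |01>, |10>."""
    return (rho[0][0] - rho[1][1] - rho[2][2] + rho[3][3]).real


for q in (0.0, 0.2, 0.4, 0.45, 1.0):
    rho = noisy_bell_state(q)
    print(q, round(zz_correlation(rho), 3), round(bell_negativity(rho), 4))
```

Under this model the ZZ correlation equals (1 − q)² and stays positive for every q < 1, but the negativity drops to zero once (1 − q)² ≤ 1/3, that is for q ≥ 1 − 1/√3 ≈ 0.42. A correlation signal alone can therefore outlive the entanglement it appears to indicate.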

Furthermore, the scalability of these models hinges on efficient generalization across different qubit architectures and connectivity topologies. While the current work demonstrates the method on fixed 2D arrays, extending it to arbitrary graph states or larger lattice geometries will require new regularization techniques. Future research must focus on modular network designs that can predict entanglement properties for non-local Hamiltonian interactions, paving the way for robust quantum error correction codes.
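Graph states make the dependence on topology explicit: the state is fixed entirely by an edge list, so moving between architectures changes only that input. The pure-Python sketch below, with hypothetical helper names and example graphs chosen for illustration, builds a graph state for several topologies and checks that each vertex stabilizer X_v ∏ Z_u (over neighbours u of v) has expectation +1. A model meant to generalise across connectivities would plausibly need this kind of edge information as input rather than assume a fixed 2D array.

```python
import math


def graph_state(n, edges):
    """Amplitudes of prod_{(i, j) in edges} CZ_ij |+>^n; qubit 0 is the most significant bit."""
    amp = 1 / math.sqrt(2 ** n)
    state = []
    for idx in range(2 ** n):
        bits = [(idx >> (n - 1 - k)) & 1 for k in range(n)]
        # Each CZ gate adds a -1 phase when both of its qubits are |1>.
        phase = sum(bits[i] * bits[j] for i, j in edges)
        state.append((-1) ** phase * amp)
    return state


def stabilizer_expectation(state, n, edges, v):
    """<psi| X_v prod_{u adjacent to v} Z_u |psi>; equals +1 for the graph state of `edges`."""
    neighbours = {j for i, j in edges if i == v} | {i for i, j in edges if j == v}
    flip = 1 << (n - 1 - v)
    total = 0.0
    for idx, a in enumerate(state):
        # Z_u contributes (-1)**bit_u of the basis state; X_v then flips bit v.
        sign = (-1) ** sum((idx >> (n - 1 - u)) & 1 for u in neighbours)
        total += state[idx ^ flip] * sign * a  # amplitudes are real, so no conjugate needed
    return total


# The same code handles any topology: only the edge list changes.
topologies = {
    "chain": (4, [(0, 1), (1, 2), (2, 3)]),
    "ring": (5, [(0, 1), (1, 2), (2, 3), (3, 4), (4, 0)]),
    "star": (4, [(0, 1), (0, 2), (0, 3)]),
    "2x2 grid": (4, [(0, 1), (0, 2), (1, 3), (2, 3)]),
}
for name, (n, edges) in topologies.items():
    psi = graph_state(n, edges)
    print(name, [round(stabilizer_expectation(psi, n, edges, v), 6) for v in range(n)])
```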