Researchers are tackling the challenge of building robust and adaptable robot learning systems by moving beyond abstract prompting and focusing on physical interaction. Zichen Jeff Cui from New York University, Omar Rayyan from University of California, Los Angeles, Haritheja Etukuru from University of California, Berkeley, and colleagues present Contact-Anchored Policies (CAP), an approach that conditions robot actions on points of physical contact rather than on broad, abstract instructions. The work structures policies as a library of modular utility models, which supports rapid development and refinement through a real-to-sim iteration cycle built on the newly created EgoGym benchmark. In zero-shot evaluations on fundamental manipulation skills, CAP outperforms existing methods by 56% while using limited demonstration data, pointing towards more reliable and generalisable robotic systems.

This innovation addresses a fundamental limitation in current robot learning paradigms, where abstract language instructions often hinder the development of robust physical understanding.

CAP structures policies as a library of modular utility models, enabling a streamlined real-to-sim iteration cycle for rapid refinement of models and datasets. The researchers built EgoGym, a lightweight simulation benchmark, to identify failure modes efficiently and speed up development before real-world deployment.

By conditioning on physical contact, CAP demonstrates an ability to generalise to new environments and robotic embodiments without requiring further training, achieving success on three core manipulation skills using only 23 hours of demonstration data. Performance evaluations reveal that CAP surpasses state-of-the-art vision-language-action models in zero-shot assessments by 56%.

The research team reports 83% success on the ‘Pick’ task, alongside figures of 90% and 25% listed under ‘Pi’ and ‘Droid’, and describes the results as a significant improvement over existing methods. The gains are attributed to a shift from language-based instructions to direct physical contact information, with observations and actions both modelled relative to the robot’s interactions with its surroundings.

The modular design of CAP allows for efficient training and deployment across diverse robotic platforms, as demonstrated by successful implementation on handheld grippers. Furthermore, the development of EgoGym provides a valuable tool for iterative model improvement, focusing on object and scene diversity to enhance generalisation capabilities. All model checkpoints, code, hardware specifications, simulation environments, and datasets will be made publicly available to foster further research and development in the field.

Contact-anchored policy learning via real-to-sim iteration and EgoGym benchmarking

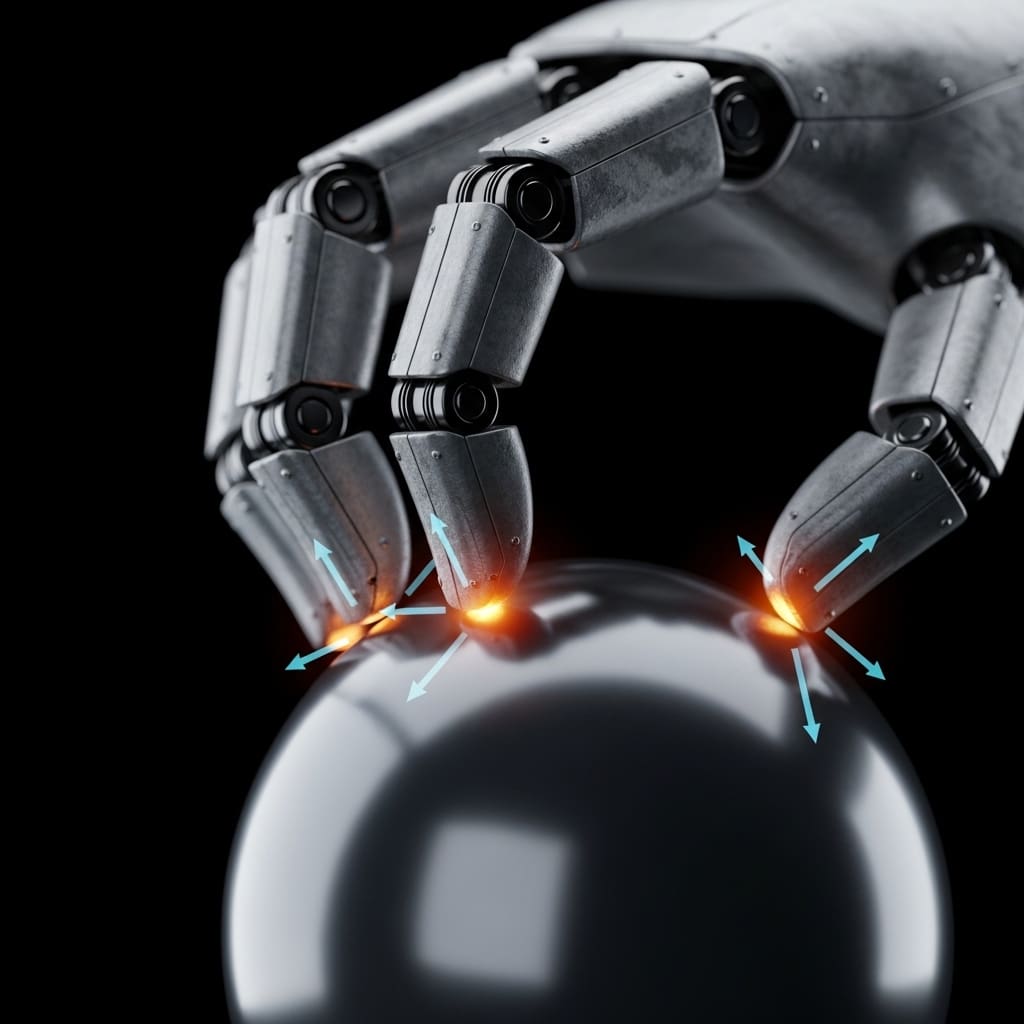

Researchers introduced Contact-Anchored Policies (CAP), a novel approach to robot learning that centres on conditioning policies with points of physical contact in space rather than relying on language prompts. This work replaces traditional conditioning methods with precise spatial coordinates representing contact points, thereby addressing the imprecision inherent in natural language instructions for robotic tasks.

CAP is structured as a library of modular utilities, enabling a cyclical process of real-to-sim iteration for rapid refinement of policies and datasets. To facilitate this iterative process, the team developed EgoGym, a lightweight simulation benchmark designed to quickly identify failure modes and improve model performance.

EgoGym prioritises object and scene diversity over photorealism, allowing for efficient evaluation of generalisation capabilities under distribution shift. The study trained CAP using only 23 hours of human demonstration data on three fundamental manipulation skills: picking up objects (Pick), opening doors and drawers (Open), and closing them (Close).

Performance was assessed through zero-shot evaluations in novel environments and with unseen objects, demonstrating a 56% improvement over state-of-the-art vision-language-action models, such as π0.5. The policies were trained using data collected from a handheld gripper, facilitating immediate deployment on multiple robot embodiments without requiring further adaptation.

Data labelling involved identifying and recording contact point coordinates, which were then used to train the policy head alongside vision tokens and frame sequences. This approach allows the model to learn a direct mapping from observations and contact information to appropriate actions, resulting in robust and generalisable robotic behaviour.
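As a rough illustration of the conditioning described above, the sketch below expresses a labelled contact point in the current camera frame and appends it to the policy input as one extra token. The function names, the camera-to-world pose convention, and the zero-padded token layout are all assumptions made for illustration; the article does not specify how the authors encode the anchor.

```python
from typing import List, Sequence

Vec3 = Sequence[float]
Mat3 = Sequence[Sequence[float]]


def world_to_camera(point_w: Vec3, rot_wc: Mat3, trans_wc: Vec3) -> List[float]:
    """Express a world-frame point in the camera frame.

    rot_wc and trans_wc are assumed to describe the camera pose in the
    world frame (camera-to-world), so the inverse is p_c = R^T (p_w - t).
    """
    diff = [point_w[i] - trans_wc[i] for i in range(3)]
    # Multiplying by R^T means taking dot products with the columns of R.
    return [sum(rot_wc[r][c] * diff[r] for r in range(3)) for c in range(3)]


def build_policy_input(vision_tokens: List[List[float]], contact_c: Vec3) -> List[List[float]]:
    """Append the contact anchor as one extra token (hypothetical layout).

    Assumes every vision token has the same width, and that width is >= 3.
    """
    width = len(vision_tokens[0])
    contact_token = list(contact_c) + [0.0] * (width - 3)  # zero-pad to token width
    return vision_tokens + [contact_token]


# Toy usage: identity rotation, camera shifted 1 m along the world x-axis.
identity = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
anchor_c = world_to_camera([1.0, 2.0, 3.0], identity, [1.0, 0.0, 0.0])
policy_input = build_policy_input([[0.1, 0.2, 0.3, 0.4]], anchor_c)
```

Keeping the anchor in the camera frame means the same contact point yields a different input as the gripper moves, which is one plausible way for a policy to track its progress towards the contact.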

Contact-anchored policies achieve high-success manipulation with limited demonstration data

Success rates reached 90.4% ±6.0% on the Pick task using the Stretch robot with CAP and a retry mechanism. The research demonstrates a single-try performance of 83% for Pick, 81% for Open, and 96% for Close, showcasing robust zero-shot generalisation to unseen environments. Conditioning on physical contact and iterating through simulation enabled the Contact-Anchored Policies (CAP) to achieve these results using only 23 hours of demonstration data.
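The retry mechanism mentioned above can be pictured as a simple verify-and-retry loop. The sketch below is a minimal version under stated assumptions: `attempt` and `verify` are hypothetical callables standing in for one policy rollout and a success check, and the attempt limit of three is illustrative rather than taken from the paper.

```python
from typing import Callable


def run_with_retry(
    attempt: Callable[[], None],
    verify: Callable[[], bool],
    max_attempts: int = 3,
) -> int:
    """Run attempt() until verify() passes.

    Returns the number of attempts used, or 0 if every attempt failed.
    """
    for n in range(1, max_attempts + 1):
        attempt()      # one policy rollout (hypothetical callable)
        if verify():   # e.g. a check that the object is actually grasped
            return n
    return 0


# Toy stand-ins: the "policy" only succeeds on its second rollout.
state = {"rollouts": 0}


def fake_attempt() -> None:
    state["rollouts"] += 1


def fake_verify() -> bool:
    return state["rollouts"] >= 2


attempts_used = run_with_retry(fake_attempt, fake_verify)
```

Separating the rollout from the check keeps the single-try and with-retry figures directly comparable: the single-try number corresponds to `max_attempts=1`.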

Evaluations across five cabinet doors and five drawers on the Stretch 3 robot revealed a 91.0% ±5.3% success rate for the Open task and 98.0% ±3.0% for the Close task, both using CAP with retry functionality. The policy attempted to open and close each door and drawer for 10 trials, with the robot’s initial position randomised within a 16 × 11 cm (horizontal × vertical) region, for a total of 100 trials.
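The trial count above follows from (5 doors + 5 drawers) × 10 trials. The sketch below reproduces that arithmetic and shows one common way to attach a margin to a success rate, the normal approximation to a binomial proportion. The article does not say how the reported ± values were computed, so this interval is an assumption and is not expected to match the published figures exactly.

```python
import math
from typing import Tuple

doors, drawers, trials_each = 5, 5, 10
total_trials = (doors + drawers) * trials_each


def success_rate_with_margin(successes: int, trials: int, z: float = 1.96) -> Tuple[float, float]:
    """Return (rate, margin) using the normal approximation to a binomial proportion.

    z = 1.96 corresponds to an approximate 95% interval.
    """
    rate = successes / trials
    margin = z * math.sqrt(rate * (1.0 - rate) / trials)
    return rate, margin


# Illustrative input: 91 successes out of the 100 trials described above.
rate, margin = success_rate_with_margin(91, total_trials)
```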

Autonomous generation of contact prompts using Gemini Robotics-ER 1.5 yielded comparable performance, achieving 81% in Pick, 80% in Open, and 97% in Close, which supports the use of anchors generated by a vision-language model. Further testing on three other robot embodiments (Franka FR3, XArm 6, and Universal Robots UR3e) showed consistent performance, with success rates of 79.0% ±10.9%, 83.0% ±17.9%, and 70.0% ±15.2% respectively on the Pick task.
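To make the idea of a model-generated anchor concrete, the sketch below lifts a 2D image point to a 3D contact point using a depth value and the standard pinhole camera model. The article does not describe how the authors perform this step, so everything here is an assumption: the point is taken to arrive as (y, x) on a 0 to 1000 grid, and the intrinsics `fx`, `fy`, `cx`, `cy` and the depth reading are placeholder values.

```python
from typing import Tuple


def normalized_to_pixel(y_norm: float, x_norm: float, height: int, width: int,
                        scale: float = 1000.0) -> Tuple[float, float]:
    """Map a point given as (y, x) on a 0..scale grid to pixel coordinates (u, v)."""
    u = x_norm / scale * width
    v = y_norm / scale * height
    return u, v


def backproject(u: float, v: float, depth_m: float,
                fx: float, fy: float, cx: float, cy: float) -> Tuple[float, float, float]:
    """Pinhole back-projection of pixel (u, v) at depth_m metres into the camera frame."""
    x = (u - cx) * depth_m / fx
    y = (v - cy) * depth_m / fy
    return x, y, depth_m


# Toy usage: a point at the image centre of a 640 x 480 frame, 0.5 m away.
u, v = normalized_to_pixel(500.0, 500.0, height=480, width=640)
anchor_cam = backproject(u, v, depth_m=0.5, fx=600.0, fy=600.0, cx=320.0, cy=240.0)
```

A point at the principal point back-projects onto the optical axis, so the toy anchor lies straight ahead of the camera at the measured depth.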

These evaluations involved 10 trials per object across 10 unseen objects, randomized in position, and confirm the versatility of the policy beyond its initial training environment. External evaluations conducted by collaborators at Hello Robot, UCLA, and Ai2 largely aligned with internal results, with the Franka FR3 achieving 88% success, the XArm 6 achieving 79%, and the UR3e achieving 72% on the Pick task.

An iPhone application was also developed, deploying the 52 million parameter model for real-time inference and allowing users to interact with the policy via camera input and ARKit pose tracking. This application provides a means to visualize the policy’s behaviour in real-world scenes and emulate gripper motions, offering a preliminary assessment of its sensibility before robotic deployment.

Physical contact conditioning yields robust zero-shot manipulation performance

Contact-Anchored Policies (CAP) represent a new approach to robot learning that conditions policies on points of physical contact rather than abstract prompts. This conditioning strategy, combined with a library of modular utility models, enables generalisation to new environments and robot embodiments using only 23 hours of demonstration data.

CAP outperforms existing vision-language-action models in zero-shot evaluations by 56% across three fundamental manipulation skills. The system employs a real-to-sim iteration cycle facilitated by EgoGym, a lightweight simulation benchmark designed for rapid identification of failure modes and refinement of policies and datasets.

This allows for efficient development and deployment in real-world scenarios. Furthermore, CAP facilitates the chaining together of individual policies through tool calling, enabling complex, long-horizon manipulations. Limitations acknowledged by the researchers include the current system’s focus on single contact anchors and single-arm tasks.
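The chaining of individual policies through tool calling, mentioned above, can be sketched as a small registry of named skills executed in sequence. This is a toy illustration under stated assumptions: the skill names mirror the Pick, Open, and Close tasks, while `make_skill`, `run_plan`, and the anchor coordinates are hypothetical and do not reflect the authors' interface.

```python
from typing import Callable, Dict, List, Tuple

Anchor = Tuple[float, float, float]
Skill = Callable[[Anchor], bool]


def make_skill(name: str, log: List[str]) -> Skill:
    """Create a stand-in skill that records its call and reports success."""
    def skill(anchor: Anchor) -> bool:
        log.append(f"{name}@{anchor}")
        return True
    return skill


def run_plan(tools: Dict[str, Skill], plan: List[Tuple[str, Anchor]]) -> bool:
    """Execute (tool_name, anchor) steps in order, stopping at the first failure."""
    for tool_name, anchor in plan:
        if not tools[tool_name](anchor):
            return False
    return True


# Toy long-horizon task: open a drawer, pick an object from it, close the drawer.
call_log: List[str] = []
tools = {name: make_skill(name, call_log) for name in ("open", "pick", "close")}
plan = [
    ("open", (0.4, 0.0, 0.8)),
    ("pick", (0.5, 0.1, 0.7)),
    ("close", (0.4, 0.0, 0.8)),
]
plan_ok = run_plan(tools, plan)
```

Because each step is just a skill name plus a contact anchor, a planner only has to emit a short list of such pairs to compose a longer task.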

Future work will focus on extending CAP to handle multiple contact points and bimanual manipulation. Investigating the weighting of input modalities within CAP could also reveal insights into supervised policy learning. Finally, integrating the verification and retry process into an end-to-end reinforcement learning framework may improve the practicality of CAP for high-stakes applications.

👉 More information

🗞 Contact-Anchored Policies: Contact Conditioning Creates Strong Robot Utility Models

🧠 ArXiv: https://arxiv.org/abs/2602.09017