Google Research has unveiled a suite of new quantization algorithms designed to improve the efficiency of artificial intelligence systems, particularly in vector search and large language models. The techniques, TurboQuant, Quantized Johnson-Lindenstrauss (QJL), and PolarQuant, address a critical bottleneck in AI: the memory-intensive handling of high-dimensional vectors that represent complex information. They aim to compress vectors while minimizing performance loss, a goal that traditional quantization methods have struggled to reach because of their own memory overhead. “TurboQuant is a compression method that achieves a high reduction in model size with zero accuracy loss, making it ideal for supporting both key-value cache compression and vector search,” said Amir Zandieh, Research Scientist, and Vahab Mirrokni, VP and Google Fellow. The algorithms, to be presented at upcoming conferences, promise faster similarity searches and lower memory costs for a wide range of applications reliant on compression.

High-Dimensional Vectors and Key-Value Cache Bottlenecks

The growth of artificial intelligence is increasingly constrained by the volume of data required to operate complex models, rather than by algorithmic innovation. High-dimensional vectors, essential for representing intricate information like image features or semantic meaning, create significant bottlenecks in key-value caches, the rapid-access memory crucial for AI performance. These caches function as “digital cheat sheets,” storing frequently accessed data to avoid slow database searches, but their capacity is limited by the size of the vectors they hold. Google researchers Amir Zandieh and Vahab Mirrokni have introduced TurboQuant, a compression algorithm designed to alleviate this pressure, alongside supporting techniques Quantized Johnson-Lindenstrauss (QJL) and PolarQuant.

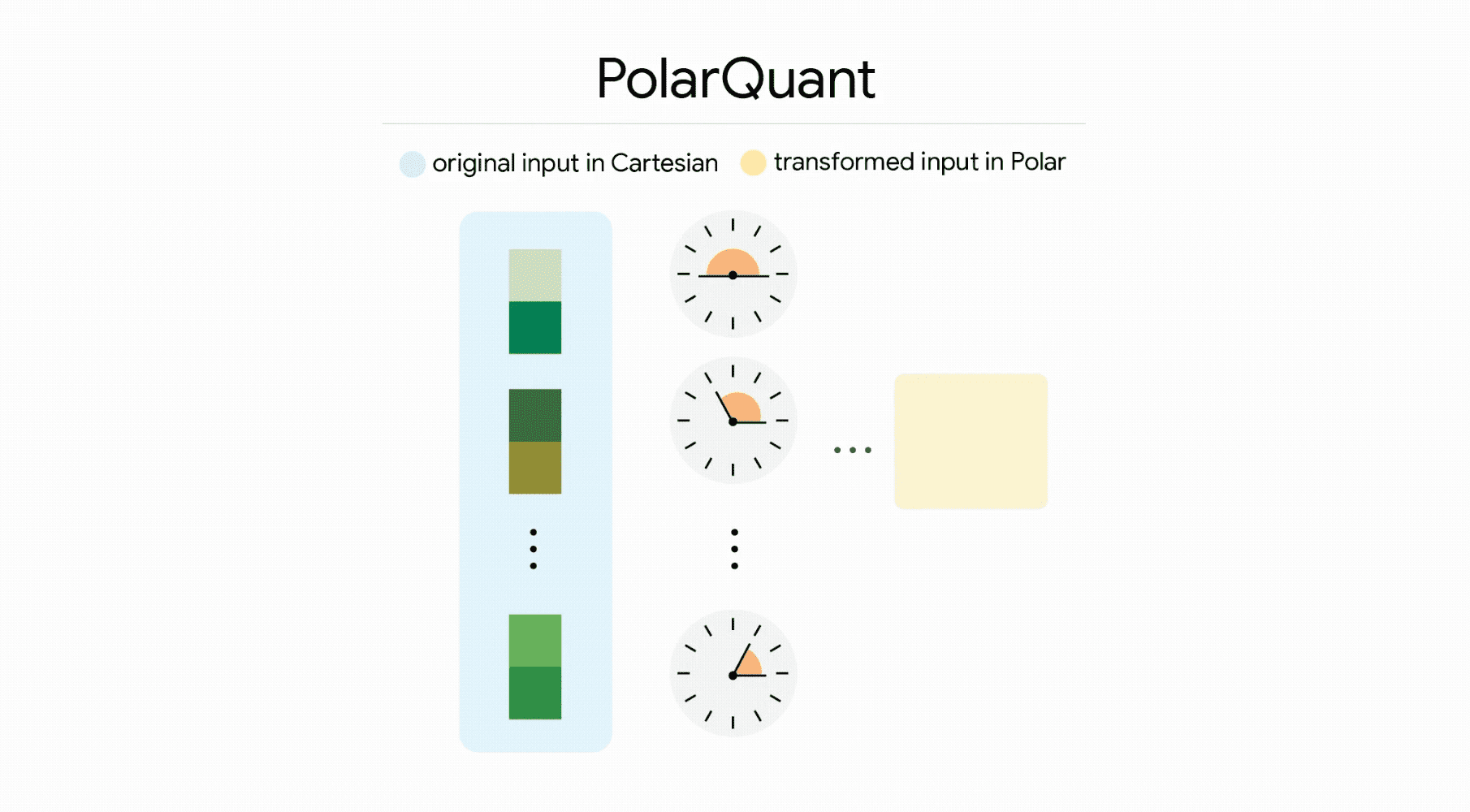

The core principle behind TurboQuant is to drastically reduce vector size without sacrificing accuracy, accomplished through a two-stage process beginning with data simplification via random rotation using the PolarQuant method. Zandieh and Mirrokni explain that this initial stage captures the core concept of the vector. The second stage employs the QJL algorithm, utilizing one bit to eliminate residual errors and refine the attention score, ensuring precise calculations. QJL itself leverages the Johnson-Lindenstrauss Transform, a mathematical technique that shrinks data while preserving essential relationships, reducing each vector number to a single sign bit, a process requiring zero memory overhead. PolarQuant reimagines how vectors are represented, shifting from standard Cartesian coordinates to polar coordinates.

This conversion, analogous to describing a location by distance and angle rather than by east-west and north-south offsets, allows the model to predict data boundaries, eliminating memory overhead. The researchers note that “Because the pattern of the angles is known and highly concentrated, the model no longer needs to perform the expensive data normalization step.” Testing across benchmarks like LongBench and Needle In A Haystack demonstrates TurboQuant’s effectiveness: it achieves optimal performance in both dot product distortion and recall while minimizing the key-value memory footprint. Notably, the algorithm can quantize the key-value cache to just three bits without requiring training or fine-tuning and without compromising model accuracy, while also achieving a faster runtime. On ‘needle-in-haystack’ tasks, it reduces key-value memory size by at least six times. This efficiency extends to high-dimensional vector search, where TurboQuant consistently achieves superior recall ratios compared to existing methods, establishing a benchmark for speed and precision.
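The Cartesian-to-polar idea can be illustrated with a toy sketch. This is not the published PolarQuant algorithm: the pairing of coordinates, the uniform angle grid, the full-precision radii, and the function names `random_rotation`, `polar_encode`, and `polar_decode` are all assumptions made for illustration. What it shows is the point about boundaries: because an angle always lies in a known range, the quantization grid can be fixed in advance and no per-block scale or offset has to be stored.

```python
import numpy as np

def random_rotation(dim, rng):
    """Return a random orthogonal matrix (Q factor of a Gaussian matrix)."""
    q, _ = np.linalg.qr(rng.standard_normal((dim, dim)))
    return q

def polar_encode(x, rotation, bits):
    """Rotate x, split it into coordinate pairs, keep radii, quantize angles."""
    pairs = (rotation @ x).reshape(-1, 2)          # dim must be even
    radius = np.hypot(pairs[:, 0], pairs[:, 1])    # kept in full precision here (assumption)
    angle = np.arctan2(pairs[:, 1], pairs[:, 0])   # always within [-pi, pi]
    levels = 2 ** bits
    step = 2 * np.pi / levels
    # Fixed grid over the whole circle: no per-block constants are needed.
    code = np.floor((angle + np.pi) / step).astype(np.int64) % levels
    return radius, code

def polar_decode(radius, code, rotation, bits):
    """Rebuild an approximation of x from radii and angle codes."""
    step = 2 * np.pi / 2 ** bits
    angle = -np.pi + (code + 0.5) * step           # centre of each angle bin
    pairs = np.stack([radius * np.cos(angle), radius * np.sin(angle)], axis=1)
    return rotation.T @ pairs.reshape(-1)          # undo the rotation

rng = np.random.default_rng(0)
rot = random_rotation(64, rng)
x = rng.standard_normal(64)
radius, code = polar_encode(x, rot, bits=4)
x_hat = polar_decode(radius, code, rot, bits=4)
print(np.linalg.norm(x - x_hat) / np.linalg.norm(x))
```

In this sketch each angle is off by at most half a grid step, so the relative reconstruction error is bounded by π divided by the number of angle levels, whatever the input vector looks like.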

TurboQuant Compression via PolarQuant and QJL Algorithms

The demand for increasingly complex artificial intelligence models is colliding with limits on memory and processing power, prompting researchers to explore compression techniques for high-dimensional vectors, the fundamental building blocks of AI understanding. Current vector quantization methods, while effective at reducing data size, often introduce memory overhead of their own because they must store quantization constants, which partially offsets the gains from compression. Google Research scientists are presenting TurboQuant, an algorithm designed to overcome this hurdle, alongside its core components, Quantized Johnson-Lindenstrauss (QJL) and PolarQuant. The work, slated for presentation at ICLR 2026 and AISTATS 2026, promises substantial improvements in both key-value cache performance and vector search. The team reports a faster runtime than the original LLMs (Gemma and Mistral) with four-bit TurboQuant, and superior recall ratios in high-dimensional vector search compared to existing methods. They also demonstrated a reduction of at least six times in key-value memory size during ‘needle-in-haystack’ tasks, and showed that TurboQuant can quantize the key-value cache to three bits without requiring training or fine-tuning and without compromising model accuracy.
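A little arithmetic shows why stored quantization constants matter. The figures below are illustrative assumptions, not numbers from the researchers: a conventional block-wise scheme that keeps one 16-bit scale and one 16-bit zero point per block, with a 3-bit payload per number. The helper name `effective_bits_per_value` is likewise hypothetical.

```python
def effective_bits_per_value(payload_bits, block_size,
                             constant_bits=16, constants_per_block=2):
    """Average bits stored per number when every block carries its own constants."""
    overhead = constants_per_block * constant_bits / block_size
    return payload_bits + overhead

for block_size in (16, 32, 64, 128):
    print(block_size, effective_bits_per_value(3, block_size))
# 16 5.0
# 32 4.0
# 64 3.5
# 128 3.25
```

Under these assumptions a nominal 3-bit format actually costs between 3.25 and 5 bits per number, which is the kind of overhead the article says TurboQuant is designed to avoid.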

Quantized Johnson-Lindenstrauss: Zero-Overhead, 1-Bit Error Correction

Google Research is pursuing greater artificial intelligence efficiency with an approach to data compression centered on the Quantized Johnson-Lindenstrauss (QJL) algorithm, a technique designed to minimize memory usage without sacrificing performance. Researchers Amir Zandieh and Vahab Mirrokni are spearheading this effort, which focuses on reducing the substantial memory demands of high-dimensional vectors used in large language models and vector search engines. QJL reduces each vector number to a single sign bit, and it preserves accuracy despite this extreme compression through its estimator: as Zandieh and Mirrokni put it, “QJL uses a special estimator that strategically balances a high-precision query with the low-precision, simplified data.” Complementing QJL is PolarQuant, the other algorithm used within the broader TurboQuant compression method, which tackles memory overhead by converting vector coordinates from standard Cartesian form to polar coordinates.
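The asymmetry between a high-precision query and 1-bit data can be sketched in a few lines. This is a minimal illustration under stated assumptions rather than the researchers' implementation: it uses a dense Gaussian projection matrix, stores one norm per key alongside the sign bits, and the function names `qjl_encode_key` and `qjl_inner_product` are hypothetical. The scaling factor comes from a standard identity for Gaussian vectors: the expected value of (s·q)·sign(s·k) is √(2/π)·⟨q, k⟩/‖k‖.

```python
import numpy as np

def qjl_encode_key(key, projection):
    """Keep only the sign of each projected coordinate, plus the key's norm."""
    signs = np.where(projection @ key >= 0, 1, -1).astype(np.int8)
    return signs, float(np.linalg.norm(key))       # storing the norm is an assumption of this sketch

def qjl_inner_product(query, signs, key_norm, projection):
    """Estimate <query, key> from a full-precision query and a 1-bit key."""
    m = projection.shape[0]
    projected_query = projection @ query           # the query is not quantized
    return float(np.sqrt(np.pi / 2) / m * key_norm * (projected_query @ signs))

rng = np.random.default_rng(0)
dim, m = 64, 20000                                 # m: number of random projections (illustrative)
projection = rng.standard_normal((m, dim))
key = rng.standard_normal(dim)
query = rng.standard_normal(dim)

signs, key_norm = qjl_encode_key(key, projection)
print("estimate:", qjl_inner_product(query, signs, key_norm, projection))
print("exact:   ", float(query @ key))
```

The estimate is unbiased, and its spread shrinks as the number of projections grows, which is why the query side can stay in full precision while the stored side is reduced to signs.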

According to the researchers, the approach allows their nearest-neighbor engines to operate with the efficiency of a 3-bit system while maintaining the precision of much heavier models.

6x Memory Reduction Achieved on Long-Context Benchmarks

This compression comes not from incremental improvements but from a fundamentally new approach to vector quantization, detailed in upcoming presentations at ICLR 2026 and AISTATS 2026. The efficiency rests on a suite of algorithms, TurboQuant, Quantized Johnson-Lindenstrauss (QJL), and PolarQuant, each addressing a specific facet of the compression challenge. The researchers tested these techniques across established long-context benchmarks (LongBench, Needle In A Haystack, ZeroSCROLLS, RULER, and L-Eval) using open-source LLMs such as Gemma and Mistral. Notably, the team achieved at least a six-fold reduction in key-value memory size on “needle-in-haystack” tasks, where models must locate a specific piece of information buried in a very long input, without any loss of accuracy. This level of compression is particularly impactful for vector search, a technology powering increasingly complex AI applications.

TurboQuant accomplishes this through a two-step process. The first step is high-quality compression using the PolarQuant method, which simplifies data geometry through random rotation; the second applies the QJL algorithm, using one bit to eliminate the residual error. The implications are far-reaching, promising faster, more efficient AI systems and new possibilities for resource-constrained applications. The team emphasizes that these methods are not merely practical solutions but “fundamental algorithmic contributions backed by strong theoretical proofs.”

This makes TurboQuant ideal for use cases like vector search, where it dramatically speeds up the index-building process.