Researchers are tackling the challenge of scaling text-to-image diffusion models to achieve higher quality and stability in image generation. Shengbang Tong, Boyang Zheng, and Ziteng Wang, all from New York University, alongside Bingda Tang, Nanye Ma, and Ellis Brown, demonstrate a significant advance using Representation Autoencoders (RAEs). Their work investigates whether RAEs, previously successful in image modelling, can be applied to large-scale, freeform text-to-image generation. It reports that RAEs not only outperform state-of-the-art VAEs across model sizes but also offer a simpler and more robust framework for building generative models. The research positions RAEs as a promising foundation for future multimodal models that can reason directly over generated images.

RAE Scaling Improves Text-to-Image Generation

Scientists have demonstrated a significant advancement in text-to-image (T2I) generation by successfully scaling Representation Autoencoders (RAEs) to large-scale, freeform image creation. The research team investigated whether the RAE framework, previously successful in diffusion modeling on ImageNet, could be adapted for more complex T2I tasks. They achieved this by scaling RAE decoders using a frozen representation encoder, SigLIP-2, and training on a diverse dataset comprising web images, synthetic data, and text renderings. This work reveals that while increasing scale generally improves image fidelity, carefully curated data composition is crucial for excelling in specific domains like text rendering.

The study rigorously stress-tested the original RAE design choices proposed for ImageNet, uncovering a surprising simplification at larger scales. Their analysis establishes that dimension-dependent noise scheduling remains critical for performance, but architectural complexities such as wide diffusion heads and noise-augmented decoding offer minimal benefits when models are scaled up. Building on this streamlined framework, researchers conducted a controlled comparison between RAE and the state-of-the-art FLUX VAE across diffusion transformer scales ranging from 0.5B to 9.8B parameters. Experiments show that RAEs consistently outperformed VAEs during pretraining, regardless of model size.

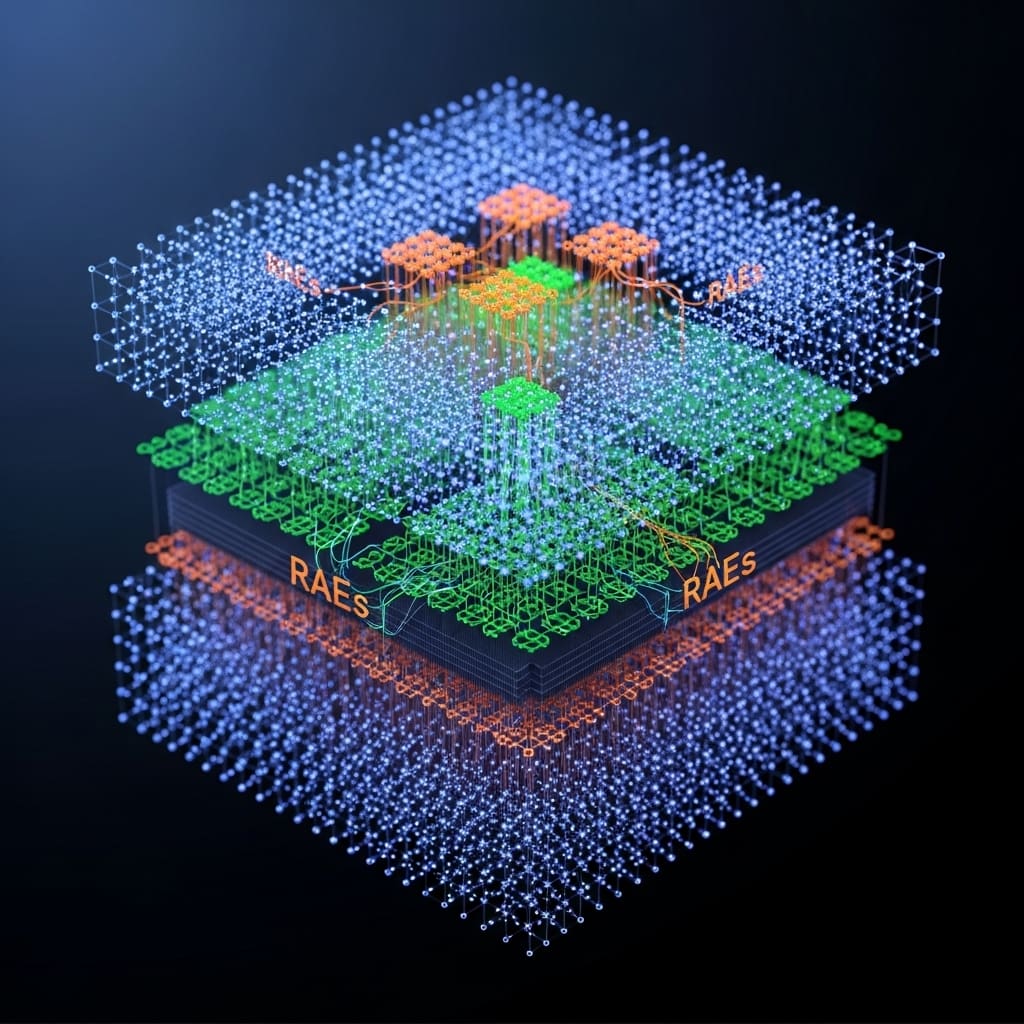

Furthermore, during finetuning on high-quality datasets, VAE-based models exhibited catastrophic overfitting after only 64 epochs, whereas RAE models maintained stability for up to 256 epochs and consistently achieved superior performance. Across all experiments, RAE-based diffusion models demonstrated faster convergence and better generation quality, establishing RAEs as a simpler and more robust foundation for large-scale T2I generation. As illustrated in Figure 1, a Qwen-2.5 1.5B + DiT 2.4B model using RAE converged 4.0× faster on GenEval and 4.6× faster on DPG-Bench than a VAE-based model.

Additionally, the research highlights a key advantage of RAEs: visual understanding and generation both take place within a shared representation space. This allows the multimodal model to reason directly over generated latents, opening new possibilities for unified models capable of more sophisticated image manipulation and understanding. Table 1 shows that training RAE decoders on diverse data, including web-scale images, synthetic data, and text renderings, improves reconstruction fidelity across domains, with text-rendering data proving particularly beneficial for accurate text reproduction.

RAE Decoder Scaling with SigLIP-2 for T2I

Scientists rigorously investigated Representation Autoencoders (RAEs) for large-scale text-to-image (T2I) generation, building on their prior success in ImageNet diffusion modeling. The research team scaled RAE decoders while keeping a frozen representation encoder, SigLIP-2, and trained these decoders on a diverse dataset consisting of web images, synthetic data, and rendered text. They found that increasing model and data scale improves overall image fidelity, but that carefully curated data composition is essential for achieving strong performance in specific domains, particularly text rendering.

This work conducted a systematic stress test of the original RAE design choices proposed for ImageNet, revealing that several architectural simplifications become possible at larger scales. While dimension-dependent noise scheduling remains critical for optimal performance, more complex components, such as wide diffusion heads and noise-augmented decoding, provide diminishing returns as model size increases.
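To make "dimension-dependent noise scheduling" concrete, here is a minimal sketch. It assumes the timestep-shift form commonly used for rectified-flow models, t' = αt / (1 + (α − 1)t) with α = sqrt(m / n), where m is the latent dimension and n a reference dimension. The article does not spell out the formula, so the function names, the reference dimension of 4096, the 1152-channel latent, and the convention that t = 1 means pure noise are all assumptions made for illustration.

```python
import math


def shift_factor(latent_dim: int, base_dim: int = 4096) -> float:
    """Return alpha = sqrt(m / n). base_dim = 4096 is an assumed reference size."""
    if latent_dim <= 0 or base_dim <= 0:
        raise ValueError("dimensions must be positive")
    return math.sqrt(latent_dim / base_dim)


def shift_timestep(t: float, alpha: float) -> float:
    """Map t in [0, 1] to a shifted timestep; alpha > 1 pushes t toward 1.

    Under the assumed convention (t = 1 is pure noise), a larger latent
    dimension therefore spends more of the schedule at high noise levels.
    """
    if not 0.0 <= t <= 1.0:
        raise ValueError("t must lie in [0, 1]")
    return alpha * t / (1.0 + (alpha - 1.0) * t)


# Hypothetical latent shape: 256 tokens x 1152 channels (illustrative only).
latent_dim = 256 * 1152
alpha = shift_factor(latent_dim)

for t in (0.0, 0.25, 0.5, 0.75, 1.0):
    print(f"t={t:.2f} -> shifted t={shift_timestep(t, alpha):.4f}")
```

The endpoints stay fixed at 0 and 1, and with α = 1 the mapping is the identity, so the shift only redistributes where training and sampling spend their timesteps.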

The study then performed a controlled comparison between RAE-based models and the state-of-the-art FLUX Variational Autoencoder (VAE) across diffusion model scales ranging from 0.5B to 9.8B parameters. Across all scales, RAEs consistently outperformed VAEs during pretraining, demonstrating faster convergence and stronger initial learning. A key distinction emerged during finetuning on high-quality datasets. VAE-based models exhibited catastrophic overfitting after approximately 64 epochs, whereas RAE models remained stable even after 256 epochs and achieved superior overall performance.

Training was conducted using the AdamW optimizer with a cosine learning-rate schedule and a warmup ratio of 0.03, using a global batch size of 1024. Models were trained for 4, 16, 64, and 256 epochs. The maximum learning rate was set to 5.66 × 10⁻⁵ for the Qwen2.5 language model backbone and 5.66 × 10⁻⁴ for the DiT diffusion head. Optimizer betas were set to (0.9, 0.999) for the language model and (0.9, 0.95) for the diffusion model to balance learning speed and stability. Architectural configurations varied across DiT models, with hidden sizes ranging from 1280 for DiT-0.5B to 4096 for DiT-9.8B, consistently using 32 attention heads and depths ranging from 16 to 32 layers.
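The optimizer settings above can be sketched as a small, framework-free schedule. This is a sketch under stated assumptions, not the authors' training code: the linear warmup shape, the decay to a minimum learning rate of zero, the total step count, and all names (`warmup_steps_for`, `lr_at_step`, `PARAM_GROUPS`) are hypothetical. Only the peak learning rates, betas, and the 0.03 warmup ratio come from the text.

```python
import math


def warmup_steps_for(total_steps: int, warmup_ratio: float = 0.03) -> int:
    """Number of warmup steps implied by the warmup ratio (at least 1)."""
    return max(1, round(total_steps * warmup_ratio))


def lr_at_step(step: int, total_steps: int, peak_lr: float,
               warmup_ratio: float = 0.03, min_lr: float = 0.0) -> float:
    """Linear warmup to peak_lr, then cosine decay to min_lr (both assumed)."""
    warmup = warmup_steps_for(total_steps, warmup_ratio)
    if step < warmup:
        return peak_lr * (step + 1) / warmup
    progress = (step - warmup) / max(1, total_steps - warmup)
    progress = min(progress, 1.0)
    return min_lr + 0.5 * (peak_lr - min_lr) * (1.0 + math.cos(math.pi * progress))


# Per-module settings reported in the text.
PARAM_GROUPS = {
    "language_model": {"peak_lr": 5.66e-5, "betas": (0.9, 0.999)},
    "diffusion_head": {"peak_lr": 5.66e-4, "betas": (0.9, 0.95)},
}

total_steps = 10_000  # hypothetical; depends on dataset size and epoch count

for name, cfg in PARAM_GROUPS.items():
    samples = [lr_at_step(s, total_steps, cfg["peak_lr"])
               for s in (0, 299, 5_000, 9_999)]
    print(name, cfg["betas"], [f"{lr:.2e}" for lr in samples])
```

Because the two modules share the schedule shape, the diffusion head simply runs at ten times the language model's learning rate at every step.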

Leveraging this simplified RAE framework, the team achieved faster convergence and improved generation quality, establishing RAEs as a more robust and efficient foundation for large-scale T2I generation. Extended finetuning experiments of up to 512 epochs further confirmed the resilience of RAE-based models, with GenEval scores remaining stable, while VAE-based models continued to suffer severe performance degradation. Because both visual understanding and image generation operate within a shared representation space, the resulting multimodal system can directly reason over generated latent representations, opening new opportunities for unified multimodal models and advanced multimodal reasoning.

RAE Scaling Boosts Text-to-Image Fidelity

Scientists achieved substantial improvements in text-to-image (T2I) generation by leveraging Representation Autoencoders (RAEs), demonstrating their superiority over Variational Autoencoders (VAEs) in large-scale models. The research focused on scaling RAE decoders using a frozen representation encoder, SigLIP-2, and training on diverse datasets including web imagery, synthetic data, and text-rendering examples. Experiments revealed that while increasing scale generally improves fidelity, carefully curated data composition is vital for achieving optimal results in specific domains like text rendering. The team measured reconstruction fidelity using rFID-50k across three datasets: ImageNet-1k, YFCC, and a held-out RenderedText set.

Results demonstrate that expanding decoder training beyond ImageNet to include web-scale and synthetic data yields only marginal gains on ImageNet itself, but provides moderate improvements on the more diverse YFCC dataset, indicating enhanced generalizability. However, generic web data proved insufficient for text reconstruction; substantial performance gains were only observed when incorporating text-specific training data, confirming the sensitivity of reconstruction quality to data composition. Specifically, training with Web + Synthetic data showed little improvement over ImageNet-only training, while the addition of text data significantly boosted performance. Further analysis involved comparing RAEs to state-of-the-art FLUX VAEs across diffusion scales ranging from 0.5B to 9.8B parameters.

Measurements confirm that RAEs consistently outperformed VAEs during pretraining at all model scales. Crucially, VAE-based models catastrophically overfit after only 64 epochs of finetuning on high-quality datasets, whereas RAE models maintained stability through 256 epochs and consistently achieved better performance. The data show that RAE-based diffusion models converge faster and generate higher-quality images, establishing them as a simpler and more robust foundation for large-scale T2I generation. Scientists also investigated the necessity of specialized design choices originally proposed for ImageNet RAEs.

Tests show that adapting the noise schedule to the latent dimension is critical for convergence, but architectural modifications like wide diffusion heads and noise augmentation offer negligible benefits at larger scales. The work uses the MetaQuery architecture with a 256-token sequence, whose representations are projected into a DiT model trained with a flow-matching objective. Rather than working in a compressed VAE space, the DiT directly models high-dimensional semantic representations. Evaluation on GenEval and DPG-Bench further supports the effectiveness of the RAE approach.
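As a rough illustration of what a flow-matching objective looks like, the sketch below builds one training example. It assumes the common linear-interpolation path x_t = (1 − t)·x0 + t·ε with velocity target ε − x0 and t = 1 as pure noise; the article does not state which parameterization the authors use, and the function names and the zero-predicting stand-in model are hypothetical.

```python
import random


def flow_matching_example(x0, t, rng):
    """Build (x_t, target_velocity) for a clean latent x0 at time t in [0, 1].

    Assumed path: x_t = (1 - t) * x0 + t * noise, target = noise - x0.
    """
    noise = [rng.gauss(0.0, 1.0) for _ in x0]
    x_t = [(1.0 - t) * d + t * e for d, e in zip(x0, noise)]
    target = [e - d for d, e in zip(x0, noise)]
    return x_t, target


def mse(pred, target):
    """Mean squared error between predicted and target velocities."""
    return sum((p - q) ** 2 for p, q in zip(pred, target)) / len(pred)


rng = random.Random(0)
x0 = [0.5, -1.0, 2.0, 0.0]   # toy stand-in for an encoder latent
t = rng.random()             # uniform timestep sampling (an assumption)

x_t, target = flow_matching_example(x0, t, rng)

# Stand-in for the DiT: a "model" that always predicts zero velocity.
pred = [0.0 for _ in x_t]
print(f"t={t:.3f} loss={mse(pred, target):.4f}")
```

In a real setup the DiT would receive `x_t`, `t`, and the text conditioning and be trained to minimize this loss; here the zero prediction only shows how the loss is formed.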

RAE Scaling Simplifies High-Fidelity Image Generation

Scientists have demonstrated the successful scaling of Representation Autoencoders (RAEs) to large-scale text-to-image (T2I) generation, achieving superior performance compared to Variational Autoencoders (VAEs). The researchers found that while increasing data scale improves overall generation fidelity, careful data composition remains essential for domain-specific capabilities such as text rendering.

Their analysis shows that scaling the RAE framework leads to architectural simplification. Although dimension-dependent noise scheduling remains important, more complex components, such as wide diffusion heads, provide limited benefits at larger scales. Building on this streamlined design, the study demonstrates that RAE-based diffusion models consistently outperform VAE baselines in both convergence speed and image quality across a wide range of model sizes, from 0.5B to 9.8B parameters.

Notably, RAE models exhibit significantly greater stability during fine-tuning. While VAE-based models suffer from catastrophic overfitting after approximately 64 epochs, RAE models maintain stable performance for up to 256 epochs. The authors note that their current approach relies on a strong diffusion decoder, partly due to the smaller DiT width relative to the EVA-CLIP embedding dimension, and does not incorporate noise-schedule shifting.

Future work may address these limitations by refining the architecture and exploring alternative scaling strategies to further improve efficiency and performance. Overall, these results establish RAEs as a simpler and more effective foundation for large-scale T2I generation, offering faster convergence and higher image quality. Moreover, the ability to operate within a shared representation space for both visual understanding and image generation opens new opportunities for unified multimodal models, as illustrated by latent-space test-time scaling. This work provides a strong basis for future research in scalable generative modeling and unified multimodal systems.


🗞 Scaling Text-to-Image Diffusion Transformers with Representation Autoencoders

🧠 ArXiv: https://arxiv.org/abs/2601.16208