Wound care is a significant global healthcare challenge that carries heavy economic and logistical burdens. Remi Chierchia, Léo Lebrat, and David Ahmedt-Aristizabal, from Queensland University of Technology and CSIRO Data61, together with Yulia Arzhaeva, Olivier Salvado, Clinton Fookes and colleagues, present a novel approach to automated wound assessment using neural fields. Their research tackles the problem of building consistent 3D wound models from standard 2D images, a crucial step towards more accurate tracking of healing progress. By introducing WoundNeRF, a NeRF SDF-based method, and showing that it outperforms existing techniques, this work could accelerate the development of fast, precise, and accessible wound segmentation tools for clinicians worldwide.

3D Wound Segmentation via Neural Radiance Fields offers

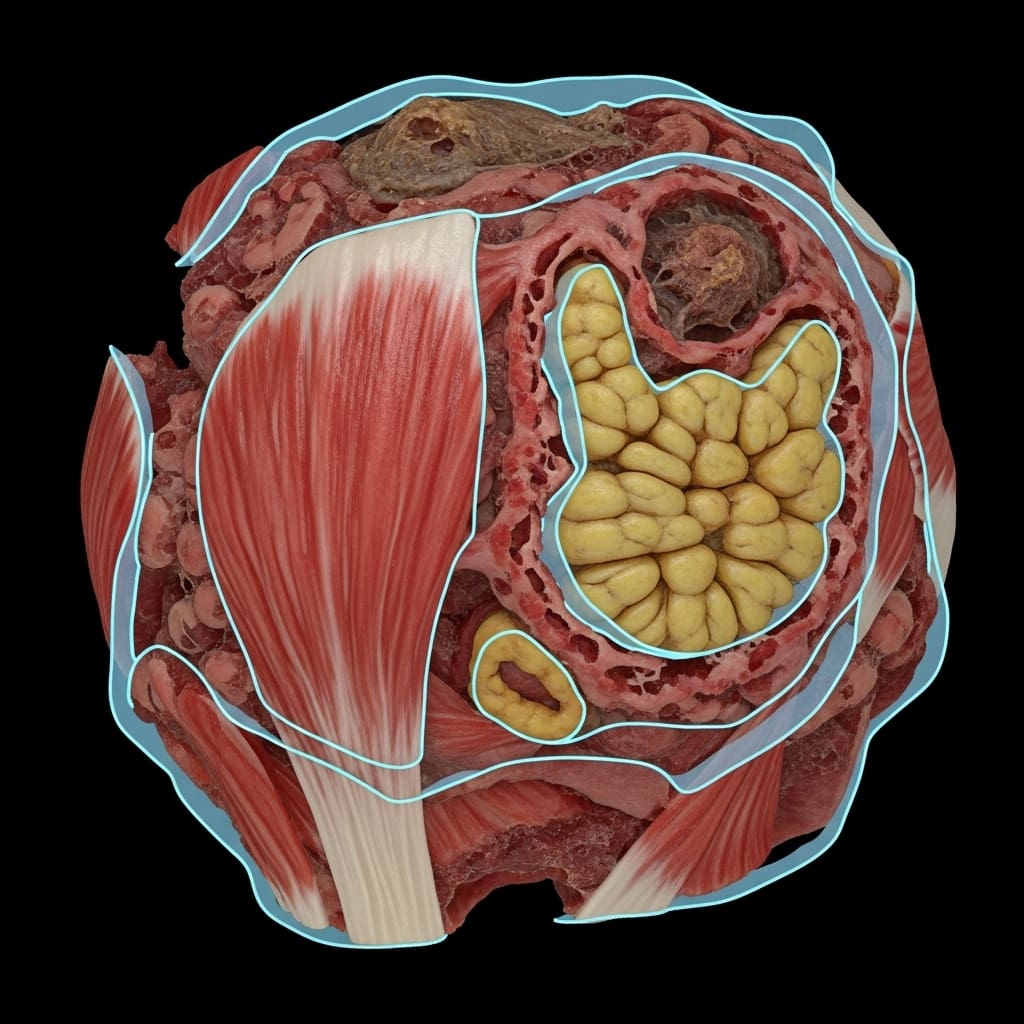

The work addresses the challenge of accurately tracking wound healing progress, a process that places economic and logistical burdens on patients and hospitals globally. The team achieved multi-view consistent 3D wound segmentation with a NeRF SDF-based approach that learns a 3D-consistent wound segmentation field directly from automatically generated 2D annotations. Rather than treating the task as a generative one, for example hallucinating missing regions, the study frames wound assessment as the optimal aggregation of 2D segmentations. This supports detailed classification of wound bed tissues and accurate delineation of wound boundaries, both crucial for monitoring and treatment planning.

Experiments show that WoundNeRF outperforms state-of-the-art Vision Transformer networks and conventional rasterisation-based algorithms in recovering accurate segmentations. The team optimised the semantic field with a weighted cross-entropy loss to counter the class imbalance common in medical imaging datasets, and the architecture can also accommodate alternative segmentation objectives such as Boundary or Dice loss. The implementation closely follows established NeRF practice and uses a two-step strategy: the geometry MLP is allowed to converge first, and only then is the semantic head activated, which stabilises learning and improves performance.
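To make the class-imbalance point concrete, here is a minimal NumPy sketch of a weighted cross-entropy over per-pixel class logits. The article does not say how WoundNeRF sets its class weights, so the inverse-frequency scheme below is an assumption for illustration, and both function names are hypothetical.

```python
import numpy as np


def weighted_cross_entropy(logits, labels, class_weights):
    """Weighted mean cross-entropy over N pixels.

    logits: (N, C) unnormalised class scores
    labels: (N,) integer class ids in [0, C)
    class_weights: (C,) non-negative weight per class
    """
    # Numerically stable log-softmax: subtract each row's max before exponentiating.
    shifted = logits - logits.max(axis=1, keepdims=True)
    log_probs = shifted - np.log(np.exp(shifted).sum(axis=1, keepdims=True))
    # Negative log-likelihood of the true class at every pixel.
    nll = -log_probs[np.arange(labels.shape[0]), labels]
    # Scale each pixel by its class weight, then normalise by the summed weights
    # (the same convention PyTorch's CrossEntropyLoss uses with reduction="mean").
    w = class_weights[labels]
    return float((w * nll).sum() / w.sum())


def inverse_frequency_weights(labels, num_classes):
    """Hypothetical weighting scheme: rarer classes receive larger weights."""
    counts = np.bincount(labels, minlength=num_classes).astype(float)
    counts = np.maximum(counts, 1.0)  # guard against classes absent from the batch
    return counts.sum() / (num_classes * counts)


# Toy batch: three background pixels (class 0) and one rare tissue pixel (class 1).
labels = np.array([0, 0, 0, 1])
logits = np.array([[2.0, 0.0], [2.0, 0.0], [2.0, 0.0], [2.0, 0.0]])
weights = inverse_frequency_weights(labels, num_classes=2)
print(weights)  # the rare class gets the larger weight
print(weighted_cross_entropy(logits, labels, weights))
```

Because the model in this toy batch favours class 0 everywhere, up-weighting the single rare pixel raises the loss compared with uniform weights, which is exactly the pressure that discourages a segmenter from predicting background across the whole image.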

This research opens a promising direction for digital wound care, with the potential for streamlined monitoring, improved prognosis, and timely intervention. By providing accurate and repeatable 3D wound measurements, WoundNeRF could support more effective treatment cycles and reduce the burden on healthcare systems. The authors plan to release the code, enabling further development and wider adoption of the approach in clinical settings and beyond. The work also opens avenues for automated analysis of wound healing trajectories and personalised treatment strategies, with the ultimate aim of improving patient outcomes and quality of life.

Scientists' Method

The research team developed WoundNeRF, a method that learns a 3D-consistent wound segmentation field directly from multi-view images, addressing the limitations of traditional 2D segmentation techniques, whose predictions can disagree across viewpoints. Alongside the geometry, the network predicts semantic logits $s_C(\mathbf{x})$ at each 3D point $\mathbf{x}$; these are normalised with a softmax to obtain per-class probabilities, enabling precise tissue classification. Training uses multi-view images, so 3D wound structure is inferred from 2D data. The study compares WoundNeRF against state-of-the-art fine-tuned Vision Transformer models and 2D-to-3D mapping strategies, and reports superior accuracy and robustness in 3D wound segmentation.
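The softmax step can be written as $p_c(\mathbf{x}) = \exp(s_c(\mathbf{x})) / \sum_{k=1}^{C} \exp(s_k(\mathbf{x}))$, assuming $s_c$ denotes the $c$-th component of $s_C$ and $C$ is the number of classes (this indexing is our notation, not necessarily the paper's). A minimal NumPy sketch with a numerically stable implementation, using hypothetical logits:

```python
import numpy as np


def semantic_probabilities(s):
    """Softmax over the last axis: logits of shape (..., C) -> class probabilities."""
    # Subtracting the per-point max leaves the softmax unchanged but prevents overflow.
    z = s - s.max(axis=-1, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=-1, keepdims=True)


# Logits at three 3D sample points for four (hypothetical) tissue classes.
s = np.array([[2.0, 0.5, -1.0, 0.0],
              [0.0, 0.0, 0.0, 0.0],
              [1000.0, 0.0, 0.0, 0.0]])  # large values are safe thanks to the max shift
p = semantic_probabilities(s)
print(p.sum(axis=-1))     # every row sums to 1
print(p.argmax(axis=-1))  # most likely class per point
```

Without the max shift, `np.exp(1000.0)` would overflow to infinity and the third row would become NaN.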

Rather than attempting to hallucinate missing data or correct misclassifications, the method achieves consistent segmentation quality by learning an optimal aggregation function over automatically generated 2D segmentations. The research also highlights the method's potential for clinical analysis and digital documentation, offering a valuable tool for monitoring healing progress. Because a NeRF models the scene as a single 3D field, its segmentations are viewpoint-consistent by construction, whereas previous approaches rely on manually tuned heuristics or inconsistent depth awareness; this is a critical advance for reliable wound assessment and treatment planning.

WoundNeRF improves 3D wound tissue segmentation with high

The research addresses the longstanding challenge of inferring consistent 3D structures from 2D images, a limitation that has hindered accurate tracking of healing progress. To assess how well WoundNeRF recovers accurate segmentations, the team compared it against state-of-the-art Vision Transformer models and conventional rasterisation-based algorithms. Segmentation accuracy was measured across 73 processed videos, with improvements reported for wound bed, granulation, and slough tissue. The wound bed class is computed by aggregating the logits of the five tissue classes that make up the wound bed, enabling analysis of the wound bed as a whole.
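The article does not spell out how the five tissue logits are aggregated into the wound-bed class. One natural choice, assumed here, is log-sum-exp: setting the merged logit to $\log\sum_{c \in \mathcal{T}} \exp(s_c)$ over the tissue set $\mathcal{T}$ makes the wound-bed probability after the softmax exactly the sum of the five tissue probabilities. The class layout and the function name `merge_classes` are hypothetical.

```python
import numpy as np


def softmax(x):
    z = x - x.max(axis=-1, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=-1, keepdims=True)


def merge_classes(logits, group):
    """Replace the classes in `group` by one class whose logit is their log-sum-exp.

    The merged class is appended as the last column; the other classes keep their order.
    """
    members = logits[..., group]
    m = members.max(axis=-1, keepdims=True)
    merged = m + np.log(np.exp(members - m).sum(axis=-1, keepdims=True))  # stable log-sum-exp
    others = np.delete(logits, group, axis=-1)
    return np.concatenate([others, merged], axis=-1)


# Hypothetical layout: classes 0-1 are non-wound, classes 2-6 are the five wound-bed tissues.
tissue_classes = [2, 3, 4, 5, 6]
rng = np.random.default_rng(0)
logits = rng.normal(size=(8, 7))  # 8 pixels, 7 classes
merged = merge_classes(logits, tissue_classes)
wound_bed_prob = softmax(merged)[..., -1]
# Same as summing the five tissue probabilities of the original softmax:
print(np.allclose(wound_bed_prob, softmax(logits)[..., tissue_classes].sum(axis=-1)))  # True
```

The identity holds because the softmax denominator is unchanged by the merge, so the probabilities of the non-wound classes are also preserved.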

WoundNeRF achieves a Dice Score Coefficient (DSC) of 0.857 and a recall of 0.893 for the wound bed. For granulation tissue, it reaches a DSC of 0.775 and a recall of 0.786, while slough segmentation yields a DSC of 0.686 and a recall of 0.666. These results, obtained on the 73-video dataset, show that the method can delineate distinct tissue types, with accuracy decreasing from the wound bed to granulation to slough. To counter class imbalance, a common issue in medical imaging, the team used a weighted cross-entropy loss, and applied dropout with a rate of 0.5 to improve robustness and reduce false positives.
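For readers less familiar with the two metrics: with true positives (TP), false positives (FP) and false negatives (FN) counted per class, DSC = 2TP / (2TP + FP + FN) and recall = TP / (TP + FN). Since recall can be rewritten as 2TP / (2TP + 2FN), a recall above the DSC (as for the wound bed) implies more false positives than false negatives, and the reverse (as for slough) implies more misses, assuming both metrics are computed from the same pooled counts. The helper below is a minimal NumPy sketch on boolean masks, not the paper's evaluation code; treating two empty masks as perfect agreement is our convention.

```python
import numpy as np


def dice_and_recall(pred, target):
    """Dice similarity coefficient and recall for one class, from boolean masks."""
    pred = np.asarray(pred, dtype=bool)
    target = np.asarray(target, dtype=bool)
    tp = np.logical_and(pred, target).sum()
    fp = np.logical_and(pred, ~target).sum()
    fn = np.logical_and(~pred, target).sum()
    denom = 2 * tp + fp + fn
    # Convention (assumption): empty prediction and empty target count as perfect agreement.
    dice = 1.0 if denom == 0 else 2 * tp / denom
    recall = 1.0 if tp + fn == 0 else tp / (tp + fn)
    return float(dice), float(recall)


# Toy 4x4 example: the prediction covers 3 of the 4 true wound pixels plus 1 extra pixel.
target = np.zeros((4, 4), dtype=bool)
target[1:3, 1:3] = True
pred = np.zeros((4, 4), dtype=bool)
pred[1:3, 1] = True
pred[1, 2] = True
pred[0, 0] = True
print(dice_and_recall(pred, target))  # (0.75, 0.75)
```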

The authors report that decoupling geometry optimisation from semantic learning through the two-step training strategy stabilises training and improves performance. By integrating the semantic distribution along each camera ray, the architecture obtains pixel-level predictions, supporting detailed digital documentation and clinical analysis. Overall, the approach offers a promising route to automated, accurate, and 3D-consistent wound segmentation.
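The ray-integration step can be illustrated with standard NeRF-style alpha compositing. Because WoundNeRF is SDF-based, densities must first be derived from signed distances; this sketch assumes the VolSDF Laplace-CDF conversion (one common choice, not necessarily the paper's) and composites per-sample class probabilities rather than logits. The function names, `beta`, and the toy ray are hypothetical.

```python
import numpy as np


def sdf_to_density(sdf, beta=0.1):
    """VolSDF-style density: sigma = (1/beta) * LaplaceCDF_beta(-sdf).

    Convention: sdf > 0 outside the surface, sdf < 0 inside.
    """
    s = -sdf
    # np.where evaluates both branches, so clamp their arguments to keep exp() from overflowing.
    cdf = np.where(s <= 0,
                   0.5 * np.exp(np.minimum(s, 0.0) / beta),
                   1.0 - 0.5 * np.exp(-np.maximum(s, 0.0) / beta))
    return cdf / beta


def render_semantics(sigma, deltas, probs):
    """Alpha-composite per-sample class probabilities along one ray.

    sigma: (S,) densities, deltas: (S,) distances between samples,
    probs: (S, C) class probabilities at each sample.
    Returns the (C,) pixel-level class distribution and the (S,) compositing weights.
    """
    alpha = 1.0 - np.exp(-sigma * deltas)
    # Transmittance T_i = prod_{j<i} (1 - alpha_j): chance the ray reaches sample i unoccluded.
    trans = np.concatenate([[1.0], np.cumprod(1.0 - alpha)[:-1]])
    weights = trans * alpha
    return weights @ probs, weights


# Toy ray: 64 samples from t=0 to t=2, hitting a surface at t=1 (sdf = 1 - t).
t = np.linspace(0.0, 2.0, 64)
deltas = np.full_like(t, t[1] - t[0])
sigma = sdf_to_density(1.0 - t, beta=0.1)
# Hypothetical two-class field: tissue class 1 in a shell around the surface, class 0 elsewhere.
near_surface = np.abs(t - 1.0) < 0.3
probs = np.stack([(~near_surface).astype(float), near_surface.astype(float)], axis=1)
pixel, weights = render_semantics(sigma, deltas, probs)
print(pixel)                 # rendered class distribution for this pixel
print(t[weights.argmax()])   # the weights peak close to the surface at t = 1
```

Since each sample's probabilities sum to 1, the rendered distribution sums to the total ray weight, which is at most 1; for a ray that fully enters the surface it is close to 1.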

This approach addresses limitations inherent in traditional 2D wound segmentation, which often struggles to capture the full three-dimensional topology of wounds. By learning a semantic field directly in 3D space, WoundNeRF aggregates information across multiple views, producing more accurate and coherent segmentations, and can also serve as a source of data augmentation for improving 2D segmentation models. The significance of this work lies in its potential to improve wound assessment and documentation through automatic mesh extraction and precise tracking of healing progress, since a 3D reconstruction captures wound characteristics more completely than conventional methods. However, the authors acknowledge that the method still struggles with misclassified pixels. Future research will focus on integrating a confidence-driven segmentation framework to enhance robustness and semantic fidelity for clinical applications.
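Once an extracted mesh carries per-face tissue labels (for example, obtained by querying the semantic field at face centroids, which is an assumption here), one simple healing-progress measurement is the 3D surface area of the wound region, compared across visits. The function below and its labelling scheme are hypothetical, not the authors' pipeline.

```python
import numpy as np


def labelled_surface_area(vertices, faces, face_labels, label):
    """Total area of the mesh triangles carrying `label`, in squared vertex units.

    vertices: (V, 3) float coordinates, faces: (F, 3) vertex indices, face_labels: (F,) ints.
    """
    tri = vertices[faces[face_labels == label]]  # (F_label, 3, 3) triangle corners
    # Each triangle's area is half the norm of the cross product of two edge vectors.
    cross = np.cross(tri[:, 1] - tri[:, 0], tri[:, 2] - tri[:, 0])
    return float(0.5 * np.linalg.norm(cross, axis=1).sum())


# Toy mesh: a unit square (two triangles labelled 1 = wound) plus one extra triangle (label 0).
vertices = np.array([[0, 0, 0], [1, 0, 0], [1, 1, 0], [0, 1, 0], [2, 0, 0]], dtype=float)
faces = np.array([[0, 1, 2], [0, 2, 3], [1, 4, 2]])
face_labels = np.array([1, 1, 0])
print(labelled_surface_area(vertices, faces, face_labels, label=1))  # 1.0
```

Unlike a pixel count in a single photo, this area does not depend on camera distance or viewing angle, provided the reconstruction is metrically scaled.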

👉 More information

🗞 Multi-View Consistent Wound Segmentation With Neural Fields

🧠 ArXiv: https://arxiv.org/abs/2601.16487