Researchers present a novel network architecture that incorporates a Spatio-Temporal State Space Model (STSSM) module to efficiently estimate motion from event cameras. This approach captures spatio-temporal correlations in event data, achieving 4.5 times faster inference and significantly reducing computational cost compared to existing methods, such as TMA and EV-FlowNet, on the DSEC benchmark.

The pursuit of efficient motion estimation continues to drive innovation in computer vision, particularly with the emergence of event-based cameras, which operate fundamentally differently from traditional frame-based systems. These cameras respond to changes in brightness rather than capturing entire frames, which offers the potential for significantly lower latency and power consumption in applications ranging from autonomous robotics to high-speed tracking. Researchers at Khalifa University, including Muhammad Ahmed Humais, Xiaoqian Huang, Hussain Sajwani, Sajid Javed, and Yahya Zweiri, address limitations in current event-based optical flow estimation techniques in their paper, ‘Spatio-Temporal State Space Model For Efficient Event-Based Optical Flow’.

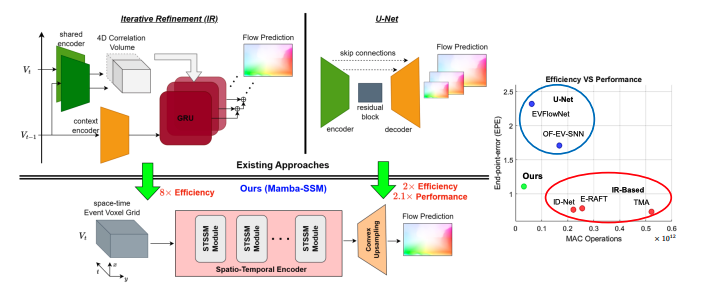

Their approach introduces a Spatio-Temporal State Space Model (STSSM) module, which captures spatio-temporal correlations within event data, and integrates it into a new network architecture designed for strong performance at reduced computational demand. Optical flow is the apparent motion of objects in a visual scene caused by relative motion between the observer and the scene. State-space models are a mathematical framework for dynamic systems in which the system’s state is represented as a set of variables that evolve over time. The team reports up to 4.5 times faster inference and an 8-fold reduction in computation compared to TMA, while maintaining competitive accuracy on the DSEC benchmark dataset.

Event cameras, unlike conventional cameras, do not capture images at fixed intervals. Instead, each pixel registers changes in brightness asynchronously, producing a sparse stream of events that encodes motion directly. This makes them well suited to low-latency motion estimation, commonly known as optical flow, where the goal is to determine the direction and speed of apparent motion in the scene. Established deep learning approaches, including Convolutional Neural Networks (CNNs), Recurrent Neural Networks (RNNs) and Vision Transformers (ViTs), often lack the computational efficiency that such real-time applications require. CNNs excel at spatial feature extraction, RNNs at temporal dependencies, and ViTs at long-range dependencies, but all can be resource-intensive.
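To make the brightness-change idea concrete, here is a minimal sketch of how events can be approximated from two intensity snapshots. It is a simplification for illustration only: a real sensor fires per pixel with its own timestamp rather than comparing frames, and the function name `events_from_frames`, the `threshold` value, and the `eps` offset are all assumptions, not details from the paper.

```python
import numpy as np

def events_from_frames(prev, curr, threshold=0.2, eps=1e-3):
    """Approximate events as pixels whose log-brightness change exceeds a threshold.

    Returns row indices, column indices, and polarities (+1 brighter, -1 darker).
    """
    # Event pixels respond to changes in log intensity; eps avoids log(0).
    delta = np.log(curr + eps) - np.log(prev + eps)
    # Only pixels with a large enough change produce an event (sparse output).
    ys, xs = np.nonzero(np.abs(delta) >= threshold)
    polarities = np.sign(delta[ys, xs]).astype(np.int8)
    return ys, xs, polarities

# Hypothetical 4x4 scene in which only two pixels change.
prev = np.full((4, 4), 0.5)
curr = prev.copy()
curr[1, 2] = 1.0  # this pixel brightens
curr[3, 0] = 0.1  # this pixel darkens
ys, xs, pol = events_from_frames(prev, curr)
print(list(zip(ys.tolist(), xs.tolist(), pol.tolist())))
```

Only the two changed pixels yield events, while the fourteen static pixels yield nothing. That sparsity is what event-based methods try to exploit.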

To overcome these limitations, the researchers introduce a network architecture built around a Spatio-Temporal State Space Model (STSSM) module. In a state-space model, an internal state is updated from the current input and the previous state, and the output is read from that state. The STSSM module applies this principle to capture spatio-temporal correlations within the asynchronous event data, which the authors report delivers better performance at lower computational complexity than ViT- and CNN-based designs in similar settings. The aim is to combine computational efficiency with the ability to model the complex spatio-temporal dynamics that motion estimation depends on.
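The state-update idea behind state-space models can be sketched as a plain linear recurrence. The code below is a generic discrete-time linear state-space model, not the STSSM module itself, whose internals are not described here. The names `ssm_scan`, `A`, `B`, and `C` are illustrative assumptions.

```python
import numpy as np

def ssm_scan(A, B, C, inputs):
    """Run a discrete linear state-space model over a sequence.

    state[k] = A @ state[k-1] + B @ u[k]
    y[k]     = C @ state[k]
    """
    state = np.zeros(A.shape[0])  # start from a zero state
    outputs = []
    for u in inputs:
        # New state depends on the previous state and the current input.
        state = A @ state + B @ u
        outputs.append(C @ state)
    return np.stack(outputs)

# Hypothetical one-dimensional system that remembers half of its past state.
A = np.array([[0.5]])
B = np.array([[1.0]])
C = np.array([[1.0]])
inputs = np.array([[1.0], [0.0], [0.0]])  # a single impulse, then silence
outputs = ssm_scan(A, B, C, inputs)
print(outputs[:, 0])
```

The impulse decays geometrically in the output, showing how a compact state carries information about earlier inputs forward in time without reprocessing the whole history.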

Evaluations on the DSEC benchmark, a stereo event camera dataset for driving scenarios, demonstrate the efficacy of the new model. It achieves 4.5x faster inference and 8x lower computation than TMA (Temporal Motion Aggregation), and 2x lower computation than EV-FlowNet, while maintaining competitive accuracy. These results highlight the potential of STSSM for efficient, high-performing optical flow estimation in event-based vision systems, including real-time applications.

The core innovation lies in the STSSM module’s ability to distil relevant spatio-temporal information from asynchronous event streams. This avoids the computational overhead of processing entire frames, as traditional frame-based methods require. Efficient spatio-temporal modelling of this kind makes event-based vision a compelling option for robotics, autonomous navigation, and high-speed tracking, where responsiveness is paramount.

The model exploits the asynchronous and sparse nature of event data to serve applications that demand both accuracy and real-time responsiveness. By focusing on changes rather than entire frames, it reduces the computational burden, paving the way for deployment on resource-constrained platforms.

🗞 Spatio-Temporal State Space Model For Efficient Event-Based Optical Flow

🧠 DOI: https://doi.org/10.48550/arXiv.2506.07878