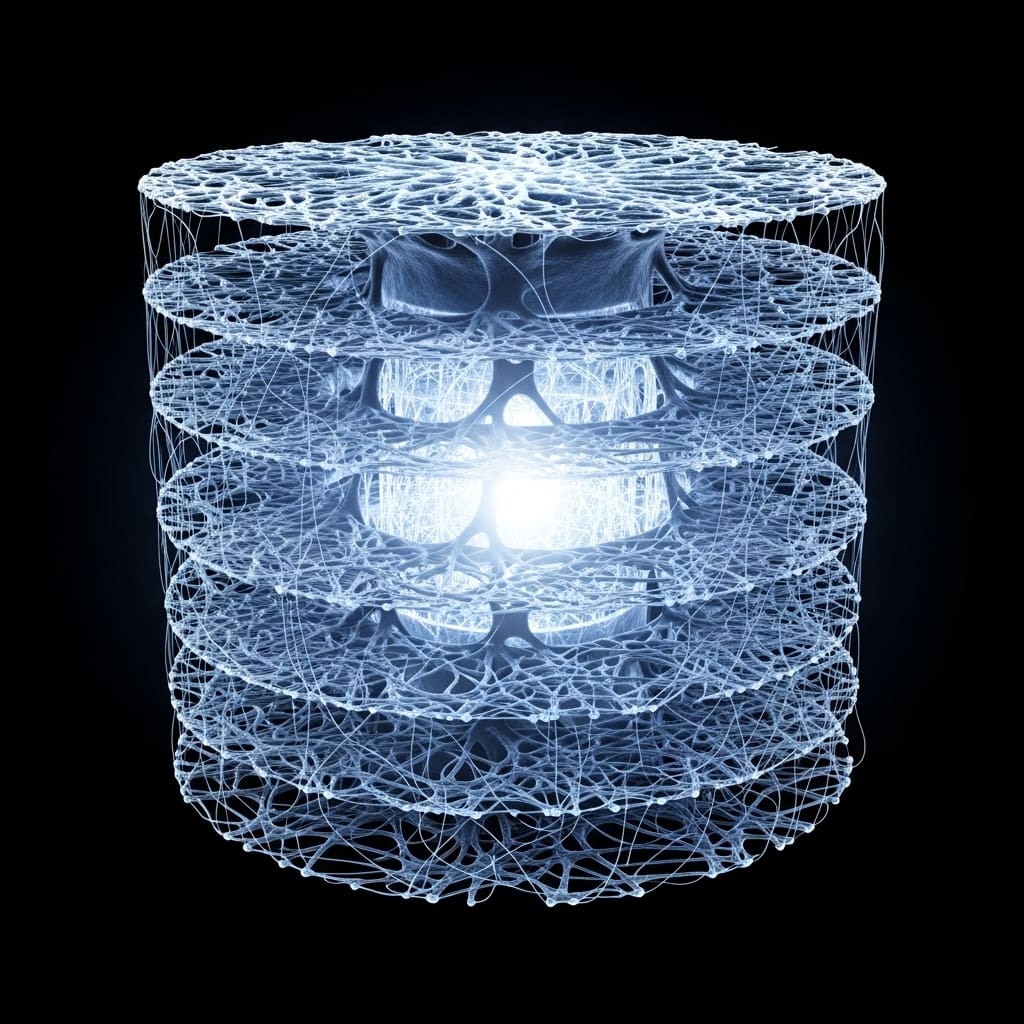

Researchers are increasingly looking to the energy efficiency of biological systems, such as the human brain, as a model for modern communication networks. Haoyuan Zhu and Haonan Hu, both of the Department of Electronic and Electrical Engineering at the University of Sheffield, together with Jie Zhang of Cambridge AI+ Ltd and Ranplan Wireless Network Design Ltd, present a framework for information abstraction inspired by these natural processes. Their work introduces the Degree of Information Abstraction (DIA), a metric that quantifies how much a representation compresses data while preserving its essential semantic content. The research establishes a formal, computational theory of information abstraction and, when applied to a large language model-guided video transmission task, shows a substantial reduction in transmission volume with fidelity maintained. It could also inform advances in areas such as neuromorphic computing and semantic communication.

The human brain processes information with minimal energy by abstracting data across multiple levels, whereas current communication systems often spend energy transmitting raw, low-level data. DIA is designed to measure how effectively a representation compresses data while preserving the information needed to complete a task. By formally defining and measuring information abstraction, the authors provide a framework for rebalancing energy consumption in intelligent systems. The core contribution is a tractable information-theoretic formulation of DIA, which makes the metric usable for practical design and analysis. In the video transmission experiment, abstraction-aware encoding guided by DIA reduced transmission volume by 99.75% without compromising the semantic integrity of the video. In other words, only 0.25% of the original data volume had to be sent. A reduction of this size could translate into energy savings and improved network efficiency. The DIA metric and abstraction framework offer a principled basis for designing more efficient neural networks, for neuromorphic computing (which aims to mimic the brain’s structure and function), and for semantic communication, a paradigm focused on transmitting meaning rather than raw data. More broadly, the work points towards information technologies that, like biological intelligence, process and communicate information with a fraction of the energy currently required.
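The paper’s exact DIA formula is not reproduced in this article. As a minimal sketch, assuming a DIA-style score can be approximated by multiplying the fraction of data saved by how much semantic content survives, the 99.75% figure and such a score can be computed as below. Semantic retention is measured here as cosine similarity between embeddings, echoing the paper’s use of an embedding space. The function names and the multiplicative form are illustrative assumptions, not the authors’ definition.

```python
import math


def transmission_reduction(raw_bytes: int, sent_bytes: int) -> float:
    """Fraction of the raw payload that no longer needs to be sent."""
    return 1.0 - sent_bytes / raw_bytes


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine similarity between two embedding vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm


def dia_proxy(raw_bytes: int, sent_bytes: int,
              ref_embedding: list[float], rec_embedding: list[float]) -> float:
    """Hypothetical DIA-style score: compression weighted by semantic retention.

    This is an illustrative proxy, NOT the paper's information-theoretic DIA.
    Negative similarities are clipped to zero retention.
    """
    retention = max(0.0, cosine_similarity(ref_embedding, rec_embedding))
    return transmission_reduction(raw_bytes, sent_bytes) * retention
```

Consider a hypothetical 400,000-byte clip reduced to a 1,000-byte semantic payload. In that case `transmission_reduction` returns 0.9975, which is the reported 99.75%. If the reconstructed video’s embedding also has a cosine similarity of 0.8 to the reference, the proxy score is about 0.798. The multiplicative form ensures that a representation scores highly only when it both compresses aggressively and keeps the meaning intact.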

Minimising transmission volume shifts the energy burden from costly wireless communication to cheaper local computation and representation learning. A video-level DIA serves as the primary optimisation objective, guiding a design search over system configurations for semantic video transmission. The approach transmits compact semantic information extracted from a source video, so that the receiver can reconstruct a video that preserves both structural fidelity and semantic consistency. The search follows an Optimization by PROmpting (OPRO) schedule, run for five rounds on a fixed, multi-category video set. At the transmitter, a video understanding module built on Alibaba’s Qwen-VL-Max produces high-level semantic abstractions, which then condition downstream semantic transmission and receiver-side reconstruction. The receiver uses sora-video2-landscape for video generation and prediction. It always generates video at 1280 × 704 pixels, so that results are not confounded by heterogeneous generative backends. Crucially, both the latent space used to compute DIA and the semantic embedding space of the Video Semantic Drift Suppression (VSDS) module are implemented with CLIP RN-50. This keeps the optimisation signal aligned with the semantic-correction pathway. For evaluation, the authors constructed a dataset of 20 videos at 1280 × 704 resolution, split into two categories: human-centric videos focusing on actions and interactions, and object/scene-centric videos emphasising background dynamics. The two-category split is intended to improve coverage and reduce bias towards particular content types, reflecting the diversity of real-world video semantics.
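An OPRO-style design search can be sketched as follows, under heavy assumptions. The configuration knobs (`keyframe_interval`, `caption_tokens`), the `toy_dia` objective and the `propose` stub are all hypothetical. In OPRO proper, an LLM receives previously tried solutions and their scores in a meta-prompt and proposes new candidates. Here that LLM call is replaced by a seeded random perturbation of the best configurations found so far. The real video-level DIA would require encoding, transmission, generation and CLIP scoring, so it is replaced by a made-up function.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class Config:
    # Hypothetical search knobs; not taken from the paper.
    keyframe_interval: int  # frames between transmitted keyframes
    caption_tokens: int     # token budget for the semantic description


def toy_dia(cfg: Config) -> float:
    """Made-up stand-in for the video-level DIA; maximal (0.0) at (8, 64)."""
    return -abs(cfg.keyframe_interval - 8) - abs(cfg.caption_tokens - 64) / 16


def propose(history, rng, n=4):
    """Stub for the LLM proposer: perturb the two best-scoring configs.

    Real OPRO would show the scored history to an LLM in a meta-prompt
    and parse new candidate solutions from its reply.
    """
    top = sorted(history, key=lambda item: item[1], reverse=True)[:2]
    candidates = []
    for _ in range(n):
        base = rng.choice(top)[0]
        candidates.append(Config(
            keyframe_interval=max(1, base.keyframe_interval + rng.choice([-2, -1, 1, 2])),
            caption_tokens=max(8, base.caption_tokens + rng.choice([-16, 16])),
        ))
    return candidates


def opro_search(seed_cfg: Config, rounds: int = 5, seed: int = 0):
    """Run `rounds` propose-and-score rounds; return the best (config, score)."""
    rng = random.Random(seed)
    history = [(seed_cfg, toy_dia(seed_cfg))]
    for _ in range(rounds):
        for cfg in propose(history, rng):
            history.append((cfg, toy_dia(cfg)))
    return max(history, key=lambda item: item[1])


best_cfg, best_score = opro_search(Config(keyframe_interval=16, caption_tokens=128))
print(best_cfg, best_score)
```

The loop keeps the full scored history rather than only the current best. This mirrors OPRO’s use of past trajectories as context for the next round of proposals. Swapping `propose` for a real LLM call would leave the rest of the loop unchanged.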

Four optimisation strategies were compared in controlled, hypothesis-driven experiments:

- a local baseline that optimises only the prediction module;
- OPRO driven by DIA;
- an Information Bottleneck (IB)-based counterpart;
- DIA-OPRO augmented with VSDS.

The VSDS module turns the temporal evolution of semantics into a prioritisation mechanism, improving continuity and motion fidelity in the reconstructed video.

The approach echoes biology. Approximately 95% of the brain’s energy supports internal processes rather than direct signal transmission, and abstraction-aware encoding similarly moves effort away from transmission and into local computation. DIA therefore offers a tool for rebalancing energy and information in intelligent systems. It could influence more efficient neural network design, neuromorphic computing that mimics brain-like energy usage, and semantic communication architectures, where the meaning of data is prioritised over its raw form. Limitations remain, including the need to generalise the DIA metric across diverse data types and tasks, and the inherent subjectivity in defining ‘task-relevant semantics’. Future research is likely to explore building abstraction directly into AI architectures, for example through new training algorithms or hardware designed to support it.
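The article does not spell out how VSDS works internally. Still, the idea of turning the temporal evolution of semantics into a priority signal can be illustrated with a hypothetical sketch. The sketch treats the cosine distance between consecutive frame embeddings as “semantic drift”. In the paper’s setup these would be CLIP embeddings; the example uses short toy vectors. Frames where the meaning changes most then get transmission priority. The function names, the drift definition and the top-k selection rule are assumptions for illustration only.

```python
import math


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity between two embedding vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))


def semantic_drift(frame_embeddings: list[list[float]]) -> list[float]:
    """Drift at frame t is 1 - cos(e_t, e_{t-1}); frame 0 gets 1.0 so it is always favoured."""
    drifts = [1.0]
    for prev, cur in zip(frame_embeddings, frame_embeddings[1:]):
        drifts.append(1.0 - cosine(prev, cur))
    return drifts


def prioritise_frames(frame_embeddings: list[list[float]], budget: int) -> list[int]:
    """Indices of the `budget` highest-drift frames, returned in temporal order.

    Ties keep their original (earlier-first) order because Python's sort is stable.
    """
    drifts = semantic_drift(frame_embeddings)
    ranked = sorted(range(len(drifts)), key=lambda i: drifts[i], reverse=True)
    return sorted(ranked[:budget])


frames = [[1.0, 0.0], [1.0, 0.0], [0.0, 1.0], [0.0, 1.0], [1.0, 1.0]]
print(prioritise_frames(frames, budget=2))
```

With these toy embeddings and a budget of 2, frames 0 and 2 are selected. Those are the points where the content first appears and where it changes abruptly. Static stretches (frames 1 and 3) have zero drift and are the first to be dropped.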

🗞 Information Abstraction for Data Transmission Networks based on Large Language Models

🧠 ArXiv: https://arxiv.org/abs/2602.11022