Scientists are tackling the computational challenges of modelling complex 3D atomistic systems with a new approach to Equivariant Graph Neural Networks (EGNNs). Lin Huang, Chengxiang Huang, and Ziang Wang, all from IQuest Research, alongside colleagues Du, Wang et al., present E2Former-V2, a scalable model that improves efficiency by integrating algebraic sparsity with hardware-aware execution. The work matters because it overcomes critical scalability bottlenecks in existing EGNNs, which often struggle with the computational cost of dense tensor products; its custom attention kernel achieves a 20× improvement in TFLOPS. Through innovations like Equivariant Axis-Aligned Sparsification (EAAS) and On-the-Fly Equivariant Attention, the team demonstrates that large equivariant models can be trained efficiently on standard GPU platforms, paving the way for faster and more accurate molecular simulations.

Traditional EGNNs struggle with scalability because they explicitly construct geometric features and compute dense tensor products on every edge, which hinders their application to larger systems. This new research addresses these bottlenecks by integrating algebraic sparsity with hardware-aware execution, paving the way for efficient simulations of complex molecular structures. By eliminating the need to materialize edge tensors and maximizing SRAM utilization, the model's On-the-Fly Equivariant Attention kernel achieves a 20× improvement in TFLOPS compared to standard implementations. This optimisation is crucial, as traditional EGNNs suffer from severe latency due to their edge-centric nature, while standard Transformers have previously addressed similar issues through hardware-aligned execution. Extensive experiments conducted on the SPICE and OMol25 datasets demonstrate that E2Former-V2 maintains comparable predictive performance to existing methods while significantly accelerating inference speeds.

Designing Scalable Architecture for Molecular Simulations

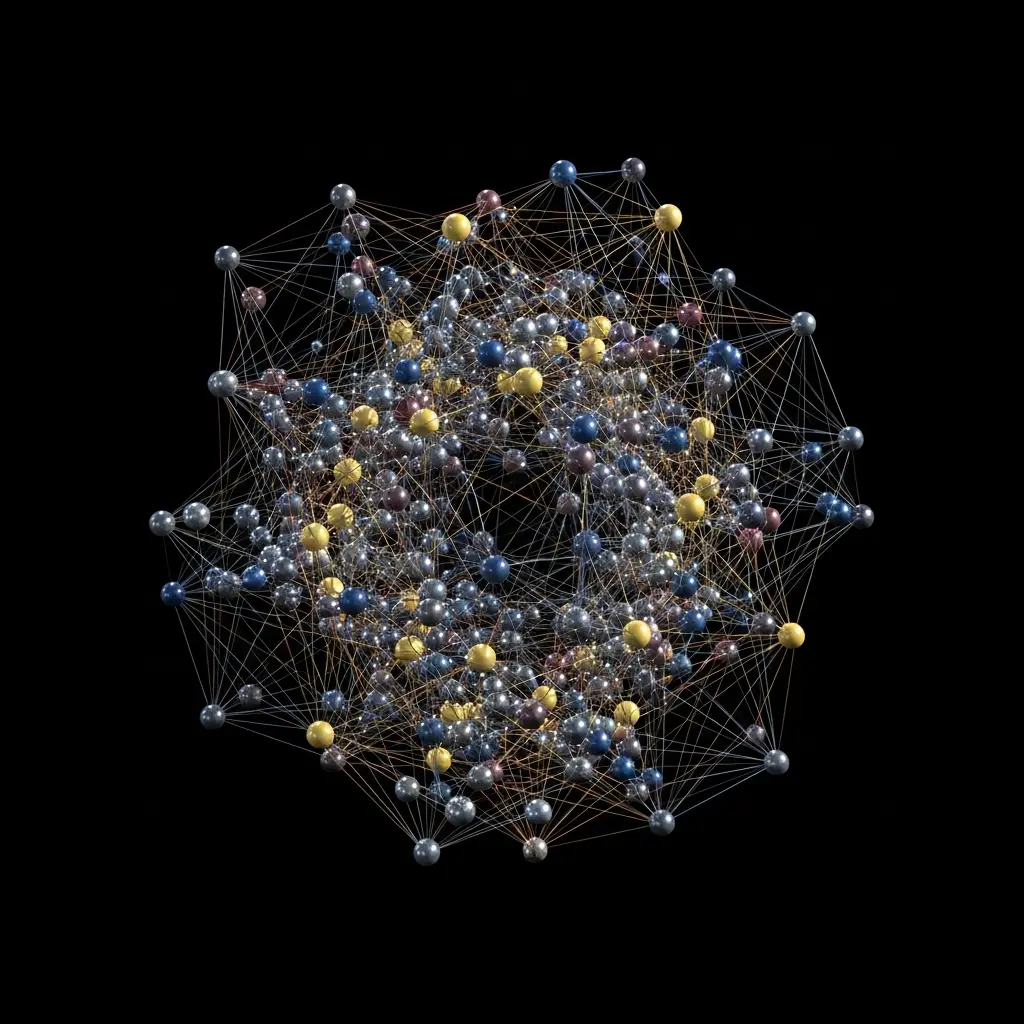

The study reveals that E2Former-V2 achieves O(|V|) activation memory, preserving theoretical exactness through its innovative design. This design combines the SO(2) rotational basis for sparsification with the custom equivariant attention kernel, enabling efficient computation of interactions via a fused Triton kernel. As illustrated in accompanying figures, E2Former-V2’s performance advantage becomes increasingly pronounced as the number of atoms (N) grows, highlighting its efficacy in handling large-scale atomic structures previously considered computationally prohibitive. The work establishes that large equivariant transformers can be trained efficiently using widely accessible GPU platforms, opening new avenues for research in areas like drug discovery and materials science.

Furthermore, the research demonstrates a ∼6× speedup during the convolution stage through EAAS, and a 20× improvement in TFLOPS with the On-the-Fly Equivariant Attention kernel. This combination of techniques not only enhances computational efficiency but also reduces memory requirements, making it possible to simulate larger and more complex systems than previously feasible. The code for E2Former-V2 is publicly available, facilitating further research and development in the field of equivariant machine learning for atomistic modeling, and is accessible at https://github.

The research team addressed limitations inherent in existing methods that rely on explicit construction of geometric features or dense tensor products calculated on every edge of a graph. The attention kernel eliminates the need to materialize edge tensors, thereby reducing activation memory from O(|E|) to O(|V|), where |E| is the number of edges and |V| the number of atoms, and maximizing SRAM utilization. Experiments employing this kernel demonstrated a 20× improvement in TFLOPS compared to standard implementations. The team quantified the impact of their design through observational analysis, comparing traditional EGNNs with standard attention mechanisms and revealing severe latency issues in the former.
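To make the O(|E|) versus O(|V|) distinction concrete, here is a minimal sketch in plain Python. It is not the authors' Triton kernel and it is not equivariant: features are single scalars, and the function names and toy graph are hypothetical. It only illustrates the streaming (online) softmax idea, in which each node keeps a few running values instead of a buffer of per-edge scores. It assumes every node has at least one neighbor.

```python
import math

def attention_materialized(q, k, v, neighbors):
    """Reference version: builds per-edge score and weight lists (O(|E|) memory)."""
    out = []
    for i, nbrs in enumerate(neighbors):
        scores = [q[i] * k[j] for j in nbrs]          # one entry per edge
        m = max(scores)
        weights = [math.exp(s - m) for s in scores]   # another entry per edge
        z = sum(weights)
        out.append(sum(w * v[j] for w, j in zip(weights, nbrs)) / z)
    return out

def attention_on_the_fly(q, k, v, neighbors):
    """Streaming version: three running scalars per node, no per-edge buffers."""
    out = []
    for i, nbrs in enumerate(neighbors):
        running_max = -math.inf
        denom = 0.0
        acc = 0.0
        for j in nbrs:
            s = q[i] * k[j]                           # score computed and discarded
            new_max = max(running_max, s)
            scale = math.exp(running_max - new_max)   # rescales earlier partial sums
            w = math.exp(s - new_max)
            denom = denom * scale + w
            acc = acc * scale + w * v[j]
            running_max = new_max
        out.append(acc / denom)
    return out

# Toy graph with three atoms, each attending to the other two (illustrative data).
q = [0.5, -1.0, 2.0]
k = [1.0, 0.3, -0.7]
v = [10.0, 20.0, 30.0]
neighbors = [[1, 2], [0, 2], [0, 1]]

dense = attention_materialized(q, k, v, neighbors)
streamed = attention_on_the_fly(q, k, v, neighbors)
print(all(math.isclose(a, b, rel_tol=1e-9) for a, b in zip(dense, streamed)))
```

Both functions compute the same softmax-weighted average; the difference is only in what is held in memory while doing so. In a fused GPU kernel the running values would live in registers or SRAM, which is where the bandwidth savings described in the article would come from.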

Quantitative Performance Gains on Large Atomistic Systems

FlashAttention, a streaming, tile-based attention mechanism, was used as a benchmark, consistently improving performance over traditional EGNNs across varying system sizes. However, the advantage of E2Former-V2 became increasingly pronounced as the number of atoms (N) grew, highlighting its efficacy in handling large-scale atomic structures. The work demonstrated that E2Former-V2 achieves O(|V|) activation memory while maintaining theoretical exactness, a feat enabled by the combination of EAAS and the custom attention kernel. Extensive experiments conducted on the SPICE and OMol25 datasets confirmed that E2Former-V2 maintains comparable predictive performance while notably accelerating inference, proving its potential for efficient training on widely accessible GPU platforms.

E2Former-V2 accelerates 3D atomistic modelling significantly

Scientists have developed E2Former-V2, a new scalable architecture for modelling 3D atomistic systems using equivariant graph neural networks. This innovation addresses critical scalability bottlenecks present in existing methods, which often rely on explicit construction of geometric features or dense tensor products on every edge. Extensive experiments conducted on the SPICE and OMol25 datasets demonstrate that E2Former-V2 maintains comparable predictive performance to existing models while significantly accelerating inference, with its attention kernel achieving a 20× improvement in TFLOPS.

Enabling Accurate and Complex Molecular Dynamics Simulations

This work establishes that large equivariant neural networks can be efficiently trained using widely accessible GPU platforms, opening avenues for more complex and accurate simulations of molecular dynamics. The authors acknowledge a limitation in that the current implementation is tailored for GPU acceleration, and further work could explore broader hardware compatibility. Future research directions include extending the method to even larger systems and investigating its application to diverse scientific domains beyond molecular modelling.

🗞 E2Former-V2: On-the-Fly Equivariant Attention with Linear Activation Memory

🧠 ArXiv: https://arxiv.org/abs/2601.16622

The inherent challenge in applying standard deep learning architectures to physical systems lies in the mismatch between abstract graph representations and the localized, highly structured memory access patterns characteristic of compute-intensive simulations. E2Former-V2 circumvents this by reformulating the interaction kernel to operate directly on feature-space tensor projections, rather than necessitating the explicit storage of full, high-dimensional edge feature matrices. This memory-centric optimization minimizes costly global memory reads and maximizes the reuse of weights within the faster on-chip SRAM, a critical factor for achieving high TFLOPS efficiency on modern accelerator hardware.

Addressing the $O(N^2)$ complexity endemic to many pairwise interaction models is key to realizing truly large-scale simulations. Unlike methods that rely on computationally expensive fast multipole method (FMM) pre-processing, E2Former-V2 integrates geometric filtering directly into the attention mechanism. This allows the model to apply interaction cutoff schemes dynamically during inference. By focusing computational effort only on atoms within a defined interaction radius, the network achieves near-linear scaling, extending its reach to systems containing thousands of atoms while maintaining prediction fidelity.
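The scaling benefit of a fixed interaction radius can be sketched with a cell list, a standard neighbor-search technique. The article does not say how E2Former-V2 builds its neighborhoods, so the functions, atom count, box size, and cutoff below are all assumptions chosen for illustration. The point is only that binning atoms into cubes of side `cutoff` replaces the all-pairs test with comparisons against 27 nearby cells, which is close to linear in the number of atoms at roughly uniform density.

```python
import math
import random
from collections import defaultdict
from itertools import product

def neighbors_brute_force(positions, cutoff):
    """O(N^2) reference: test every pair of atoms."""
    pairs = set()
    for i in range(len(positions)):
        for j in range(i + 1, len(positions)):
            if math.dist(positions[i], positions[j]) <= cutoff:
                pairs.add((i, j))
    return pairs

def neighbors_cell_list(positions, cutoff):
    """Bin atoms into cubes of side `cutoff`, then compare only adjacent cubes."""
    cells = defaultdict(list)
    for idx, p in enumerate(positions):
        cells[tuple(math.floor(c / cutoff) for c in p)].append(idx)
    pairs = set()
    for cell, members in cells.items():
        for offset in product((-1, 0, 1), repeat=3):
            other = tuple(c + o for c, o in zip(cell, offset))
            for i in members:
                # .get avoids creating empty cells while iterating
                for j in cells.get(other, ()):
                    if i < j and math.dist(positions[i], positions[j]) <= cutoff:
                        pairs.add((i, j))
    return pairs

# Illustrative system: 200 random atoms in a 10 x 10 x 10 box, cutoff 1.5.
rng = random.Random(0)
positions = [tuple(rng.uniform(0.0, 10.0) for _ in range(3)) for _ in range(200)]
cutoff = 1.5

fast = neighbors_cell_list(positions, cutoff)
slow = neighbors_brute_force(positions, cutoff)
print(fast == slow, len(fast))
```

Two atoms within the cutoff can differ by at most one cell index along each axis, which is why checking the 27 surrounding cells is sufficient to recover every pair the brute-force search finds.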

From an algorithmic perspective, the integration of the $\text{SO}(2)$ rotational basis within the Equivariant Axis-Aligned Sparsification (EAAS) provides a method for feature compression without losing directional information critical for molecular geometry. This rotational equivariance is built into the architecture rather than learned from data, meaning the features the model learns are rotationally consistent by construction. This architectural choice grounds the framework in physical symmetries, moving beyond mere structural adaptation to embed those symmetries directly into the computational graph.
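Why aligning to an axis produces sparsity can be shown with a small, self-contained example. This is a sketch of the general idea behind SO(2)-style reductions, not the paper's EAAS implementation, and the helper names are hypothetical. It rotates an edge vector onto the z-axis using Rodrigues' formula and evaluates the real degree-1 spherical harmonics, which for a unit vector are proportional to (y, z, x). After alignment only the m = 0 component remains nonzero; the same holds for higher degrees, since spherical harmonics with m ≠ 0 vanish along the z-axis.

```python
import math

def rotation_to_z(r):
    """Return a 3x3 rotation (nested lists) that maps r/|r| to (0, 0, 1)."""
    norm = math.sqrt(sum(c * c for c in r))
    ux, uy, uz = (c / norm for c in r)
    if uz < -1.0 + 1e-12:
        # Antiparallel case: a half-turn about the x-axis.
        return [[1.0, 0.0, 0.0], [0.0, -1.0, 0.0], [0.0, 0.0, -1.0]]
    # Cross-product matrix of w = u x z = (uy, -ux, 0).
    k = [[0.0, 0.0, -ux], [0.0, 0.0, -uy], [ux, uy, 0.0]]
    k2 = [[sum(k[a][c] * k[c][b] for c in range(3)) for b in range(3)]
          for a in range(3)]
    f = 1.0 / (1.0 + uz)
    # Rodrigues' formula: R = I + K + K^2 / (1 + cos(angle)).
    return [[(1.0 if a == b else 0.0) + k[a][b] + f * k2[a][b]
             for b in range(3)] for a in range(3)]

def apply(rot, vec):
    """Matrix-vector product for nested-list matrices."""
    return [sum(rot[a][b] * vec[b] for b in range(3)) for a in range(3)]

def real_sh_l1(r):
    """Real degree-1 spherical harmonics of r's direction, ordered m = -1, 0, +1."""
    norm = math.sqrt(sum(c * c for c in r))
    x, y, z = (c / norm for c in r)
    c1 = math.sqrt(3.0 / (4.0 * math.pi))
    return [c1 * y, c1 * z, c1 * x]

edge = [1.0, -2.0, 0.5]                      # arbitrary edge vector (illustrative)
aligned = apply(rotation_to_z(edge), edge)   # same length, now along +z

sh_general = real_sh_l1(edge)     # all three components nonzero
sh_aligned = real_sh_l1(aligned)  # only the middle (m = 0) component survives
print(sh_general)
print(sh_aligned)
```

With most components identically zero in the aligned frame, the tensor products that would otherwise be dense reduce to far fewer nonzero terms, which is the kind of saving the convolution-stage speedup refers to.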