The pursuit of genuinely autonomous research agents receives a significant boost from Tongyi DeepResearch, a new system designed to tackle complex, long-horizon information-seeking tasks. Baixuan Li, Bo Zhang, and Dingchu Zhang, leading the Tongyi DeepResearch Team, present a framework that trains agents end to end, combining agentic mid-training and post-training to enable scalable reasoning and information retrieval. The system distinguishes itself through a fully automatic data synthesis pipeline that eliminates the need for expensive human annotation, and it achieves state-of-the-art performance on challenging benchmarks including Humanity’s Last Exam and WebWalkerQA. With 30.5 billion total parameters, of which only 3.3 billion are activated per token, Tongyi DeepResearch represents a substantial advance in the field, and its open-source release promises to accelerate further innovation in autonomous research capabilities.
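Activating only a small slice of the weights per token is the behavior of a sparse mixture-of-experts (MoE) model, where a router sends each token to a few expert sub-networks. The article does not give the expert count or routing details, so the short sketch below only works through the arithmetic implied by the two quoted figures; the names `active_fraction`, `TOTAL_PARAMS_B`, and `ACTIVE_PARAMS_B` are illustrative.

```python
# Parameter counts quoted in the article, in billions.
TOTAL_PARAMS_B = 30.5
ACTIVE_PARAMS_B = 3.3


def active_fraction(total_b: float, active_b: float) -> float:
    """Return the share of all parameters that take part in one token's forward pass."""
    if not 0 < active_b <= total_b:
        raise ValueError("active parameters must be positive and no larger than the total")
    return active_b / total_b


frac = active_fraction(TOTAL_PARAMS_B, ACTIVE_PARAMS_B)
print(f"{frac:.1%} of parameters active per token")  # 10.8% of parameters active per token
print(f"{TOTAL_PARAMS_B / ACTIVE_PARAMS_B:.1f}x more total than active parameters")  # 9.2x ...
```

Since per-token compute in such a model scales roughly with the active parameters, this ratio is a rough indication of why the model is cheap to run relative to its total size.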

The team designed a highly scalable data synthesis pipeline, fully automatic and reducing the need for costly human annotation, which powers all training stages. By constructing customized environments for each stage, the system ensures stable and consistent interactions throughout. 5 billion total parameters, efficiently activates only 3. The evaluation prompts align with those used in the original papers for each benchmark to ensure reproducibility, with manual evaluation for mathematical problems and SimpleQA for knowledge-based problems. The system utilizes tools for search, webpage parsing, Python code execution, and access to academic publications and file analysis. It is tested with high-quality, high-uncertainty questions, PhD-level research questions, mathematical problems, and knowledge-based problems. 5 billion parameter model, efficiently utilizing only 3. On the newly released xbench-DeepSearch-2510 benchmark, the system achieved performance just below ChatGPT-5-Pro, demonstrating competitive results. Detailed analysis reveals an average performance of 32. 7% on BrowseComp-ZH. The system also achieved 70. 9% on xbench-DeepSearch, 75. 0% on WebWalkerQA, and 90.

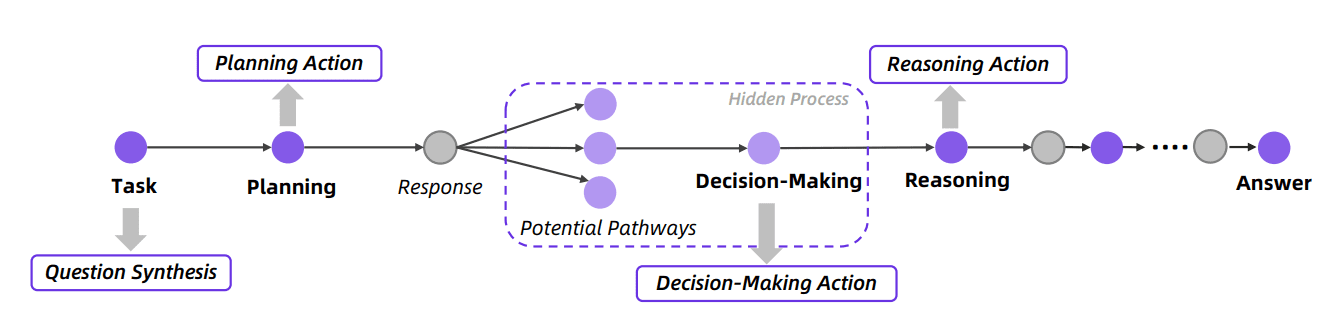

6% on FRAMES, demonstrating strong generalization across both English and Chinese tasks. Researchers introduced a “Heavy Mode” leveraging test-time scaling through a Research-Synthesis framework, utilizing parallel agents exploring diverse solution paths and intelligently aggregating their findings. Results show that the Heavy Mode achieves 38. This achievement stems from a novel training framework combining agentic mid-training and post-training, alongside a highly scalable data synthesis pipeline that reduces reliance on costly human annotation. A key innovation lies in the extensive use of synthetically generated data, which addresses the scarcity of high-quality, verifiable data required for training deep research agents.
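The Heavy Mode idea (several independent agents working in parallel, followed by an aggregation step) can be sketched as below. This is a minimal illustration under stated assumptions, not the team's implementation: `run_research_agent`, `synthesize`, `heavy_mode`, and `num_agents` are hypothetical names, each "agent" is a stub that picks an answer from a seeded random generator, and a simple majority vote stands in for the aggregation step the article describes.

```python
import random
from collections import Counter
from concurrent.futures import ThreadPoolExecutor


def run_research_agent(question: str, seed: int) -> str:
    """Hypothetical stand-in for one research agent.

    A real agent would search, browse, and reason; here each seed just picks a
    candidate answer so that the aggregation logic can be exercised.
    """
    rng = random.Random(seed)
    return rng.choice(["answer A", "answer A", "answer B"])


def synthesize(findings: list[str]) -> str:
    """Aggregate parallel findings by majority vote.

    This is a placeholder for a richer synthesis step; ties go to the answer
    seen first, because Counter.most_common orders equal counts by first occurrence.
    """
    return Counter(findings).most_common(1)[0][0]


def heavy_mode(question: str, num_agents: int = 8) -> str:
    # Run independent agents concurrently, each with its own seed, so that
    # they explore different solution paths before aggregation.
    with ThreadPoolExecutor(max_workers=num_agents) as pool:
        findings = list(pool.map(lambda s: run_research_agent(question, s), range(num_agents)))
    return synthesize(findings)


if __name__ == "__main__":
    print(heavy_mode("Example research question"))
```

Keeping the agents independent until the final step is what makes the approach a form of test-time scaling: spending more compute means raising `num_agents`, without changing any individual agent.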

By leveraging large language models to create research-level questions and agent behaviors, the team established a data flywheel that iteratively improves the agent’s reasoning and planning abilities. Furthermore, the system benefits from carefully designed training environments that promote stable learning and reduce the costs associated with real-world API interactions. The authors acknowledge limitations related to the potential biases present in the synthetic data and the challenges of fully replicating the complexity of real-world research scenarios. Future work will likely focus on mitigating these biases and exploring methods for incorporating more nuanced real-world feedback into the training process, ultimately enhancing the agent’s ability to conduct truly impactful research.
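A data flywheel of this kind can be pictured as a loop: synthesize questions whose answers are known by construction, let the current agent attempt them, keep only the attempts that verify as correct, and train on what was kept. The toy sketch below assumes simple arithmetic questions in place of research-level ones, and a placeholder accuracy update in place of real training; `synthesize_questions`, `agent_answer`, `flywheel`, and `Sample` are all hypothetical names.

```python
import random
from dataclasses import dataclass


@dataclass
class Sample:
    question: str
    reference: int   # answer known at synthesis time, so it is automatically verifiable
    prediction: int


def synthesize_questions(n: int, rng: random.Random) -> list[tuple[str, int]]:
    # Hypothetical generator: answers are known by construction, so no human labels are needed.
    pairs = []
    for _ in range(n):
        a, b = rng.randint(1, 99), rng.randint(1, 99)
        pairs.append((f"What is {a} + {b}?", a + b))
    return pairs


def agent_answer(reference: int, accuracy: float, rng: random.Random) -> int:
    # Toy agent: correct with probability `accuracy`, otherwise off by one.
    return reference if rng.random() < accuracy else reference + 1


def flywheel(rounds: int = 3, per_round: int = 100, seed: int = 0) -> tuple[list[Sample], float]:
    rng = random.Random(seed)
    accuracy = 0.5
    training_set: list[Sample] = []
    for r in range(rounds):
        kept = []
        for question, reference in synthesize_questions(per_round, rng):
            prediction = agent_answer(reference, accuracy, rng)
            if prediction == reference:  # keep only verified-correct attempts
                kept.append(Sample(question, reference, prediction))
        training_set.extend(kept)
        # Placeholder "training" step: more verified data nudges the agent's accuracy upward.
        accuracy = min(0.95, accuracy + 0.1 * len(kept) / per_round)
        print(f"round {r}: kept {len(kept)}/{per_round}, accuracy -> {accuracy:.2f}")
    return training_set, accuracy


if __name__ == "__main__":
    flywheel()
```

The verification filter is the essential step: because every synthetic question carries its own answer, the loop can grow its training set without annotation, and a stronger agent in turn passes the filter more often.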

👉 More information

🗞 Tongyi DeepResearch Technical Report

🧠 ArXiv: https://arxiv.org/abs/2510.24701