Researchers are increasingly seeking to understand the fundamental principles governing the behaviour of deep neural networks. W. A. Zúñiga-Galindo, working independently, presents a rigorous mathematical framework for analysing the ‘critical organisation’ within these complex systems: the point at which a network transitions from a single, stable state to a multitude of possibilities. The study connects the thermodynamic limits of both standard and recurrent neural networks with the abstract mathematics of p-adic statistical field theories, using p-adic integers to model hierarchical network structures. By demonstrating this link, and illustrating it with a hierarchical edge detector model exhibiting strange attractor dynamics, the work offers a novel perspective on network stability and could pave the way for designing more robust and adaptable artificial intelligence systems. Furthermore, the analysis of random neural networks reveals a power-type expansion for output probability distributions whose constant term is Gaussian, providing valuable insight into network predictability.

Network State Bifurcation in Parameter Space

Modeling Neural Network Topology using p-Adic Integers

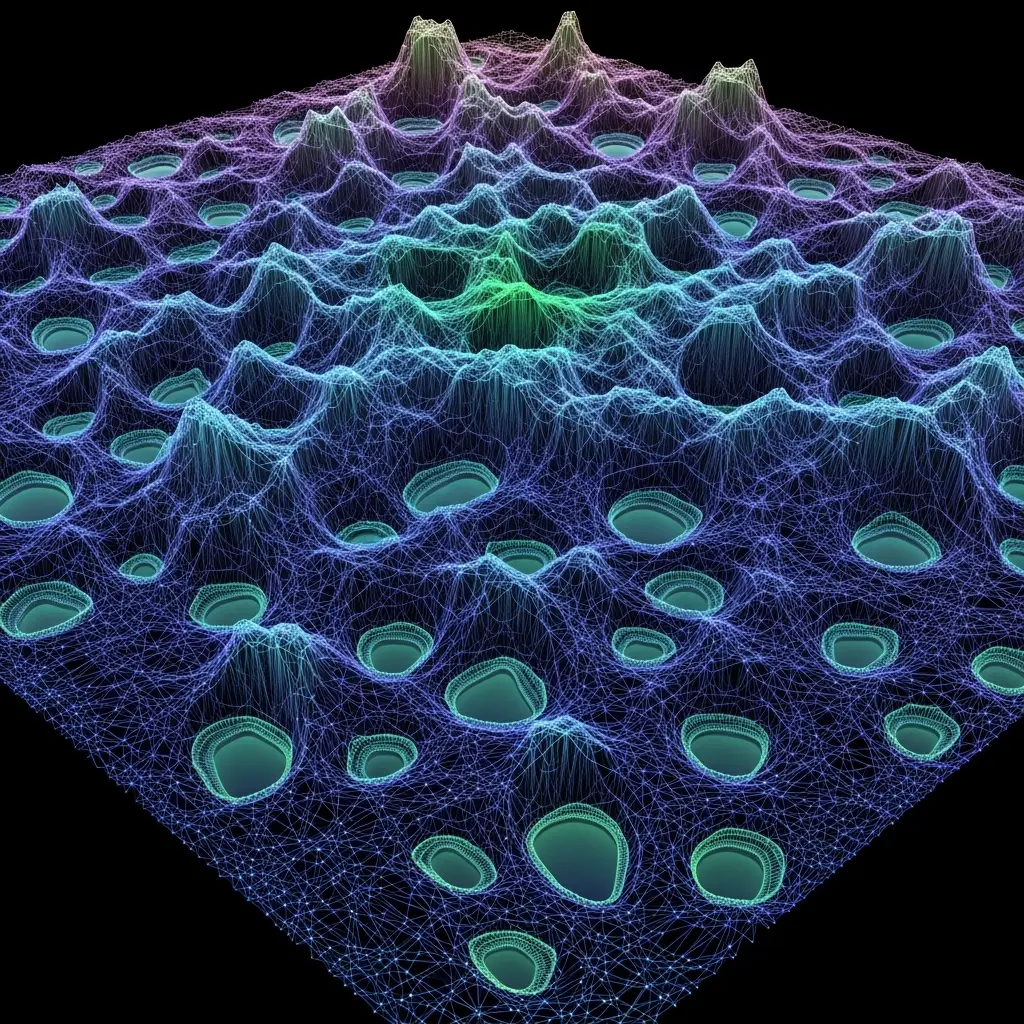

The study rigorously examines the thermodynamic limit of deep neural networks (DNNs) and recurrent neural networks (RNNs) with sigmoid activation functions. The thermodynamic limit is a continuous neural network in which the neurons form a continuum with infinitely many points, which allows network behaviour to be analysed at scale. The research demonstrates that such a network admits a unique state within a specific region of its parameter space, and that this state undergoes a dramatic transition outside this region. Specifically, the unique state bifurcates into an infinite number of states. This marks the critical organization, where the network’s behaviour changes from a single, stable state to a multitude of possibilities.

This is achieved by using p-adic integers to codify the hierarchical structures inherent in DNNs and RNNs, with an algorithm that recasts these topologies as p-adic tree-like structures. The approach connects the hierarchical organization of the network with its critical organization, providing a novel framework for understanding complex network dynamics. Rigorous study of a toy model, a hierarchical edge detector for grayscale images based on p-adic cellular neural networks, reveals that the critical organization manifests as a strange attractor, suggesting chaotic yet bounded behaviour. Furthermore, the research extends to random versions of DNNs and RNNs, where parameters are defined as generalized Gaussian random variables within a space of square-integrable functions.
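The link between p-adic integers and tree-like structures can be illustrated with a short sketch. It is not the paper's recasting algorithm, which this summary does not spell out. It only shows the standard fact that integers truncated to `depth` base-p digits label the leaves of a rooted p-ary tree, and that the p-adic distance between two labels records how long their root-to-leaf paths stay together. The function names are hypothetical and chosen for illustration.

```python
def padic_digits(n, p, depth):
    """First `depth` base-p digits of n, lowest order first.

    Read as a path from the root of a p-ary tree: digit k picks the
    branch taken at level k.
    """
    digits = []
    for _ in range(depth):
        digits.append(n % p)
        n //= p
    return digits


def common_depth(x, y, p):
    """p-adic valuation of x - y: the number of low-order digits x and y share."""
    diff = abs(x - y)
    if diff == 0:
        raise ValueError("the valuation of 0 is infinite")
    v = 0
    while diff % p == 0:
        diff //= p
        v += 1
    return v


def padic_distance(x, y, p):
    """p-adic distance |x - y|_p = p ** (-valuation of x - y)."""
    if x == y:
        return 0.0
    return float(p) ** (-common_depth(x, y, p))


# Assumed toy setting: p = 2 and depth 3, so the labels 0..7 are the 8 leaves.
P, DEPTH = 2, 3
print(padic_digits(1, P, DEPTH))   # path of leaf 1
print(padic_digits(5, P, DEPTH))   # path of leaf 5, same first two branches
print(padic_distance(1, 5, P))     # small distance: paths split late
print(padic_distance(1, 6, P))     # large distance: paths split at the root
```

Leaves that share a long common path are close in the p-adic sense, so the distance is an ultrametric: for any three leaves, the largest two of the three pairwise distances are equal. This is the property that makes p-adic integers a natural label set for hierarchies.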

The analysis shows that the probability distribution of the network’s output, given a specific input in the infinite-width case, admits a power-type expansion whose constant term is a Gaussian distribution. This finding provides insights into the statistical properties of these networks and their ability to generalize from limited data. The work establishes a link between the network’s architecture, its critical behaviour, and the underlying mathematical framework of p-adic analysis, opening avenues for designing more robust and efficient neural networks. The research advances the theoretical understanding of DNNs and RNNs and also offers potential for developing novel algorithms and architectures inspired by the principles of p-adic statistical field theories.
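The Gaussian leading term can be made plausible with a small Monte Carlo sketch. Everything in it is an assumption made for illustration and is not taken from the paper: a finite one-hidden-layer network with `tanh` activation, independent standard normal weights, and a `1/sqrt(width)` output scaling. The sketch draws many random networks, evaluates them at one fixed input, and measures how far the output distribution is from Gaussian through its excess kurtosis (which is zero for a Gaussian).

```python
import math
import random


def random_network_output(x, width, rng):
    """One draw of f(x) = width**-0.5 * sum_i v_i * tanh(w_i * x).

    The weights v_i and w_i are independent N(0, 1) samples (an assumption
    of this sketch, not the paper's generalized Gaussian setting).
    """
    total = 0.0
    for _ in range(width):
        w = rng.gauss(0.0, 1.0)
        v = rng.gauss(0.0, 1.0)
        total += v * math.tanh(w * x)
    return total / math.sqrt(width)


def excess_kurtosis(samples):
    """Sample excess kurtosis: fourth central moment / variance**2 - 3."""
    n = len(samples)
    mean = sum(samples) / n
    m2 = sum((s - mean) ** 2 for s in samples) / n
    m4 = sum((s - mean) ** 4 for s in samples) / n
    return m4 / (m2 * m2) - 3.0


rng = random.Random(0)          # fixed seed so the run is repeatable
N_DRAWS = 8000
narrow = [random_network_output(1.0, 1, rng) for _ in range(N_DRAWS)]
wide = [random_network_output(1.0, 50, rng) for _ in range(N_DRAWS)]

kurt_narrow = excess_kurtosis(narrow)
kurt_wide = excess_kurtosis(wide)
print("excess kurtosis, width 1: ", kurt_narrow)
print("excess kurtosis, width 50:", kurt_wide)
```

The width-1 network has a clearly non-Gaussian output, while the width-50 network is close to Gaussian. This is consistent with a Gaussian constant term plus corrections that shrink as the width grows, but the sketch does not reproduce the paper's expansion itself.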

Unique States and Bifurcations in Neural Networks

The study investigates the thermodynamic limit of deep neural networks (DNNs) and recurrent neural networks (RNNs) with sigmoid activation functions, in order to identify the parameter regions in which a unique state exists. It examines continuous neural networks, where neurons form a continuum with infinitely many points, and demonstrates that such networks admit a unique state when the Lipschitz constant of the activation function, Lφ, multiplied by the norm of the weights, ∥W∥2, is less than 1. A bifurcation occurs when Lφ∥W∥2 exceeds 1, signifying a transition from a unique state to an infinite number of states, which is the critical organization point in the parameter space. To codify hierarchical structures, the study introduces an algorithm that recasts DNN and RNN topologies as p-adic tree-like structures, using p-adic integers.
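The role of the condition Lφ∥W∥2 < 1 can be seen in a finite-dimensional analogue, sketched below under stated assumptions. The state equation x = φ(Wx + b), the choice φ = tanh (a sigmoid-type function with Lipschitz constant 1), and the small example matrices are all illustrative and are not the paper's continuous model. The sketch uses the Frobenius norm, which is an upper bound on the spectral norm ∥W∥2. When the product is below 1 the update map is a contraction, so by the Banach fixed-point theorem every starting point converges to the same state.

```python
import math


def frobenius_norm(W):
    """Frobenius norm of W; it is always >= the spectral norm ||W||_2."""
    return math.sqrt(sum(w * w for row in W for w in row))


def step(W, b, x):
    """One update x -> tanh(W x + b), applied componentwise."""
    return [
        math.tanh(sum(w_ij * x_j for w_ij, x_j in zip(row, x)) + b_i)
        for row, b_i in zip(W, b)
    ]


def iterate(W, b, x0, n_steps=200):
    """Apply the update n_steps times starting from x0."""
    x = list(x0)
    for _ in range(n_steps):
        x = step(W, b, x)
    return x


def gap(x, y):
    """Largest componentwise difference between two states."""
    return max(abs(u - v) for u, v in zip(x, y))


L_PHI = 1.0  # Lipschitz constant of tanh

# Subcritical example: L_PHI * ||W||_F < 1, so the map is a contraction.
W_sub = [[0.3, -0.2], [0.1, 0.4]]
b_sub = [0.1, -0.05]
bound_sub = L_PHI * frobenius_norm(W_sub)
gap_sub = gap(iterate(W_sub, b_sub, [0.9, -0.9]),
              iterate(W_sub, b_sub, [-0.9, 0.9]))

# Supercritical example: W = 2 * identity has spectral norm 2 > 1.
W_sup = [[2.0, 0.0], [0.0, 2.0]]
b_sup = [0.0, 0.0]
gap_sup = gap(iterate(W_sup, b_sup, [0.5, 0.5]),
              iterate(W_sup, b_sup, [-0.5, -0.5]))

print("subcritical bound:", bound_sub, "gap between runs:", gap_sub)
print("supercritical gap between runs:", gap_sup)
```

In the subcritical case both runs end at the same state. In the supercritical case the two runs settle on different states, so uniqueness is lost. A finite network like this one only shows a handful of coexisting states; the infinite family of states described above belongs to the continuum limit studied in the paper.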

Applying Framework to Hierarchical Edge Detection Models

This approach connects the hierarchical and critical organizations, allowing a rigorous study of the critical organization of a toy model: a hierarchical edge detector for grayscale images based on p-adic cellular neural networks. The critical organization of this network is characterised as a strange attractor, revealing complex dynamical behaviour. The analysis is then extended to random DNNs and RNNs, with parameters modelled as generalized Gaussian random variables within a space of square-integrable functions. In this framework, the interactions between neurons across layers are defined in a way that reduces the study of DNNs to the p-adic domain.

P-adic Networks Reveal Stability and Bifurcation

Establishing Connections between Limits and p-adic Numbers

Researchers have demonstrated a rigorous connection between the thermodynamic limit of deep neural networks (DNNs) and recurrent neural networks (RNNs), utilising p-adic numbers to model hierarchical structures within these networks. The study establishes that in a specific parameter region, these networks possess a unique stable state, but this stability breaks down beyond that region, transitioning into an infinite number of states, a critical organisation representing a bifurcation in the parameter space. A novel algorithm was presented, successfully recasting the hierarchical topologies of DNNs and RNNs into p-adic tree-like structures, thereby linking hierarchical and critical organisations. Furthermore, the investigation explored the critical organisation of a simplified model, a hierarchical edge detector based on p-adic cellular neural networks, revealing its behaviour as a strange attractor.

Random versions of DNNs and RNNs were also examined, calculating the probability distribution of outputs for infinite-width networks and finding a power-type expansion with a Gaussian constant term. The authors acknowledge a limitation in the scope of their model, focusing on sigmoid activation functions and specific network architectures, which may not fully capture the complexity of all neural networks. Future research could extend these findings to other activation functions and network types, potentially exploring the implications of these p-adic structures for network robustness and generalisation capabilities. These findings offer a novel mathematical framework for understanding the behaviour of deep learning models, suggesting that p-adic numbers can effectively represent and analyse the hierarchical organisation inherent in these networks. The identification of a critical organisation, marked by a transition from a unique to an infinite number of states, provides insights into the conditions under which network behaviour becomes unstable or unpredictable. While the current work focuses on theoretical foundations, it lays the groundwork for potentially developing more robust and interpretable neural network architectures, and offers a new lens through which to view the complex dynamics of deep learning.

🗞 Critical Organization of Deep Neural Networks, and p-Adic Statistical Field Theories

🧠 ArXiv: https://arxiv.org/abs/2601.19070