Researchers are tackling the considerable communication costs and data heterogeneity that plague federated graph neural networks (FGNNs), a promising approach to learning from decentralised, complex data. Xiuling Wang, Xin Huang, and Haibo Hu, from Hong Kong Baptist University, alongside Jianliang Xu, present a new paradigm, CeFGC, which cuts server-client communication to just three rounds by employing generative diffusion models. Instead of sharing raw data or exchanging parameter updates round after round, clients share generative models. Other clients then use these models to create synthetic data that augments local training and aligns local objectives with the global one. By minimising client-server exchanges, CeFGC not only accelerates FGNN training but also improves performance on non-IID datasets, a significant step towards practical, scalable federated graph learning.

CeFGC cuts federated graph learning communication with diffusion models

Scientists have demonstrated a new approach to federated learning for graph neural networks that trains efficiently with greatly reduced communication overhead. Researchers introduced CeFGC, a federated learning paradigm that limits server-client communication to just three rounds, addressing key challenges in training on decentralised graph data. The study tackles both the high communication cost and the non-IID data that typically plague federated graph neural networks, paving the way for more practical and scalable applications. CeFGC leverages generative diffusion models to minimise direct communication, enabling clients to generate synthetic graphs that augment their local datasets and improve model performance.

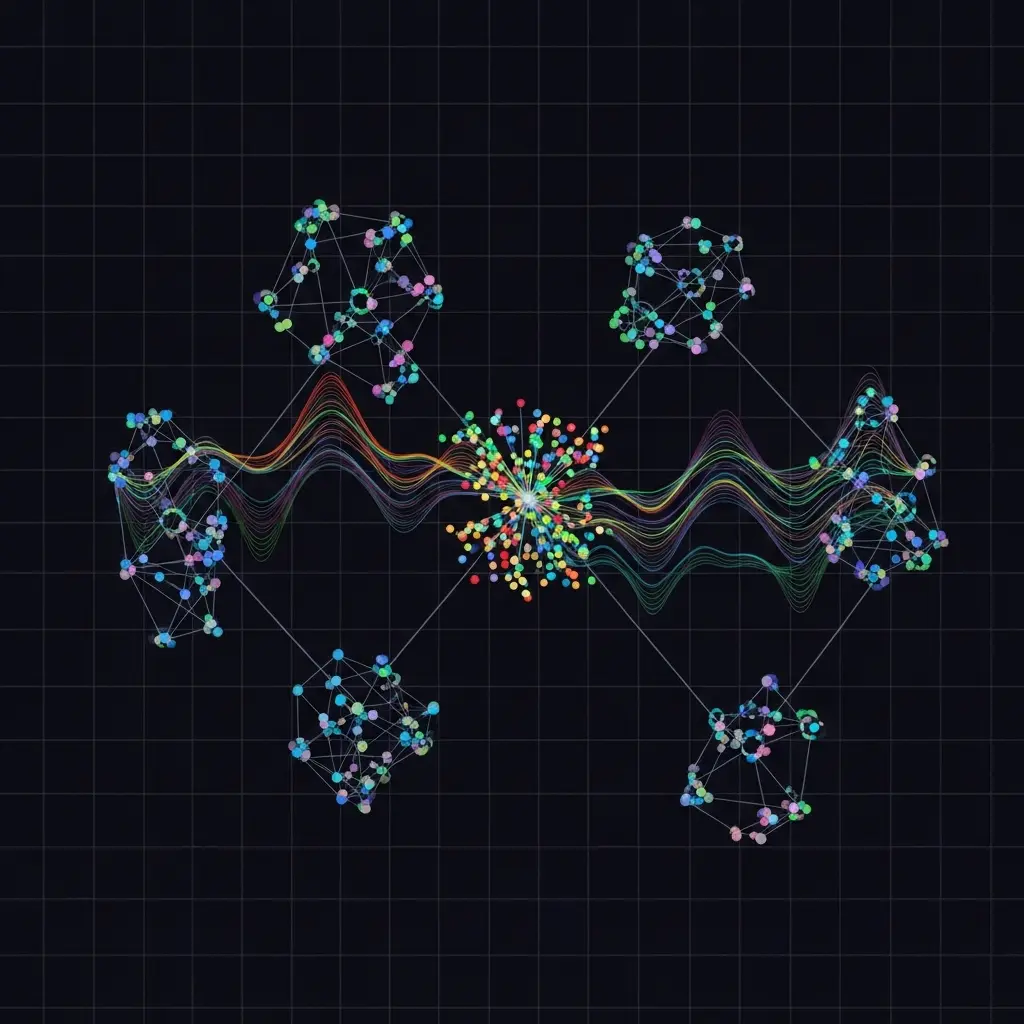

The core of the method is that each client trains a generative diffusion model to capture the distribution of its local graph data and then shares this model with a central server. The server redistributes these generative models, alongside an initialised graph neural network, back to the clients. Each client then trains a local graph neural network on a combination of its real data and synthetic data sampled from the shared generators, and uploads the trained model to the server for aggregation into a global model. This design bounds communication at a constant three rounds, a significant improvement over existing methods that exchange parameters over many rounds.
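The three exchanges can be illustrated with a deliberately tiny simulation. This is a toy sketch, not the authors' implementation. Each client's "graphs" are replaced by plain numbers, and the diffusion model is replaced by a fitted Gaussian. The local "GNN" is just the mean of the training set, and aggregation is a simple average. The names `fit_generator`, `sample`, and `run_three_round_protocol` are hypothetical. The sketch shows where the three communication rounds fall and how augmenting with every client's synthetic data pulls non-IID local models towards a common target.

```python
import random
import statistics

def fit_generator(data):
    # Toy stand-in for training a diffusion model: fit a Gaussian to local data.
    return statistics.mean(data), statistics.stdev(data)

def sample(generator, n, rng):
    # Toy stand-in for sampling synthetic graphs from a shared generator.
    mu, sigma = generator
    return [rng.gauss(mu, sigma) for _ in range(n)]

def run_three_round_protocol(client_data, n_synthetic=200, seed=0):
    rng = random.Random(seed)
    rounds = 0

    # Round 1 (client -> server): upload generative models, never raw data.
    generators = [fit_generator(d) for d in client_data]
    rounds += 1

    # Round 2 (server -> clients): broadcast every generator to every client.
    # (The described protocol also sends an initialised GNN; this toy model needs none.)
    broadcast = list(generators)
    rounds += 1

    # Local training, no communication: augment real data with synthetic
    # samples from all generators, then "train" (here: take the mean).
    local_models = []
    for data in client_data:
        augmented = list(data)
        for gen in broadcast:
            augmented.extend(sample(gen, n_synthetic, rng))
        local_models.append(statistics.mean(augmented))

    # Round 3 (client -> server): upload local models; the server averages them.
    rounds += 1
    global_model = statistics.mean(local_models)
    return rounds, local_models, global_model

# Three deliberately non-IID clients with very different local data.
clients = [[1.0, 1.2, 0.9, 1.1], [5.0, 5.3, 4.8, 5.1], [9.0, 9.2, 8.9, 9.1]]
rounds, local_models, global_model = run_three_round_protocol(clients)
print(rounds, [round(m, 2) for m in local_models], round(global_model, 2))
```

The raw client means are 1.05, 5.05, and 9.05. After augmentation, all three local models land close to 5, which mirrors, in miniature, the article's point about aligning local and global objectives.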

This research establishes a clear pathway past the limitations of traditional federated graph neural networks, particularly in scenarios with bandwidth constraints or offline clients. The team achieved superior performance on non-IID graphs by aligning local and global model objectives and enriching the training set with diverse synthetic data. Theoretical analysis confirms that CeFGC keeps the number of communication rounds at a constant three, so the I/O complexity of its communication volume no longer grows with a long sequence of training rounds. Extensive experiments on real-world graph datasets validate the effectiveness and efficiency of CeFGC against state-of-the-art competitors, highlighting its potential for applications such as recommender systems, social network analysis, and biological network analysis. The work also opens up possibilities for more privacy-preserving and scalable graph learning, since fewer exchanges lessen the risk of data leakage and make training on larger, more distributed datasets feasible. By combining generative diffusion models with federated learning, the researchers have improved both the efficiency of graph neural network training and the robustness and generalisation of the resulting models.

Three-Round Federated Learning via Graph Diffusion

Scientists developed CeFGC, a novel Federated Graph Neural Network (FGNN) paradigm designed to efficiently train GNNs across non-IID data using only three rounds of communication between the server and clients. The study pioneered a method leveraging generative diffusion models to minimise direct client-server exchanges, addressing key challenges in federated learning such as high communication overhead and non-IID data characteristics. Each client initially trains a generative diffusion model, specifically a Graph Generative Diffusion Model (GGDM), on its local graph data to capture the unique distribution present within that dataset. This locally trained GGDM is then transmitted to the server, constituting the first communication round of the process.
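The article does not specify how the GGDM corrupts and denoises graphs. As an illustration only, the sketch below shows the forward (noising) step of a standard continuous denoising diffusion model (DDPM-style) applied to an adjacency matrix. The real GGDM may use a different, for example discrete, formulation and noise schedule. The linear schedule values and the functions `linear_alpha_bar` and `noisy_adjacency` are assumptions chosen for this sketch. The noise is made symmetric so the noisy graph stays undirected.

```python
import numpy as np

def linear_alpha_bar(num_steps=1000, beta_start=1e-4, beta_end=0.02):
    # alpha_bar_t = prod_{s<=t} (1 - beta_s) for an assumed linear beta schedule.
    betas = np.linspace(beta_start, beta_end, num_steps)
    return np.cumprod(1.0 - betas)

def noisy_adjacency(adj, t, alpha_bar, rng):
    """Sample A_t = sqrt(abar_t) * A_0 + sqrt(1 - abar_t) * eps with symmetric eps."""
    eps = np.triu(rng.standard_normal(adj.shape), k=1)
    eps = eps + eps.T  # symmetric, zero-diagonal noise keeps the graph undirected
    a = alpha_bar[t]
    return np.sqrt(a) * adj + np.sqrt(1.0 - a) * eps, eps

# A 4-node cycle as the clean graph A_0.
adj = np.array([[0, 1, 0, 1],
                [1, 0, 1, 0],
                [0, 1, 0, 1],
                [1, 0, 1, 0]], dtype=float)
alpha_bar = linear_alpha_bar()
rng = np.random.default_rng(0)
a_early, _ = noisy_adjacency(adj, 10, alpha_bar, rng)       # still close to A_0
a_late, eps_late = noisy_adjacency(adj, 999, alpha_bar, rng)  # almost pure noise
# A denoising network (omitted here) would be trained to predict eps_late
# from (a_late, t), typically with a mean-squared-error loss.
```

Sharing the trained denoiser, rather than the graphs themselves, is what lets other clients sample new graphs resembling this client's data.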

In the second communication round, the server redistributes the received GGDMs to all participating clients, enabling each client to generate synthetic graphs that reflect the collective data distribution. Clients then combine these synthetic graphs with their original local graphs, augmenting their training datasets with diverse graph structures. Each client trains a local GNN on this augmented dataset, improving generalisation and performance on non-IID data. Finally, in the third and last communication round, clients upload their trained GNN weights to the server, which aggregates them into a global GNN that benefits from the combined knowledge of all clients.
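The article does not name the aggregation rule used in the third round. A common choice in federated learning is FedAvg-style weighted averaging, where each client's parameters are weighted by the size of its training set. The sketch below assumes that rule and assumes that real and synthetic graphs are simply pooled. The functions `augment` and `fedavg` and the parameter names `"W"` and `"b"` are hypothetical.

```python
import numpy as np

def augment(real_graphs, synthetic_graphs):
    # Pool real and synthetic graphs into a single local training set.
    return list(real_graphs) + list(synthetic_graphs)

def fedavg(client_weights, client_sizes):
    """Average parameter dicts, weighting each client by its training-set size."""
    total = float(sum(client_sizes))
    return {
        name: sum(w[name] * (n / total) for w, n in zip(client_weights, client_sizes))
        for name in client_weights[0]
    }

# Hypothetical example: two clients with different augmented training-set sizes.
train_a = augment(["g1"], ["s1", "s2", "s3"])                       # 4 graphs
train_b = augment(["g2", "g3"], [f"syn{i}" for i in range(10)])     # 12 graphs
weights_a = {"W": np.array([[1.0, 2.0]]), "b": np.array([0.0])}
weights_b = {"W": np.array([[3.0, 6.0]]), "b": np.array([4.0])}
global_weights = fedavg([weights_a, weights_b], [len(train_a), len(train_b)])
```

With sizes 4 and 12, client B contributes three quarters of each averaged parameter. Whether CeFGC weights by real-only or augmented set size is not stated in the article.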

The research team theoretically analysed the I/O complexity of the communication volume, showing that CeFGC keeps communication at a constant three rounds. Experiments on several real graph datasets evaluated the effectiveness and efficiency of CeFGC against state-of-the-art competitors. The results consistently showed superior performance on non-IID graphs, achieved by aligning local and global model objectives and enriching the training set with diverse graphs. The approach therefore cuts communication costs substantially while maintaining high accuracy, making it suitable for bandwidth-constrained or offline clients, and it points towards scalable, privacy-preserving graph learning in federated settings.
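A back-of-the-envelope count makes the I/O argument concrete. The sketch below assumes that an iterative FGNN downloads and uploads one GNN per client in each of `T` rounds. It also assumes CeFGC's volume is one generator upload per client, one download of all `N` generators plus an initial GNN per client, and one GNN upload per client. The sizes are hypothetical parameter counts, and the paper's exact accounting may differ.

```python
def iterative_volume(num_clients, num_rounds, gnn_size):
    # Every round the server sends the global GNN to each client (download)
    # and each client sends its updated GNN back (upload).
    return num_clients * num_rounds * 2 * gnn_size

def three_round_volume(num_clients, gen_size, gnn_size):
    round1 = num_clients * gen_size                             # each client uploads its generator
    round2 = num_clients * (num_clients * gen_size + gnn_size)  # each client downloads all generators + initial GNN
    round3 = num_clients * gnn_size                             # each client uploads its trained GNN
    return round1 + round2 + round3

# Hypothetical sizes, counted in parameters.
N, T = 10, 200
gnn_size, gen_size = 100_000, 1_000_000
print(iterative_volume(N, T, gnn_size))            # 400000000
print(three_round_volume(N, gen_size, gnn_size))   # 112000000
```

The three-round volume does not depend on `T`, but its broadcast term grows with N² × generator size. The saving therefore depends on how large the generative models are relative to the GNN and on how many clients participate.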

Three-Round Federated Learning via Diffusion Models Enables Efficient GNN Training

Scientists have developed CeFGC, a novel Federated Graph Neural Network (FGNN) paradigm that achieves efficient GNN training over non-IID data with just three communication rounds between the server and clients. The core innovation is the use of generative diffusion models to minimise direct client-server communication. Each client trains a generative diffusion model that captures its local data distribution and shares it with the server, which redistributes it to all clients for data synthesis. The analysis shows that, because the number of rounds is fixed at three, CeFGC reduces the I/O complexity of its communication volume to a constant, a substantial reduction compared with existing methods.

The team measured the effectiveness of this approach on several real datasets, consistently achieving superior performance on non-IID data by aligning local and global model objectives and enriching the training set with diverse graphs. Results demonstrate that this alignment and enrichment significantly enhance the efficiency and effectiveness of graph classification, particularly when dealing with heterogeneous data distributions across clients. To address challenges related to the number of generative diffusion models required per client, researchers introduced a label channel into the training process. This innovative approach allows a single diffusion model to handle multiple data classes by varying the noise levels applied during training, reducing communication bandwidth and computational demands.
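The article gives only a high-level description of the label channel. One generic way to let a single generative model cover several classes is to append the class label as extra input channels, so that the model learns class-conditional distributions and can be asked for a specific class at sampling time. The sketch below shows that generic conditioning step with a one-hot label appended to every node's features. It is an assumption, not the paper's exact mechanism, and `add_label_channel` is a hypothetical name.

```python
import numpy as np

def add_label_channel(node_features, label, num_classes):
    """Append a one-hot class label to every node's feature vector."""
    if not 0 <= label < num_classes:
        raise ValueError("label must be in [0, num_classes)")
    num_nodes = node_features.shape[0]
    one_hot = np.zeros((num_nodes, num_classes), dtype=node_features.dtype)
    one_hot[:, label] = 1.0  # the same label channel is attached to every node
    return np.concatenate([node_features, one_hot], axis=1)

# A 3-node graph with 4 features per node, conditioned on class 2 of 5.
x = np.ones((3, 4))
conditioned = add_label_channel(x, label=2, num_classes=5)
```

With conditioning of this kind, each client uploads one model rather than one model per class, which is where the bandwidth and computation savings described above would come from.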

The team also implemented privacy-enhancing measures, including encrypted model sharing and random shuffling of models before distribution, to protect sensitive client data from inference or extraction. Tests show that common inference and poisoning attacks are ineffective against CeFGC, because these attacks rely on multiple communication rounds that the system does not have. The work makes two major contributions: a three-round communication framework, and the integration of generative diffusion models to improve performance on non-IID graphs. Comprehensive experiments in three non-IID settings across five real-world datasets consistently show CeFGC outperforming state-of-the-art baselines despite its minimal communication.
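The shuffling step is simple to picture. Before redistributing generative models, the server drops the link between each model and the client that sent it and returns the models in random order. A receiving client therefore cannot tell from position alone whose data a model was trained on. The sketch below shows only that step, with hypothetical names. The encryption layer mentioned above is not shown, and the server itself still knows the original mapping.

```python
import random

def shuffle_for_distribution(models_by_client, seed=None):
    """Return the received models in random order, without client identifiers."""
    models = list(models_by_client.values())  # drop the client -> model mapping
    random.Random(seed).shuffle(models)       # randomise order so position reveals nothing
    return models

received = {"client_a": "generator_a", "client_b": "generator_b", "client_c": "generator_c"}
to_send = shuffle_for_distribution(received, seed=42)
```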

Three-Round Federated Learning with Generative Diffusion Models

Scientists have developed CeFGC and CeFGC*, a new federated graph neural network (FGNN) paradigm designed to train models efficiently across decentralised, non-identically distributed data. By leveraging generative diffusion models, the approach limits communication between clients and a central server to just three rounds, far fewer than existing methods require. Each client trains a generative model to capture its local data characteristics and shares it with the server. Future work could explore adaptive strategies for training and distributing the generative models to further optimise communication efficiency and model accuracy, and could extend the framework to other distributed learning scenarios.

🗞 Communication-efficient Federated Graph Classification via Generative Diffusion Modeling

🧠 ArXiv: https://arxiv.org/abs/2601.15722