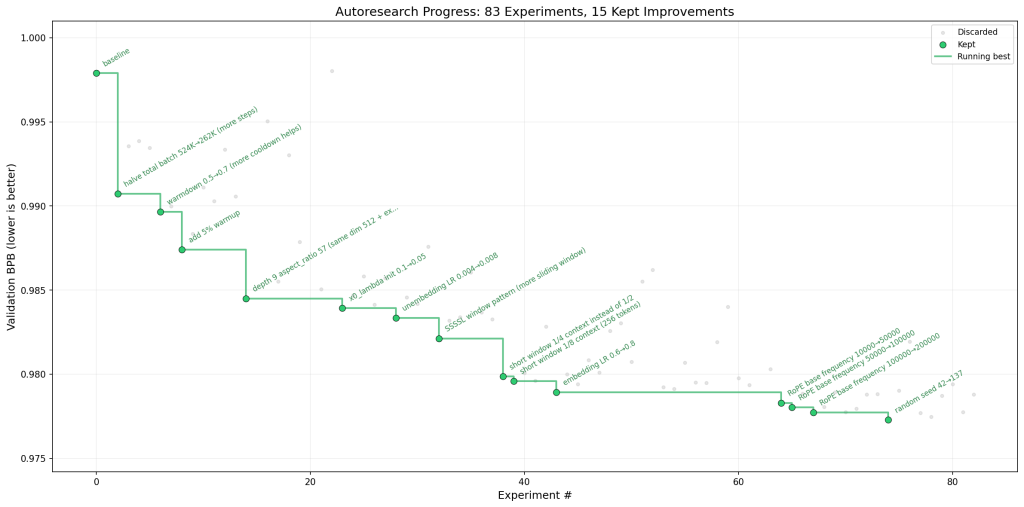

A new GitHub repository from Andrej Karpathy shows an AI agent iteratively improving its own training code, raising questions about the future of AI research and development. The “autoresearch” repository contains just three files that matter: a fixed file of data-preparation and runtime utilities (“prepare.py”), the training code the AI agent is allowed to edit (“train.py”), and a Markdown document of human instructions (“program.md”). The agent autonomously modifies the training code, runs a five-minute training session, evaluates the result, and repeats the process, all without human intervention. “One day, frontier AI research used to be done by meat computers… That era is long gone,” Karpathy writes in the README’s tongue-in-cheek, science-fiction-style preface, which also jokes that the code base is already in its 10,205th generation.

AI Agent Autonomous Research Loop with program.md

The recently released “autoresearch” repository offers a concrete look at fully autonomous AI research. Developed by Andrej Karpathy, the system lets an AI agent run the kind of experiment loop that was previously the job of human scientists. Its structure is remarkably simple: just three files govern the entire research process. The agent’s behavior is dictated not by complex code but by a plain-text Markdown document, “program.md,” which serves as the primary interface for directing the AI and outlines how it should approach research tasks. The agent then iteratively modifies the training code in “train.py,” launching five-minute training sessions to assess the impact of each change. This continuous loop of modification, training, and evaluation lets the system run “all night without you,” according to Karpathy.
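The loop described above behaves like greedy hill climbing: propose a change, train, measure val_bpb, keep the change if the score improves, and revert otherwise. The Python sketch below illustrates only that control flow, assuming the agent keeps a change exactly when val_bpb drops. Everything in it is hypothetical: `propose_change` randomly perturbs a config dict as a stand-in for the LLM agent editing train.py under program.md’s instructions, and `train_and_evaluate` is a made-up function standing in for a real five-minute training run. It is not code from the repository.

```python
import random

# Hypothetical stand-in for train.py: a few settings the "agent" may edit.
BASELINE = {"lr": 3e-3, "depth": 8, "warmup_frac": 0.1}


def propose_change(config: dict, rng: random.Random) -> dict:
    """Hypothetical agent step: perturb one setting at random.

    The real agent is an LLM editing train.py; random perturbation is
    only a placeholder for that behavior.
    """
    new = dict(config)
    key = rng.choice(sorted(new))
    if key == "depth":
        new[key] = max(1, new[key] + rng.choice([-1, 1]))
    else:
        new[key] = new[key] * rng.choice([0.5, 2.0])
    return new


def train_and_evaluate(config: dict) -> float:
    """Hypothetical stand-in for a five-minute run that returns val_bpb.

    A made-up smooth function with a single minimum; NOT real training.
    """
    return (
        1.0
        + (config["lr"] - 1e-3) ** 2 * 1e4
        + (config["depth"] - 12) ** 2 * 0.001
        + (config["warmup_frac"] - 0.05) ** 2
    )


def research_loop(n_experiments: int, seed: int = 0):
    """Greedy keep-or-revert loop driven by val_bpb (lower is better)."""
    rng = random.Random(seed)
    best_config = dict(BASELINE)
    best_bpb = train_and_evaluate(best_config)
    history = [best_bpb]
    for _ in range(n_experiments):
        candidate = propose_change(best_config, rng)
        bpb = train_and_evaluate(candidate)
        if bpb < best_bpb:
            # Improvement: keep the edited version as the new baseline.
            best_config, best_bpb = candidate, bpb
        # Otherwise the candidate is discarded (the "revert" step).
        history.append(best_bpb)
    return best_config, best_bpb, history


best_config, best_bpb, history = research_loop(n_experiments=100)
print(f"baseline val_bpb={history[0]:.4f}, best val_bpb={best_bpb:.4f}")
```

Because a change survives only if it beats the current best, the recorded best score can never get worse over the course of a run, which is what makes an unattended overnight session safe to leave alone.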

The repository’s structure is deliberately minimal so that changes stay manageable and easy to review. This contrasts sharply with traditional research, where scientists hand-edit Python files and configurations. Instead, researchers now “program the program.md” files, in effect defining how an autonomous research organization operates. The success metric is val_bpb (validation bits per byte), where lower is better, giving a single standard for comparing the effectiveness of different architectural and hyperparameter adjustments. The README’s playful preface imagines a future in which research is entirely the domain of autonomous swarms of AI agents running across compute cluster megastructures in the skies, a tongue-in-cheek vision, but one the repository takes a small, concrete step toward.

Single-File Modification of train.py for Iteration

Artificial intelligence research increasingly features automated systems capable of independent experimentation, a departure from traditional methods that rely on humans to modify code and analyze results. Among the many approaches to AI-assisted research, the “autoresearch” repository stands out for how tightly it constrains the agent: autonomous modification is limited to a single Python file, prioritizing manageability and interpretability while still aiming to speed up discovery. Rather than letting an agent alter an entire codebase, the system restricts it to “train.py,” the file containing the core GPT model, optimizer, and training loop. According to the repository documentation, “the agent only touches train.py,” a design choice intended to keep modifications focused and easy to review. Researchers can therefore see the impact of specific changes without being overwhelmed by broader systemic alterations.
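The documentation states that the agent only touches train.py, but how that rule is enforced is not described; it may simply be an instruction in program.md. As a hypothetical illustration, an experiment harness could reject any change set that touches other files. The function name `change_is_allowed` and the allow-list below are assumptions, not part of the repository.

```python
from pathlib import PurePosixPath

# Hypothetical allow-list: the only file the agent may modify.
EDITABLE_FILES = frozenset({"train.py"})


def change_is_allowed(changed_paths, editable=EDITABLE_FILES) -> bool:
    """Return True only if there is at least one change and every changed
    path is an editable file.

    PurePosixPath normalizes spellings such as "./train.py" to "train.py".
    """
    normalized = {str(PurePosixPath(p)) for p in changed_paths}
    return bool(normalized) and normalized <= set(editable)


print(change_is_allowed(["train.py"]))                # True
print(change_is_allowed(["train.py", "prepare.py"]))  # False
```

In a git-managed workspace, the list of changed paths could come from `git diff --name-only`; an empty change set is treated as "nothing to evaluate" rather than as a valid experiment.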

Each training run gets a fixed five-minute budget, and performance is judged on a single metric, validation bits per byte, so experiments can be compared directly regardless of architectural changes. According to the documentation, “training always runs for exactly 5 minutes, regardless of your specific platform,” which keeps evaluation conditions consistent from one experiment to the next. The system is self-contained, relying only on PyTorch and a few small packages and avoiding distributed training and elaborate configuration. At five minutes per run, the project’s creator expects roughly 12 experiments per hour, or around 100 overnight, enabling rapid iteration.
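A fixed wall-clock budget can be implemented by checking elapsed time instead of counting steps, so a faster GPU (or a cheaper model variant) simply completes more optimizer steps in the same five minutes. The sketch below assumes a generic `step_fn` callback, which is hypothetical, and times only the loop itself; whether autoresearch counts startup, compilation, or evaluation time against its budget is not stated here.

```python
import time

TIME_BUDGET_SECONDS = 5 * 60  # the stated budget: 5 minutes per training run


def train_for_fixed_budget(step_fn, budget_seconds: float = TIME_BUDGET_SECONDS) -> int:
    """Call step_fn repeatedly until the wall-clock budget is used up.

    Returns the number of steps completed. The budget is checked before
    each step, so the final step may run slightly past the deadline.
    """
    start = time.monotonic()  # monotonic clock: unaffected by system clock changes
    steps = 0
    while time.monotonic() - start < budget_seconds:
        step_fn()
        steps += 1
    return steps


# Toy usage: a "step" that takes about 10 ms, with a 0.1-second budget.
print(train_for_fixed_budget(lambda: time.sleep(0.01), budget_seconds=0.1))
```

Tying the budget to time rather than steps is what makes every experiment cost the same, whatever the agent changes.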

One day, frontier AI research used to be done by meat computers in between eating, sleeping, having other fun, and synchronizing once in a while using sound wave interconnect in the ritual of “group meeting”. That era is long gone.

Five-Minute Training Runs

Andrej Karpathy’s “autoresearch” repository challenges conventional approaches to artificial intelligence research by automating the experimental process. Instead of researchers manually adjusting code and monitoring training runs, an agent independently modifies a language model’s training code and evaluates its performance. A deliberate design choice makes this work: every training session is limited to a fixed five-minute window, regardless of the underlying hardware. According to the repository documentation, the goal is to minimize val_bpb (“lower is better”). Because bits per byte normalizes the model’s loss by the number of bytes of text rather than the number of tokens, it does not depend on vocabulary size, so changes to the tokenizer or architecture can still be compared fairly. The system’s architecture is intentionally minimal, consisting of only three core files: a fixed file of constants and utilities (“prepare.py”), the editable training code in “train.py,” and “program.md,” a Markdown document that serves as the agent’s instruction set.
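Bits per byte converts the model’s summed cross-entropy loss from nats to bits and divides by the number of bytes of validation text. The sketch below uses this standard definition with made-up numbers; it is not necessarily autoresearch’s exact implementation. It compares two hypothetical tokenizers on the same 400-byte text: their per-token losses differ, but their bits per byte agree.

```python
import math


def bits_per_byte(total_loss_nats: float, total_bytes: int) -> float:
    """Convert summed token-level cross-entropy (natural log) to bits per byte."""
    return total_loss_nats / (math.log(2) * total_bytes)


# Made-up example: the same 400-byte validation text under two tokenizers.
text_bytes = 400
# Hypothetical tokenizer A (small vocabulary): 100 tokens, mean loss 2.0 nats.
loss_a = 100 * 2.0
# Hypothetical tokenizer B (large vocabulary): 50 tokens, mean loss 4.0 nats.
loss_b = 50 * 4.0

print(f"per-token loss: A={loss_a / 100:.2f}, B={loss_b / 50:.2f} nats")
print(f"val_bpb:        A={bits_per_byte(loss_a, text_bytes):.4f}, "
      f"B={bits_per_byte(loss_b, text_bytes):.4f}")
```

A larger vocabulary produces fewer, harder-to-predict tokens, so per-token loss rises even when the model is no better at predicting the text. Dividing by bytes removes that artifact.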

Karpathy emphasizes the simplicity of this structure, stating, “The repo is deliberately kept small and only really has three files that matter.” The streamlined layout makes changes easy to review and lets the agent iterate rapidly through potential improvements. At approximately twelve experiments per hour, or around one hundred overnight, the system can run a high volume of automated tests. Karpathy acknowledges that the fixed time budget limits comparability across platforms, but it keeps results consistent within a given computational environment, allowing autoresearch to “find the most optimal model for your platform in that time budget.”
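The throughput figures follow from simple arithmetic on the five-minute budget. The sketch below assumes an 8-hour “overnight” window and an optional per-experiment overhead (for example, startup, evaluation, and the agent’s own editing time). Both parameters are illustrative assumptions, not values from the repository.

```python
def experiments_in(window_hours: float, run_minutes: float = 5.0,
                   overhead_minutes: float = 0.0) -> int:
    """Number of complete experiments that fit into a time window."""
    minutes_per_experiment = run_minutes + overhead_minutes
    return int(window_hours * 60 // minutes_per_experiment)


print(experiments_in(1))                        # 12 per hour with no overhead
print(experiments_in(8))                        # 96, i.e. "around 100" overnight
print(experiments_in(8, overhead_minutes=1.0))  # 80 if each run costs an extra minute
```

The quoted figures are therefore upper bounds: any time spent outside the training window itself reduces the overnight count.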

You’re not touching any of the Python files like you normally would as a researcher. Instead, you are programming the program.md Markdown files that provide context to the AI agents and set up your autonomous research org.

autoresearch Repository Structure & Design Choices

Autonomous research systems are beginning to reshape how artificial intelligence models are developed, and the structure of the “autoresearch” repository reflects a design built for streamlined experimentation. Beyond automating individual tasks, the system, created by Andrej Karpathy, hands the iterative work of model refinement to an AI agent, changing the role of human researchers. The repository is remarkably concise, with just three core files, a choice meant to keep it manageable and make the agent’s modifications easy to analyze. Central to this approach is a separation between fixed components and those open to autonomous alteration. “prepare.py” remains constant, handling initial data preparation and runtime utilities, while “train.py” is the only file the agent experiments on, covering the model architecture, hyperparameters, and training loop. Crucially, every training run operates within a fixed five-minute time budget, irrespective of the underlying hardware.
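One hypothetical way to enforce the split between fixed and editable files is to fingerprint the fixed file before the agent starts and confirm after every experiment that it has not changed. The helper names and the `MAX_SEQ_LEN` constant below are assumptions for illustration; the repository is not described as doing this.

```python
import hashlib
import tempfile
from pathlib import Path


def fingerprint(path) -> str:
    """SHA-256 hex digest of a file's contents."""
    return hashlib.sha256(Path(path).read_bytes()).hexdigest()


def fixed_files_unchanged(baseline: dict) -> bool:
    """Check each fixed file against the digest recorded before the run."""
    return all(fingerprint(path) == digest for path, digest in baseline.items())


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        prepare = Path(tmp) / "prepare.py"
        prepare.write_text("MAX_SEQ_LEN = 1024\n")  # hypothetical constant
        baseline = {prepare: fingerprint(prepare)}
        print(fixed_files_unchanged(baseline))  # True
        prepare.write_text("MAX_SEQ_LEN = 2048\n")  # simulate a forbidden edit
        print(fixed_files_unchanged(baseline))  # False
```

Hashing file contents rather than checking modification times catches any edit to the fixed file, even one that restores the original timestamp.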

This constraint, according to Karpathy, ensures “experiments [are] directly comparable regardless of what the agent changes.” The third file, “program.md,” acts as the primary interface for human input, providing initial instructions and context for the agent. This Markdown document allows researchers to guide the AI’s exploration without directly manipulating the code. The design intentionally avoids complex configurations or distributed training, opting for a self-contained, single-GPU implementation to simplify the process and focus on core algorithmic improvements. Karpathy notes the system is “just a demonstration and I don’t know how much I’ll support it going forward,” but anticipates community contributions and forks to expand platform support.
