Researchers have made significant progress with Qwen2.5-Turbo, a language model that can process ultra-long contexts of up to 1 million tokens. The gains show up both in long-context benchmark results and in faster inference.

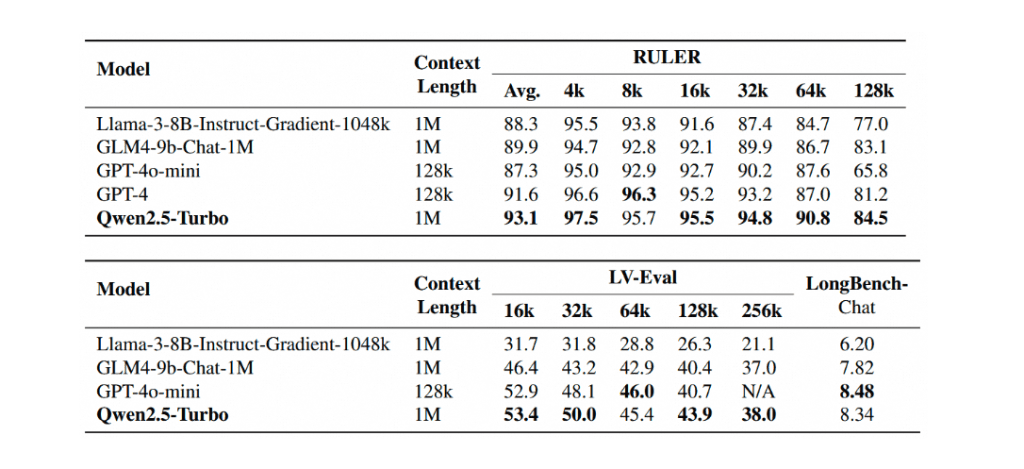

The model demonstrated its ability to capture detailed information in long contexts. In certain tasks, it surpassed other models like GPT-4o-mini and even GPT-4. Qwen2.5-Turbo also showed advantages in short text tasks, significantly surpassing earlier open-source models while supporting 8 times the context length. The model’s inference speed was improved through sparse attention, achieving a speedup of 3.2 to 4.3 times under different hardware configurations.

The model is offered through Alibaba Cloud’s Model Studio, a platform for building and deploying AI models. While there are still challenges to overcome, the researchers are committed to further improving the model’s performance and exploring new applications in long sequence tasks.

Language models have now reached the realm of 1M tokens, a context length that until recently seemed out of reach. The Qwen2.5-Turbo model, offered through Alibaba Cloud’s Model Studio, has demonstrated strong performance in handling long-context tasks, surpassing even GPT-4 on some of them.

As I delve into the details of this breakthrough, I am reminded of the intricate relationships between civilizations and the universe they inhabit. The ability to process vast amounts of information, much like the cosmos itself, holds the key to unlocking new secrets and understanding the mysteries that lie beyond our planet.

The Passkey Retrieval task, a benchmark in which a model must recover a short key hidden somewhere in a long stretch of unrelated text, has been handled by Qwen2.5-Turbo with ease. The model’s ability to capture detailed information in ultra-long contexts is a testament to its prowess, much like how astronomers can pinpoint celestial bodies amidst the vast expanse of space.
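To make the task concrete, here is a minimal sketch of how a passkey retrieval prompt can be put together. The filler sentences, the wording of the hidden sentence, and the function names `build_passkey_prompt` and `is_correct` are all assumptions for illustration; this is not the exact setup used to evaluate Qwen2.5-Turbo.

```python
import random
from typing import Tuple

# Hypothetical filler text; real benchmarks use their own distractor sentences.
FILLER = "The grass is green. The sky is blue. The sun is yellow. "
QUESTION = "What is the pass key?"


def build_passkey_prompt(n_filler: int, seed: int = 0) -> Tuple[str, str]:
    """Return (prompt, passkey): filler text with one passkey sentence hidden inside."""
    rng = random.Random(seed)
    passkey = str(rng.randint(10000, 99999))
    needle = f"The pass key is {passkey}. Remember it. "
    # Number of filler repetitions placed before the hidden sentence.
    position = rng.randint(0, n_filler)
    haystack = FILLER * position + needle + FILLER * (n_filler - position)
    return haystack + QUESTION, passkey


def is_correct(model_answer: str, passkey: str) -> bool:
    """Score a reply as correct if it contains the hidden passkey."""
    return passkey in model_answer
```

Raising `n_filler` lengthens the context, and changing `seed` moves the hidden sentence, so a model can be probed at many lengths and depths.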

On the RULER, LV-Eval, and LongBench-Chat benchmarks, designed to test the mettle of AI models on long inputs, Qwen2.5-Turbo posts scores that surpass GPT-4o-mini and, in certain tasks, even GPT-4. This is akin to how humanity has pushed the boundaries of space exploration, venturing further into the unknown with each new discovery.

But what’s truly remarkable is that the model handles short text tasks with equal aplomb, a strength that was previously thought to be at odds with long-context processing. This is reminiscent of how our understanding of the universe is not limited to just one scale, but rather encompasses the intricate dance between the cosmos and the subatomic.

Inference in Qwen2.5-Turbo, accelerated by sparse attention, runs 3.2 to 4.3 times faster under different hardware configurations, much like how humanity’s pursuit of knowledge has been accelerated by breakthroughs in technology.
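Why does sparse attention help so much at this scale? The article does not say which sparsity pattern Qwen2.5-Turbo uses, so the sketch below assumes a simple causal sliding window purely for illustration. It counts how many query-key pairs get scored with and without the window; `causal_pairs` and `windowed_pairs` are hypothetical helper names.

```python
def causal_pairs(n: int) -> int:
    """Query-key pairs scored by full causal attention over n tokens."""
    return n * (n + 1) // 2


def windowed_pairs(n: int, window: int) -> int:
    """Pairs scored when each token sees only itself and the previous window - 1 tokens.

    Assumes a plain sliding window, which is an illustrative choice and not
    necessarily the pattern used by the model.
    """
    if window >= n:
        return causal_pairs(n)
    # The first `window` tokens see all their predecessors; the rest see exactly `window` keys.
    return causal_pairs(window) + (n - window) * window


if __name__ == "__main__":
    n, w = 1_000_000, 4096  # assumed sizes, for illustration only
    print(causal_pairs(n) / windowed_pairs(n, w))
```

Full attention grows quadratically with context length while the windowed count grows linearly, which is the basic reason sparsity pays off for very long inputs. The ratio of scored pairs is not the same thing as the measured end-to-end speedup, which also depends on hardware and on every other stage of inference.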

Reflecting on these advancements, I am reminded that the universe is full of mysteries waiting to be unraveled. Developing long-context models like Qwen2.5-Turbo brings us one step closer to understanding the intricate relationships between civilizations and the cosmos. The future holds much promise, and I eagerly await the next breakthrough in this fascinating journey of discovery.
