Researchers are increasingly concerned that the growing capabilities of low-compute artificial intelligence models present significant, and often overlooked, safety challenges. Prateek Puri from the Department of Engineering and Applied Sciences at RAND, alongside his colleagues, demonstrates a concerning trend: the model size required to achieve competitive performance on key language benchmarks is shrinking. Their work, profiling over 5,000 large language models hosted on HuggingFace, reveals a more than tenfold decrease in the computational resources needed to reach comparable levels of performance in just the past year. This research is significant because it shows that malicious actors can now launch sophisticated digital harm campaigns, including disinformation, fraud and extortion, using readily available, consumer-grade hardware, highlighting a critical gap in current AI governance strategies focused primarily on high-compute systems.

This research profiles the rapid diffusion of advanced functionalities from large AI systems into low-resource models deployable on consumer devices, raising significant security concerns. This miniaturisation, driven by techniques like parameter quantization and agentic workflows, means that sophisticated AI is no longer limited to those with access to vast computational resources. The study unveils a critical vulnerability in current AI governance frameworks, which largely focus on regulating high-compute models.
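To illustrate why parameter quantization matters for deployment on consumer devices, the following minimal sketch estimates the memory needed to hold a model's weights at different precisions. It is not taken from the paper: the function name `weight_memory_gb` is hypothetical, the 7-billion-parameter size is used only as an example, and the estimate covers weights alone (no activations, caches, or runtime overhead).

```python
def weight_memory_gb(num_params: float, bits_per_param: int) -> float:
    """Approximate memory for model weights only, in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bits_per_param / 8 / 1e9


# Assumption: an illustrative 7-billion-parameter model.
params = 7e9
for bits in (16, 8, 4):
    print(f"{bits}-bit weights: {weight_memory_gb(params, bits):.1f} GB")
```

Under these assumptions, moving from 16-bit to 4-bit weights cuts the weight footprint by a factor of four, which is the kind of reduction that brings a model within reach of laptop-class memory.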

Their findings indicate that nearly all studied campaigns can be easily executed on standard consumer-grade hardware, highlighting the potential for widespread malicious use. This work establishes that existing protection measures, designed for large-scale AI, leave substantial security gaps when it comes to their smaller counterparts. This position paper argues that the swift compression of AI capabilities into smaller, accessible models poses a significant and growing threat. The research quantitatively profiles the rate at which open-source LLMs have become both more performant and more compute-efficient over time, demonstrating a clear trend towards increasingly powerful AI on increasingly accessible hardware.
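As a rough way to read the reported trend, the sketch below converts a fold decrease over a period into an equivalent halving time. It assumes the decrease is smoothly exponential, which the article does not state, and the function name `halving_time_months` is hypothetical.

```python
import math


def halving_time_months(fold_decrease: float, period_months: float = 12.0) -> float:
    """Months for the required compute to halve, assuming a steady exponential decline."""
    return period_months * math.log(2) / math.log(fold_decrease)


# A tenfold decrease over twelve months, the lower bound reported in the article.
print(round(halving_time_months(10.0), 1))  # prints 3.6
```

Because the reported decrease is more than tenfold in a year, the implied halving time under this assumption would be about 3.6 months or shorter.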

By outlining how this shift impacts the resources needed for societal harm campaigns, the study complicates existing AI risk mitigation strategies centred around high-compute models. Experiments show that the amount of compute required for both legitimate AI use cases and malicious campaigns often overlaps significantly, further blurring the lines for effective regulation. The team profiled the computational workloads of several academic and commercial AI applications, revealing a high degree of commonality. This overlap, combined with the relatively low computational cost of launching the simulated attacks, underscores the urgency of developing new governance strategies tailored specifically to low-compute AI threats. The work opens avenues for researchers and policymakers to focus on innovative solutions to address this emerging class of risks, as these threats are becoming more severe, frequent, and difficult to detect.

LLM performance tracking and harm simulation are crucial

The performance data formed the foundation for simulating realistic digital harm campaigns. The study employed consumer-grade hardware configurations to assess the feasibility of executing these campaigns with limited resources. Experiments demonstrated that nearly all simulated campaigns could be executed on widely available hardware, highlighting a critical vulnerability. The team quantified the resources required, noting the number of synthetic images, LLM-generated tokens, and words of voice-cloned audio an actor could generate with the compute available in a typical academic experiment. To further refine their analysis, the work considered the challenges of robust capability evaluations, acknowledging the technical expertise required and the rapid advancement of the field.

The study pioneered a methodology for assessing AI risk beyond simple compute metrics, recognising that bad-faith developers may circumvent regulatory benchmarks while maintaining harmful capabilities. This approach enables a more nuanced understanding of AI threats, complementing existing compute-based regulations. The research also explored defensive AI strategies, such as voice clone detection and cybersecurity agents, but cautioned that these may not be universally effective across all threat classes. Scientists also investigated inference-time filtering and watermarking techniques as potential protective measures, acknowledging their limitations in distinguishing between benign and harmful content.

The team demonstrated that even models with moderation safeguards can be manipulated to produce harmful outputs through strategic prompting. Finally, the study advocated for developing social AI resiliency through enhanced media literacy, AI incident reporting, and increased AI education, alongside safeguarding access to critical datasets and materials needed for AI attacks. This holistic approach aims to mitigate the impact of AI-powered attacks and foster a more resilient society.

Rapidly shrinking models approach peak LLM performance, challenging

Their work reveals that models requiring significantly less computational power are rapidly approaching the performance levels of their larger counterparts, raising substantial security concerns. This miniaturisation is driven by techniques like agentic workflows and parameter quantization, allowing powerful AI to run on consumer devices. Results demonstrate that nearly all studied campaigns can be executed on consumer-grade hardware, specifically NVIDIA data-center GPUs and consumer MacBook GPUs. The team extracted performance metrics from official Apple and NVIDIA product pages, confirming the accessibility of sufficient processing power for malicious activities.

Linear fits to the evolution of processing power in both NVIDIA and MacBook chips over time visually demonstrate the rapid increase in capability. Data shows a dramatic rise in AI-related security incidents. The FBI reported $2.9 billion lost to business-email-compromise scams in 2023, citing generative AI as a key driver. SlashNext recorded a 1,265% increase in phishing incidents between October 2022 and September 2023, coinciding with the proliferation of generative AI technologies. Furthermore, the FBI declared a “global sextortion crisis” fuelled by generative models, while McAfee reported that 25% of surveyed U.S. adults have experienced or known someone affected by an AI voice scam. The original DeepNude app, a lightweight pix2pix model, continues to contribute to this crisis.

Researchers tested the believability of outputs from compressed models, finding that open-source LLMs as small as 7 billion parameters were as politically persuasive as many larger LLMs and more persuasive than a human control group. In separate studies, human participants identified deepfakes generated by a StyleGAN2 model (less than 1 billion parameters) with only approximately 60% accuracy, barely above random chance. Similarly, synthetic audio samples from lightweight open-source models were detected in only around 60% of instances.

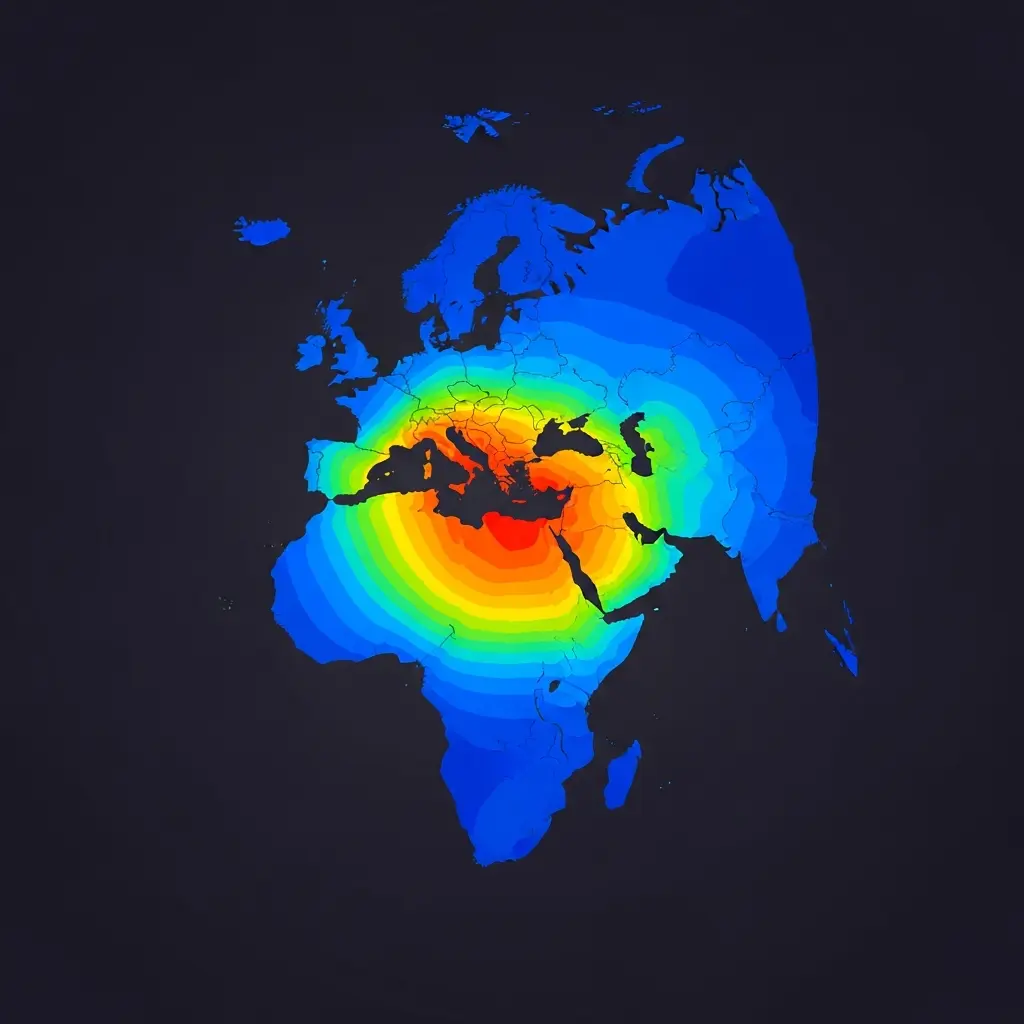

These findings demonstrate the dangerous capabilities present even in low-compute AI systems. Simulations, guided by the 2023 Executive Order on AI, assessed the compute required for disinformation, cybersecurity, voice cloning, and deepfake attacks. By decomposing historical case studies into constituent tasks, scientists estimated the computational demands for replicating these attacks. Analysis of the Brexit disinformation campaign and business email compromise scams revealed that even large-scale campaigns are achievable with readily available hardware, highlighting the urgent need to address the security risks posed by low-compute AI.
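The task-decomposition approach described above can be sketched as simple bookkeeping: split a workload into generation tasks, divide each task's output volume by an assumed device throughput, and sum the time. The sketch below is illustrative only and is not the authors' code. The `Task` class, the `device_hours` function, and every number in the example are hypothetical placeholders, not figures from the paper.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass
class Task:
    name: str
    units_needed: float      # e.g. tokens, images, or words of audio
    units_per_second: float  # assumed throughput on a single device


def device_hours(tasks: Iterable[Task]) -> float:
    """Total single-device runtime in hours, assuming tasks run one after another."""
    total_seconds = sum(t.units_needed / t.units_per_second for t in tasks)
    return total_seconds / 3600


# Hypothetical workload with made-up volumes and throughputs.
workload = [
    Task("text generation (tokens)", 1_000_000, 20.0),
    Task("image generation (images)", 1_000, 0.1),
]
print(f"{device_hours(workload):.1f} device-hours")
```

Comparing the resulting device-hours against the runtime of ordinary academic or commercial workloads is one way to see the overlap between legitimate and malicious compute budgets that the article describes.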

Smaller models, greater harm potential revealed as accessibility

This miniaturisation of models, coupled with techniques like parameter quantization and agentic workflows, means powerful capabilities are no longer limited to large-scale systems. Results indicate that nearly all simulated attacks could be executed using consumer-grade hardware and increasingly capable low-compute models. This highlights a critical gap in current AI governance, which largely focuses on regulating high-compute systems. The authors acknowledge that addressing these challenges requires collaborative efforts between technical experts, policymakers, and industry. The findings underscore the urgency of moving beyond reliance on compute thresholds as the sole proxy for AI risk.

Policymakers must develop more nuanced frameworks that consider model capabilities, potential intent, and the possibility of harm, alongside computational requirements. The rapid pace of AI development necessitates a transition from discussion to concrete adaptations in order to address the evolving technological landscape. Future research should focus on developing comprehensive protection frameworks that account for a broader range of risks, rather than solely concentrating on high-compute threats.

👉 More information

🗞 Small models, big threats: Characterizing safety challenges from low-compute AI models

🧠 ArXiv: https://arxiv.org/abs/2601.21365