Scientists are tackling the challenge of efficient, high-fidelity image generation with representation encoders, but standard diffusion transformers often struggle to learn in these representation spaces. Amandeep Kumar and Vishal M. Patel, both from Johns Hopkins University, together with colleagues, have identified a fundamental geometric issue behind this difficulty. Their research shows that standard Euclidean flow matching sends probability paths through inefficient regions of the feature space, a phenomenon they term Geometric Interference. To address it, they propose Riemannian Flow Matching with Jacobi Regularization (RJF), which constrains generation to the natural geometry of the representation manifold and corrects for its curvature. RJF allows a standard DiT-B architecture to converge without computationally expensive scaling, reaching an FID of 3.37 in a setting where previous methods failed. This is a substantial advance in generative modelling.

This breakthrough addresses a fundamental limitation in applying these models to high-fidelity synthesis, moving beyond the need for computationally expensive architectural modifications like width scaling.

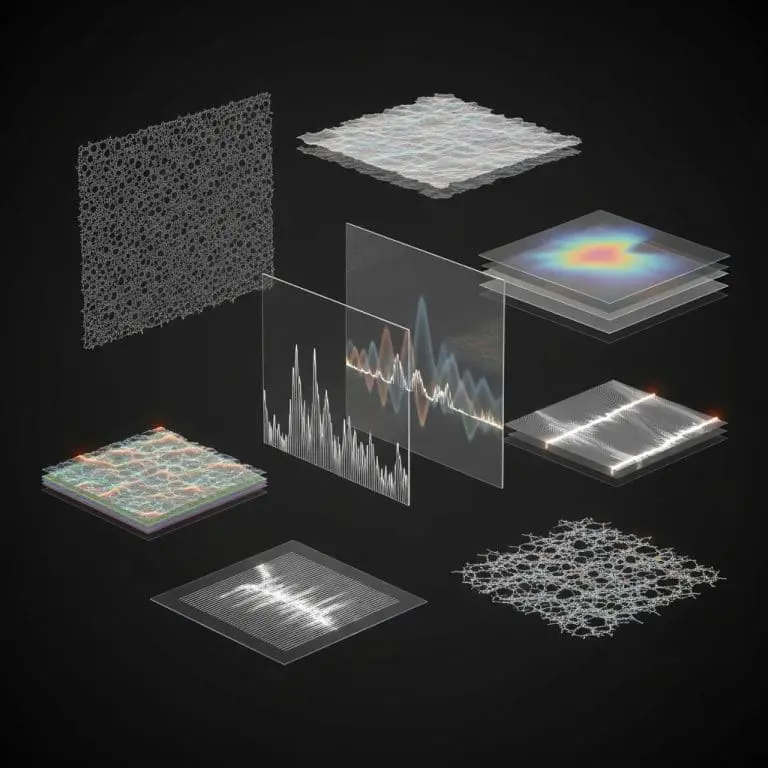

The research identifies ‘Geometric Interference’ as the core issue, revealing that standard Euclidean flow matching forces probability paths through low-density areas of the hyperspherical feature space inherent in representation encoders, rather than along the natural manifold. This work demonstrates that the failure of diffusion transformers isn’t due to insufficient model capacity, but rather a structural mismatch between the assumed Euclidean paths and the hyperspherical geometry of the latent space.

Detailed analysis shows that representation encoders such as DINOv2 confine their features to a hypersphere: information is encoded in the direction of each feature vector rather than spread throughout Euclidean space. Standard flow matching builds linear paths, essentially chords through this hypersphere, and these paths traverse interior regions where no valid representations exist.
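A few lines of NumPy make the chord argument concrete. The sketch below assumes unit-normalized 768-dimensional features (roughly the width of a DINOv2 ViT-B embedding) and uses random unit vectors as stand-ins for real encoder outputs. It measures how far the straight path between two points on the sphere dips below the surface.

```python
import numpy as np

def chord_norms(x0, x1, ts):
    """Norm of each point (1 - t) * x0 + t * x1 on the straight line between x0 and x1."""
    return np.array([np.linalg.norm((1 - t) * x0 + t * x1) for t in ts])

rng = np.random.default_rng(0)
d = 768  # assumed feature width (roughly a DINOv2 ViT-B embedding)

# Random unit vectors stand in for normalized encoder features.
x0 = rng.standard_normal(d)
x0 /= np.linalg.norm(x0)
x1 = rng.standard_normal(d)
x1 /= np.linalg.norm(x1)

ts = np.linspace(0.0, 1.0, 11)
norms = chord_norms(x0, x1, ts)
theta = np.arccos(np.clip(x0 @ x1, -1.0, 1.0))  # angle between the endpoints

print(f"angle between endpoints: {np.degrees(theta):.1f} degrees")
print(f"smallest norm on the chord: {norms.min():.3f} (cos(theta / 2) = {np.cos(theta / 2):.3f})")
```

For two unit vectors separated by an angle θ, the chord's midpoint has norm cos(θ/2). Random high-dimensional features are nearly orthogonal, so the midpoint of the linear path sits at a norm of about 0.71, well inside the sphere.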


RJF overcomes this by employing Spherical Linear Interpolation (SLERP), which keeps the generative process strictly on the manifold surface. A Jacobi Regularization term additionally accounts for curvature-induced distortion by reweighting the loss function. The work began with a standard Diffusion Transformer in the DiT-B configuration (131 million parameters), which initially failed to converge when applied directly to these high-dimensional latent spaces. Experiments with the LightningDiT-B architecture demonstrate the efficacy of RJF: it achieves an FID of 4.95 without guidance, a significant improvement over the 15.83 achieved by the base VAE-based LightningDiT-B model.
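For readers who want to see what SLERP does, here is a minimal NumPy sketch, assuming unit-norm endpoints that are not antipodal (for example, a noise sample projected onto the sphere and a normalized encoder feature). The function names `slerp` and `slerp_velocity` are illustrative, not taken from the authors' code. The velocity is the time derivative of the path, the kind of target a flow matching model regresses.

```python
import numpy as np

def slerp(x0, x1, t):
    """Spherical linear interpolation between unit vectors x0 and x1 at time t in [0, 1].
    Assumes x0 and x1 are not antipodal (sin(theta) would vanish)."""
    theta = np.arccos(np.clip(np.dot(x0, x1), -1.0, 1.0))
    if theta < 1e-6:  # nearly identical endpoints: the chord is a fine approximation
        return (1 - t) * x0 + t * x1
    return (np.sin((1 - t) * theta) * x0 + np.sin(t * theta) * x1) / np.sin(theta)

def slerp_velocity(x0, x1, t):
    """Time derivative d/dt slerp(x0, x1, t): a tangent vector to the sphere at x_t."""
    theta = np.arccos(np.clip(np.dot(x0, x1), -1.0, 1.0))
    if theta < 1e-6:
        return x1 - x0
    return theta * (-np.cos((1 - t) * theta) * x0 + np.cos(t * theta) * x1) / np.sin(theta)

rng = np.random.default_rng(0)
x0 = rng.standard_normal(768)
x0 /= np.linalg.norm(x0)  # e.g. Gaussian noise projected onto the unit sphere
x1 = rng.standard_normal(768)
x1 /= np.linalg.norm(x1)  # stand-in for a normalized encoder feature
theta = np.arccos(np.clip(x0 @ x1, -1.0, 1.0))

for t in np.linspace(0.0, 1.0, 5):
    xt, vt = slerp(x0, x1, t), slerp_velocity(x0, x1, t)
    # |x_t| stays at 1, x_t . v_t stays at 0, and the speed |v_t| equals theta throughout.
    print(f"t={t:.2f}  |x_t|={np.linalg.norm(xt):.4f}  x_t.v_t={xt @ vt:+.1e}  |v_t|={np.linalg.norm(vt):.4f}")
```

Along this path the target velocity is always tangent to the sphere and has constant magnitude θ, so the regression target never asks the network to move off the manifold.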

Researchers identified Geometric Interference as the primary cause, noting that Euclidean flow matching forces probability paths through the low-density interior of the hyperspherical feature space, rather than along the manifold surface. To counteract this, RJF constrains the generative process to follow geodesics on the manifold, effectively learning paths along the surface of the hypersphere.
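A flow matching objective along spherical geodesics could look like the following. This is a sketch under stated assumptions, not the paper's training code: noise is a normalized Gaussian, `model` is a placeholder for a DiT velocity network, and the prediction is projected onto the tangent space before the squared error is taken. The Jacobi reweighting is deliberately left out.

```python
import numpy as np

def slerp_and_velocity(x0, x1, t):
    """Batched slerp path x_t and its time derivative u_t for unit-norm rows of x0 and x1.
    Assumes paired rows are neither nearly parallel nor antipodal; the floor on sin(theta)
    only prevents a division by zero."""
    theta = np.arccos(np.clip(np.sum(x0 * x1, axis=-1, keepdims=True), -1.0, 1.0))
    sin_theta = np.maximum(np.sin(theta), 1e-8)
    t = t[:, None]  # broadcast one time value per row
    xt = (np.sin((1 - t) * theta) * x0 + np.sin(t * theta) * x1) / sin_theta
    ut = theta * (-np.cos((1 - t) * theta) * x0 + np.cos(t * theta) * x1) / sin_theta
    return xt, ut

def project_to_tangent(x, v):
    """Remove the radial component of v so it lies in the tangent space of the sphere at x."""
    return v - np.sum(v * x, axis=-1, keepdims=True) * x

def rfm_loss(model, x1, rng):
    """Flow matching loss along spherical geodesics (illustrative sketch only)."""
    x0 = rng.standard_normal(x1.shape)
    x0 /= np.linalg.norm(x0, axis=-1, keepdims=True)  # noise projected onto the unit sphere
    t = rng.uniform(0.0, 1.0, size=x1.shape[0])
    xt, ut = slerp_and_velocity(x0, x1, t)
    vt = project_to_tangent(xt, model(xt, t))
    return np.mean(np.sum((vt - ut) ** 2, axis=-1))

rng = np.random.default_rng(0)
x1 = rng.standard_normal((16, 768))
x1 /= np.linalg.norm(x1, axis=-1, keepdims=True)  # stand-in for normalized encoder features
zero_model = lambda x, t: np.zeros_like(x)  # placeholder for a DiT velocity network
loss = rfm_loss(zero_model, x1, rng)
print(f"loss with a zero predictor: {loss:.3f}")
```

With a zero predictor the loss is simply the mean squared geodesic speed θ², close to (π/2)² for nearly orthogonal high-dimensional vectors; a trained network would drive it toward zero.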

Jacobi Regularization complements this by correcting for curvature-induced error propagation during training. The study also shows that DINOv2 representations are strictly confined to a hypersphere, with information carried by the direction of each feature vector, so standard linear paths cut through the low-density interior of this hypersphere.
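The article does not spell out the Jacobi Regularization formula, so the following only illustrates the geometry behind the name. On a unit sphere, a Jacobi field measures how two geodesics leaving the same point in slightly different directions drift apart: their separation grows like sin(s) after arc length s, rather than s as in flat space. The sketch checks this numerically with the sphere's exponential map and then defines `hypothetical_jacobi_weight`, an invented curvature-aware weight that is not the authors' regularizer.

```python
import numpy as np

def exp_map(x, v):
    """Exponential map on the unit sphere: follow the geodesic from x with initial velocity v."""
    speed = np.linalg.norm(v)
    if speed < 1e-12:
        return x
    return np.cos(speed) * x + np.sin(speed) * v / speed

def jacobi_magnitude_sphere(s):
    """|J(s)| on the unit sphere for J(0) = 0, |J'(0)| = 1, J orthogonal to the geodesic."""
    return np.sin(s)

def hypothetical_jacobi_weight(theta, t, eps=1e-6):
    """Illustrative weight (NOT the paper's formula): squared ratio of curved to flat-space
    spread of a direction error over the remaining arc (1 - t) * theta."""
    s = np.maximum((1.0 - t) * theta, eps)
    return (np.sin(s) / s) ** 2

x = np.array([1.0, 0.0, 0.0])  # base point on the sphere
u = np.array([0.0, 1.0, 0.0])  # unit initial velocity of the base geodesic
w = np.array([0.0, 0.0, 1.0])  # small perturbation direction, orthogonal to x and u
eps = 1e-6

for s in [0.5, 1.0, np.pi / 2, 2.5]:
    # Separation of the perturbed and unperturbed geodesics, per unit of perturbation.
    spread = np.linalg.norm(exp_map(x, s * (u + eps * w)) - exp_map(x, s * u)) / eps
    print(f"s={s:.2f}  measured={spread:.6f}  sin(s)={jacobi_magnitude_sphere(s):.6f}  flat={s:.6f}")
```

The measured spread tracks sin(s), not s: on positively curved spaces, errors in direction do not grow linearly with distance, which is the kind of distortion a Euclidean loss ignores.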

Training the DiT-B architecture with RJF achieved a Fréchet Inception Distance (FID) of 3.37, in a setting where prior methods failed to converge. Further analysis with the LightningDiT-B architecture yielded an FID of 4.95 without guidance, outperforming the base VAE-based LightningDiT-B, which achieved an FID of 15.83. Together these results mark a significant advance in generative modelling.
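FID fits a Gaussian to each set of Inception features and computes FID = |μ_a - μ_b|² + Tr(Σ_a + Σ_b - 2(Σ_a Σ_b)^(1/2)); lower is better. A compact NumPy version of that Fréchet distance is sketched below. It assumes features have already been extracted (standard FID uses 2048-dimensional Inception-v3 pool features and tens of thousands of samples; the toy data here is random), and it evaluates the trace term as Tr((Σ_a^(1/2) Σ_b Σ_a^(1/2))^(1/2)), which is equal because the two matrices are similar.

```python
import numpy as np

def _sqrtm_psd(a):
    """Symmetric square root of a positive semi-definite matrix via eigendecomposition."""
    vals, vecs = np.linalg.eigh(a)
    return (vecs * np.sqrt(np.clip(vals, 0.0, None))) @ vecs.T

def frechet_distance(feats_a, feats_b):
    """Fréchet distance between Gaussian fits to two feature sets (rows are samples)."""
    mu_a, mu_b = feats_a.mean(axis=0), feats_b.mean(axis=0)
    cov_a = np.cov(feats_a, rowvar=False)
    cov_b = np.cov(feats_b, rowvar=False)
    sqrt_a = _sqrtm_psd(cov_a)
    # Tr((cov_a cov_b)^(1/2)) = sum of sqrt(eigenvalues) of the symmetric sqrt_a @ cov_b @ sqrt_a.
    eigs = np.linalg.eigvalsh(sqrt_a @ cov_b @ sqrt_a)
    trace_sqrt = np.sum(np.sqrt(np.clip(eigs, 0.0, None)))
    diff = mu_a - mu_b
    return float(diff @ diff + np.trace(cov_a) + np.trace(cov_b) - 2.0 * trace_sqrt)

rng = np.random.default_rng(0)
feats = rng.standard_normal((2000, 16))          # toy stand-in for Inception features
other = 1.5 * rng.standard_normal((2000, 16))    # a distribution with a wider spread
print(f"same set: {frechet_distance(feats, feats):.2e}")
print(f"mean shifted by 3: {frechet_distance(feats, feats + 3.0):.3f}")
print(f"rescaled spread: {frechet_distance(feats, other):.3f}")
```

A pure mean shift of 3 in each of 16 dimensions contributes exactly 16 × 9 = 144, since the covariances are unchanged; differences in spread show up only through the trace term.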


The DiT-B architecture, comprising 131 million parameters, converged effectively with RJF, establishing a baseline for further improvements. Semantic fidelity also improved, with the model achieving an Inception Score of 186.2 and a Precision of 0.82. Standard Euclidean flow matching, by contrast, inadvertently guides probability paths through low-density regions of the hyperspherical feature space inherent in representation encoders instead of following the underlying manifold.

This phenomenon, termed Geometric Interference, leads to wasted computation and inefficient learning. Further scaling to the DiT-XL architecture yielded an FID of 3.62 in just 80 epochs, indicating efficient scaling with this approach.

The findings establish that respecting the latent topology, rather than simply increasing model width, is crucial for efficient generative modelling. Amplifying the norm of generated latents improves fidelity, which suggests the decoder is sensitive to feature magnitude; the authors nonetheless acknowledge limitations in the projection radius used during inference. Future research may focus on optimising this radius and on exploring the broader implications of manifold alignment for generative modelling across diverse latent spaces.
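To make the inference-time projection concrete, here is a hedged sketch of sampling on the unit sphere followed by a rescale to a chosen radius. Everything here is an assumption for illustration: `toy_velocity` stands in for a trained network, Euler steps are taken with the sphere's exponential map so every iterate stays on the surface, and `radius` is a free parameter rather than the value the authors use.

```python
import numpy as np

def exp_map(x, v):
    """Exponential map on the unit sphere: follow the geodesic from x with initial velocity v."""
    speed = np.linalg.norm(v)
    if speed < 1e-12:
        return x
    return np.cos(speed) * x + np.sin(speed) * v / speed

def log_map(x, y):
    """Inverse of exp_map: tangent vector at x pointing along the geodesic to y."""
    cos_theta = np.clip(np.dot(x, y), -1.0, 1.0)
    theta = np.arccos(cos_theta)
    if theta < 1e-12:
        return np.zeros_like(x)
    return theta * (y - cos_theta * x) / np.sin(theta)

def sample(velocity_fn, x0, num_steps=50, radius=1.0):
    """Euler integration on the unit sphere, then rescaling to a (hypothetical) projection radius."""
    x = x0 / np.linalg.norm(x0)
    h = 1.0 / num_steps
    for i in range(num_steps):
        t = i * h
        v = velocity_fn(x, t)
        v = v - np.dot(v, x) * x  # keep only the tangent component
        x = exp_map(x, h * v)     # geodesic step: x stays on the sphere
    return radius * x

rng = np.random.default_rng(0)
target = rng.standard_normal(768)
target /= np.linalg.norm(target)  # stand-in for a clean, normalized latent
# Toy field: head along the geodesic to `target`, arriving at t = 1 (placeholder for a DiT).
toy_velocity = lambda x, t: log_map(x, target) / (1.0 - t)
z = sample(toy_velocity, rng.standard_normal(768), num_steps=50, radius=1.2)
print(f"|z| = {np.linalg.norm(z):.4f}, cosine to target = {z @ target / np.linalg.norm(z):.6f}")
```

Separating the on-sphere integration from the final `radius` rescale keeps the direction, which carries the semantic content, independent of the magnitude the decoder sees. That magnitude is exactly the knob the authors flag as needing further study.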

🗞 Learning on the Manifold: Unlocking Standard Diffusion Transformers with Representation Encoders

🧠 ArXiv: https://arxiv.org/abs/2602.10099