Researchers are tackling limitations in scaling Mixture-of-Experts (MoE) models, which have shown considerable promise in large-scale applications. Amirhossein Vahidi, Hesam Asadollahzadeh, and Navid Akhavan Attar from the Wellcome Sanger Institute and University of Melbourne, alongside Marie Moullet, Kevin Ly, and Xingyi Yang, present a new routing mechanism called Dirichlet-Routed MoE (DirMoE). The work is significant because existing routers, which are also harder to scale, conflate two decisions: which experts to select and how to weight their contributions. DirMoE uses a Dirichlet variational autoencoder framework to disentangle these two routing problems, making the router fully differentiable and giving precise control over model sparsity. The team demonstrate that their method matches or surpasses the performance of current state-of-the-art routers while also improving expert specialisation.

Existing MoE routers often rely on Top-k+Softmax gating. Its hard Top-k selection step is non-differentiable, which hinders performance and scalability. It also conflates two distinct decisions: which experts to activate and how to distribute contributions among them.
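For contrast, here is a minimal plain-Python sketch of a conventional Top-k+Softmax gate. The function name is hypothetical, and real MoE layers operate on batched tensors rather than lists. The sketch shows the conflation described above: both the choice of experts and their weights are read off the same logits.

```python
import math

def topk_softmax_gate(logits, k):
    """Conventional Top-k+Softmax routing over one token's expert logits."""
    if not 1 <= k <= len(logits):
        raise ValueError("k must be between 1 and the number of experts")
    # Selection: indices of the k largest logits (a hard, non-differentiable step).
    top = sorted(range(len(logits)), key=lambda i: logits[i], reverse=True)[:k]
    # Weighting: softmax over the *same* logits, restricted to the selected experts.
    m = max(logits[i] for i in top)  # subtract the max for numerical stability
    exps = {i: math.exp(logits[i] - m) for i in top}
    total = sum(exps.values())
    return [exps[i] / total if i in exps else 0.0 for i in range(len(logits))]

weights = topk_softmax_gate([2.0, 0.5, 1.0, -1.0], k=2)
print([round(w, 3) for w in weights])  # [0.731, 0.0, 0.269, 0.0]
```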

Separating Expert Selection from Contribution Distribution

This work introduces Dirichlet-Routed MoE (DirMoE), a system built on a Dirichlet variational autoencoder framework that fundamentally separates these two core routing problems. A Bernoulli component models expert selection. A Dirichlet component models how contributions are distributed among the chosen experts. Together they give finer-grained control over MoE layers.

The entire DirMoE forward pass is fully differentiable. Expert selection uses a Gumbel-Sigmoid relaxation, and the Dirichlet component uses implicit reparameterisation. A key innovation lies in the training objective: a variational expectation-maximisation scheme built on the evidence lower bound (ELBO). The objective incorporates a direct sparsity penalty that precisely controls the expected number of active experts.
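The article does not give the exact form of the sparsity penalty. As a minimal sketch, assume independent Bernoulli gates with probabilities σ(l_i). By linearity of expectation, the expected number of active experts is then just the sum of those probabilities. A penalty can pull that sum towards a target k. The function names, `target_k`, and the squared-gap form are hypothetical illustrations, not the paper's definition.

```python
import math

def sigmoid(x):
    """Numerically stable logistic function."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)
    return e / (1.0 + e)

def expected_active_experts(gate_logits):
    """E[sum_i z_i] for independent z_i ~ Bernoulli(sigmoid(l_i))."""
    return sum(sigmoid(l) for l in gate_logits)

def sparsity_penalty(gate_logits, target_k, weight=1.0):
    """Hypothetical penalty: squared gap between expected and target active count."""
    gap = expected_active_experts(gate_logits) - target_k
    return weight * gap * gap

logits = [0.0, 0.0, 0.0, 0.0]  # each gate is open with probability 0.5
print(expected_active_experts(logits))       # 2.0
print(sparsity_penalty(logits, target_k=1))  # 1.0
```

Because the expected count is a smooth function of the logits, a penalty of this kind can be minimised by gradient descent alongside the rest of the objective.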

Training is further guided by a schedule on key hyperparameters that moves the model from exploratory routing to definitive expert assignments. The resulting architecture matches or exceeds the performance of current methods and demonstrably improves expert specialisation. DirMoE thereby addresses two limitations of existing approaches: the lack of end-to-end gradients and the entanglement of expert selection with contribution weighting.

Enabling Explicit Sparsity Control and Calibration Mechanisms

By factorising routing into a binary selection vector and a Dirichlet distribution, the system offers explicit calibration and control. A standard aggregation property of the Dirichlet means that the total mass assigned to any subset of experts follows a Beta distribution. This enables a one-parameter sparsity control that decouples the number of active experts from their overall contribution.
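The Beta claim follows from the aggregation property of the Dirichlet distribution. Let θ be the Dirichlet proportions over E experts, with concentrations α_1, …, α_E, and let S be any subset of experts. Then:

```latex
\theta \sim \mathrm{Dir}(\alpha_1,\dots,\alpha_E)
\;\Longrightarrow\;
\sum_{i\in S}\theta_i \sim \mathrm{Beta}\Big(\sum_{i\in S}\alpha_i,\;\sum_{i\notin S}\alpha_i\Big),
\qquad
\mathbb{E}\Big[\sum_{i\in S}\theta_i\Big] = \frac{\sum_{i\in S}\alpha_i}{\sum_{j=1}^{E}\alpha_j}.
```

As an illustration, assume the k selected experts share concentration α_hi and the other E − k share α_lo, as in the two-level parameterisation described later. The mass on the selected set is then Beta(kα_hi, (E − k)α_lo). With E = 8, k = 2, α_hi = 5 and α_lo = 0.1, this is Beta(10, 0.6), whose mean is 10/10.6 ≈ 0.94. These numbers are chosen for illustration and are not values from the paper.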

This design retains the benefits of full differentiability while adding a transparent sparsity knob for calibrating expert selection and contribution, paving the way for more efficient and interpretable large-scale language models. Demonstrations across multiple datasets and tasks confirm competitive zero-shot results and enhanced expert specialisation.

Bernoulli-Dirichlet Routing and Variational Expectation-Maximisation Training

Core Principles of the DirMoE Routing Framework

Dirichlet-Routed Mixture-of-Experts (DirMoE) employs a novel routing mechanism founded on a Dirichlet variational autoencoder framework to address limitations in existing sparse Mixture-of-Experts layers. The core of this work lies in disentangling expert selection from the distribution of contributions among those experts, a challenge present in standard Top-k+Softmax approaches.

This is achieved by implementing a Bernoulli component for expert selection and a Dirichlet component to manage expert contribution, ensuring a fully differentiable forward pass. Gumbel-Sigmoid relaxation facilitates expert selection, while implicit reparameterization handles the Dirichlet distribution, allowing gradients to flow unimpeded throughout the network.
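A minimal sketch of the Gumbel-Sigmoid (binary Concrete) relaxation for a single expert gate, in plain Python for illustration. The function names are hypothetical. The construction itself is standard: the difference of two Gumbel(0, 1) variables is Logistic(0, 1) noise, which is added to the logit before a temperature-scaled sigmoid.

```python
import math
import random

def stable_sigmoid(x):
    """Logistic function that avoids overflow for large |x|."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)
    return e / (1.0 + e)

def gumbel_sigmoid(logit, temperature, rng=random):
    """Relaxed Bernoulli sample in (0, 1) for one expert gate.

    Logistic(0, 1) noise (= difference of two Gumbel(0, 1) draws) is sampled
    as log(u) - log(1 - u) with u ~ Uniform(0, 1).
    """
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    u = min(max(rng.random(), 1e-12), 1.0 - 1e-12)  # keep both logs finite
    logistic_noise = math.log(u) - math.log(1.0 - u)
    return stable_sigmoid((logit + logistic_noise) / temperature)

rng = random.Random(0)
warm = [gumbel_sigmoid(1.0, temperature=1.0, rng=rng) for _ in range(5)]
cold = [gumbel_sigmoid(1.0, temperature=0.05, rng=rng) for _ in range(5)]
print([round(s, 3) for s in warm])  # smoother, intermediate values
print([round(s, 3) for s in cold])  # typically pushed towards 0 or 1
```

The sample is a smooth function of `logit`, so gradients can flow back through the selection step. As the temperature falls, samples approach hard 0/1 gates while P(sample > 0.5) stays equal to σ(logit). Annealing this temperature is one plausible part of a routing schedule, but the article does not say which hyperparameters DirMoE schedules.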

The training objective is a variational expectation-maximisation scheme that maximises an evidence lower bound (ELBO) with a direct sparsity penalty. This penalty precisely controls the expected number of active experts. It is combined with a hyperparameter schedule that moves the model from exploratory routing to a definitive state. Each input token embedding x is processed by two heads, α_hi(x) and α_lo(x), which learn the concentrations of active and inactive experts respectively, alongside the gating logits l(x).

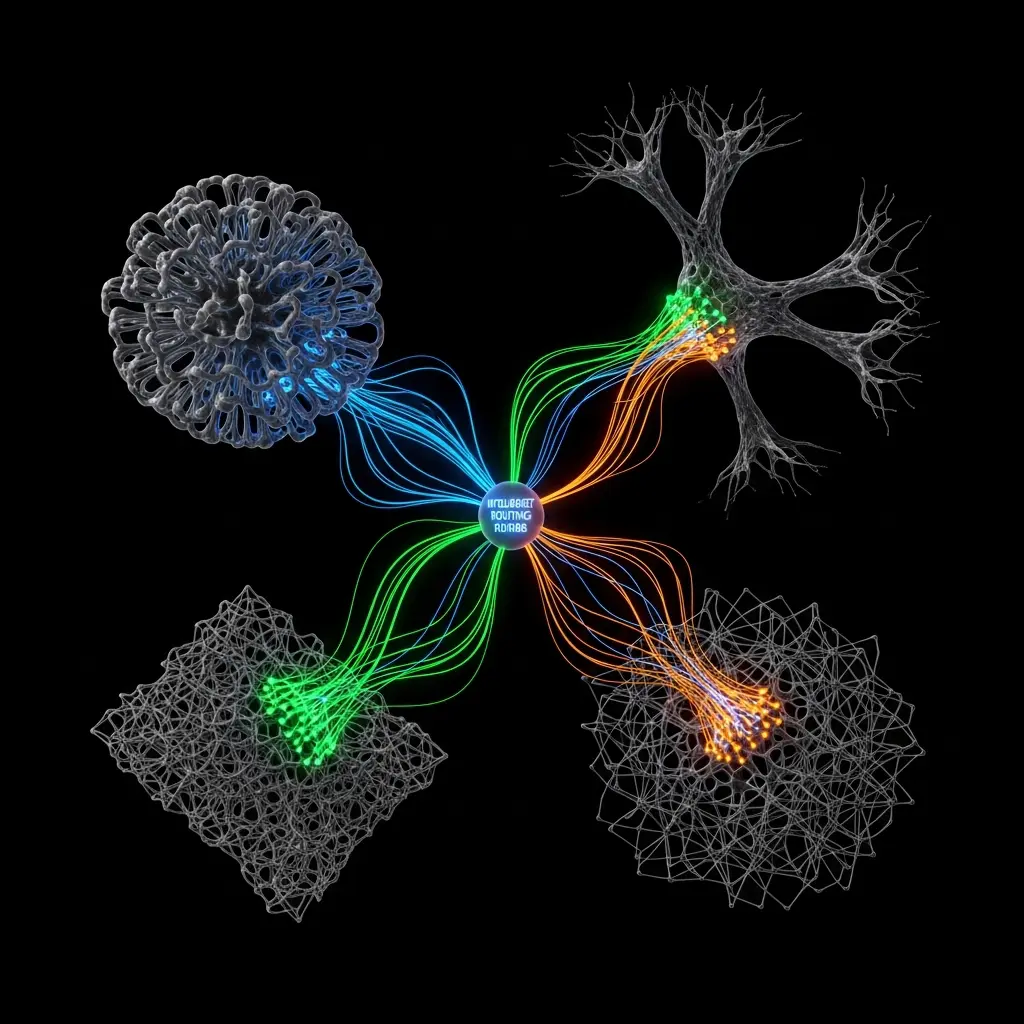

The routing probabilities are the element-wise product of the expert selection vector and the Dirichlet probabilities (which represent expert contributions), renormalised to sum to one. Figure 1 illustrates this process, showing how the router generates a relaxed expert selection vector and a Dirichlet distribution.
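A minimal sketch of that combination step, written for one token with plain lists. The function name is hypothetical. With a hard selection vector, unselected experts get exactly zero weight. With a relaxed vector they keep a small non-zero weight. How DirMoE handles this at inference is not stated in the article.

```python
def combine_routing(selection, dirichlet_probs):
    """Routing weights p_i = z_i * theta_i / sum_j (z_j * theta_j).

    `selection` is the (possibly relaxed) expert selection vector z and
    `dirichlet_probs` the Dirichlet proportions theta over all experts.
    """
    if len(selection) != len(dirichlet_probs):
        raise ValueError("selection and dirichlet_probs must have the same length")
    masked = [z * t for z, t in zip(selection, dirichlet_probs)]
    total = sum(masked)
    if total <= 0.0:
        raise ValueError("no probability mass on any selected expert")
    return [m / total for m in masked]

weights = combine_routing([1.0, 0.0, 1.0, 0.0], [0.4, 0.3, 0.2, 0.1])
print([round(w, 3) for w in weights])  # [0.667, 0.0, 0.333, 0.0]
```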

This design allows for explicit control over both the number of participating experts and the allocation of probability mass among them. A key innovation is the use of a one-parameter sparsity knob, calibrated via a Beta distribution, to decouple the number of active experts from their collective contribution. This calibration mechanism enhances interpretability and stability, improving expert specialisation and addressing issues of uneven load distribution observed in previous methods.

Demonstrable expert specialisation via controllable sparsity in Dirichlet-Routed Mixture-of-Experts

Dirichlet-Routed Mixture-of-Experts (DirMoE) introduces a novel routing mechanism that achieves full differentiability and improved expert specialisation. The research measures sparsity with the Simpson index. The index ranges from 1/E, when probability mass is spread evenly over E experts, to 1, when all mass sits on a single expert. The work shows that the concentration of the routing distribution can be controlled within that range.

This metric quantifies sparsity, with higher values indicating greater concentration of probability mass on fewer experts. The work models MoE routing with a spike-and-slab prior, separating expert selection from contribution distribution. Specifically, the DirMoE router uses Gumbel-Sigmoid relaxation for expert selection and implicit reparameterisation for Dirichlet samples, enabling end-to-end gradients.
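A short sketch of the Simpson index, assuming the standard definition Σ p_i². That definition is consistent with the range and interpretation given above, but the paper's exact formula is not quoted here. The function name is hypothetical.

```python
def simpson_index(probs):
    """Simpson index sum_i p_i^2 of a (re-normalised) routing distribution.

    Equals 1/E for a uniform distribution over E experts and 1 when all
    mass sits on one expert; higher means more concentrated routing.
    """
    total = sum(probs)
    if total <= 0:
        raise ValueError("probabilities must have positive total mass")
    return sum((p / total) ** 2 for p in probs)

E = 8
print(simpson_index([1.0 / E] * E))                 # 0.125 (= 1/E)
print(simpson_index([1.0] + [0.0] * (E - 1)))       # 1.0
print(simpson_index([0.5, 0.5] + [0.0] * (E - 2)))  # 0.5
```

The last case shows a useful reading of the index: its reciprocal acts as an "effective number of experts", here 2.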

A sparsity coefficient, termed the ‘sparsity knob’, allows precise control over router sparsity, providing a calibration mechanism for specialisation. The design fundamentally disentangles expert selection, handled by a Bernoulli component, and expert contribution among chosen experts, managed by a Dirichlet component.

The study introduces a variational training objective based on the ELBO, incorporating a direct sparsity penalty that controls the expected number of active experts. Key hyperparameters are scheduled to guide routing from exploratory to definitive states. Dirichlet samples are drawn by normalising independent Gamma samples, and implicit reparameterisation supplies the gradients, so the forward pass stays fully differentiable.
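The Gamma-normalisation construction can be sketched with Python's standard `random.gammavariate`. This shows only the sampling side. The standard library has no automatic differentiation, so the implicit reparameterisation gradients are not reproduced here. Frameworks such as PyTorch expose a reparameterised `rsample` on `torch.distributions.Dirichlet` for that purpose. The function name below is hypothetical.

```python
import random

def sample_dirichlet(alpha, rng=random):
    """Draw theta ~ Dir(alpha) by normalising independent Gamma(alpha_i, 1) draws."""
    if not alpha or any(a <= 0 for a in alpha):
        raise ValueError("concentrations must be a non-empty list of positive numbers")
    gammas = [rng.gammavariate(a, 1.0) for a in alpha]  # shape alpha_i, scale 1
    total = sum(gammas)
    return [g / total for g in gammas]  # a point on the probability simplex

rng = random.Random(0)
theta = sample_dirichlet([5.0, 5.0, 0.1, 0.1], rng=rng)
print(theta)  # random simplex point; most mass usually on the first two experts
```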

The system employs a two-level concentration parameter α, with values α_hi > α_lo > 0, and a global scale λ to regulate the concentration of active and inactive experts. Furthermore, the research demonstrates strong performance across multiple datasets and tasks, achieving competitive zero-shot results and enhanced expert specialisation. The use of relaxed gates avoids mixing discrete and continuous objects, maintaining a closed-form Kullback-Leibler divergence for efficient training.
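A sketch of how the pieces might fit together, under an assumption. The rule used here is α_i = λ·(z_i·α_hi + (1 − z_i)·α_lo): selected experts receive α_hi and the others α_lo, all scaled by λ. This is our reading of the description above and may differ from the paper. Because a relaxed gate z only changes the concentrations smoothly, the KL divergence between two Dirichlets keeps its standard closed form, implemented below. Python's standard library has `math.lgamma` but no digamma, so a recurrence-plus-asymptotic-series approximation is included. All function names are hypothetical.

```python
import math

def digamma(x):
    """Digamma psi(x) for x > 0: shift x upward, then use the asymptotic series."""
    if x <= 0:
        raise ValueError("digamma is only implemented for x > 0 here")
    result = 0.0
    while x < 6.0:  # psi(x) = psi(x + 1) - 1/x
        result -= 1.0 / x
        x += 1.0
    inv2 = 1.0 / (x * x)
    # psi(x) ~ ln x - 1/(2x) - 1/(12x^2) + 1/(120x^4) - 1/(252x^6)
    result += math.log(x) - 0.5 / x - inv2 * (1.0 / 12 - inv2 * (1.0 / 120 - inv2 / 252))
    return result

def two_level_concentration(selection, alpha_hi, alpha_lo, scale):
    """Hypothetical rule: alpha_i = scale * (z_i * alpha_hi + (1 - z_i) * alpha_lo)."""
    if not (alpha_hi > alpha_lo > 0 and scale > 0):
        raise ValueError("need alpha_hi > alpha_lo > 0 and scale > 0")
    return [scale * (z * alpha_hi + (1.0 - z) * alpha_lo) for z in selection]

def kl_dirichlet(a, b):
    """Closed-form KL(Dir(a) || Dir(b))."""
    if len(a) != len(b):
        raise ValueError("concentration vectors must have the same length")
    a0, b0 = sum(a), sum(b)
    kl = math.lgamma(a0) - math.lgamma(b0)
    kl += sum(math.lgamma(bi) - math.lgamma(ai) for ai, bi in zip(a, b))
    psi_a0 = digamma(a0)
    kl += sum((ai - bi) * (digamma(ai) - psi_a0) for ai, bi in zip(a, b))
    return kl

posterior = two_level_concentration([1.0, 0.0, 1.0, 0.0], alpha_hi=5.0, alpha_lo=0.5, scale=1.0)
prior = [1.0, 1.0, 1.0, 1.0]  # illustrative flat Dirichlet prior
print(posterior)  # [5.0, 0.5, 5.0, 0.5]
print(kl_dirichlet(posterior, prior))
```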

Disentangling expert selection and weighting enhances specialisation and sparsity

Dirichlet-Routed Mixture-of-Experts (DirMoE) introduces a new differentiable routing mechanism for large-scale models. This approach addresses limitations in existing routers that conflate expert selection and contribution weighting, instead disentangling these processes into separate Bernoulli and Dirichlet components.

The resulting design allows for explicit control over sparsity and calibrated concentration of expert activations, all while maintaining full differentiability throughout the forward pass. Empirical evaluation demonstrates that DirMoE achieves strong performance and improved expert specialisation compared to conventional Mixture-of-Experts architectures.

Specifically, the method exhibits better domain specialisation across layers, avoiding the homogenisation of experts seen in systems that enforce equal contribution. The training objective incorporates a sparsity penalty, precisely controlling the expected number of active experts and guiding the routing process from exploration to definitive assignment.

Acknowledged limitations include the need for further investigation into model robustness, although the probabilistic formulation and concentration control offer potential improvements in this area. Future research directions focus on leveraging the disentangled routing variables to enhance interpretability, potentially revealing clearer and more focused expert specialisations. These findings establish a pathway towards more controllable and efficient large language models with improved expert utilisation and potentially greater resilience.

🗞 DirMoE: Dirichlet-routed Mixture of Experts

🧠 arXiv: https://arxiv.org/abs/2602.09001