Researchers are beginning to unravel the mystery of how artificial intelligence ‘feels’, with a new study pinpointing a key component within large language models responsible for processing emotion. Zhen Zhang, Runhao Zeng, and Sicheng Zhao from Shenzhen MSU-BIT University and Tsinghua University, alongside Xiping Hu, investigated the internal workings of multimodal foundation models to understand how they represent and generate affective responses. Their work indicates that emotional intelligence is not spread evenly across the model architecture, but localises to a specific component called ‘gate_proj’, the feed-forward gating projection. By restricting adaptation to this module, which amounts to approximately 24.5% of the parameters tuned by AffectGPT, the team achieved 96.6% of AffectGPT’s average performance, suggesting a pathway to more efficient and understandable AI emotion processing.

The study finds that emotion-oriented supervision does not primarily reshape the attention modules, but instead concentrates adaptation within the feed-forward gating projection, termed ‘gate_proj’. This challenges previous assumptions linking affective capabilities to mechanisms similar to those used for reasoning, and suggests a distinct structural basis for emotional intelligence in artificial systems.

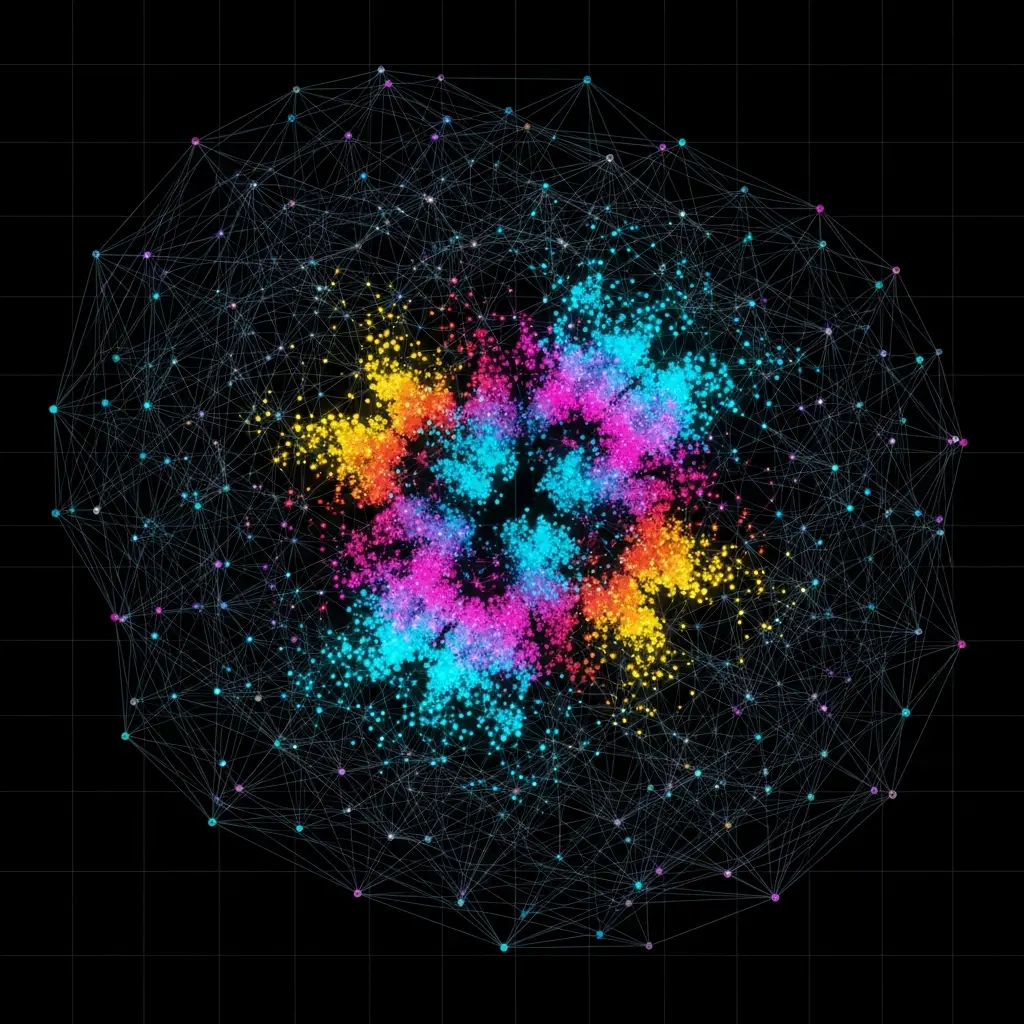

Architectural Evidence: How Affective Skills Emerge

The team reached this conclusion by analysing parameter changes in multiple foundation models, including multimodal emotion models, a depression recognition model, and a text-based sentiment model. Comparing base models with their affectively adapted counterparts, they consistently observed larger and more structured changes in ‘gate_proj’ than in other components. Controlled module transfer experiments reinforced this finding: transferring only the ‘gate_proj’ parameters from an affective model into a base model consistently improved performance across various emotion-related tasks while preserving general language fluency. This suggests that ‘gate_proj’ is not merely correlated with affective understanding, but causally contributes to it.
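A module-wise comparison of this kind can be sketched in a few lines. The example below is a minimal illustration, not the authors' code: it assumes Hugging Face-style parameter names such as `model.layers.0.mlp.gate_proj.weight`, represents weights as plain nested lists, and uses the relative Frobenius norm as the change metric, which is an assumption since the article does not state the metric used.

```python
import math
import re
from collections import defaultdict

# Assumed naming scheme: "...<projection>.weight" (Hugging Face LLaMA-style).
PROJ_PATTERN = re.compile(
    r"\.(q_proj|k_proj|v_proj|o_proj|gate_proj|up_proj|down_proj)\.weight$"
)


def frobenius(matrix):
    """Frobenius norm of a matrix stored as a list of rows."""
    return math.sqrt(sum(v * v for row in matrix for v in row))


def relative_change_by_module(base, adapted):
    """Average relative change ||W_adapted - W_base|| / ||W_base|| per projection type."""
    per_module = defaultdict(list)
    for name, w_base in base.items():
        match = PROJ_PATTERN.search(name)
        if match is None or name not in adapted:
            continue  # skip parameters outside the seven projections
        denom = frobenius(w_base)
        if denom == 0.0:
            continue  # relative change is undefined for an all-zero base matrix
        diff = [
            [a - b for a, b in zip(row_a, row_b)]
            for row_a, row_b in zip(adapted[name], w_base)
        ]
        per_module[match.group(1)].append(frobenius(diff) / denom)
    return {module: sum(vals) / len(vals) for module, vals in per_module.items()}


# Toy weights (hypothetical values): only gate_proj differs after adaptation.
base = {
    "model.layers.0.self_attn.q_proj.weight": [[1.0, 0.0], [0.0, 1.0]],
    "model.layers.0.mlp.gate_proj.weight": [[1.0, 0.0], [0.0, 1.0]],
}
adapted = {
    "model.layers.0.self_attn.q_proj.weight": [[1.0, 0.0], [0.0, 1.0]],
    "model.layers.0.mlp.gate_proj.weight": [[2.0, 0.0], [0.0, 2.0]],
}
changes = relative_change_by_module(base, adapted)
```

In this toy case `changes` reports no change for `q_proj` and a relative change of 1 for `gate_proj`; on real checkpoints the same grouping would show which projection types moved most during affective fine-tuning.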

Notably, the research indicates that ‘gate_proj’ is both sufficient and efficient for affective processing. Through targeted single-module adaptation, the scientists showed that fine-tuning only this module yields more effective and stable affective performance than fine-tuning attention-based projections. This led to the development of Gate-Focused Efficient Tuning (GET), a strategy that restricts affective adaptation solely to the gating pathway. GET achieves 96.6% of the average performance of AffectGPT across eight affective tasks while tuning only approximately 24.5% of the parameters tuned by AffectGPT, a substantial improvement in parameter efficiency.
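The core idea of restricting adaptation to the gating pathway can be illustrated as a name-based freezing rule. This is a hedged sketch, not the GET implementation: the `Param` class is a hypothetical stand-in for a framework parameter, the parameter names follow the Hugging Face LLaMA-style convention as an assumption, and the toy sizes are arbitrary, so the resulting fraction is unrelated to the 24.5% figure reported in the study.

```python
class Param:
    """Hypothetical stand-in for a framework parameter (illustration only)."""

    def __init__(self, n):
        self._n = n
        self.requires_grad = True

    def numel(self):
        return self._n


def restrict_to_gate(named_params, target="gate_proj"):
    """Freeze every parameter whose name lacks `target`.

    Returns the fraction of parameter elements left trainable.
    """
    trainable = 0
    total = 0
    for name, param in named_params:
        param.requires_grad = target in name
        total += param.numel()
        if param.requires_grad:
            trainable += param.numel()
    return trainable / total if total else 0.0


# Toy single-layer model with arbitrary sizes (assumed, not from the paper).
toy_params = [
    ("model.layers.0.self_attn.q_proj.weight", Param(16)),
    ("model.layers.0.self_attn.k_proj.weight", Param(16)),
    ("model.layers.0.self_attn.v_proj.weight", Param(16)),
    ("model.layers.0.self_attn.o_proj.weight", Param(16)),
    ("model.layers.0.mlp.gate_proj.weight", Param(32)),
    ("model.layers.0.mlp.up_proj.weight", Param(32)),
    ("model.layers.0.mlp.down_proj.weight", Param(32)),
]
fraction = restrict_to_gate(toy_params)
trainable_names = [name for name, p in toy_params if p.requires_grad]
```

With a PyTorch model, the same function could in principle be passed `model.named_parameters()`, since those parameters also expose `requires_grad` and `numel()`.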

These findings provide empirical evidence that affective capabilities in foundation models are structurally mediated by feed-forward gating mechanisms, identifying ‘gate_proj’ as a central architectural locus for affective modelling. The work opens possibilities for designing more efficient, controllable, and interpretable affective AI systems, potentially leading to advances in areas like mental health support, human-computer interaction, and emotionally intelligent robotics. This mechanistic understanding is a step towards ‘opening the black box’ of affective computing and building AI that can better understand and respond to human emotions.

Affective Parameter Adaptation in Multimodal Foundation Models

Mechanistic Study of Emotion in Multimodal Foundations

Scientists initiated a systematic mechanistic study to unravel affective modelling within multimodal foundation models, focusing on how emotion-oriented supervision reshapes internal parameters. Researchers analysed multiple architectures, training strategies, and affective tasks to pinpoint the architectural locus of affective understanding and generation, moving beyond simply improving task accuracy. The study pioneered a module-wise parameter analysis, comparing affectively adapted models with their base counterparts to determine if adaptation was structurally selective, rather than diffuse, and thereby attributable to affective supervision itself. Experiments employed a diverse collection of affective foundation models and tasks, including multimodal emotion understanding, sentiment analysis, and mental health assessment, regarding them as controlled instances of affective adaptation.

The team isolated the large language model (LLM) component, freezing the multimodal encoders during fine-tuning to concentrate exclusively on the architectural mechanisms responsible for affective modelling. Specifically, researchers examined the attention projection layers {q, k, v, o}_proj and the feed-forward network (FFN) projections {gate, up, down}_proj, enabling a fine-grained analysis of how affective supervision interacts with distinct functional components of the Transformer block. This decomposition allowed for precise identification of parameter reconfiguration patterns. The work revealed a consistent and robust pattern: affective adaptation primarily localises to the feed-forward gating projection (‘gate_proj’).
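To make the role of the gating projection concrete, the sketch below shows where it sits in a gated feed-forward block. It assumes the LLaMA-style layout that the names gate, up, and down conventionally denote, with a SiLU activation on the gate branch; the article does not spell out the exact formulation, so treat both as assumptions.

```python
import math


def silu(x):
    """SiLU activation: x * sigmoid(x)."""
    return x / (1.0 + math.exp(-x))


def matvec(weight, vec):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(w * v for w, v in zip(row, vec)) for row in weight]


def gated_ffn(x, w_gate, w_up, w_down):
    """Gated FFN: down_proj(silu(gate_proj(x)) * up_proj(x))."""
    gate = [silu(g) for g in matvec(w_gate, x)]   # decides which features pass
    up = matvec(w_up, x)                          # carries the feature values
    hidden = [g * u for g, u in zip(gate, up)]    # element-wise modulation
    return matvec(w_down, hidden)


identity = [[1.0, 0.0], [0.0, 1.0]]
zeros = [[0.0, 0.0], [0.0, 0.0]]
x = [1.0, 2.0]

# A gate projection that outputs zeros suppresses every hidden feature.
closed = gated_ffn(x, zeros, identity, identity)
# A strongly positive gate lets the up-projected features through, scaled.
opened = gated_ffn(x, [[10.0, 0.0], [0.0, 10.0]], identity, identity)
```

Because the gate multiplies the up-projected features element-wise, changing only the gate weights alters which features are emphasised without rewriting the features themselves, which is consistent with the selective modulation described in the study.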

Controlled module transfer experiments indicated that ‘gate_proj’ is sufficient, efficient, and necessary for both affective understanding and generation, underlining its central role. Targeted single-module adaptation further supported this finding. Notably, by tuning only approximately 24.5% of the parameters tuned by AffectGPT, the approach achieved 96.6% of its average performance across eight affective tasks, highlighting substantial parameter efficiency. This methodology, focused on module-level reconfiguration, enabled the team to show that affective capabilities are structurally mediated by feed-forward gating. The findings provide empirical evidence identifying ‘gate_proj’ as a central architectural locus of affective modelling, and offer a deeper view of how these models ‘feel’ that goes beyond task-level performance metrics.

Gate_proj drives affective modelling in AI

Gate Projection: Central Locus for Affective AI Modeling

Scientists have pinpointed the feed-forward gating projection, or ‘gate_proj’, as the primary architectural locus for affective modelling in multimodal foundation models. The research team conducted a systematic mechanistic study, analysing how emotion-oriented supervision reshapes internal model parameters across multiple architectures and training strategies. Results consistently show that affective adaptation localises to ‘gate_proj’ rather than the attention module, revealing a clear and robust pattern in how models process emotion. Through controlled module transfer and ablation experiments, the team showed that ‘gate_proj’ is both sufficient and necessary for affective understanding and generation, establishing its critical role in emotional AI.

Experiments revealed that the team's approach, Gate-Focused Efficient Tuning (GET), achieves 96.6% of AffectGPT’s average performance across eight diverse affective tasks while tuning only approximately 24.5% of the parameters tuned by AffectGPT. This is a substantial improvement in parameter efficiency, suggesting that focusing on ‘gate_proj’ significantly reduces the cost of adaptation with only a small loss in performance. The data show that emotion-oriented supervision induces larger and more structured changes in ‘gate_proj’ than in other components, indicating that selective feature modulation, rather than global information reorganisation, is key to affective modelling. Researchers further validated these findings through targeted single-module adaptation, showing that ‘gate_proj’ contributes more effectively and stably to affective performance than attention-based projections.

Parameter inheritance experiments showed that transferring ‘gate_proj’ parameters from an affective model into a base model yields consistent improvements in emotion-related tasks while preserving general language fluency. Destructive ablation studies supported the necessity of ‘gate_proj’ for affective capabilities, reinforcing its central role. Together, the results provide empirical evidence that affective capabilities in foundation models are structurally mediated by feed-forward gating mechanisms, offering a mechanistic basis for designing more efficient and controllable affective adaptation. GET achieves performance comparable to AffectGPT (96.6% of its average) while substantially reducing the number of tuned parameters, which could make affective AI systems more sustainable and accessible. This work identifies ‘gate_proj’ as a central architectural locus, opening the black box of affective modelling and providing insights for future research.
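Both experiments can be viewed as the same operation run in opposite directions. The sketch below is an illustration under stated assumptions rather than the authors' procedure: weights are plain dictionaries keyed by Hugging Face-style names, `transfer_module` is a hypothetical helper, and ablation is interpreted here as resetting the adapted ‘gate_proj’ weights to their base values, which may differ from the destructive ablation used in the study.

```python
import copy


def transfer_module(recipient, donor, key="gate_proj"):
    """Return a copy of `recipient` whose `key` weights are taken from `donor`.

    Neither input dictionary is modified.
    """
    merged = copy.deepcopy(recipient)
    for name, weight in donor.items():
        if key in name and name in merged:
            merged[name] = copy.deepcopy(weight)
    return merged


# Toy checkpoints with hypothetical values.
Q = "model.layers.0.self_attn.q_proj.weight"
G = "model.layers.0.mlp.gate_proj.weight"
base = {Q: [[1.0]], G: [[1.0]]}
affective = {Q: [[1.5]], G: [[3.0]]}

# Inheritance: base model receives only the affective gate_proj.
inherited = transfer_module(base, affective)

# Ablation (assumed form): affective model has its gate_proj reset to base.
ablated = transfer_module(affective, base)
```

Evaluating `inherited` on emotion tasks tests sufficiency of the transferred module, while evaluating `ablated` tests necessity; the evaluation itself is outside the scope of this sketch.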

Gate_proj controls emotional response generation in the model

Scientists have identified a key architectural component within large language models responsible for processing and generating emotional responses. Researchers conducted a systematic mechanistic study of multimodal foundation models to understand how emotion-oriented supervision reshapes internal parameters, revealing that adaptation primarily localises to the feed-forward gating projection, or ‘gate_proj’. Through controlled experiments involving module transfer and ablation, the team showed that ‘gate_proj’ is both sufficient and necessary for affective understanding and generation, functioning as a central locus for emotional modelling. Notably, tuning only approximately 24.5% of the parameters tuned by AffectGPT achieves 96.6% of AffectGPT’s average performance across eight affective tasks, demonstrating substantial parameter efficiency.

This finding suggests that affective capabilities aren’t broadly distributed throughout the model, but are structurally mediated by this specific gating mechanism, offering a pathway to more streamlined and effective emotional AI. The authors acknowledge that their analysis focuses on a specific set of models and tasks, potentially limiting the generalizability of their findings. Future research could explore whether similar patterns emerge in different model architectures or with more diverse affective datasets, potentially extending the observed phenomenon to other modalities beyond text and image.

🗞 Opening the Black Box: Preliminary Insights into Affective Modeling in Multimodal Foundation Models

🧠 ArXiv: https://arxiv.org/abs/2601.15906