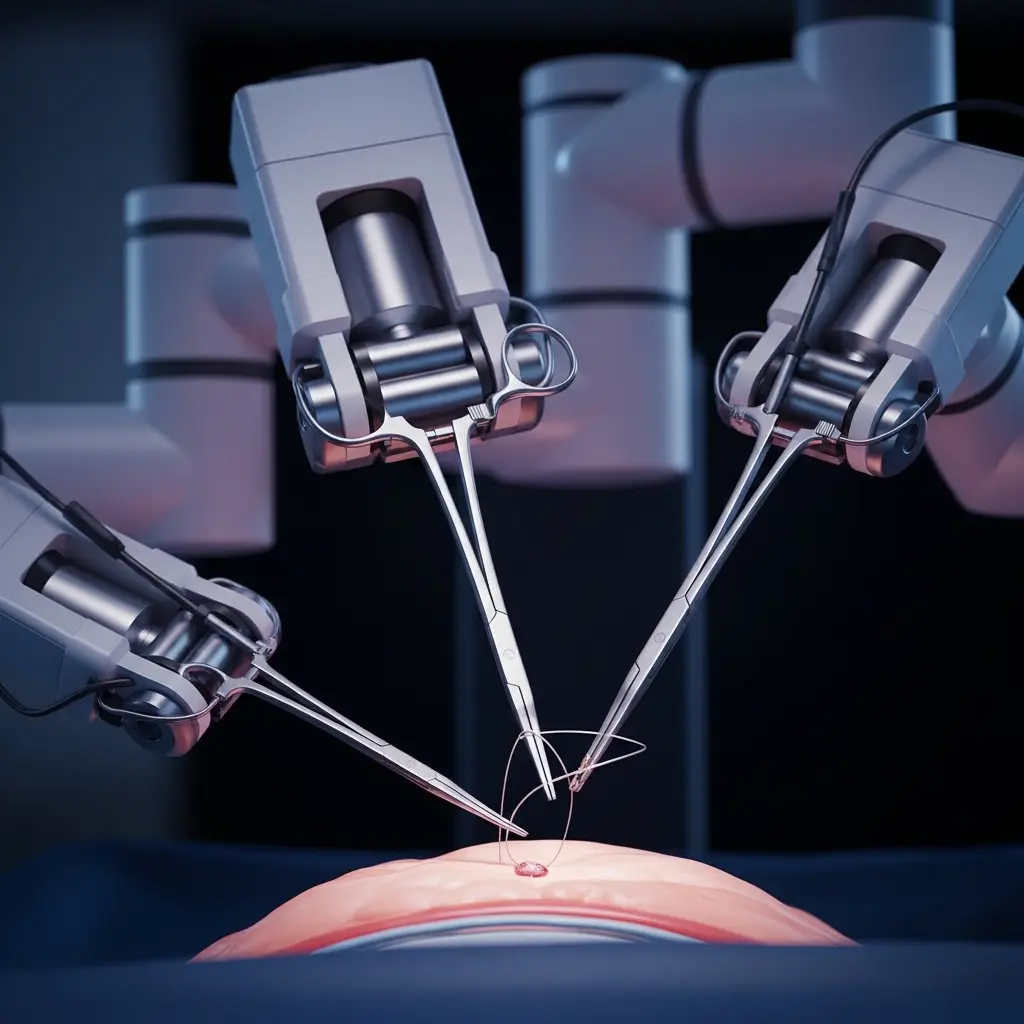

Surgical robotics increasingly demands robust and adaptable imitation learning policies, yet progress is hampered by limited training data and the critical need for precision. Lorenzo Mazza, Ariel Rodriguez, and Rayan Younis, from the Technical University of Dresden and the University Hospital Carl Gustav Carus, alongside Martin Lelis, Ortrun Hellig and Chenpan Li, address this challenge with MoE-ACT, a supervised Mixture-of-Experts architecture designed to enhance surgical imitation learning. Their research demonstrates that, with fewer than 150 demonstrations and only stereo endoscopic images as input, MoE-ACT significantly outperforms existing methods such as Vision-Language-Action (VLA) models and standard Action Chunking Transformer (ACT) policies. The advance is significant because the policy is more robust to variations in lighting, occlusion, and viewpoint and transfers zero-shot to ex vivo porcine tissue, with preliminary in vivo porcine trials pointing towards safer and more effective robotic surgical assistance.

MoE architecture learns surgical manipulation from sparse data

Designing the Mixture-of-Experts for Surgery

Scientists have demonstrated a significant advance in surgical robotics with a supervised Mixture-of-Experts (MoE) architecture for complex, phase-structured manipulation tasks. The work targets challenges inherent to imitation learning in surgery: scarce data, constrained workspaces, and the paramount need for safety and predictability. Unlike previous approaches that require multi-camera setups or datasets of thousands of demonstrations, the team learned intricate surgical manipulations from fewer than 150 demonstrations using only stereo endoscopic images. The core innovation is augmenting a lightweight action decoder policy, the Action Chunking Transformer (ACT), with the MoE architecture, enabling the robot to interpret visual cues, grasp deformable tissue, and sustain retraction during collaborative surgical procedures.
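The article does not specify how the experts' outputs are combined. A minimal sketch, assuming a gating network that scores each expert from shared visual features and experts that each output an action chunk (a short sequence of future actions, as in ACT): `moe_action_chunk`, `gate`, and `experts` are hypothetical names, and both soft mixing and hard top-1 routing are shown because the source does not say which one MoE-ACT uses.

```python
import math
from typing import Callable, List, Sequence

# An action chunk: k future actions, each an action vector of length d.
Chunk = List[List[float]]


def softmax(logits: Sequence[float]) -> List[float]:
    """Numerically stable softmax over the gating network's expert scores."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def moe_action_chunk(
    features: Sequence[float],
    gate: Callable[[Sequence[float]], Sequence[float]],
    experts: Sequence[Callable[[Sequence[float]], Chunk]],
    hard: bool = False,
) -> Chunk:
    """Hypothetical MoE action head on top of shared visual features.

    hard=False: soft mixture, a gate-weighted sum of every expert's chunk.
    hard=True: top-1 routing, only the highest-scoring expert is run.
    """
    weights = softmax(gate(features))
    if hard:
        best = max(range(len(weights)), key=lambda i: weights[i])
        return experts[best](features)
    chunks = [expert(features) for expert in experts]
    k, d = len(chunks[0]), len(chunks[0][0])
    # Element-wise weighted sum over experts for every timestep t and action dim j.
    return [
        [sum(w * chunk[t][j] for w, chunk in zip(weights, chunks)) for j in range(d)]
        for t in range(k)
    ]
```

With two experts and a gate that scores them equally, the soft mixture returns the element-wise average of the two chunks; `hard=True` instead executes a single expert, which keeps each phase's behaviour separated at the cost of a discrete switch between experts.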

The study focused on the collaborative surgical task of bowel grasping and retraction, in which a robotic assistant supports a human surgeon. The researchers benchmarked MoE-ACT against state-of-the-art Vision-Language-Action (VLA) models and the standard ACT baseline and found that the generalist VLAs failed to fully acquire the task even under ideal, in-distribution conditions. Standard ACT achieved moderate success, while adding the supervised MoE architecture yielded significantly higher success rates and greater robustness in challenging out-of-distribution scenarios, including novel grasp locations, reduced illumination, and partial occlusions.

Notably, the MoE-ACT policy generalized to unseen testing viewpoints and transferred zero-shot to ex vivo porcine tissue without any additional training, a promising step towards eventual in vivo deployment in live surgical environments. The researchers also present preliminary qualitative results from policy roll-outs during in vivo porcine surgery, illustrating the system's potential in a realistic surgical setting. These findings indicate that supervised MoE architectures offer a data-efficient pathway for learning multi-step dexterous manipulation in visually constrained environments and, with it, more reliable surgical robotic assistance.

MoE Architecture for Sparse Surgical Robotics Data

Scientists developed a supervised Mixture-of-Experts (MoE) architecture to address two obstacles to applying imitation learning in surgical robotics: data scarcity and constrained workspaces. The team designed the architecture as an add-on to existing autonomous policies, targeting phase-structured surgical manipulation tasks. They paired it with a lightweight action decoder policy, the Action Chunking Transformer (ACT), and trained it on fewer than 150 demonstrations using only stereo endoscopic images, avoiding the multi-camera setups and large demonstration datasets that previous surgical robot learning approaches typically require.
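As its name suggests, ACT predicts a chunk of several future actions per query rather than a single action. In the original ACT paper, the policy is queried at every step and the overlapping predictions for the same timestep are blended with exponential weights w_i = exp(-m * i), where i = 0 is the oldest prediction. The article does not say whether MoE-ACT keeps this temporal ensembling, so the sketch below only illustrates the baseline mechanism; `temporal_ensemble` and the default `m = 0.01` are assumptions.

```python
import math
from typing import Dict, List


def temporal_ensemble(
    chunks: Dict[int, List[List[float]]], t: int, m: float = 0.01
) -> List[float]:
    """Blend every stored prediction that covers timestep t.

    chunks maps a query timestep s to the action chunk predicted at s, so
    chunks[s][t - s] is that chunk's prediction for timestep t. Predictions
    are weighted w_i = exp(-m * i), with i = 0 for the oldest prediction.
    """
    preds = [
        chunk[t - s]
        for s, chunk in sorted(chunks.items())  # oldest query first
        if 0 <= t - s < len(chunk)
    ]
    if not preds:
        raise ValueError(f"no stored chunk covers timestep {t}")
    weights = [math.exp(-m * i) for i in range(len(preds))]
    total = sum(weights)
    # Normalised weighted average, one action dimension at a time.
    return [
        sum(w * p[j] for w, p in zip(weights, preds)) / total
        for j in range(len(preds[0]))
    ]
```

A smaller `m` incorporates new observations faster; `m = 0` reduces to a plain average of all overlapping predictions.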

Evaluating Robustness in Varied Surgical Conditions

Experiments centred on a collaborative surgical task, bowel grasping and retraction, in which a robotic assistant interprets visual cues from a surgeon and manipulates tissue precisely. The researchers collected demonstrations of human surgeons performing the task, recording the stereo endoscopic video together with the corresponding robot actions. They then benchmarked the MoE-enhanced ACT policy against state-of-the-art Vision-Language-Action (VLA) models and the standard ACT baseline, using success rate, the fraction of trials in which the robot correctly grasps and retracts the bowel tissue, as the quantitative measure.

The team tested the robustness of the method under out-of-distribution scenarios, including changed grasp locations, altered illumination, and partial occlusions. To evaluate generalisation, the trained policy was deployed on unseen testing viewpoints and transferred zero-shot to ex vivo porcine tissue without further training, which suggests potential for in vivo deployment. Preliminary qualitative results from policy roll-outs during in vivo porcine surgery further indicate that the approach is feasible in a realistic surgical environment. Across these tests, the MoE architecture significantly boosted ACT's performance, yielding higher success rates and improved robustness compared with both the ACT baseline and the VLA models.
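The article reports success rates per test condition but not how roll-outs were logged. A minimal sketch of the tabulation, assuming each roll-out is recorded as a (condition, success) pair; the condition names such as `"in_distribution"` are hypothetical labels, and no trial counts or results from the paper are reproduced.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple


def success_rates(trials: Iterable[Tuple[str, bool]]) -> Dict[str, float]:
    """Fraction of successful roll-outs for each evaluation condition."""
    counts: Dict[str, List[int]] = defaultdict(lambda: [0, 0])  # [successes, trials]
    for condition, success in trials:
        counts[condition][0] += int(success)
        counts[condition][1] += 1
    return {c: s / n for c, (s, n) in counts.items()}


def change_vs_reference(
    rates: Dict[str, float], reference: str = "in_distribution"
) -> Dict[str, float]:
    """Percentage-point change of each condition relative to the reference."""
    base = rates[reference]
    return {c: 100.0 * (r - base) for c, r in rates.items() if c != reference}
```

Reporting the change in percentage points against the in-distribution rate makes it easy to compare how much each policy degrades under reduced illumination or occlusion.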

Achieving Zero-Shot Generalization in Manipulation Tasks

MoE architecture excels at surgical grasping

Scientists achieved a breakthrough in surgical robotics by developing a supervised Mixture-of-Experts (MoE) architecture for phase-structured surgical manipulation tasks. The team demonstrated that this architecture, added to existing autonomous policies, can learn complex, long-horizon manipulation from fewer than 150 demonstrations using only stereo endoscopic images. Experiments focused on the collaborative surgical task of bowel grasping and retraction, where a robotic assistant interprets visual cues and performs targeted grasping of deformable tissue. The results show that generalist Vision-Language-Action (VLA) models failed to fully acquire the task, even under standard in-distribution conditions.

While the standard Action Chunking Transformer (ACT) baseline achieved moderate success, the MoE architecture significantly boosted performance, yielding higher success rates in-distribution and greater robustness out-of-distribution. Specifically, the MoE system coped better with novel grasp locations, reduced illumination, and partial occlusions. The trained policy also generalized to unseen testing viewpoints and transferred zero-shot to ex vivo porcine tissue without additional training, suggesting a viable pathway towards in vivo deployment. Preliminary roll-outs during in vivo porcine surgery support these findings and indicate that the approach is feasible in a live surgical setting.

The results indicate that the supervised MoE architecture provides a data-efficient approach to learning multi-step dexterous manipulation in visually constrained environments. The research addresses key limitations of prior work, which relied on multi-camera setups and large demonstration datasets, with comparable tasks previously requiring approximately 16,000 demonstrations; the new method achieves robust performance with fewer than 150. The team's work also highlights the potential of lightweight architectures to enable real-time control on resource-constrained surgical robots, which could help address critical staff shortages and the growing demands of an ageing population.

According to the authors, explicitly supervising the gating network with phase labels yields stable convergence and a clear functional specialization for each expert in the MoE system. This suits surgical tasks, whose distinct phase transitions are readily observable and allow the task to be decomposed into simpler, manageable sub-components. The successful zero-shot transfer to ex vivo porcine tissue shows that the system can generalize beyond its training environment, a step towards future clinical applications in minimally-invasive surgery.
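The article states only that the gating network is supervised with phase labels. One common way to do this, sketched here as an assumption, is to add a cross-entropy term between the gate's expert scores and the annotated phase index (one expert per phase) to the action-imitation loss. ACT itself trains with an L1 action loss plus a CVAE KL term, which is omitted here; all function names and `gate_weight` are hypothetical.

```python
import math
from typing import List, Sequence


def gating_cross_entropy(logits: Sequence[float], phase: int) -> float:
    """Cross-entropy between the gate's expert scores and the labelled phase."""
    m = max(logits)
    log_z = m + math.log(sum(math.exp(z - m) for z in logits))  # log-sum-exp
    return log_z - logits[phase]


def l1_action_loss(pred: List[List[float]], target: List[List[float]]) -> float:
    """Mean absolute error over every element of an action chunk."""
    diffs = [abs(p - q) for pr, tr in zip(pred, target) for p, q in zip(pr, tr)]
    return sum(diffs) / len(diffs)


def supervised_moe_loss(
    logits: Sequence[float],
    phase: int,
    pred: List[List[float]],
    target: List[List[float]],
    gate_weight: float = 1.0,  # hypothetical balancing coefficient
) -> float:
    """Action-imitation loss plus phase supervision of the gating network."""
    return l1_action_loss(pred, target) + gate_weight * gating_cross_entropy(logits, phase)
```

In this formulation the phase labels are needed only during training; at test time the gate's own scores decide which expert (or mixture of experts) produces the action chunk.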

MoE architecture boosts surgical robotic imitation learning performance

Scientists have developed a supervised Mixture-of-Experts (MoE) architecture to improve imitation learning for surgical robotics, addressing challenges posed by limited data and the need for safety and precision. The architecture enhances lightweight action decoder policies, such as the Action Chunking Transformer (ACT), enabling them to learn complex surgical manipulation tasks from fewer than 150 demonstrations using only stereo endoscopic images. The research focused on the collaborative surgical task of bowel grasping and retraction, where a robotic assistant responds to a surgeon's visual cues and manipulates deformable tissue. The findings show that the MoE architecture significantly improves performance compared with state-of-the-art Vision-Language-Action (VLA) models and the standard ACT baseline, both in standard conditions and in more challenging out-of-distribution scenarios.

Specifically, the enhanced policy exhibited superior robustness to variations like novel grasp locations, reduced illumination, and partial occlusions, and successfully transferred zero-shot to ex vivo porcine tissue. Preliminary in vivo porcine surgery roll-outs also support these findings, suggesting a pathway towards real-world application. The authors acknowledge a limitation in their current framework: the reliance on manual phase supervision for the MoE gating network. Future research will explore unsupervised learning methods to automatically identify task skills from demonstration data. Additionally, they plan to integrate real-time depth vision from stereoscopic endoscope feeds to improve 3D spatial understanding and robustness for in vivo deployment, motivated by observations from preliminary in vivo experiments. This work establishes that imitation learning, combined with a Mixture-of-Experts architecture, can automate multi-step minimally-invasive surgical tasks using only endoscopic vision, potentially enabling the deployment of learned policies in live surgical settings.

🗞 MoE-ACT: Improving Surgical Imitation Learning Policies through Supervised Mixture-of-Experts

🧠 ArXiv: https://arxiv.org/abs/2601.21971