On April 19, 2025, researchers at HKUST presented SG-Reg, a method for generalizable and efficient scene graph registration in robotics. It is designed for real-world conditions and is trained with a new data generation pipeline rather than hand-made annotations.

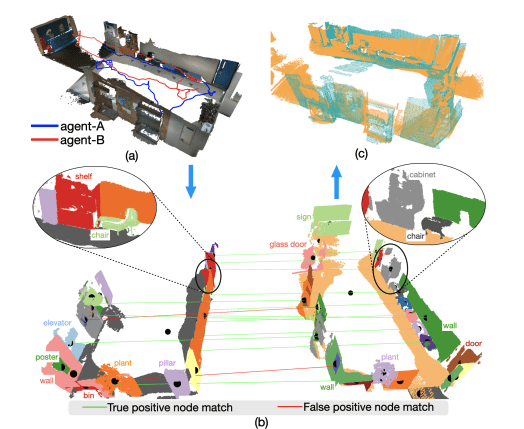

The paper presents a method for registering semantic scene graphs using a scene graph network that encodes three modalities: open-set features, spatial topology, and shape. These are fused into compact node features, which enable coarse-to-fine correspondence matching and robust pose estimation. Because the approach keeps sparse, hierarchical scene representations, it needs fewer GPU resources and less communication bandwidth in multi-agent tasks. A new data generation method built on vision foundation models removes the reliance on ground-truth annotations. In validation, SG-Reg clearly outperforms the baselines, achieving higher registration success rates and recall while using only 52 KB of communication bandwidth per query frame.
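SG-Reg's network and matching pipeline are not reproduced here. To illustrate what "coarse matching, then pose estimation" involves, the sketch below makes several assumptions. Each graph node already has a fused feature vector and a 3D centroid. Nodes are paired by mutual nearest neighbours on cosine similarity. A rigid transform is then fitted with the standard SVD (Kabsch) method. The function names, the `min_sim` threshold and the toy data are hypothetical. Plain Kabsch is a swapped-in textbook technique, not the paper's estimator: it has no outlier rejection and omits the fine, point-level stage.

```python
import numpy as np


def mutual_nearest_matches(feat_src, feat_ref, min_sim=0.5):
    """Coarse matching (illustrative): pair nodes that are each other's most
    similar node by cosine similarity. min_sim is a hypothetical threshold."""
    a = feat_src / np.linalg.norm(feat_src, axis=1, keepdims=True)
    b = feat_ref / np.linalg.norm(feat_ref, axis=1, keepdims=True)
    sim = a @ b.T                   # (N_src, N_ref) cosine similarities
    best_ref = sim.argmax(axis=1)   # most similar reference node per source node
    best_src = sim.argmax(axis=0)   # most similar source node per reference node
    return [(i, int(j)) for i, j in enumerate(best_ref)
            if best_src[j] == i and sim[i, j] >= min_sim]


def estimate_rigid_pose(src, ref):
    """Least-squares R, t with ref ~= src @ R.T + t (Kabsch). No outlier
    rejection: a robust pipeline would wrap this in e.g. RANSAC."""
    mu_s, mu_r = src.mean(axis=0), ref.mean(axis=0)
    H = (src - mu_s).T @ (ref - mu_r)            # 3x3 cross-covariance
    U, _, Vt = np.linalg.svd(H)
    d = np.sign(np.linalg.det(Vt.T @ U.T))       # flip sign to avoid a reflection
    R = Vt.T @ np.diag([1.0, 1.0, d]) @ U.T
    t = mu_r - R @ mu_s
    return R, t


# Toy example (synthetic data): the reference graph is a rotated, shifted,
# re-ordered copy of the source graph with slightly perturbed node features.
rng = np.random.default_rng(0)
n, dim = 8, 32
feat_src = rng.normal(size=(n, dim))             # stand-in for fused node features
cent_src = rng.uniform(-5.0, 5.0, size=(n, 3))   # node centroids (arbitrary units)
perm = rng.permutation(n)
theta = np.pi / 6
R_true = np.array([[np.cos(theta), -np.sin(theta), 0.0],
                   [np.sin(theta),  np.cos(theta), 0.0],
                   [0.0, 0.0, 1.0]])
t_true = np.array([1.0, -2.0, 0.5])
feat_ref = feat_src[perm] + 0.01 * rng.normal(size=(n, dim))
cent_ref = (cent_src @ R_true.T + t_true)[perm]

matches = mutual_nearest_matches(feat_src, feat_ref)
src_idx = [i for i, _ in matches]
ref_idx = [j for _, j in matches]
R_est, t_est = estimate_rigid_pose(cent_src[src_idx], cent_ref[ref_idx])
print(len(matches), np.allclose(R_est, R_true), np.allclose(t_est, t_true))
```

The mutual-nearest-neighbour check is a cheap way to discard ambiguous node pairs before fitting. In practice some wrong pairs survive, which is why the paper stresses robust pose estimation rather than a single least-squares fit.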

Robotics has advanced rapidly in recent years and now drives progress across many industries. Researchers at the Hong Kong University of Science and Technology (HKUST) have contributed to this progress with work on several of the field's core problems.

The HKUST team works on a wide range of robotics problems, including unmanned aerial vehicles (UAVs), spatial perception, mapping, and autonomous navigation. The goal across these areas is to make robotic systems more efficient, safer, and more capable.

Peize Liu's research focuses on UAVs, with an emphasis on improving state estimation and swarm behavior. This work aims to enable more efficient and coordinated flight operations, which could benefit logistics, surveillance, and other aerial applications. Chuhao Liu works on spatial perception and mapping, which robots need in order to navigate complex environments accurately.

Zhijian Qiao's research focuses on robust state estimation and crowd-sourced mapping, improving the reliability of location data in dynamic settings. This work has potential applications in urban planning and emergency response, where real-time data is critical. Jieqi Shi works on visual depth estimation and V2X cooperative perception, both of which are key technologies for autonomous navigation.

Ke Wang's research on localization and mapping helps robots operate reliably in real-world scenarios. Taken together, these complementary areas of expertise let the team tackle complex robotics problems end to end, from UAV swarm coordination to autonomous navigation.

This research could be applied in several domains. Better localization and mapping could support urban planning and emergency response, and advances in state estimation for aerial swarms could benefit logistics and surveillance. Progress in visual depth estimation and V2X cooperative perception could also make autonomous vehicles safer and more efficient.

In conclusion, the work at HKUST shows how progress on state estimation, localization, and mapping can lay the groundwork for robotic systems that are more efficient, safer, and more capable across many sectors, and for a more connected and automated future.

As these technologies mature and move from the laboratory into real-world deployments, they may change how people interact with and use robotic systems in daily life.

🗞 SG-Reg: Generalizable and Efficient Scene Graph Registration

🧠 DOI: https://doi.org/10.48550/arXiv.2504.14440