Researchers are tackling the complex logistical challenges of electric, on-demand transport with a novel approach to the Electric Dial-a-Ride Problem (E-DARP). Sten Elling Tingstad Jacobsen, Attila Lischka, and Balázs Kulcsár, all from Chalmers University of Technology, alongside Anders Lindman from Volvo Cars, present a deep reinforcement learning method that moves beyond traditional route optimisation techniques. Their work is significant because it directly addresses the limitations of existing solutions when applied to real-world scenarios involving limited battery capacity and fluctuating energy demands. By learning directly from road network attributes, the team’s policy jointly optimises routing, charging schedules, and service quality, achieving substantial improvements in both solution quality and computational speed compared to established metaheuristic methods like Adaptive Large Neighborhood Search.

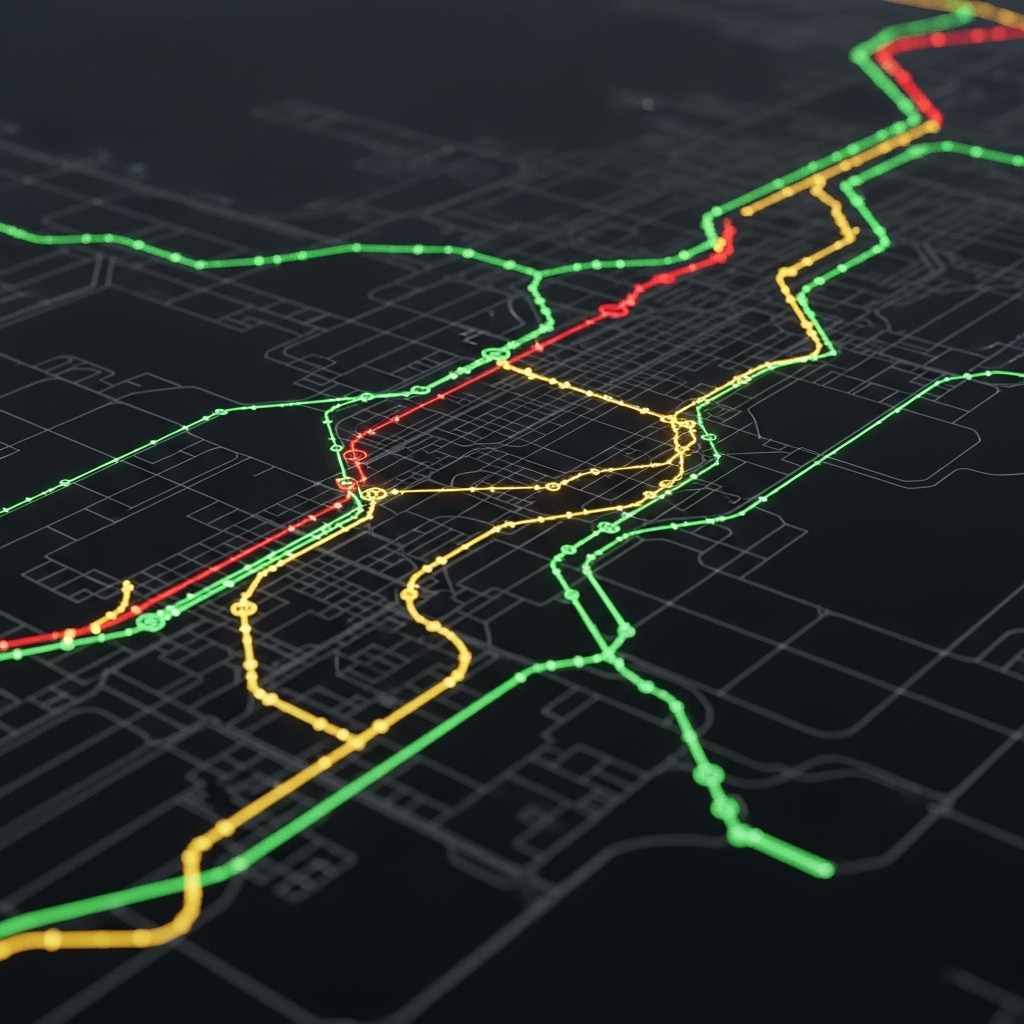

The research addresses the increasing demand for electric, on-demand services and the operational difficulties of managing fleets under energy and service-quality constraints. This study introduces a novel method based on a graph neural network encoder and an attention-driven route construction policy, enabling the joint optimisation of routing, charging, and service quality. By directly processing edge attributes like travel time and energy consumption, the team’s approach accurately captures the non-Euclidean, asymmetric, and energy-dependent routing costs found in real-world road networks.
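To make the idea of operating on edge attributes concrete, here is a minimal sketch in which every directed edge carries its own travel time and energy use, so the cost from A to B can differ from the cost from B to A. The node names, the numbers, and the `route_cost` helper are hypothetical and are not taken from the paper.

```python
from typing import Dict, List, Tuple

# Directed edges: (origin, destination) -> (travel time in minutes, energy in kWh).
# All values are invented for illustration only.
EdgeAttrs = Dict[Tuple[str, str], Tuple[float, float]]

EDGES: EdgeAttrs = {
    ("depot", "pickup"): (6.0, 1.2),
    ("pickup", "depot"): (9.0, 0.7),   # asymmetric: the reverse direction differs
    ("pickup", "dropoff"): (12.0, 2.5),
    ("dropoff", "depot"): (8.0, 1.1),
}


def route_cost(route: List[str], edges: EdgeAttrs) -> Tuple[float, float]:
    """Sum travel time and energy over consecutive stops of a route."""
    total_time = 0.0
    total_energy = 0.0
    for origin, destination in zip(route, route[1:]):
        minutes, kwh = edges[(origin, destination)]
        total_time += minutes
        total_energy += kwh
    return total_time, total_energy


time_min, energy_kwh = route_cost(["depot", "pickup", "dropoff", "depot"], EDGES)
print(f"time: {time_min:.1f} min, energy: {energy_kwh:.1f} kWh")
```

A coordinate-based (Euclidean) model could not express the difference between the two `depot`/`pickup` directions, which is why edge-level inputs matter for road networks.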

The core innovation lies in the learned policy’s ability to navigate the E-DARP without relying on traditional Euclidean assumptions or manually designed heuristics. Researchers evaluated the method using ride-sharing data from San Francisco, demonstrating its effectiveness on both benchmark instances and large-scale scenarios. On standard benchmark problems, the deep reinforcement learning approach achieved solutions within 0.4% of the best-known results, while simultaneously reducing computation times by several orders of magnitude. This significant speedup is crucial for real-time applications where rapid decision-making is essential.
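For readers unfamiliar with how such a figure is usually computed, the sketch below shows the common definition of a relative gap to a best-known solution for a minimisation objective. Whether the paper reports a mean or a worst-case gap, and its exact definition, are not stated here, so the function and the example numbers are assumptions.

```python
def optimality_gap_percent(objective: float, best_known: float) -> float:
    """Relative gap of a minimisation objective to the best-known value, in percent."""
    if best_known <= 0:
        raise ValueError("best_known must be positive")
    return 100.0 * (objective - best_known) / best_known


# Hypothetical objective values, chosen only to illustrate a 0.4% gap.
gap = optimality_gap_percent(objective=1004.0, best_known=1000.0)
print(f"gap: {gap:.2f}%")
```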

Further investigation involved large-scale instances with up to 250 request pairs, incorporating realistic energy models and nonlinear charging dynamics. The metaheuristic requires hours on instances of this kind, which highlights the potential for substantial efficiency gains from a learned policy. The team also conducted sensitivity analyses quantifying the impact of key parameters such as battery capacity, fleet size, and reward weights, alongside robustness experiments confirming the policy's generalisability under stochastic conditions.

The work offers a scalable and efficient alternative to traditional optimisation methods for the E-DARP, and opens avenues for deploying on-demand electric vehicle fleets that balance passenger needs, energy consumption, and operational costs. The system pairs a graph neural network encoder with an attention-driven route construction policy to optimise routing, charging, and service quality simultaneously. Because it processes edge attributes such as travel time and energy consumption directly, it captures the non-Euclidean, asymmetric, and energy-dependent routing costs of road networks. Experiments used ride-sharing data from San Francisco to evaluate the learned policy.

The graph neural network encodes each problem instance, allowing the model to learn representations of the road network and request patterns. The attention-driven policy is then trained to construct routes by selectively focusing on relevant edges in the graph, prioritising efficient and energy-conscious paths. On benchmark instances the approach achieves solutions within 0.4% of best-known results while reducing computation times by orders of magnitude. A second case study investigated large-scale instances with up to 250 request pairs, incorporating realistic energy models and nonlinear charging dynamics.
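One decoding step of an attention-driven construction policy can be sketched as follows: score each candidate node against a context vector, mask out infeasible nodes, and pick from the resulting distribution. This is a generic illustration, not the authors' architecture. The vectors are invented, in practice they would come from the encoder, and the feasibility mask (for example, a drop-off only after its pickup, or enough battery to reach the node) is assumed to be supplied by the environment.

```python
import math
from typing import List, Sequence


def attention_scores(query: Sequence[float], keys: Sequence[Sequence[float]]) -> List[float]:
    """Scaled dot-product compatibility between a context query and each node key."""
    scale = math.sqrt(len(query))
    return [sum(q * k for q, k in zip(query, key)) / scale for key in keys]


def masked_softmax(scores: Sequence[float], feasible: Sequence[bool]) -> List[float]:
    """Softmax over feasible entries only; infeasible entries get probability 0."""
    if not any(feasible):
        raise ValueError("at least one action must be feasible")
    top = max(s for s, ok in zip(scores, feasible) if ok)  # for numerical stability
    weights = [math.exp(s - top) if ok else 0.0 for s, ok in zip(scores, feasible)]
    total = sum(weights)
    return [w / total for w in weights]


def greedy_step(query: Sequence[float], keys: Sequence[Sequence[float]],
                feasible: Sequence[bool]) -> int:
    """Return the index of the most probable feasible node."""
    probs = masked_softmax(attention_scores(query, keys), feasible)
    return max(range(len(probs)), key=probs.__getitem__)


# Hypothetical embeddings for three candidate nodes.
query = [1.0, 0.0]
keys = [[2.0, 0.0], [1.0, 1.0], [0.0, 3.0]]
feasible = [False, True, True]  # node 0 scores highest but is masked out
next_node = greedy_step(query, keys, feasible)
print(next_node)
```

Repeating this step until every request is served yields a complete route. During training, a policy would typically sample from the probabilities instead of always taking the maximum.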

The team implemented a detailed energy model that accounts for battery drain during travel and the varying charging rates at different charging stations, allowing a more accurate simulation of real-world electric vehicle operation. Sensitivity analyses quantified the impact of key parameters such as battery capacity, fleet size, ride-sharing capacity, and reward weights on overall performance. Robustness experiments showed that deterministically trained policies generalise effectively even under stochastic conditions. Across these experiments the learned policy optimised routing, charging schedules, and service quality without relying on Euclidean assumptions or manually designed heuristics, which matters for real-time fleet management.
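The battery drain and charging behaviour mentioned above can be illustrated with a small sketch. It assumes a piecewise-constant charging power that drops once the battery passes 80% state of charge, which makes charging time nonlinear in the energy added. The curve, the capacity, and the function names are hypothetical, and the paper's actual energy model may differ.

```python
from typing import List, Tuple

# Hypothetical charging curve: (state-of-charge upper bound, charging power in kW).
# A different station could be modelled by passing a different curve.
CHARGE_CURVE: List[Tuple[float, float]] = [(0.8, 50.0), (1.0, 20.0)]


def drive(soc: float, energy_kwh: float, capacity_kwh: float) -> float:
    """Return the state of charge after an edge that consumes energy_kwh."""
    new_soc = soc - energy_kwh / capacity_kwh
    if new_soc < 0.0:
        raise ValueError("edge is infeasible: battery would be depleted")
    return new_soc


def charging_minutes(soc_start: float, soc_target: float, capacity_kwh: float,
                     curve: List[Tuple[float, float]] = CHARGE_CURVE) -> float:
    """Minutes needed to charge from soc_start to soc_target under the given curve."""
    if not 0.0 <= soc_start <= soc_target <= 1.0:
        raise ValueError("need 0 <= soc_start <= soc_target <= 1")
    minutes = 0.0
    soc = soc_start
    for upper, power_kw in curve:
        if soc >= soc_target:
            break
        segment_end = min(upper, soc_target)
        if segment_end > soc:
            energy = (segment_end - soc) * capacity_kwh  # kWh added in this segment
            minutes += 60.0 * energy / power_kw
            soc = segment_end
    return minutes


# With a 50 kWh battery, the last 20% takes longer than the 20% before it.
fast = charging_minutes(0.6, 0.8, capacity_kwh=50.0)
slow = charging_minutes(0.8, 1.0, capacity_kwh=50.0)
print(f"60-80%: {fast:.0f} min, 80-100%: {slow:.0f} min")
```

A routing policy that sees this kind of curve has an incentive to schedule shorter, partial charges instead of always charging to full.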

The policy operates directly on edge attributes, including travel time and energy consumption, to capture the non-Euclidean, asymmetric, and energy-dependent routing costs inherent in real road networks, and it jointly optimises the factors that determine efficient service delivery. In the second case study, with up to 250 request pairs, realistic energy models, and nonlinear charging dynamics, the reported improvements were even more substantial. The sensitivity analyses on battery capacity, fleet size, ride-sharing capacity, and reward weights provide insight into system behaviour, and the robustness experiments indicate that deterministically trained policies remain reliable under stochastic conditions. Together, these results point to a scalable solution for managing electric vehicle fleets in complex urban settings.

The results indicate that the approach handles realistic constraints and performs well across a range of operational scenarios. Its efficiency stems from learning a policy directly from data rather than relying on manually designed heuristics. Further analysis quantified how battery capacity, fleet size, and ride-sharing capacity influence overall system performance.

The authors acknowledge a limitation: the method assumes deterministic demand arrival, which could significantly affect completion rates in sequential re-planning scenarios. Future research could address this uncertainty more directly. Nevertheless, the findings establish a useful framework for fleet planning, demonstrating the potential for efficient and reliable operation of electric vehicle fleets in urban environments and offering insight into operational trade-offs between resource allocation and service quality.

👉 More information

🗞 Learning to Dial-a-Ride: A Deep Graph Reinforcement Learning Approach to the Electric Dial-a-Ride Problem

🧠 ArXiv: https://arxiv.org/abs/2601.22052