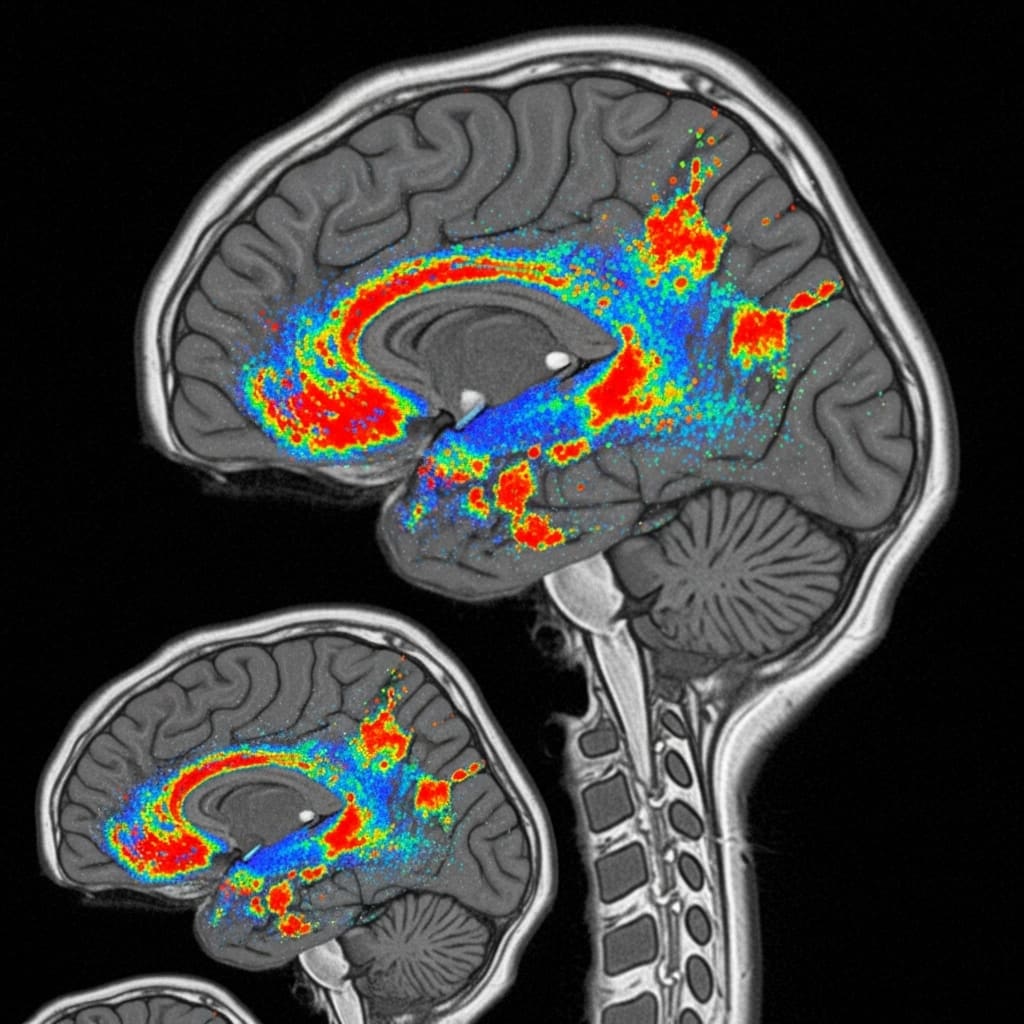

Researchers are tackling limitations within functional magnetic resonance imaging (fMRI) analysis by introducing a new approach that moves beyond reliance on pre-defined brain region definitions. Mo Wang, Wenhao Ye, and Junfeng Xia, from the Department of Biomedical Engineering at the Southern University of Science and Technology, alongside Junxiang Zhang, Xuanye Pan, and Minghao Xu et al., present Omni-fMRI, a universal, atlas-free foundation model operating directly on voxel-level signals. This innovation is significant because it sidesteps potential biases introduced by traditional atlas-dependent methods and enables scalable pre-training using a dynamic patching mechanism applied to nearly 50,000 fMRI sessions. By establishing a robust benchmark suite across eleven datasets, the team demonstrates Omni-fMRI consistently exceeds the performance of existing techniques, offering a reproducible framework for advanced brain representation learning.

The research addresses limitations in current fMRI analysis, which often relies on predefined brain region parcellations that discard crucial fine-grained information and introduce biases dependent on the chosen atlas.

To enable large-scale pretraining, the team introduced a dynamic patching mechanism, substantially reducing computational costs while preserving essential spatial structure within the fMRI data. This innovative approach allows for scalable pretraining using 49,497 fMRI sessions sourced from nine distinct datasets.

The study establishes a comprehensive benchmark suite encompassing 11 datasets and a diverse range of both resting-state and task-based fMRI tasks, facilitating reproducible and fair comparisons between models. Omni-fMRI’s dynamic patching mechanism intelligently assigns larger patches to less informative areas, while maintaining high resolution in functionally significant regions, optimising both memory usage and computational efficiency.

In the reported experiments, Omni-fMRI consistently outperforms existing fMRI foundation models on the benchmark suite. Because it does not depend on a predefined brain atlas, the approach avoids atlas-induced misalignment and keeps the fine-grained functional information that region-level aggregation discards.

The research establishes a framework for more robust and transferable fMRI models, capable of generalising across diverse populations and tasks. By operating directly on voxel-level signals, Omni-fMRI preserves critical information often lost in region-based approaches, potentially unlocking deeper insights into brain function and connectivity.

Furthermore, the creation of a standardised benchmark suite and the release of code and experiment logs promote transparency and facilitate future research in the field. The work opens avenues for improved clinical diagnostics, personalised medicine, and a more nuanced understanding of the human brain, with potential applications ranging from early disease detection to cognitive enhancement strategies. The team’s achievements provide a powerful new tool for neuroscientists seeking to unravel the complexities of the brain.

Voxel-wise self-supervised pretraining using dynamic patching and a comprehensive benchmark enables robust 3D medical image analysis

Scientists developed Omni-fMRI, an atlas-free framework operating directly on voxel-level fMRI signals to address limitations of existing self-supervised methods reliant on predefined region-level parcellations. To enable scalable pretraining across 49,497 fMRI sessions from nine datasets, the research pioneered a dynamic patching mechanism that substantially reduces computational cost while preserving crucial spatial structure.

The study established a comprehensive benchmark suite encompassing 11 datasets and diverse resting-state and task-based fMRI tasks to support reproducibility and facilitate fair comparison of performance. Researchers formulated the problem as self-supervised pretraining of foundation models on voxel-wise resting-state fMRI data, denoting a 4D fMRI volume as $X \in \mathbb{R}^{H \times W \times D \times T}$, with three spatial dimensions and $T$ time points.

The team aimed to learn a task-agnostic encoder $f_\theta: X \to Z$ that captures intrinsic spatiotemporal brain dynamics without predefined atlases or region-level aggregation. Because voxel-wise fMRI forms an irregular spatiotemporal field with heterogeneous information density, the tokenization, the embedding, and the self-supervised objective were designed jointly.

This involved a dynamic patch tokenization mechanism adapting spatial granularity to local spatiotemporal complexity, a dual-path multi-scale embedding stabilizing optimization under heterogeneous patch resolutions, and a scale-aware masked autoencoding objective enabling principled self-supervised learning. To address computational limitations, scientists implemented a dynamic patching strategy, reducing the token sequence length from approximately 14,000 to 4,300.
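
To give a rough sense of what the shorter sequence buys, the sketch below compares the two token counts quoted above. It assumes that the cost of global self-attention is dominated by the pairwise score matrix, which grows quadratically with sequence length; that cost model is a simplification introduced here, not a figure from the paper.

```python
# Back-of-the-envelope comparison. The token counts come from the article;
# the purely quadratic cost model is an assumption that ignores linear terms.
tokens_fixed = 14_000    # approximate sequence length with uniform patches
tokens_dynamic = 4_300   # approximate sequence length with dynamic patching

length_ratio = tokens_fixed / tokens_dynamic
attention_ratio = length_ratio ** 2  # pairwise attention scores scale as n^2

print(f"sequence length shrinks by about {length_ratio:.1f}x")
print(f"attention score matrix shrinks by about {attention_ratio:.1f}x")
```

Under this assumption the sequence is roughly 3.3 times shorter and the attention score matrix roughly 10.6 times smaller, which is consistent with the claim that global self-attention becomes affordable.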

This efficiency allowed the use of a standard ViT architecture with global self-attention, effectively capturing long-range brain activities. Local spatiotemporal complexity was estimated using time-aggregated intensity variance, calculated as $\sigma_P^2 = \frac{1}{T}\sum_{t=1}^{T}\left(\mathbb{E}_P[I_t^2] - (\mathbb{E}_P[I_t])^2\right)$, where $I_t$ represents voxel intensity at time $t$ and $\mathbb{E}_P[\cdot]$ is implemented via 3D average pooling.
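
The variance estimate can be sketched with NumPy as follows. This is a minimal illustration, not the authors' code: the function names, the non-overlapping pooling window, and the reading of the formula as a per-time-point spatial variance averaged over time are all assumptions made here.

```python
import numpy as np

def avg_pool3d(vol, p):
    """Non-overlapping 3D average pooling with a cubic window of side p.

    vol has shape (H, W, D, ...) with H, W and D divisible by p.
    """
    h, w, d = vol.shape[:3]
    rest = vol.shape[3:]
    blocks = vol.reshape(h // p, p, w // p, p, d // p, p, *rest)
    return blocks.mean(axis=(1, 3, 5))

def patch_complexity(x, p):
    """Time-averaged intensity variance per patch for x of shape (H, W, D, T)."""
    mean_of_sq = avg_pool3d(x ** 2, p)   # E_P[I_t^2] for every t
    sq_of_mean = avg_pool3d(x, p) ** 2   # (E_P[I_t])^2 for every t
    return (mean_of_sq - sq_of_mean).mean(axis=-1)  # average over time

# Toy volume: one half is constant, the other half is noisy.
rng = np.random.default_rng(0)
x = rng.normal(size=(8, 8, 8, 5))
x[:4] = 1.0
sigma2 = patch_complexity(x, p=4)   # shape (2, 2, 2)
print(sigma2.round(2))
```

Patches in the constant half come out with a variance of zero, while patches in the noisy half come out close to one, so the score separates flat regions from structured ones using only pooling operations.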

Background patches with mean intensity below a predefined threshold were explicitly removed, and remaining foreground regions underwent adaptive granularity determination. Patches exhibiting low complexity (low $\sigma_P^2$) were assigned larger patch sizes, while more complex regions kept the finer base resolution. A dual-path multi-scale embedding module was employed to project heterogeneous tokens into a shared latent space.
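
The background removal and granularity choice described above might be sketched like this. The two thresholds, the label names, and the assumption that a fine region is split into eight base-resolution sub-patches are hypothetical; the article does not report the actual values or split factor.

```python
import numpy as np

DROP, COARSE, FINE = 0, 1, 2

def assign_granularity(mean_intensity, complexity, bg_thresh, var_thresh):
    """Label each candidate large patch as dropped, coarse or fine.

    bg_thresh and var_thresh are hypothetical parameters for illustration.
    """
    labels = np.full(mean_intensity.shape, FINE, dtype=np.int8)
    labels[complexity < var_thresh] = COARSE    # low complexity: one large token
    labels[mean_intensity < bg_thresh] = DROP   # background: removed entirely
    return labels

# Three candidate patches: background, smooth tissue, complex tissue.
mean_intensity = np.array([0.01, 0.80, 0.90])
complexity = np.array([0.00, 0.02, 0.50])
labels = assign_granularity(mean_intensity, complexity,
                            bg_thresh=0.1, var_thresh=0.05)

# Assumed split: a fine region becomes 2 x 2 x 2 = 8 base-resolution tokens.
n_tokens = int((labels == COARSE).sum() + 8 * (labels == FINE).sum())
print(labels.tolist(), n_tokens)
```

With uniform fine patching the same three regions would cost 24 tokens; here they cost 9, which is the kind of saving the dynamic scheme is meant to deliver.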

Patches at the base resolution were embedded directly using a 3D convolutional tokenizer, while larger patches utilized a composite embedding $z = \phi(P_{\downarrow}) + \mathrm{ZeroMLP}(\mathrm{Conv}(\phi(P_{\text{grid}}))) + p_{\text{pos}}$, where $\phi$ denotes the patch tokenization module, $P_{\downarrow}$ is a downsampled representation, $P_{\text{grid}}$ represents a grid of sub-patches, and $p_{\text{pos}}$ denotes the positional embedding. The aggregation operator Conv, implemented as a strided convolution, fused sub-patch features to recover structural details lost during downsampling, demonstrating a novel approach to handling multi-scale data in fMRI analysis.
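
A toy NumPy version of the composite embedding for one large patch is sketched below. Several things are assumptions made for illustration: the tokenizer is a plain linear projection rather than a 3D convolution, the large patch is exactly twice the base size per axis, the strided convolution over the 2 x 2 x 2 grid is written as a single linear map, and "ZeroMLP" is read as an MLP whose output layer starts at zero. None of the sizes come from the paper.

```python
import numpy as np

rng = np.random.default_rng(0)
p, T, dim = 2, 3, 16   # toy sizes, not the paper's

W_phi = rng.normal(size=(p ** 3 * T, dim)) * 0.02   # stand-in tokenizer weights
W_conv = rng.normal(size=(8 * dim, dim)) * 0.02     # fuses the 8 sub-patch embeddings
W1 = rng.normal(size=(dim, dim)) * 0.02
W2 = np.zeros((dim, dim))   # zero-initialised output layer (assumed "ZeroMLP")

def phi(patch):
    """Embed one base-resolution patch of shape (p, p, p, T)."""
    return patch.reshape(-1) @ W_phi

def downsample(big):
    """Average-pool a (2p, 2p, 2p, T) patch down to (p, p, p, T)."""
    return big.reshape(p, 2, p, 2, p, 2, T).mean(axis=(1, 3, 5))

def split_grid(big):
    """Cut a (2p, 2p, 2p, T) patch into its 8 base-resolution sub-patches."""
    return [big[i:i + p, j:j + p, k:k + p]
            for i in (0, p) for j in (0, p) for k in (0, p)]

def embed_large(big, p_pos):
    coarse = phi(downsample(big))                              # phi(P_down)
    grid = np.concatenate([phi(s) for s in split_grid(big)])   # phi(P_grid)
    fused = grid @ W_conv      # kernel-2, stride-2 conv on a 2x2x2 grid
    detail = np.maximum(fused @ W1, 0.0) @ W2                  # ZeroMLP(...)
    return coarse + detail + p_pos

big = rng.normal(size=(2 * p, 2 * p, 2 * p, T))
p_pos = rng.normal(size=dim)
z = embed_large(big, p_pos)
print(z.shape)
```

Under the zero-initialisation assumption, the detail path contributes nothing at the start of training, so a large patch initially behaves like its downsampled version and the fine-grained correction is learned gradually. That would be one plausible way for the dual-path design to stabilize optimization, though the article does not spell out the mechanism.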

Dynamic patching enhances performance of a large-scale atlas-free fMRI foundation model by enabling efficient adaptation to new datasets

Scientists have developed Omni-fMRI, a novel atlas-free foundation model that operates directly on voxel-level signals from functional Magnetic Resonance Imaging (fMRI). The research team successfully pretrained the model on an extensive dataset of 49,497 fMRI sessions, sourced from nine distinct datasets.

To facilitate this large-scale pretraining, they introduced a dynamic patching mechanism which substantially reduces computational cost while maintaining crucial spatial structure within the data. Experiments revealed that Omni-fMRI consistently outperforms existing foundation models across a comprehensive benchmark suite encompassing 11 datasets.

This benchmark includes a diverse set of both resting-state and task-based fMRI tasks, ensuring robust evaluation. The dynamic patching mechanism assigns larger patches to less informative regions and maintains fine-grained resolution in functionally active areas, which reduces memory and computational demands.

Linear probing with Omni-fMRI, in which only a linear classifier is trained on top of the frozen encoder, outperforms the fully fine-tuned results of several established fMRI models. By eliminating reliance on predefined parcellations, the model avoids atlas-induced misalignment and preserves fine-grained functional information.

Researchers established standardized datasets, evaluation protocols, and released complete experiment logs to ensure reproducibility and facilitate future research. The breakthrough delivers a scalable and reproducible framework for atlas-free brain representation learning, opening avenues for improved cognitive, demographic, and clinical prediction settings.

Scalable representation learning from extensive fMRI data without atlas constraints

Researchers have developed Omni-fMRI, a novel atlas-free approach to functional magnetic resonance imaging (fMRI) analysis that operates directly on voxel-level signals. This method addresses limitations found in existing self-supervised fMRI techniques, which often rely on predefined brain region parcellations that can introduce bias and discard detailed voxel information.

Omni-fMRI employs a dynamic patching mechanism to reduce computational demands while maintaining crucial spatial structure, enabling scalable pretraining across a large dataset of 49,497 fMRI sessions from nine different sources. Experimental results demonstrate that Omni-fMRI consistently outperforms current methods across a comprehensive benchmark suite encompassing 11 datasets and a variety of resting-state and task-based fMRI tasks.

The framework establishes a reproducible foundation for learning brain representations without the need for predefined atlases. The authors acknowledge a limitation in the reliance on specific preprocessing steps, such as resampling and normalization, which could influence the results. Future work could explore the robustness of the model to variations in these parameters and investigate its application to even larger and more diverse datasets.

This research signifies a substantial advancement in fMRI analysis by offering a scalable and reproducible atlas-free framework. By operating at the voxel level and employing a dynamic patching strategy, Omni-fMRI unlocks the potential for more nuanced and unbiased brain representation learning. The consistent performance gains observed across multiple datasets and tasks suggest that this approach could improve the accuracy and reliability of various neuroimaging applications, including disease diagnosis and understanding of cognitive processes.

👉 More information

🗞 Omni-fMRI: A Universal Atlas-Free fMRI Foundation Model

🧠 ArXiv: https://arxiv.org/abs/2601.23090