Simulating the Milky Way in enough detail to track individual stars is a formidable challenge for computational astrophysics, largely because small-scale, short-lived events such as supernova explosions impose very short timesteps and drive up the computational cost. Keiya Hirashima from RIKEN, Michiko S. Fujii and Naoto Harada from The University of Tokyo, along with Takayuki R. Saitoh and colleagues, address this limitation with a new approach. The team develops a simulation scheme that couples traditional N-body/hydrodynamics with a machine learning ‘surrogate model’, bypassing the need for extremely short timesteps during supernova events and substantially improving computational scalability. This allows them to run a simulation of 300 billion particles, well beyond the billion-particle barrier that has long limited galactic modelling, and to perform what they describe as the first star-by-star simulation of our galaxy, with performance that scales across a very large number of processors.

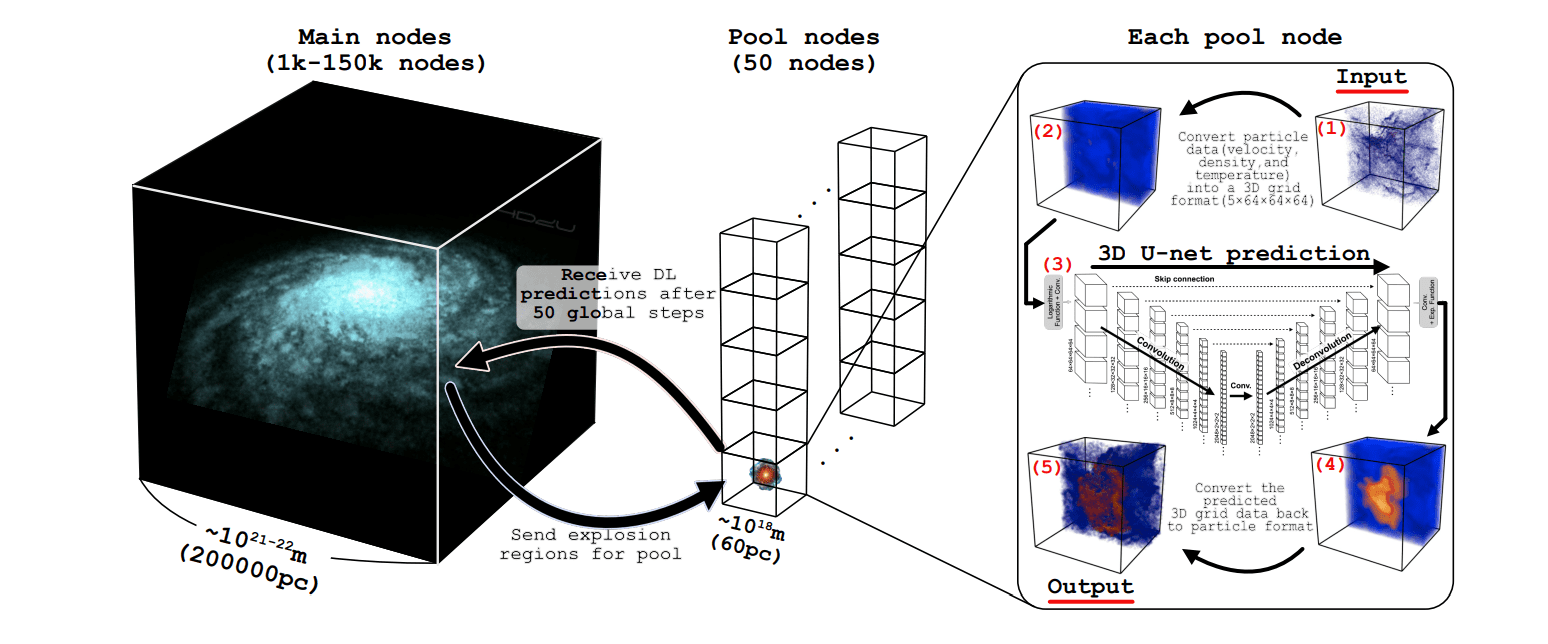

The work addresses a fundamental limitation in computational astrophysics: simulations struggle to represent small-scale, short-timescale phenomena like supernova explosions efficiently. The team engineered a novel integration scheme for the gravitational interactions of many bodies and the flow of interstellar gas, and incorporated machine learning to lift the restrictive timesteps that supernova events traditionally impose. At the core of the scheme is a surrogate model that predicts the system’s behavior, so the main simulation does not have to follow a supernova explosion with extremely small timesteps.
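To give a feel for why hot supernova gas is so restrictive, here is a minimal sketch based on the standard Courant condition, where the timestep is limited to roughly the resolution length divided by the sound speed. It is not the authors' code. The 1 pc resolution, the Courant factor of 0.3, the single mean molecular weight, and the two temperatures (cold star-forming gas and hot supernova-heated gas) are all illustrative assumptions.

```python
import math

K_B = 1.380649e-23               # Boltzmann constant, J/K
M_H = 1.6735575e-27              # mass of a hydrogen atom, kg
PARSEC = 3.0856775814913673e16   # one parsec, m
YEAR = 3.15576e7                 # one Julian year, s


def sound_speed(temperature_k, mu=1.0, gamma=5.0 / 3.0):
    """Adiabatic sound speed of an ideal gas in m/s.

    mu (mean molecular weight) is held fixed here for simplicity;
    real cold and hot gas have different values.
    """
    return math.sqrt(gamma * K_B * temperature_k / (mu * M_H))


def courant_timestep_yr(temperature_k, resolution_pc=1.0, courant=0.3):
    """Courant-limited timestep in years: dt = C * h / c_s."""
    h = resolution_pc * PARSEC
    return courant * h / sound_speed(temperature_k) / YEAR


# Illustrative temperatures (assumptions): cold gas vs. supernova-heated gas.
dt_cold = courant_timestep_yr(10.0)
dt_hot = courant_timestep_yr(1.0e7)
ratio = dt_cold / dt_hot

print(f"cold gas timestep: {dt_cold:.3g} yr")
print(f"hot gas timestep:  {dt_hot:.3g} yr")
print(f"hot gas needs timesteps about {ratio:.0f}x shorter")
```

Because the sound speed grows with the square root of temperature, a million-fold jump in temperature shortens the allowed timestep by about a factor of a thousand at fixed resolution. In practice shock velocities tighten the limit further, which is the kind of bottleneck a surrogate model is meant to sidestep.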

Stars and dark matter are modeled as individual particles interacting gravitationally, while interstellar gas is represented using smoothed-particle hydrodynamics, distributing gas across numerous particles. This approach enables the simulation of a galactic halo extending to 200,000 parsecs, while still resolving features on the scale of a few parsecs, such as supernova shells. To achieve this massive scale, the study harnessed a high-performance computing environment consisting of 148,900 nodes, equivalent to 7,147,200 CPU cores. This computational power enabled the team to surpass the billion-particle barrier previously limiting state-of-the-art galaxy simulations, and the performance scales effectively with the number of CPU cores utilized. The resulting simulation resolves individual stars within the Milky Way, providing an unprecedented level of detail for studying galactic evolution and the lifecycle of stars, from their formation to their explosive deaths as supernovae. The simulation accurately models temperature variations from 10 Kelvin in star-forming regions to 10^7 Kelvin in supernova remnants, and captures the timescales of both galactic rotation and supernova expansion.
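The figures quoted above can be sanity-checked with a few lines of arithmetic. The sketch below derives the cores per node from the stated node and core counts and estimates the spatial dynamic range. The 2 pc value standing in for "a few parsecs" is an assumption made only for illustration.

```python
# Figures quoted in the article.
nodes = 148_900
cpu_cores = 7_147_200
halo_extent_pc = 200_000

# Assumption: "a few parsecs" is taken as 2 pc for a round estimate.
smallest_scale_pc = 2

# Cores per node implied by the two quoted totals.
cores_per_node, remainder = divmod(cpu_cores, nodes)

# Ratio between the largest and smallest length scales in the model.
spatial_dynamic_range = halo_extent_pc / smallest_scale_pc

print(f"cores per node: {cores_per_node} (remainder {remainder})")
print(f"spatial dynamic range: about {spatial_dynamic_range:.0e}")
```

The core count divides evenly into 48 cores per node, and the length scales span roughly five orders of magnitude under the stated assumption.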

Astrophysical Simulations, Machine Learning, and Accuracy

This research represents a comprehensive effort to improve the accuracy and efficiency of astrophysical simulations, particularly those focused on galaxy formation and evolution. The work details computational methods and addresses the challenges of balancing numerical accuracy with computational cost, separating true physical effects from numerical artifacts. The research encompasses a wide range of topics, including star formation, supernova feedback, the interstellar medium, and galactic winds. The team utilizes sophisticated computational methods, including N-body simulations, hydrodynamics, and adaptive mesh refinement.

The authors tackle the accurate simulation of coupled astrophysical processes, including gravity, hydrodynamics, radiative transfer, and chemical reactions, while also improving the efficiency of the computations. Increasingly, they leverage machine learning techniques, including neural networks and emulators, to speed up simulations, analyze data, and create surrogate models. Detailed modeling of the interstellar medium, including its multi-phase nature, chemistry, and interaction with star formation and feedback, is also a key focus. The research pushes towards higher resolution in order to capture more detailed physics and reduce numerical artifacts.

The team utilizes various tools, including the Barnes-Hut algorithm for approximating gravitational forces, and U-Net, a convolutional neural network architecture, for analyzing simulation data. They also employ optimization algorithms like Adam and interpolation methods like Shepard interpolation. The research explores topics such as the Milky Way’s mass distribution, variable interstellar radiation fields, dust and chemistry in galaxies, early stellar feedback, cosmological structure formation, supernova clustering, and non-equilibrium chemistry.

The team successfully simulated a galaxy containing 300 billion particles, surpassing the billion-particle barrier previously limiting state-of-the-art simulations. This advancement stems from a new integration scheme combining N-body and hydrodynamic simulations with machine learning, effectively bypassing computational bottlenecks caused by short-timescale events like supernova explosions. The resulting simulation represents the first star-by-star model of an entire galaxy, resolving individual stars within the Milky Way and offering a detailed view of galactic structure and evolution.
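Of the tools listed above, Shepard interpolation is the simplest to show in a few lines. The article does not say how the authors apply it, so the following is only the textbook inverse-distance-weighted form, with a hypothetical function name, toy particle positions, and made-up temperature values.

```python
import math


def shepard_interpolate(points, values, query, power=2.0):
    """Inverse-distance-weighted (Shepard) estimate at `query`.

    points: sequence of coordinate tuples
    values: one scalar per point
    power:  exponent of the distance weighting (2 is a common choice)
    """
    weighted_sum = 0.0
    weight_total = 0.0
    for point, value in zip(points, values):
        distance = math.dist(point, query)
        if distance == 0.0:
            # The query coincides with a sample: return its value exactly.
            return float(value)
        weight = distance ** -power
        weighted_sum += weight * value
        weight_total += weight
    return weighted_sum / weight_total


# Toy data (assumption): two particles carrying a temperature in Kelvin.
particles = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)]
temperatures = [10.0, 30.0]

midpoint = shepard_interpolate(particles, temperatures, (0.5, 0.0, 0.0))
on_particle = shepard_interpolate(particles, temperatures, (0.0, 0.0, 0.0))
print(midpoint, on_particle)
```

At the midpoint both particles get equal weight, so the estimate is their average. Closer particles dominate elsewhere, which makes this scheme a simple way to move quantities between scattered particles and regularly spaced sample points.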

The method demonstrates excellent scalability, performing efficiently across a substantial number of CPU cores. While acknowledging limitations inherent in numerical simulations, the authors highlight the potential for future work to refine the model and explore specific astrophysical phenomena with even greater accuracy. Further research will focus on incorporating more complex physics and validating the simulation results against observational data, ultimately enhancing our understanding of galaxy formation and evolution.

👉 More information

🗞 The First Star-by-star N-body/Hydrodynamics Simulation of Our Galaxy Coupling with a Surrogate Model

🧠 ArXiv: https://arxiv.org/abs/2510.23330