Researchers are increasingly scrutinising the longevity of code produced by artificial intelligence as it becomes integrated into open-source software development. Musfiqur Rahman and Emad Shihab, both from Concordia University, Montréal, and their colleagues investigated whether AI-generated code is truly ‘disposable’ (quickly implemented but soon abandoned), a pattern that could significantly increase the maintenance burden on developers. Their analysis of over 200,000 code units across 201 open-source projects reveals a surprising result: AI-authored code is not discarded any faster than human-written code and in fact shows a notably lower modification rate. AI-authored code does show a slight tendency towards corrective changes, but the differences are small. This suggests that the key to successful integration lies not in generation quality but in the organisational practices surrounding the code's long-term maintenance and evolution.

AI code survives longer than expected

Challenging the ‘Disposable’ Nature of AI Code

Scientists have begun to unravel the long-term fate of code generated by AI coding assistants, challenging the prevailing notion that such code is merely “disposable”. A central hypothesis in the software engineering community held that AI-generated code would be integrated quickly but discarded shortly afterwards. That pattern would shift the maintenance burden from initial generation to post-deployment remediation. Contrary to this expectation, the study finds that AI-authored code survives significantly longer. It shows a 15.8 percentage-point lower modification rate at the line level and a 16% lower hazard of modification (HR = 0.842). The finding indicates that AI-generated code is not simply integrated and then quickly abandoned, but persists within codebases for a considerable time.

The research team used survival analysis, a statistical framework borrowed from medicine and reliability engineering, to model the time-to-event data. The analysis explicitly accounts for right-censored observations, meaning units that had not yet been modified when observation ended, so that comparisons remain accurate. Each unit was tracked from its initial merge into a project through a matched observation window. This ensured that AI-authored and human-authored code were exposed to comparable temporal conditions. The approach moves beyond simple measures of initial correctness to evaluate long-term integration and stability. The study also reveals nuanced differences in how AI- and human-authored code are modified.
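As a minimal illustration of how right-censoring enters the calculation, the sketch below implements the textbook Kaplan-Meier estimator in plain Python. It is not the authors' code, and the function name `kaplan_meier` and the toy durations are hypothetical. A censored unit still counts as "at risk" up to its censoring time, but it never contributes an observed modification.

```python
from collections import Counter


def kaplan_meier(durations, events):
    """Kaplan-Meier survival estimate (illustrative sketch).

    durations: days from merge to first modification or to censoring.
    events: 1 if a modification was observed, 0 if right-censored.
    Returns a list of (time, S(time)) at each time with at least one event.
    """
    if len(durations) != len(events):
        raise ValueError("durations and events must have equal length")
    modified = Counter(t for t, e in zip(durations, events) if e)
    leaving = Counter(durations)  # events plus censorings at each time
    at_risk = len(durations)
    survival = 1.0
    curve = []
    for t in sorted(leaving):
        d = modified.get(t, 0)
        if d:
            # Units censored at time t are still counted as at risk at t.
            survival *= 1.0 - d / at_risk
            curve.append((t, survival))
        # Everyone who was modified or censored at t leaves the risk set.
        at_risk -= leaving[t]
    return curve


# Toy data (hypothetical): six code units, two of them right-censored.
curve = kaplan_meier([5, 8, 8, 12, 20, 30], [1, 1, 0, 1, 0, 1])
for t, s in curve:
    print(f"day {t:>3}: S = {s:.3f}")
```

For real analyses, the lifelines package provides a tested `KaplanMeierFitter`.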

AI-generated code shows modestly elevated corrective modification rates (26.3% versus 23.0%), indicating a slightly greater need for bug fixes. Human code, by contrast, shows a greater propensity for adaptive modifications, which respond to changes in the surrounding environment. Importantly, the effect size of these differences is small (Cramér’s V = 0.116), and the variation between different AI agents exceeds the overall difference between AI and human code. This suggests that AI coding assistants do not behave as a single homogeneous group: the specific agent matters for code quality and longevity. The researchers also explored whether code units prone to modification can be predicted, reaching an AUC-ROC of 0.671 using textual features.
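Cramér's V rescales a chi-square statistic computed on a contingency table to a range from 0 (no association) to 1 (perfect association). The sketch below computes it for a hypothetical table whose rows are authorship (AI, human) and whose columns are modification categories. The counts are invented for illustration and are not the paper's data. The function assumes every row and column total is non-zero.

```python
import math


def cramers_v(table):
    """Cramér's V for an r x c table of counts given as a list of rows."""
    n = sum(sum(row) for row in table)
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    chi2 = 0.0
    for i, row in enumerate(table):
        for j, observed in enumerate(row):
            # Expected count under independence of rows and columns.
            expected = row_totals[i] * col_totals[j] / n
            chi2 += (observed - expected) ** 2 / expected
    k = min(len(table), len(table[0])) - 1  # smaller dimension minus one
    return math.sqrt(chi2 / (n * k))


# Hypothetical counts: rows = (AI, human); columns = (corrective, adaptive, other).
example = [[30, 20, 50], [25, 35, 40]]
print(round(cramers_v(example), 3))
```

A value near 0.1, like the reported 0.116, is conventionally read as a weak association.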

AI and Human Code Lifespan Analysis

Using Survival Analysis for Code Longevity Study

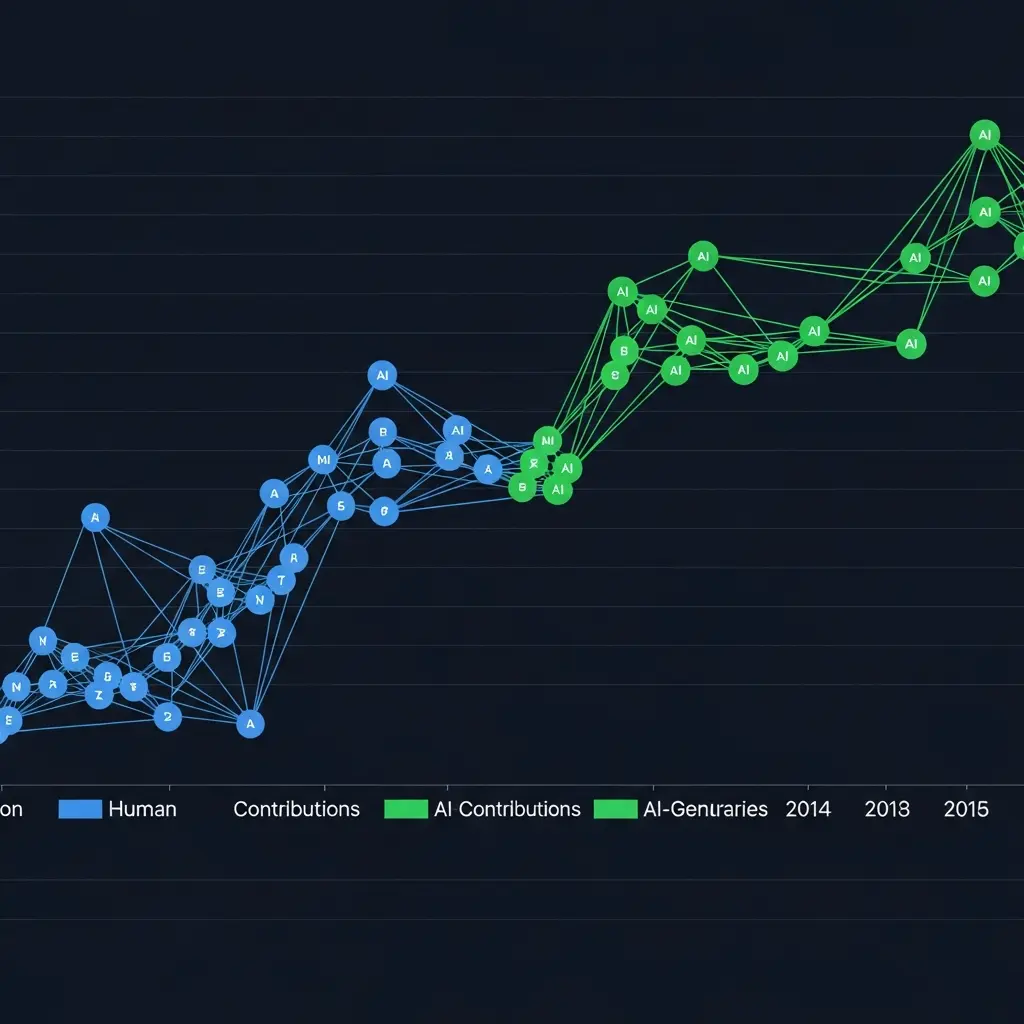

The researchers carried out a survival analysis of over 200,000 code units authored by AI agents and humans across 201 open-source projects drawn from the AIDev dataset. Survival analysis models the lifespan of each code unit while accounting for units whose observation period ended before any modification occurred, a situation known as ‘right-censoring’. To give agent- and human-authored code comparable temporal exposure, each unit was tracked from its initial merge into a project through a matched observation window. At the line level, AI-authored code showed a modification rate 15.8 percentage points lower than that of human-authored code.
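The matched-window idea can be sketched as a function that turns one code unit into a `(duration, event)` pair. This is an assumption about how such windows might be implemented, not the AIDev schema or the authors' pipeline. The names `to_survival_record`, the fixed `window_days` and the `snapshot` date (end of data collection) are all hypothetical. A unit modified within its window yields an observed event. Otherwise it is right-censored at whichever limit comes first.

```python
from datetime import date, timedelta
from typing import Optional, Tuple


def to_survival_record(
    merged: date,
    first_modified: Optional[date],
    window_days: int,
    snapshot: date,
) -> Tuple[int, int]:
    """Return (duration_days, event) for one code unit (hypothetical helper).

    event = 1 if the unit was modified inside its observation window,
    event = 0 if it is right-censored at the end of the window.
    """
    # Observation ends at the window limit or the data snapshot, whichever is first.
    obs_end = min(merged + timedelta(days=window_days), snapshot)
    if obs_end < merged:
        raise ValueError("snapshot precedes merge date")
    if first_modified is not None:
        if first_modified < merged:
            raise ValueError("modification cannot precede merge")
        if first_modified <= obs_end:
            return (first_modified - merged).days, 1
    # Never modified, or modified only after observation ended: censor.
    return (obs_end - merged).days, 0


print(to_survival_record(date(2025, 1, 1), date(2025, 1, 11), 90, date(2025, 12, 31)))
print(to_survival_record(date(2025, 1, 1), None, 90, date(2025, 12, 31)))
```

Applying the same `window_days` to AI- and human-authored units is one simple way to equalise exposure time between the two groups.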

The researchers calculated a hazard ratio of 0.842 (HR = 0.842). This means the hazard of modification for agent-generated code is about 16% lower than for human-written code. However, differences in the types of modification were small (Cramér’s V = 0.116), and variation within each category exceeded the difference between agent- and human-authored code. To assess predictability, the researchers used textual features to identify code prone to modification, achieving an area under the receiver operating characteristic curve (AUC-ROC) of 0.671. Predicting when modifications would occur proved considerably harder, yielding a Macro F1 score of only 0.285. This suggests that the timing of modifications is driven largely by external organisational dynamics rather than by inherent code characteristics. The real bottleneck for AI-generated code may therefore lie not in its initial quality but in the practices governing its long-term evolution.
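An AUC-ROC of 0.671 can be read as a probability. A randomly chosen modified unit receives a higher predicted score than a randomly chosen unmodified one about 67% of the time, where 0.5 corresponds to chance. The sketch below computes AUC directly from that pairwise definition, which is equivalent to the normalised Mann-Whitney U statistic. The name `auc_roc` and the toy scores are hypothetical. The quadratic loop is meant for illustration, not for 200,000 units.

```python
def auc_roc(scores, labels):
    """AUC as P(score of a random positive > score of a random negative); ties count 0.5."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative example")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


# Hypothetical model scores; label 1 = unit was later modified.
print(auc_roc([0.9, 0.4, 0.6, 0.2], [1, 1, 0, 0]))
```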

Stability Findings and External Modification Factors

AI-generated code exhibits greater long-term stability than manually written code

The research team tested the widely held belief that code from AI coding assistants is inherently “disposable”. They ran a survival analysis of 201 open-source projects, tracking over 200,000 code units created by AI agents and by human developers. Contrary to expectations, AI-authored code survives significantly longer: at the line level, its modification rate is 15.8 percentage points lower. The hazard of modification is the instantaneous rate at which code that has survived so far gets altered. It is 16% lower for AI-authored code (HR = 0.842). The team analysed survival at both the file and the line level to capture different degrees of code churn.
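For readers unfamiliar with the terminology, the standard definitions are given below. They assume a single binary covariate $x$ (1 for AI-authored, 0 for human-authored), which is a simplification, since the paper's model may include further covariates. Under the proportional hazards assumption, the ratio of the two hazards is constant over time. HR = 0.842 therefore translates directly into a hazard about 16% lower at every time point.

```latex
\begin{aligned}
h(t) &= \lim_{\Delta t \to 0} \frac{\Pr(t \le T < t + \Delta t \mid T \ge t)}{\Delta t} \\
h(t \mid x) &= h_0(t)\, e^{\beta x}, \qquad x \in \{0, 1\} \\
\mathrm{HR} &= \frac{h(t \mid x = 1)}{h(t \mid x = 0)} = e^{\beta} = 0.842
  \;\Rightarrow\; \beta = \ln 0.842 \approx -0.172, \quad 1 - \mathrm{HR} = 0.158 \approx 16\%
\end{aligned}
```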

File-level tracking followed individual source files, defining each file's lifespan from its merge commit to a subsequent modification. Line-level tracking followed individual lines of code and attributed each one to either an AI agent or a human developer. This finer granularity resolves the problem of mixed authorship within a single file. The study recorded 15,990 file-level and 210,184 line-level observations. Of these, 12,804 files (80.1%) and 129,484 lines (61.6%) were modified during the observation period. AI-authored code shows a modestly higher corrective modification rate than human code (26.3% versus 23.0%), but the effect size is small (Cramér’s V = 0.116).
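The reported percentages can be checked directly from the counts. Their complement gives the number of unmodified units, which, assuming unmodified units are treated as right-censored, explains why a method that handles censoring is needed. The helper name below is hypothetical.

```python
def modified_share(modified: int, total: int) -> float:
    """Percentage of observed units that were modified during observation."""
    return 100.0 * modified / total


# Counts reported in the study.
FILES_TOTAL, FILES_MODIFIED = 15_990, 12_804
LINES_TOTAL, LINES_MODIFIED = 210_184, 129_484

file_pct = modified_share(FILES_MODIFIED, FILES_TOTAL)
line_pct = modified_share(LINES_MODIFIED, LINES_TOTAL)

# Assumption: units never modified during observation are right-censored.
files_censored = FILES_TOTAL - FILES_MODIFIED
lines_censored = LINES_TOTAL - LINES_MODIFIED

print(f"files: {file_pct:.1f}% modified, {files_censored} censored")
print(f"lines: {line_pct:.1f}% modified, {lines_censored} censored")
```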

Importantly, the variation in modification rates exceeds the difference between AI-authored and human code, suggesting that external factors play a significant role. Textual features can identify modification-prone code with an AUC-ROC of 0.671. Predicting when modifications will occur remains difficult, however, with a Macro F1 score of only 0.285. This suggests that the timing of code changes is driven more by organisational dynamics than by inherent code quality. At the file level, the median survival time was 15.9 days and the mean was 64.3 days.
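Macro F1 averages the per-class F1 scores with equal weight. Poor performance on any one timing class therefore pulls the score down, even if a common class is predicted well. The sketch below follows that definition. The function name and the timing-bucket labels in the usage lines are hypothetical, since the article does not say how modification timing was discretised.

```python
def macro_f1(y_true, y_pred):
    """Unweighted mean of per-class F1 over every label seen in y_true or y_pred."""
    labels = sorted(set(y_true) | set(y_pred))
    scores = []
    for c in labels:
        tp = sum(1 for t, p in zip(y_true, y_pred) if t == c and p == c)
        fp = sum(1 for t, p in zip(y_true, y_pred) if t != c and p == c)
        fn = sum(1 for t, p in zip(y_true, y_pred) if t == c and p != c)
        precision = tp / (tp + fp) if tp + fp else 0.0
        recall = tp / (tp + fn) if tp + fn else 0.0
        f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
        scores.append(f1)
    return sum(scores) / len(scores)


# Hypothetical timing buckets for when a unit is first modified.
truth = ["<1 week", "<1 week", "1-4 weeks", ">4 weeks"]
pred = ["<1 week", "1-4 weeks", "1-4 weeks", ">4 weeks"]
print(round(macro_f1(truth, pred), 3))
```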

At the finer line-level granularity, the median survival time rose to 118.4 days and the mean to 120.5 days. Kaplan-Meier estimation and log-rank tests showed a statistically significant difference in survival distributions between AI-authored and human-authored code. Cox proportional hazards regression quantified this effect, yielding a hazard ratio of 0.842 (95% CI: 0.833–0.852).
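The log-rank test compares two survival curves. At each observed event time, it takes the difference between observed and expected events in one group, given the combined risk set, and sums these differences. Below is a minimal two-group sketch of the standard Mantel-Haenszel form. It returns a chi-square statistic with one degree of freedom and its p-value via `math.erfc`. The function name and inputs are hypothetical, and this is not the authors' implementation. The lifelines package offers a tested `logrank_test` in `lifelines.statistics`.

```python
import math


def log_rank(d1, e1, d2, e2):
    """Two-sample log-rank test (illustrative sketch).

    d1, d2: durations per group; e1, e2: 1 if modified, 0 if right-censored.
    Returns (chi-square statistic with 1 df, two-sided p-value).
    """
    event_times = sorted(
        {t for t, e in zip(d1, e1) if e} | {t for t, e in zip(d2, e2) if e}
    )
    diff = 0.0      # running sum of (observed - expected) events in group 1
    variance = 0.0  # running sum of hypergeometric variances
    for t in event_times:
        n1 = sum(1 for d in d1 if d >= t)  # group 1 units still at risk at t
        n2 = sum(1 for d in d2 if d >= t)
        o1 = sum(1 for d, e in zip(d1, e1) if e and d == t)
        o2 = sum(1 for d, e in zip(d2, e2) if e and d == t)
        n, o = n1 + n2, o1 + o2
        if n < 2:
            continue  # with a single unit at risk, both contributions are zero
        diff += o1 - o * n1 / n
        variance += o * (n1 / n) * (n2 / n) * (n - o) / (n - 1)
    if variance == 0:
        raise ValueError("no informative event times")
    chi2 = diff ** 2 / variance
    # For 1 df: P(chi2 > x) = P(|Z| > sqrt(x)) = erfc(sqrt(x / 2)).
    p_value = math.erfc(math.sqrt(chi2 / 2))
    return chi2, p_value


# Hypothetical groups: group 1 is modified early, group 2 late.
print(log_rank([1, 2, 3], [1, 1, 1], [10, 11, 12], [1, 1, 1]))
```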

AI code survives longer than human code

Researchers have challenged the widely held belief that code generated by AI coding assistants is inherently “disposable” within software development projects. Through a survival analysis of over 200,000 code units from 201 open-source projects, they showed that AI-authored code persists significantly longer than human-written code. It has a 15.8 percentage-point lower modification rate and a 16% lower hazard of modification. AI-generated code showed a slightly higher rate of corrective modifications, and human-authored code a greater tendency towards adaptive changes, but these differences were small. The findings suggest that concerns about a rapid cycle of generation and discard for AI-generated code may be unfounded.

The research indicates that the primary challenge lies not with the quality of the generated code itself, but with the organisational practices surrounding its integration and maintenance, including ownership, review processes, and responsibility assignment. The team acknowledges limitations in their analysis, noting that the predictive power of static code features for identifying modification timing is modest, and that their ownership hypothesis requires further investigation through developer surveys. Future research should explore the impact of dynamic signals, such as CI/CD failures and production error logs, on predicting code modifications, as well as developing methods for attributing authorship in scenarios involving iterative human refinement of AI suggestions, and extending the analysis to closed-source enterprise environments to assess broader generalizability.

🗞 Will It Survive? Deciphering the Fate of AI-Generated Code in Open Source

🧠 ArXiv: https://arxiv.org/abs/2601.16809