Researchers are tackling the challenge of closing the accuracy gap between artificial neural networks (ANNs) and their energy-efficient counterparts, spiking neural networks (SNNs). Zhengzheng Tang of Boston University and colleagues present NEXUS, a framework that demonstrates bit-exact equivalence between ANNs and SNNs, achieving mathematically identical outputs without the approximations common in existing SNN approaches. The advance stems from constructing all arithmetic operations from neuron logic gates that implement IEEE-754 floating-point arithmetic. It enables identical task accuracy (0.00% degradation) on models up to the LLaMA-2 70B scale, alongside energy reductions of 27-168,000x on hardware. NEXUS's spatial bit encoding also provides inherent immunity to membrane potential leakage and tolerance to synaptic noise, paving the way for accurate and efficient neuromorphic computing.

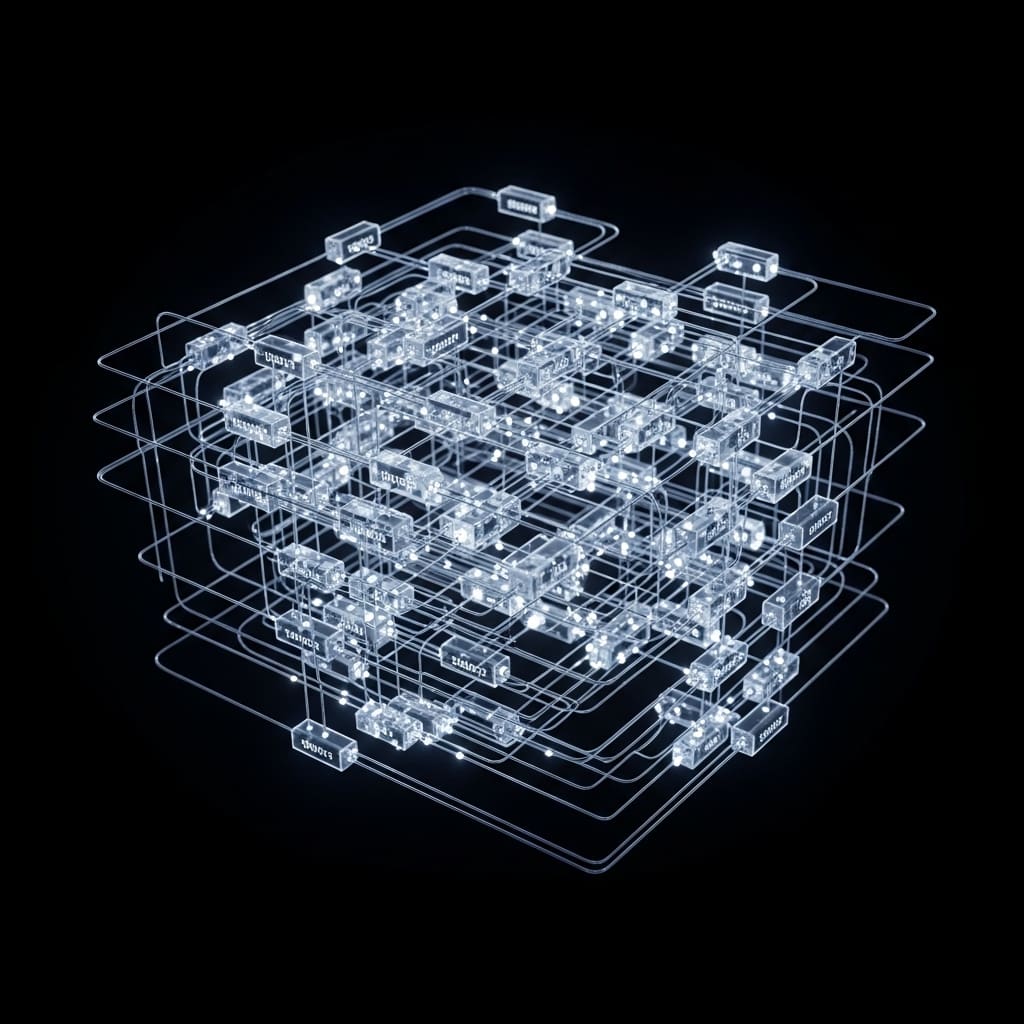

The research addresses a fundamental limitation of SNNs, the approximation of continuous values with discrete spikes, by constructing all arithmetic operations from pure Integrate-and-Fire (IF) neuron logic gates that implement IEEE-754 compliant floating-point arithmetic. This approach bypasses the need for approximations in encoding, nonlinear computation, and training, offering a unified solution with zero computational error, limited only by machine-precision fluctuations. Through spatial bit encoding, hierarchical neuromorphic gate circuits, and surrogate-free Straight-Through Estimator (STE) training, NEXUS delivers outputs mathematically identical to those of standard ANNs.

The team achieved this breakthrough by employing spatial bit encoding, a method that directly maps IEEE-754 floating-point representations to spike sequences, achieving zero encoding error by construction. Each 32-bit pattern of an FP32 value is mapped to 32 parallel spike channels via bit reinterpretation, operating within a fixed, short time window of only 16 steps, a significant reduction compared to the 1,024 steps required by Time-To-First-Spike (TTFS) methods to achieve comparable precision. This spatial encoding design inherently eliminates susceptibility to membrane potential leakage, maintaining 100% accuracy across all decay factors, a common challenge in physical neuromorphic hardware. Furthermore, the framework tolerates synaptic noise up to σ = 0.2 with greater than 98% gate-level accuracy, demonstrating robustness in noisy environments.
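The bit reinterpretation described above can be illustrated with a minimal sketch. The function names `encode_fp32` and `decode_fp32` are hypothetical, and the channel ordering (sign bit first) is an assumption rather than something the article specifies; the point is only that reinterpreting the 32 bits of an FP32 value as 32 binary spike channels loses no information.

```python
import struct

def encode_fp32(value):
    """Map a number to 32 spike channels (1 = spike), sign bit first.

    The value is first rounded to FP32 by struct.pack; channel order is
    an illustrative assumption.
    """
    (bits,) = struct.unpack(">I", struct.pack(">f", value))
    return [(bits >> (31 - i)) & 1 for i in range(32)]

def decode_fp32(spikes):
    """Rebuild the FP32 value from its 32 spike channels."""
    bits = 0
    for s in spikes:
        bits = (bits << 1) | s
    (value,) = struct.unpack(">f", struct.pack(">I", bits))
    return value

spikes = encode_fp32(-2.5)
print(spikes)               # sign, 8 exponent bits, 23 mantissa bits
print(decode_fp32(spikes))  # the original FP32 value, recovered exactly
```

Because decoding simply reverses the reinterpretation, any value that is representable in FP32 survives the round trip unchanged, which is the sense in which the encoding error is zero by construction.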

Experiments conducted on models up to LLaMA-2 70B demonstrate identical task accuracy (0.00% degradation) with a mean ULP error of only 6.19, while simultaneously achieving an energy reduction of 27-168,000x on neuromorphic hardware. The hierarchical architecture of NEXUS constructs complex nonlinear functions from basic IF neuron logic gates, cascading from primitive operations to complete transformer layers, ensuring bit-exact forward computation. Crucially, the surrogate-free STE training mechanism allows gradients to flow directly through these bit-exact modules: because the forward computation is mathematically equivalent to that of the ANN, no heuristic approximation is needed.

This research establishes a new paradigm for SNN development, moving beyond approximation towards true equivalence with ANNs, and opens avenues for ultra-efficient, high-performance neuromorphic computing. The framework's inherent immunity to membrane potential leakage and tolerance to synaptic noise make it particularly well suited to deployment on dedicated neuromorphic hardware, promising significant advances in low-power applications and edge computing. The ability to achieve ANN-level accuracy with substantial energy savings positions NEXUS as a compelling alternative for a wide range of machine learning tasks, paving the way for more sustainable and scalable artificial intelligence systems.

Spatial Bit Encoding for ANN-SNN Equivalence

Scientists developed NEXUS, a novel framework achieving bit-exact equivalence between Artificial Neural Networks (ANNs) and Spiking Neural Networks (SNNs), eliminating the approximation errors inherent in existing SNN approaches. The research team engineered a system where all arithmetic operations, both linear and nonlinear, are constructed from fundamental Integrate-and-Fire (IF) neuron logic gates implementing IEEE-754 compliant floating-point arithmetic. This innovative design bypasses the need for surrogate gradients or spike-friendly approximations, enabling mathematically identical outputs between ANNs and SNNs up to machine precision. Crucially, the study pioneered Spatial Bit Encoding, directly mapping 32-bit IEEE-754 floating-point values to 32 parallel spike channels, achieving zero encoding error by construction.

Experiments employed a hierarchical architecture of neuromorphic gate circuits, building from basic logic gates and full adders to complete transformer layers, demonstrating the scalability of the approach. Reconstruction fidelity was assessed using Mean Squared Error (MSE) across 10,000 random values: NEXUS achieves an MSE of 1.28 × 10⁻⁶ with 8 time steps, significantly outperforming rate coding (7.70 × 10⁴ with 16 steps) and Time-To-First-Spike (TTFS) encoding (3.68 × 10⁵ with 16 steps). The team validated NEXUS on models up to LLaMA-2 70B, demonstrating identical task accuracy (0.00% degradation) with a mean ULP error of only 6.19. The work uses a surrogate-free Straight-Through Estimator (STE) training method, in which the identity mapping allows gradients to flow directly through the bit-exact modules without requiring smooth surrogate functions. Furthermore, the single-timestep design of spatial bit encoding renders the framework inherently immune to membrane potential leakage, maintaining 100% accuracy across all decay factors, even with a 90% decay per timestep (β = 0.1). The system delivers substantial energy reduction, achieving 27-168,000x lower energy consumption on hardware.

ANNs and SNNs achieve bit-exact equivalence with NEXUS

Scientists have developed NEXUS, a novel framework achieving bit-exact equivalence between Artificial Neural Networks (ANNs) and Spiking Neural Networks (SNNs), eliminating the accuracy sacrifices typically associated with SNN approximations. The research demonstrates that outputs from NEXUS are mathematically identical to those of standard ANNs, up to machine precision, through the construction of all arithmetic operations from pure Integrate-and-Fire (IF) neuron logic gates. Experiments on models up to LLaMA-2 70B confirmed identical task accuracy (0.00% degradation) with a mean unit-in-the-last-place (ULP) error of only 6.19. The team measured an energy reduction ranging from 27x to 168,000x on hardware, highlighting the potential for highly efficient neuromorphic computing.
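For readers unfamiliar with the metric, ULP stands for "unit in the last place": the distance between two floating-point numbers counted in representable values. The article does not say exactly how the reported mean ULP error is computed, so the following is only a sketch of one common definition for FP32, with hypothetical helper names.

```python
import struct

def fp32_bits(x):
    """Return the 32-bit pattern of x after rounding to FP32."""
    return struct.unpack(">I", struct.pack(">f", x))[0]

def ordered(x):
    """Map an FP32 bit pattern to an integer that increases with the value.

    Non-negative floats keep their bit pattern; negative floats map to the
    negated magnitude, so -0.0 and +0.0 coincide.
    """
    b = fp32_bits(x)
    return b if b < 0x80000000 else 0x80000000 - b

def ulp_distance(a, b):
    """Number of representable FP32 steps between a and b (NaN not handled)."""
    return abs(ordered(a) - ordered(b))

print(ulp_distance(1.0, 1.0 + 2 ** -23))      # adjacent FP32 values
print(ulp_distance(1.0, 1.0 + 6 * 2 ** -23))  # six steps apart
```

Under this definition, a mean ULP error of about 6 would mean that outputs differ from the reference by roughly six representable FP32 steps on average, a discrepancy in the last few mantissa bits.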

Crucially, the framework’s spatial bit encoding, which maps IEEE-754 floating-point representations directly to parallel spike channels, achieves zero encoding error by construction. Tests confirm 100% accuracy across all decay factors, rendering NEXUS inherently immune to membrane potential leakage, a common limitation in other SNN approaches. Furthermore, the system tolerates synaptic noise with greater than 98% gate-level accuracy, demonstrating robustness in noisy environments. Researchers implemented a hierarchical architecture, building from basic IF neuron logic gates to complete transformer layers, ensuring bit-exact computation throughout the forward pass.
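To make the hierarchy concrete, here is a minimal sketch of how threshold gates can be composed into a full adder and then a ripple-carry adder. The weights and thresholds are illustrative assumptions, not values from the paper, and this is integer addition only: a complete IEEE-754 adder would also need exponent alignment, normalization, and rounding, which are not shown.

```python
def fire(current, threshold):
    """Single-timestep integrate-and-fire: spike iff input reaches threshold."""
    return 1 if current >= threshold else 0

# Basic gates as threshold neurons (thresholds are assumed for illustration).
def AND(a, b): return fire(a + b, 1.5)
def OR(a, b):  return fire(a + b, 0.5)
def NOT(a):    return fire(1 - a, 0.5)   # constant bias input, inhibitory weight
def XOR(a, b): return AND(OR(a, b), NOT(AND(a, b)))

def full_adder(a, b, cin):
    """Return (sum bit, carry out) built only from the gates above."""
    partial = XOR(a, b)
    return XOR(partial, cin), OR(AND(a, b), AND(partial, cin))

def ripple_add(x_bits, y_bits):
    """Add two equal-length little-endian bit lists; appends the final carry."""
    carry, out = 0, []
    for a, b in zip(x_bits, y_bits):
        s, carry = full_adder(a, b, carry)
        out.append(s)
    return out + [carry]

def to_bits(n, width): return [(n >> i) & 1 for i in range(width)]
def from_bits(bits):   return sum(b << i for i, b in enumerate(bits))

print(from_bits(ripple_add(to_bits(11, 4), to_bits(6, 4))))  # 11 + 6
```

Every gate output is a deterministic function of its inputs, so circuits composed this way compute exactly the same result as conventional digital logic, which is the property the bit-exact claim rests on.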

Nonlinear functions, including exponential, sigmoid, GELU, Softmax, and RMSNorm, are decomposed into sequences of IEEE-754 FP32 operations, each implemented by the same IF neuron gate primitives used for linear arithmetic. This eliminates the need to treat nonlinear functions as requiring special handling, achieving bit-exact results without architectural substitution. For the backward pass, the Straight-Through Estimator (STE) becomes an exact identity mapping, as the forward computation is mathematically equivalent to the ANN, enabling surrogate-free training and enhanced stability. Spatial bit encoding utilizes a direct bit-level mapping between IEEE-754 floating-point values and parallel spike channels, preserving every bit of information without approximation.

The team demonstrated multi-precision support, dynamically slicing neuron parameters to accommodate FP8, FP16, and FP64 formats, avoiding memory fragmentation. The IF neuron model, with a soft reset mechanism, functions as a universal logic primitive, capable of implementing basic logic gates, AND, OR, and NOT, through careful threshold selection. Empirical validation across a range of decay factors, β ∈ [0.1, 1.0], confirmed the inherent immunity to membrane potential leakage, a significant advancement over temporal encoding schemes susceptible to information decay. These findings pave the way for energy-efficient and highly accurate neuromorphic systems capable of running complex AI models.
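The leakage argument can be sketched in a few lines. The sketch assumes the usual leaky update v[t] = β·v[t-1] + I[t] with a soft reset, a membrane that starts at rest for each gate evaluation, and illustrative thresholds; none of these specifics come from the article. Under those assumptions a single-timestep gate never sees the decay term, while a neuron that must accumulate input over several steps does.

```python
def if_step(v_prev, current, threshold, beta=1.0):
    """One timestep of a leaky IF neuron with soft reset; returns (spike, v)."""
    v = beta * v_prev + current
    spike = 1 if v >= threshold else 0
    return spike, v - threshold * spike  # soft reset subtracts the threshold

def gate(current, threshold, beta):
    """Single-timestep gate: the membrane starts at rest, so beta multiplies 0."""
    spike, _ = if_step(0.0, current, threshold, beta)
    return spike

def AND(a, b, beta=1.0): return gate(a + b, 1.5, beta)
def OR(a, b, beta=1.0):  return gate(a + b, 0.5, beta)
def NOT(a, beta=1.0):    return gate(1 - a, 0.5, beta)

def temporal_fires(n_spikes, threshold, beta):
    """Contrast case: one unit input per step must accumulate to threshold."""
    v, fired = 0.0, 0
    for _ in range(n_spikes):
        spike, v = if_step(v, 1.0, threshold, beta)
        fired |= spike
    return fired

for beta in (0.1, 0.5, 1.0):
    table = [AND(a, b, beta) for a in (0, 1) for b in (0, 1)]
    print(beta, table, temporal_fires(3, 2.5, beta))
```

The gate truth tables are identical for every β, whereas the accumulating neuron stops reaching its threshold once the membrane leaks between steps, which is the failure mode of temporal codes that the article contrasts with spatial bit encoding.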

Bit-exact ANN to SNN conversion with NEXUS

Scientists have developed NEXUS, a new framework achieving bit-exact equivalence between artificial neural networks (ANNs) and spiking neural networks (SNNs). This means the SNNs produced by NEXUS yield outputs mathematically identical to those of their ANN counterparts, unlike previous approaches that approximate continuous values with discrete spikes. The core of NEXUS lies in constructing all arithmetic operations, both linear and nonlinear, from integrate-and-fire (IF) neuron logic gates implementing IEEE-754 compliant floating-point arithmetic. Through spatial bit encoding, hierarchical gate circuits, and surrogate-free straight-through estimator (STE) training, NEXUS maintains identical task accuracy, demonstrated on models up to LLaMA-2 70B with 0.00% degradation, while achieving energy reductions of 27-168,000x on hardware.

Crucially, the spatial bit encoding design renders the framework immune to membrane potential leakage and tolerant of synaptic noise, with over 98% gate-level accuracy under realistic conditions. These results suggest that bit-exact SNN computation, energy efficiency, and robustness to hardware imperfections are not mutually exclusive goals. The authors acknowledge that while their framework demonstrates robustness to membrane leakage and noise, further investigation into the limits of noise tolerance and the impact of more complex hardware variations is warranted. Future research directions could explore the application of NEXUS to a wider range of neural network architectures and datasets, as well as the development of dedicated neuromorphic hardware optimised for this bit-exact SNN computation. This work represents a substantial step towards realising the potential of energy-efficient and robust neuromorphic computing by bridging the gap between the theoretical benefits of SNNs and their practical implementation.

👉 More information

🗞 NEXUS: Bit-Exact ANN-to-SNN Equivalence via Neuromorphic Gate Circuits with Surrogate-Free Training

🧠 ArXiv: https://arxiv.org/abs/2601.21279